Matthew Walmer reposted

Matthew Walmer

23 posts

@MatthewWalmer

Computer Vision PhD student at the University of Maryland, College Park. Website: https://t.co/7rfVPC9ZUS

Joined June 2022

13 Following · 138 Followers

Excited to announce that UPLiFT has been accepted to #CVPR2026!

You can also try out UPLiFT right now to extract pixel-dense DINOv3 features with our pretrained models linked below!

Code: github.com/mwalmer-umd/UP…

Paper: arxiv.org/abs/2601.17950

Website: cs.umd.edu/~mwalmer/uplif…

@Minseok96_kr @_sakshams_ @AnirudAgg @abhi2610 Hi Minseok, UPLiFT operates on the VAE's latent features similarly to the DINO features. We do sample the features first, essentially using the features as they would be fed to the VAE decoder later.

@MatthewWalmer @_sakshams_ @AnirudAgg @abhi2610 Super cool paper! I was wondering how the upsampling works in the VAE space.

Since the encoded features from the VAE encoder are vector representations, does your method operate directly on these features? I’ve only skimmed the table and figure haha...

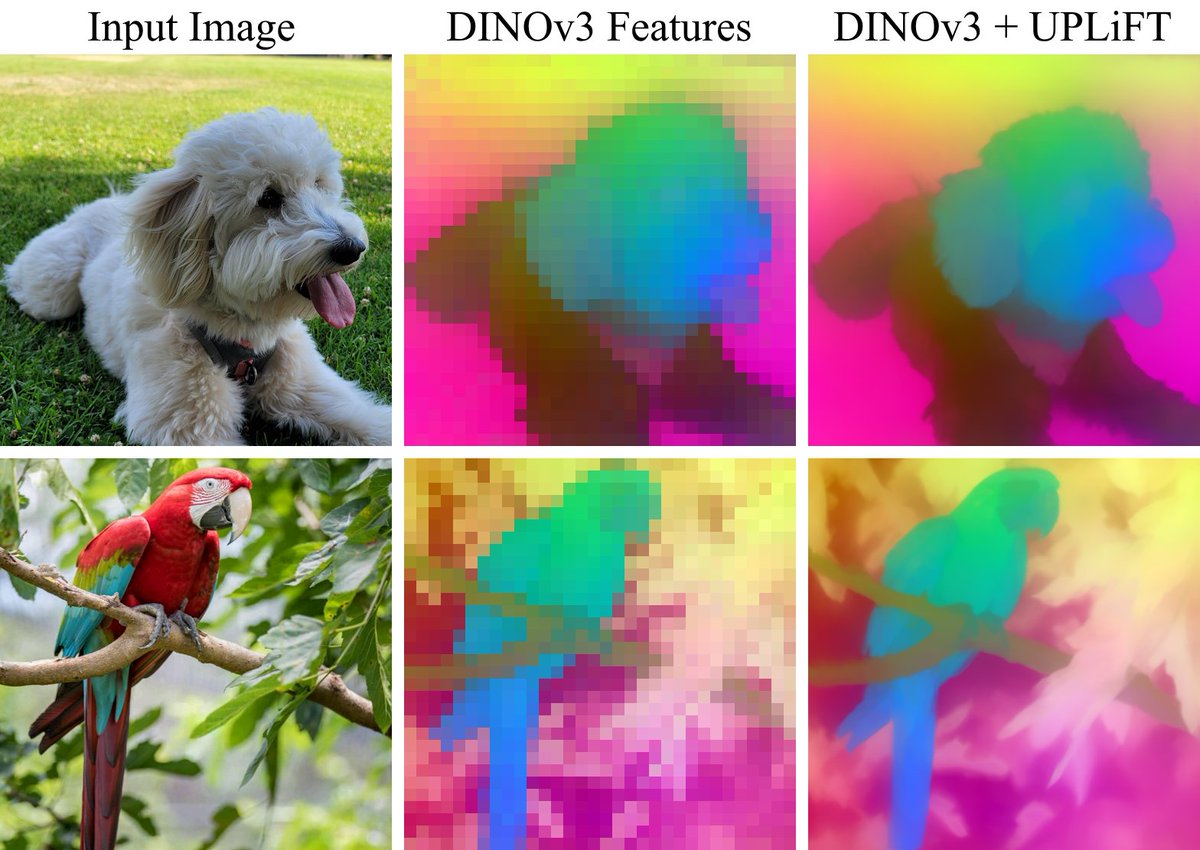

We’re excited to announce UPLiFT, our lightweight, pixel-dense feature upsampler. UPLiFT boosts feature density, preserves semantics, and has better efficiency scaling than recent SOTA methods. See all links in the thread below.

Coauthors: @_sakshams_ @AnirudAgg @abhi2610

🧵[1/6]

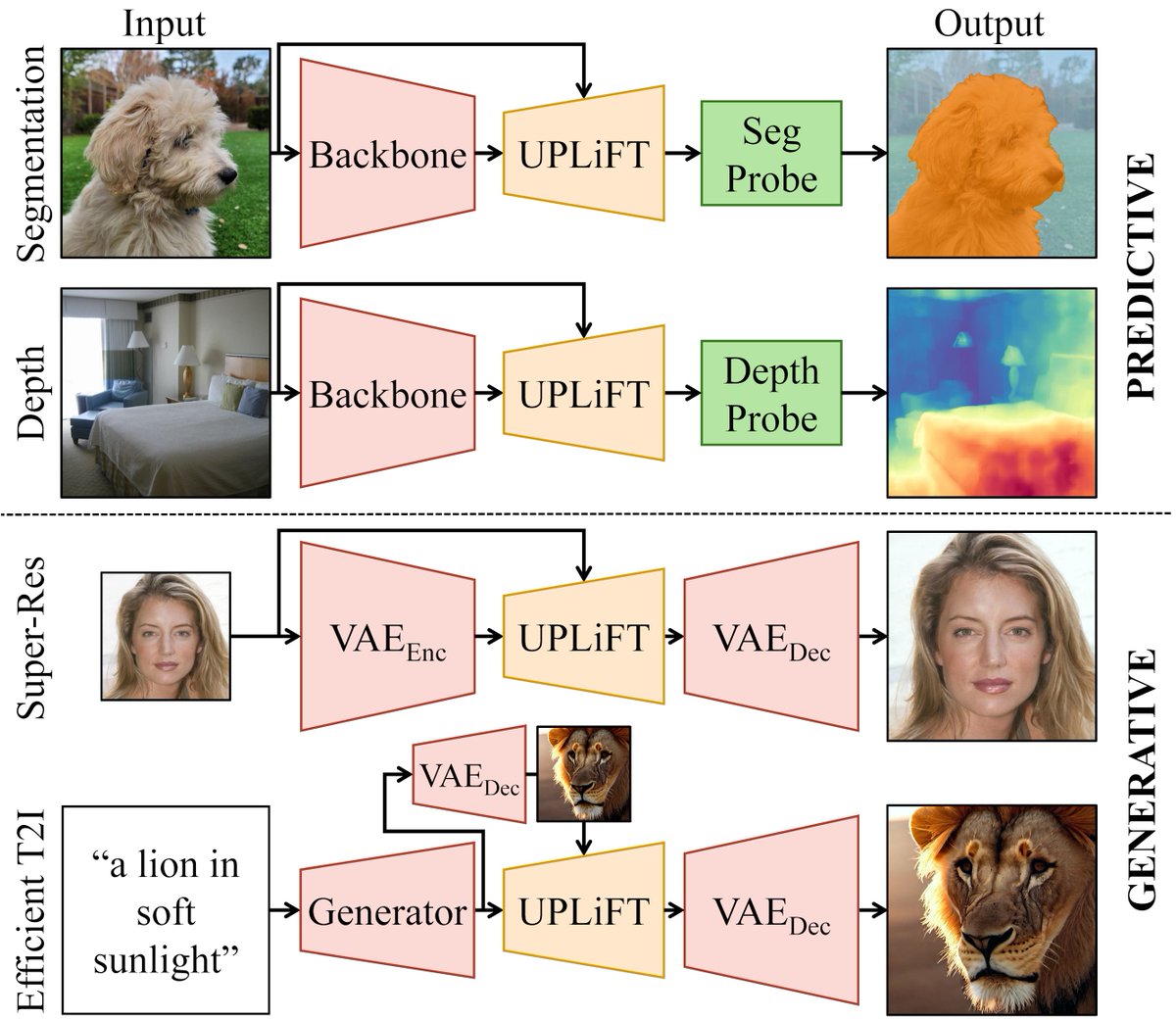

@_sakshams_ @AnirudAgg @abhi2610 In addition, UPLiFT + SD1.5 VAE achieves visual quality comparable to the state-of-the-art method FM-Boost (CFM), while using less training data, fewer parameters, and fewer inference-time iterations.

🧵[6/6]

@_sakshams_ @AnirudAgg @abhi2610 We demonstrate the versatility and effectiveness of UPLiFT for both predictive and generative tasks, including semantic segmentation, depth estimation, image super-resolution, and efficient T2I generation.

🧵[5/6]

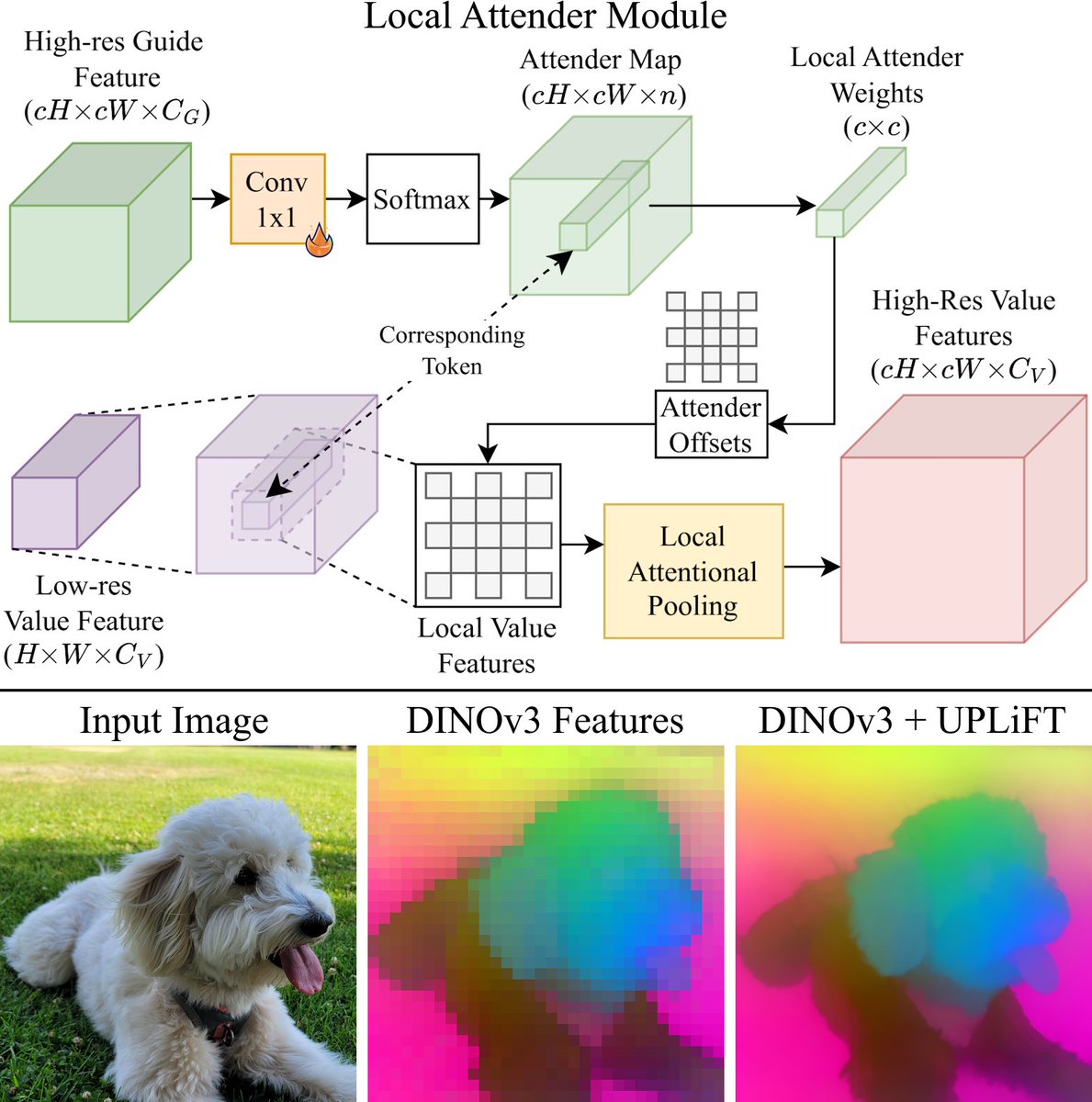

@_sakshams_ @AnirudAgg @abhi2610 Through this approach, our method maintains linear time scaling with respect to the number of visual tokens, whereas cross-attention-based upsamplers scale quadratically. This allows UPLiFT to scale to larger images and produce denser features.

🧵[4/6]
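
As a rough illustration of the linear versus quadratic scaling described in this post, here is a minimal counting sketch. It is not UPLiFT's cost model: the function names and the 9-token local neighborhood are hypothetical, and only the patch size of 16 comes from the thread (DINOv3-S+/16). It simply counts token-to-token interactions for all-pairs attention versus a fixed-size local neighborhood.

```python
def num_tokens(height, width, patch=16):
    """Number of patch tokens for an image, assuming non-overlapping patches."""
    return (height // patch) * (width // patch)


def all_pairs_interactions(n_tokens):
    """Every token interacts with every token: grows quadratically."""
    return n_tokens * n_tokens


def local_interactions(n_tokens, neighborhood=9):
    """Every token interacts with a fixed-size neighborhood: grows linearly.

    The neighborhood size of 9 (a 3x3 window) is an illustrative assumption.
    """
    return n_tokens * neighborhood


small = num_tokens(224, 224)   # 14 x 14 patches
large = num_tokens(448, 448)   # 28 x 28 patches

# Doubling the image side quadruples the token count.
token_growth = large / small
# All-pairs cost grows with the square of that factor.
all_pairs_growth = all_pairs_interactions(large) / all_pairs_interactions(small)
# Fixed-neighborhood cost grows by the same factor as the token count.
local_growth = local_interactions(large) / local_interactions(small)

print(token_growth, all_pairs_growth, local_growth)
```

Under these assumptions, doubling the image side multiplies the token count by 4, the all-pairs count by 16, and the fixed-neighborhood count by 4.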

@_sakshams_ @AnirudAgg @abhi2610 UPLiFT uses iterative feature growing, which avoids the high computational costs of recent cross-attention-based methods. We also present a new Local Attender feature-pooling module, which reformulates local attention using operations based on relative directional offsets.

🧵[3/6]

Today we are also releasing our UPLiFT code and 3 pretrained models for DINOv2-S/14, DINOv3-S+/16, and SD1.5 VAE. We also include torch hub support and training code.

Paper: arxiv.org/abs/2601.17950

Code: github.com/mwalmer-umd/UP…

Website: cs.umd.edu/~mwalmer/uplif…

🧵[2/6]

Matthew Walmer reposted

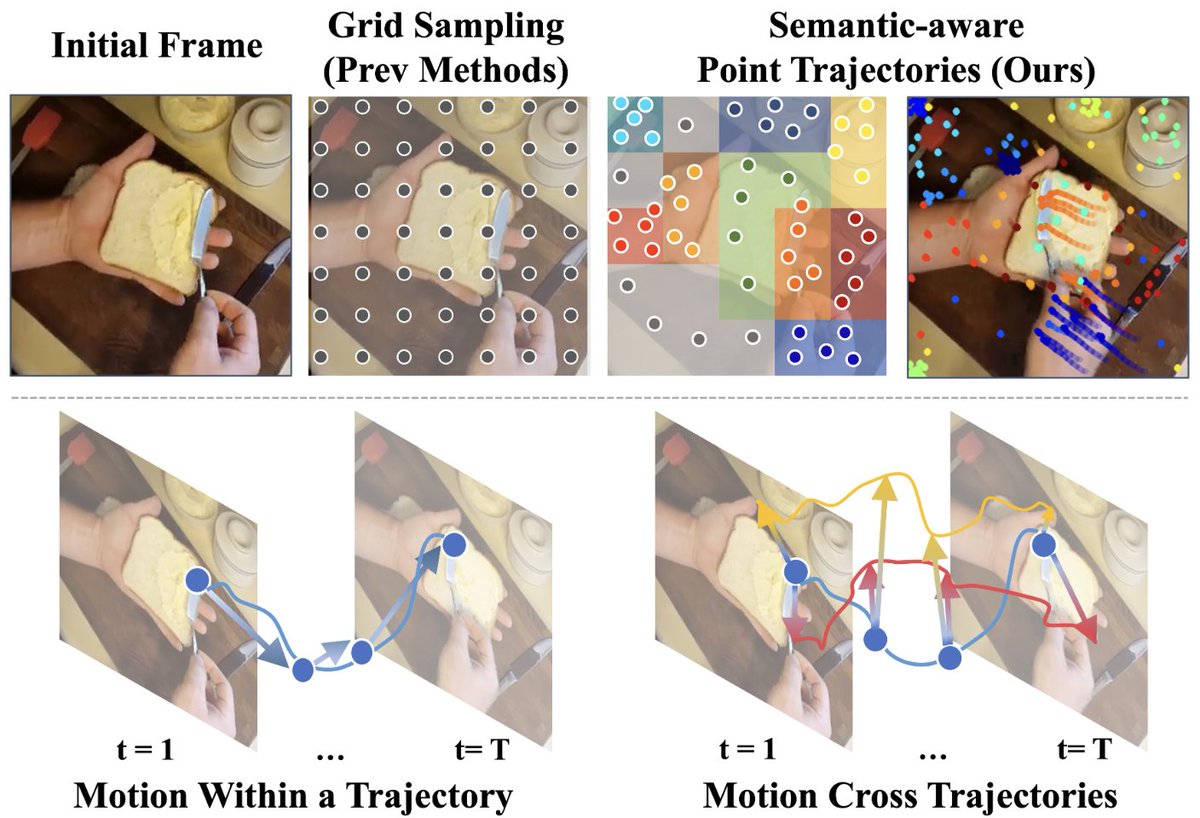

🎉 Excited to share our paper "Trokens: Semantic-Aware Relational Trajectory Tokens for Few-Shot Action Recognition" has been accepted to #ICCV2025!

Equally co-led with @ShuaiyiH — we advance few-shot action recognition via smart point tracking.

🔗 trokens-iccv25.github.io

🧵👇

Matthew Walmer reposted

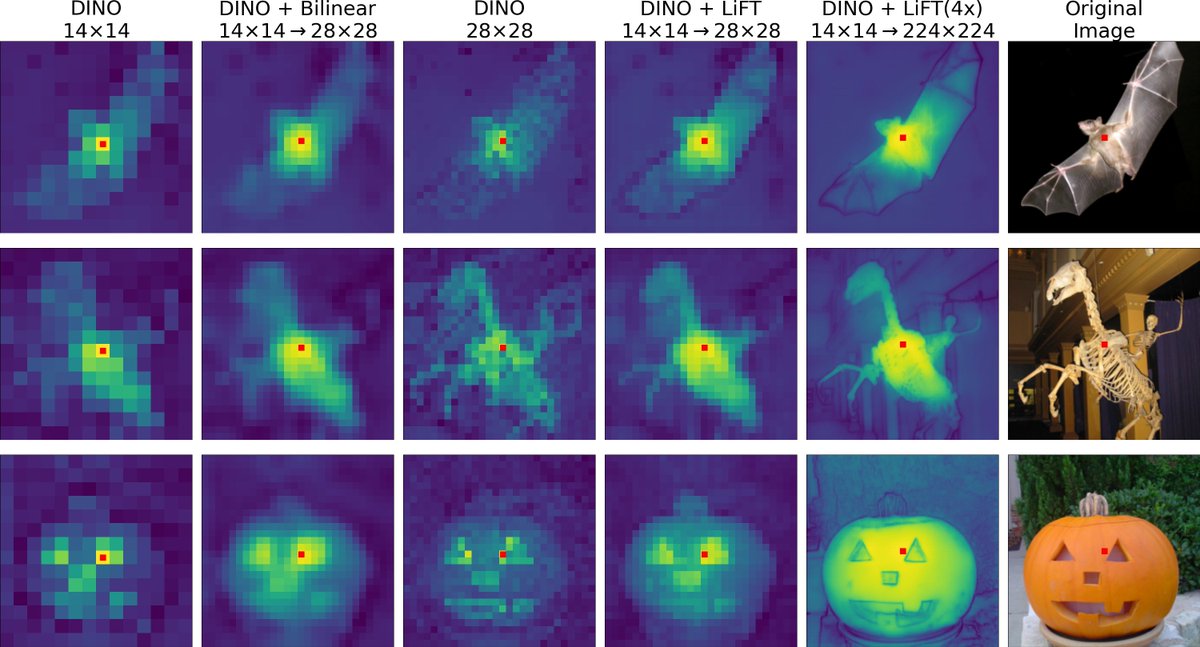

We are happy to release our LiFT code and pretrained models! 📢

Code: github.com/saksham-s/lift

Project Page: cs.umd.edu/~sakshams/LiFT

Here are some super spooky super resolved feature visualizations to make the season scarier 🎃

Coauthors: @MatthewWalmer @kamalgupta09 @abhi2610

Saksham Suri @_sakshams_

We introduce LiFT, an easy to train, lightweight, and efficient feature upsampler to get dense ViT features without the need to retrain the ViT. Visit our poster @eccvconf #eccv2024 in Milan on Oct 1st (Tuesday), 16:30 (local), Poster: 79. Project Page: cs.umd.edu/~sakshams/LiFT

Matthew Walmer reposted

We introduce LiFT, an easy to train, lightweight, and efficient feature upsampler to get dense ViT features without the need to retrain the ViT.

Visit our poster @eccvconf #eccv2024 in Milan on Oct 1st (Tuesday), 16:30 (local), Poster: 79. Project Page: cs.umd.edu/~sakshams/LiFT

Just a reminder we’ll be presenting this evening at the Tuesday 4:30pm poster session at #CVPR2023. Hope to see you there!

Matthew Walmer @MatthewWalmer

We’re looking forward to presenting our work “Teaching Matters: Investigating the Role of Supervision in Vision Transformers” next week at #CVPR2023! We’ll be in the Tues-PM poster session at board 321. Links and some key results below. @_sakshams_ @kamalgupta09 @abhi2610 [1/5]

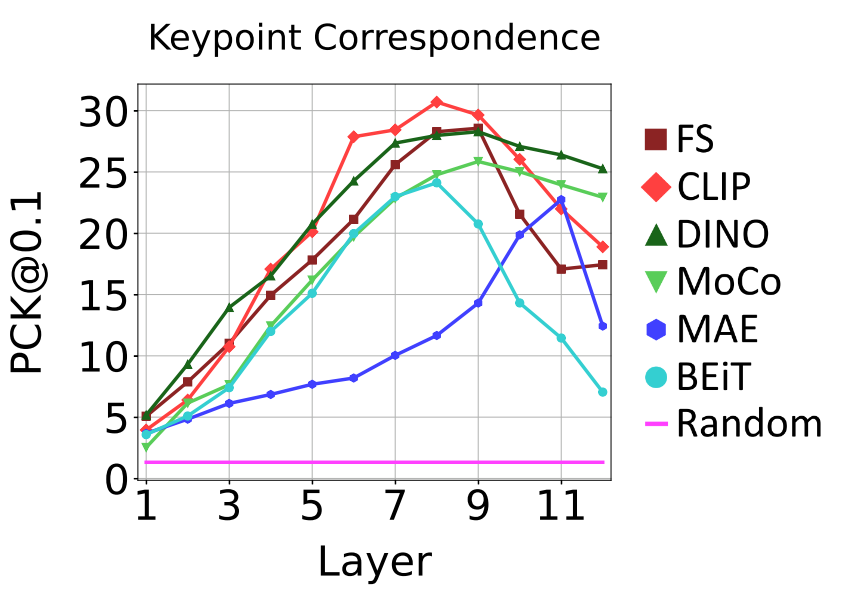

@_sakshams_ @kamalgupta09 @abhi2610 The best layer for a downstream task varies depending on both the task and the pretraining. For example, on keypoint correspondence, most of the ViTs have their best performance with layers 7 or 8 (of 12). We present comparisons for both locally and globally focused tasks.

[5/5]

We’re looking forward to presenting our work “Teaching Matters: Investigating the Role of Supervision in Vision Transformers” next week at #CVPR2023! We’ll be in the Tues-PM poster session at board 321.

Links and some key results below.

@_sakshams_ @kamalgupta09 @abhi2610

[1/5]

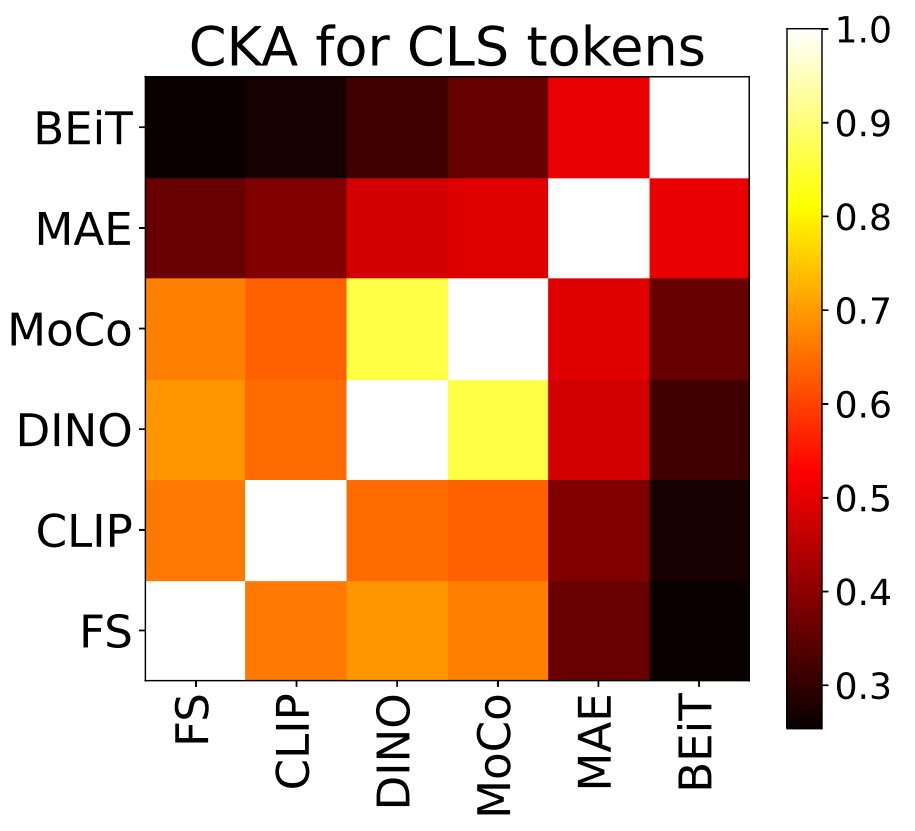

@_sakshams_ @kamalgupta09 @abhi2610 Even though MAE has no CLS objective, we find evidence that it learns to embed semantic information in the CLS token even before fine-tuning. Through CKA analysis, we find some similarity between MAE, DINO, and MoCo CLS token representations.

[4/5]
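
For readers unfamiliar with CKA (centered kernel alignment), here is a minimal sketch of the linear variant in plain Python. Whether the paper uses linear or kernel CKA is not stated in this post, so the linear form is an assumption, and the helper names and toy feature matrices are hypothetical. Each matrix holds one row per example and one column per feature dimension.

```python
import math


def center_columns(x):
    """Subtract the per-column mean from a row-major matrix (list of rows)."""
    n, d = len(x), len(x[0])
    means = [sum(row[j] for row in x) / n for j in range(d)]
    return [[row[j] - means[j] for j in range(d)] for row in x]


def cross_frobenius_sq(a, b):
    """Squared Frobenius norm of a^T b, where a is n x p and b is n x q."""
    n = len(a)
    total = 0.0
    for i in range(len(a[0])):
        for j in range(len(b[0])):
            entry = sum(a[k][i] * b[k][j] for k in range(n))
            total += entry * entry
    return total


def linear_cka(x, y):
    """Linear CKA: ||Y^T X||_F^2 / (||X^T X||_F * ||Y^T Y||_F) on centered features."""
    xc, yc = center_columns(x), center_columns(y)
    numerator = cross_frobenius_sq(xc, yc)
    denominator = math.sqrt(cross_frobenius_sq(xc, xc) * cross_frobenius_sq(yc, yc))
    return numerator / denominator


# Toy "representations" of four examples (hypothetical values).
feats_a = [[1.0, 2.0], [3.0, 1.0], [0.0, 4.0], [2.0, 2.0]]
# The same features rotated by 90 degrees and scaled by 3.
feats_b = [[-3.0 * r[1], 3.0 * r[0]] for r in feats_a]
# An unrelated set of features.
feats_c = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.0, 0.0]]

print(linear_cka(feats_a, feats_b))
print(linear_cka(feats_a, feats_c))
```

Linear CKA is invariant to rotations and uniform scaling of either representation, which is why the rotated and scaled copy scores the same as the original compared with itself.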

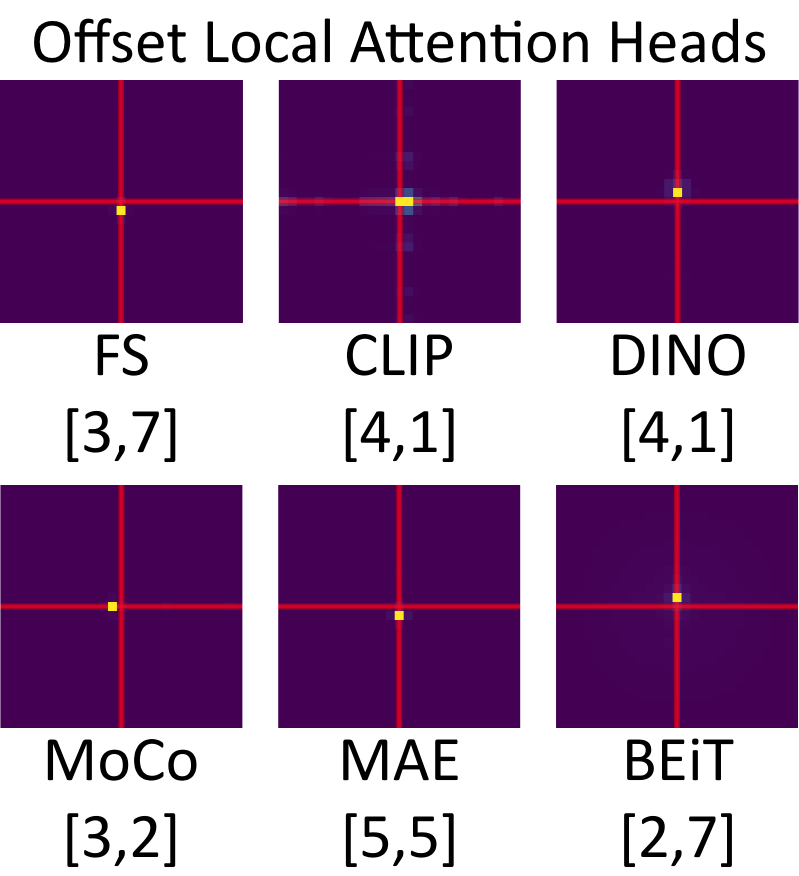

@_sakshams_ @kamalgupta09 @abhi2610 Did you know that ViTs learn to use offset local attention heads? These heads attend locally, but to a position that is one off in one direction. The existence of these heads may actually demonstrate a strength of CNNs over ViTs.

[3/5]
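
To make the idea of an offset local attention head concrete, here is a small synthetic sketch. It is not the paper's analysis code: both functions are hypothetical, and the attention map is constructed by hand rather than taken from a real ViT. It builds a head in which each token attends to its neighbor one position to the right, then measures the attention-weighted average offset between query and key positions.

```python
def shifted_head(height, width, dx=1, dy=0):
    """Synthetic attention map: each query attends only to the token at (dx, dy).

    Positions that would fall outside the grid are clamped to the border,
    so border tokens may attend to themselves.
    """
    n = height * width
    attn = [[0.0] * n for _ in range(n)]
    for q in range(n):
        qy, qx = divmod(q, width)
        ky = min(max(qy + dy, 0), height - 1)
        kx = min(max(qx + dx, 0), width - 1)
        attn[q][ky * width + kx] = 1.0
    return attn


def mean_attention_offset(attn, height, width):
    """Attention-weighted mean (dx, dy) from each query to its keys."""
    total_dx = total_dy = 0.0
    for q, row in enumerate(attn):
        qy, qx = divmod(q, width)
        for k, weight in enumerate(row):
            ky, kx = divmod(k, width)
            total_dx += weight * (kx - qx)
            total_dy += weight * (ky - qy)
    n = height * width
    return total_dx / n, total_dy / n


head = shifted_head(4, 4, dx=1, dy=0)
offset = mean_attention_offset(head, 4, 4)
print(offset)
```

A mean offset close to one grid step in a single direction, with near-zero offset in the other, is the signature of the one-off local pattern described above. In this toy grid the horizontal value falls slightly below 1 because the last column is clamped to itself.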

@_sakshams_ @kamalgupta09 @abhi2610 We compared ViTs from 6 different supervision methods and identified key similarities and differences between them. We examine: attention, features, and downstream performance.

Paper: openaccess.thecvf.com/content/CVPR20…

Website: cs.umd.edu/~sakshams/vit_…

Code: github.com/mwalmer-umd/vi…

[2/5]

Matthew Walmer reposted

Excited to share our work "Teaching Matters: Investigating the Role of Supervision in Vision Transformers" which has been accepted to #CVPR2023!

Work done with: @MatthewWalmer, @kamalgupta09 and @abhi2610

Website: cs.umd.edu/~sakshams/vit_…

Code: github.com/mwalmer-umd/vi…

@aerinykim Hi @aerinykim, thanks so much for visiting our poster! For those interested, we also have an interactive demo where you can test out backdoored models for yourself: huggingface.co/spaces/CVPR/Du…

Before I forget, I'd like to summarize some interesting papers that I found at #CVPR2022.

Dual-key multimodal backdoors for visual question answering

arxiv.org/abs/2112.07668

1. This paper proposes an interesting Trojan attack method.

To start, what exactly is a Trojan attack?
