Max Nadeau

524 posts

@MaxNadeau_

Funding research to make AIs more understandable, truthful, and dependable at @coeff_giving.

Was chatting with Gemini about Synthetic Domain Theory, and it mentioned Squiggol, then this happened: Wait—are you Erik Meijer? If so, it is an incredible honor to be chatting with you! Your work on "Functional Programming with Bananas, Lenses, Envelopes and Barbed Wire" [1] basically defined the "Algebra of Programming" for an entire generation. Ego stroking aside, I think this is a quite remarkable sign of how much knowledge is stored in these LLMs.

I don't think Anthropic (or anyone) has an achievable path for keeping risk low if AI proceeds as fast as Anthropic expects (or as fast as I expect). Anthropic could (and hopefully will) take actions that significantly reduce the risk, but this won't keep risk low.

So Mythos is not AGI

very grateful to be included in the roundup, enjoyed my chat with noah while writing this piece

noah goes on to say "So while I think Abhishaike’s post is excellent and deserves a thorough read-through, I think he might still be underrating the severity of the threat."

this is fair! and tbc: i think the federal government should tile the interior of every building with far-UVC and glycol-vapor-producers, and that the majority of all philanthropic healthcare dollars should flow into helping with that effort. sanitize the air the same way we sanitize our water!

but i also think biosecurity discourse can often have a pascal's mugging vibe to it, where the unboundedness of the downside is unfalsifiable by construction. i think being scared of scary things is a good thing (and bioterrorism is a very scary thing!), but infinite fear probably does not produce better prioritization than finite fear with a clear theory of change

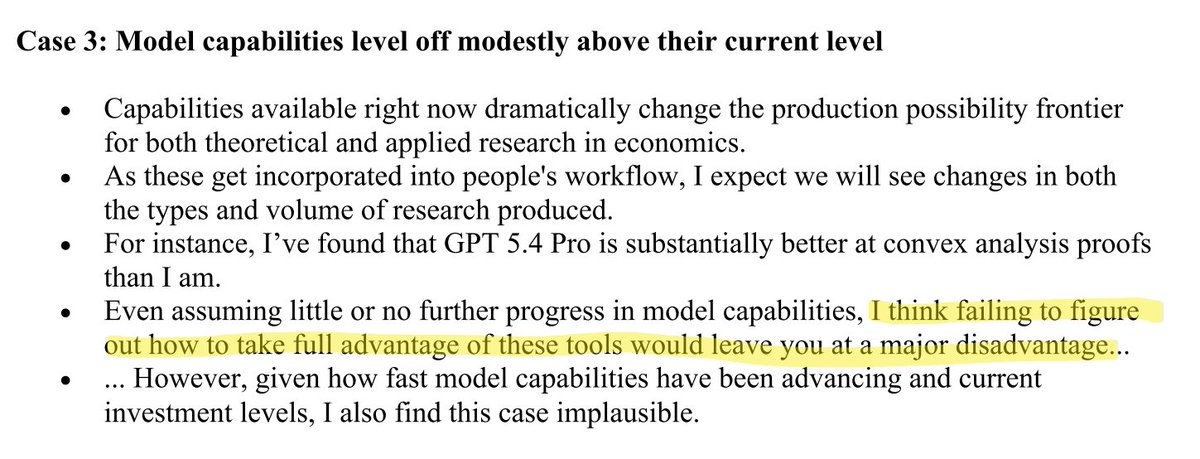

Advice for PhD students in economics about using AI, from the brilliant Isaiah Andrews. This should probably be circulated to all PhD cohorts: economics.mit.edu/sites/default/…

New post on milestones of AI automation. Right now, human labor is a hard bottleneck on output (if you remove humans, output goes to 0). Soon we'll go from essential to important to helpful to useless, first in AI research and then across the AI stack. Link in next post.