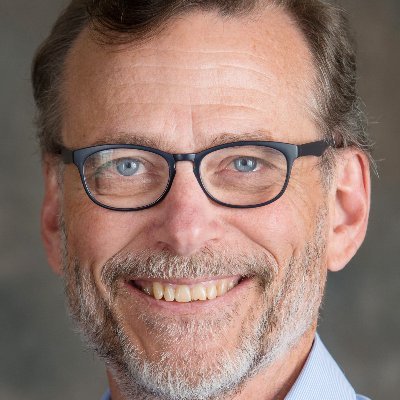

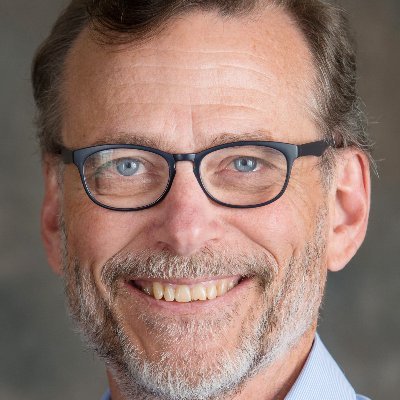

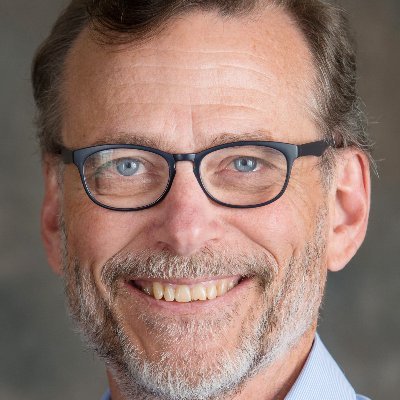

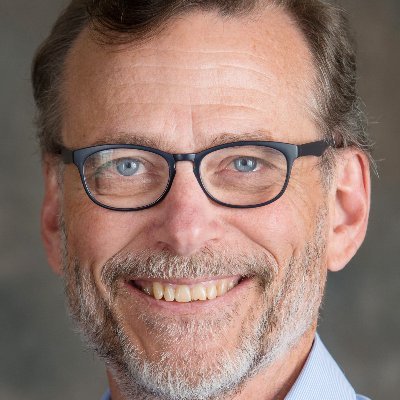

David McAllester

32 posts

@McAllesterDavid

Singularity or bust.

Yes, exactly this. I wish we didn't need to keep reminding people, and @Abebab is commendable for being gentle about it! For the long form of this argument, see Bender & @alkoller 2020: aclanthology.org/2020.acl-main.…

More and more papers use a denoising objective for self-supervised learning of language in NNs, e.g. BART or now UL2. None of them cite our (+@kchonyc) arxiv.org/abs/1602.03483, which I think may be the first to do this. Is it reasonable to find this frustrating?

@ylecun @rao2z @guyvdb @MITCoCoSci I don’t think that AGI is achievable in short-term; as outlined in The Next Decade in AI, I think progress requires coalition of

- advances in neurosymbolic integration
- richer knowledge bases
- better reasoning from incomplete data
- ways of inducing complex cognitive models