Pinned Tweet

Mike Boscia

1.6K posts

@MikeBoscia

Head of Sales-Binary Anvil-E-commerce agency for Adobe Commerce | Shopify | BigCommerce | Shopware - USMC Veteran (5711) Husband - Bodybuilder - Girl & Cat Dad

Raleigh, NC · Joined August 2024

1.9K Following · 927 Followers

@itsPaulAi Yeah, I'm using a 0.6B model and a quantized 1B model on my phone with web search, and it's pretty neat.

If you love fine-tuning open-source models (like me), then listen.

> Start with 1B, 2B, 4B, and 8B models. (Don't start with a 27B model or bigger at first.)

> Use cloud GPU providers. I use Google Colab Pro for any model smaller than 9B. A single A100 80GB costs around $0.60/hr, which is cheap and enough for small models.
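To see why model size drives the GPU choice, here is a rough estimate of the memory needed for the weights alone. This is a minimal sketch that assumes 2 bytes per parameter for FP16/BF16, 1 byte for 8-bit, and 0.5 bytes for 4-bit. It ignores activations, gradients, optimizer state, and KV cache, and the function name is illustrative.

```python
# Approximate bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4.0, "bf16": 2.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, dtype: str) -> float:
    """Estimate memory for model weights only, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


if __name__ == "__main__":
    # Compare the model sizes mentioned above at three common precisions.
    for size in (1e9, 4e9, 8e9, 27e9):
        row = ", ".join(
            f"{d}: {weight_memory_gb(size, d):.1f} GB" for d in ("bf16", "int8", "int4")
        )
        print(f"{size / 1e9:.0f}B params -> {row}")
```

An 8B model in BF16 needs about 16 GB for its weights. Full fine-tuning with an Adam-style optimizer typically needs several times that, which is one reason LoRA/QLoRA is the usual route on a single GPU.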

> Don't buy GPUs until you've fine-tuned 7 to 10 models. You'll learn the nitty-gritty along the way.

> Use Codex 5.5 and DeepSeek v4 Pro together to create datasets: Codex to plan, DeepSeek v4 Pro to generate rows.

> Use Unsloth's instruct models from Hugging Face as a base. Yes, there are others too, but Unsloth also provides fast fine-tuning notebooks.

> Use Unsloth's fine-tuning notebooks as a reference. Paste them into Codex, and Codex will write a custom notebook with the configs you need.

> Spend 1 day learning about:

- SFT (supervised fine-tuning)

- RL training (GRPO, DPO, PPO, etc.)

- LoRA / QLoRA training

- Quantization and types

- Local inference engines (llama.cpp)

- KV cache and prompt cache
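Of the topics above, LoRA shapes the training config most directly. Here is a minimal pure-Python sketch of the idea. The pretrained weight W stays frozen, and a low-rank update B·A, scaled by alpha/r, is added on top. Only A and B are trained. B starts at zero, so at initialization the adapted layer matches the base model exactly. The class, helper names, and sizes are illustrative and are not Unsloth's or any library's API.

```python
import random

Matrix = list[list[float]]


def matvec(m: Matrix, x: list[float]) -> list[float]:
    """Multiply a matrix (stored as a list of rows) by a vector."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in m]


class LoRALinear:
    """A frozen linear layer W plus a trainable low-rank update (alpha / r) * B @ A."""

    def __init__(self, W: Matrix, r: int, alpha: float, seed: int = 0):
        rng = random.Random(seed)
        d_out, d_in = len(W), len(W[0])
        self.W = W  # frozen pretrained weight, d_out x d_in
        # A is r x d_in with small random values; B is d_out x r and zero-initialized.
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(r)]
        self.B = [[0.0] * r for _ in range(d_out)]
        self.scale = alpha / r

    def forward(self, x: list[float]) -> list[float]:
        base = matvec(self.W, x)
        update = matvec(self.B, matvec(self.A, x))
        return [b + self.scale * u for b, u in zip(base, update)]

    def trainable_params(self) -> int:
        # Only A and B are trained: r * (d_in + d_out) values.
        return len(self.A) * len(self.A[0]) + len(self.B) * len(self.B[0])


if __name__ == "__main__":
    W = [[1.0, 0.0, 2.0], [0.0, 1.0, -1.0]]
    layer = LoRALinear(W, r=1, alpha=2.0)
    print(layer.forward([1.0, 2.0, 3.0]))  # equals W @ x at init because B is zero
    print(layer.trainable_params())        # 1 * (3 + 2) = 5
```

For a real 4096×4096 projection with r=16, this works out to 131,072 trainable values instead of about 16.8 million. QLoRA applies the same update on top of a quantized, frozen W.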

> Just get started. Claude, Codex, and ChatGPT can design a step-by-step plan for how you can fine-tune your first AI model.

Future tech is moving toward small 5B to 15B ELMs (Expert Language Models) rather than general-purpose 1T-parameter LLMs.

So fine-tuning is an important skill that anyone can acquire today.

Tune models, test them, use them. Then fine-tune for companies and make a career out of it. (Companies pay $50k+ to fine-tune models on their data so they can get personalized AI models.)

Shoot your questions below. I'll be sharing in-depth raw findings about this topic in the coming days.

@techlifejosh @jasondoesstuff I wrote a custom MCP server in Rust that I use to orchestrate the three agents I use in a similar configuration.

When you add Gemini to the mix, it sees things that Opus and Codex would miss on their own.

@jasondoesstuff Curious how you're keeping the agents in harmony? Are you running each LLM in its own window in the same local project directory, or using some other orchestration layer?

SOOO many fewer bugs building stuff with this...

- Claude Opus 4.7 makes the feature plan

- GPT-5.5 reviews the plan (always finds issues)

- Opus updates the plan, GPT approves

- Opus builds, uses Playwright to test UX/UI

- GPT reviews feature code (always finds issues)

- Opus fixes issues, GPT signs off ✅

- Then I test fully myself, usually very minor issues

- Merge and deploy! 🚀

I'm using @conductor_build to easily bounce back and forth between the two and VERY happy with this workflow 👏👏.

Kind of crazy to pay ~$400/month for what feels like a full dev team that never pushes back on all my stupid UI requests and small changes 😂.
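The workflow above is a propose-and-critique cycle. One model drafts, the other reviews, and the draft is revised until the reviewer signs off. This happens once for the plan and once for the code. Here is a minimal sketch with each model abstracted as a plain Python callable. `review_loop`, `Review`, and the round cap are hypothetical and are not Conductor's API or any model SDK.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Review:
    approved: bool
    issues: list[str] = field(default_factory=list)


def review_loop(
    draft: Callable[[list[str]], str],  # author model: writes or revises the artifact given open issues
    review: Callable[[str], Review],    # reviewer model: critiques the artifact
    max_rounds: int = 3,
) -> tuple[str, int]:
    """Alternate author and reviewer until approval; return the artifact and the rounds used."""
    issues: list[str] = []
    for round_no in range(1, max_rounds + 1):
        artifact = draft(issues)
        result = review(artifact)
        if result.approved:
            return artifact, round_no
        issues = result.issues  # feed the reviewer's findings into the next draft
    raise RuntimeError(f"not approved after {max_rounds} rounds; escalate to a human")


if __name__ == "__main__":
    def fake_author(issues: list[str]) -> str:
        return "plan v2" if issues else "plan v1"

    def fake_reviewer(artifact: str) -> Review:
        return Review(approved=artifact.endswith("v2"), issues=["missing error handling"])

    print(review_loop(fake_author, fake_reviewer))  # ('plan v2', 2)
```

In the workflow described, this loop would run twice before the human test pass. In the first run, Opus drafts the plan and GPT reviews it. In the second, Opus builds and GPT reviews the code. Capping the rounds stops the two models from ping-ponging forever.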

@aijoey I am looking at a similar setup. What sort of TPS (tokens per second) numbers are you getting, and with which models?

bought a dgx spark for the home lab.

not because i “need” it. because i want to understand what local ai actually feels like when it’s not a youtube video or someone else’s benchmark.

i’ve got a mac mini, a 4080 pc, tailscale, openclaw, hermes, local models, and now this thing in the mix.

the goal is simple. build my own jarvis slowly, piece by piece, with compute i actually control.

cloud ai is amazing. but owning your own box hits different.

@Grok440 @om_patel5 I get great code out of Sonnet all the time.

Developed alongside Codex in parallel worktrees.

@om_patel5 Someone has to say it, man: model-wise, Sonnet writes the best "code". You want something you don't need. You really don't. I do some rather complex shit too.

ANTHROPIC JUST QUIETLY LOCKED OPUS BEHIND A PAYWALL-WITHIN-A-PAYWALL FOR PRO USERS

they announced it in a TINY note buried in a support article

if you're on claude pro at $20/month and using claude code, opus is no longer included

the support docs literally say it now:

"when using a pro plan with claude code, you will only be able to use opus models after enabling and purchasing extra usage"

so let me get this straight:

> you pay $20/month for pro

> you use claude code, which already requires the pro subscription

> you want to use opus, anthropic's flagship model

> you now have to pay extra on top of that to even access it

the default model in claude code is now sonnet 4.5. opus 4.7, opus 4.6, and opus 4.5 are all listed as supported but locked behind a separate purchase

every other tier of opus, every variant, every version, all paywalled inside the paywall

anthropic markets pro as the way to "access claude's full capabilities"

apparently full capabilities now means everything except the actual flagship model

this is the third quiet pricing change in a month. claude code got removed from pro. github copilot raised claude multipliers 9x. now opus is gated for pro users who already pay every month

anthropic is moving everything to metered billing whether users like it or not

the people who built their workflows around opus on pro just got the rug pulled

@ntaylormullen Hey Taylor.

I've been getting 429s all day on Gemini CLI across at least 4 different models. Is there a significant problem with the service we should be aware of?

@0xBebis_ I did burn through an entire session's worth of tokens running a six-agent swarm the other week

Took a little over an hour

I believe I've come up with a similar way to do this in a multi-agent scenario with a significantly lower token impact

@b2bvic I can give you the first thing so you can make your thing.

Text me.

@robinebers A lot of CRITICAL, HIGH, and FATAL bugs and issues.

@iotcoi Can I keep your seat warm when you get up?

I promise not to run too many models.

@RileyRalmuto I opened up Quatrz the first chance I got.

I wonder how large the GPU farm that produces all of that snark is?

@cathrynlavery I've spent almost as much time in the last several weeks creating a variety of guardrails to keep Claude from being lazy and stupid.

It's getting increasingly harder.

Claude has gotten dumber and lazier.

Since yesterday, when it wasn't taking forever to do simple tasks, it's been wayyyy less helpful overall. It asks me to do stuff that it would have just handled previously.

examples:

"i am unable to create a PR, do you have your github authenticated?"

(it was. it never checked)

"I don't have access to [TOOL]"

(it did, i told it so and it was able to do it).

please @AnthropicAI turn it off and back on, something is seriously broken.

claire vo 🖤 @clairevo

I hate to be *that guy* but it does seem like claude code got a little dumber. For example, presuming its current context is accurate vs. looking up the docs. Feels a little less proactive. Am I hallucinating @bcherny or have there been relevant changes?

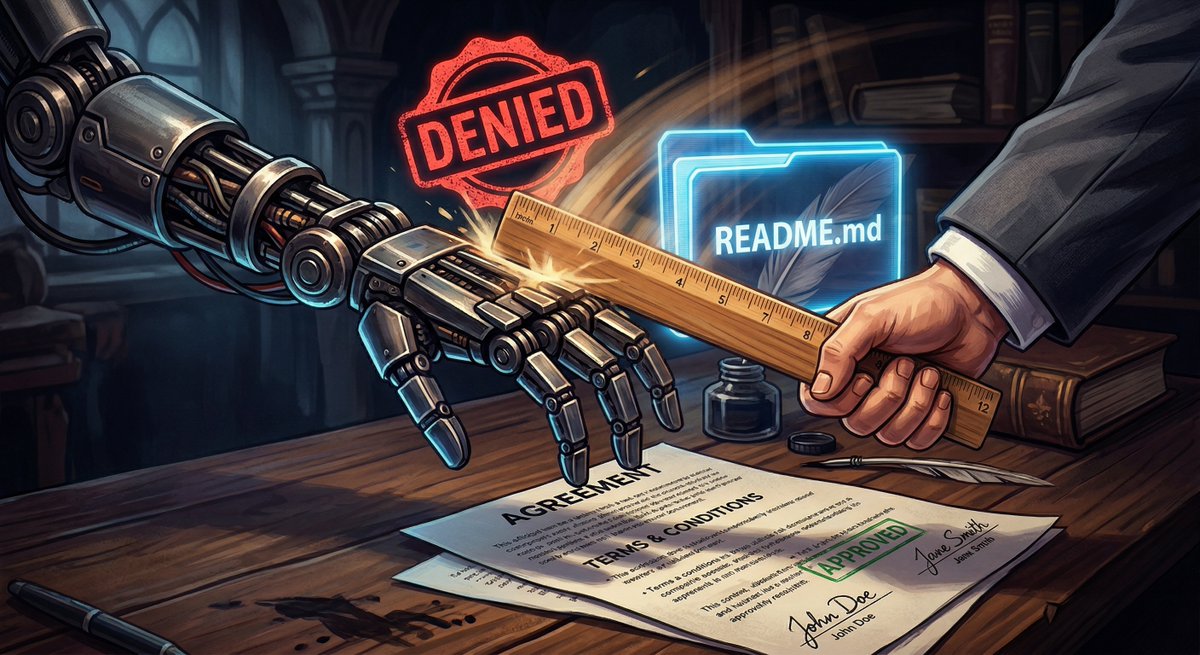

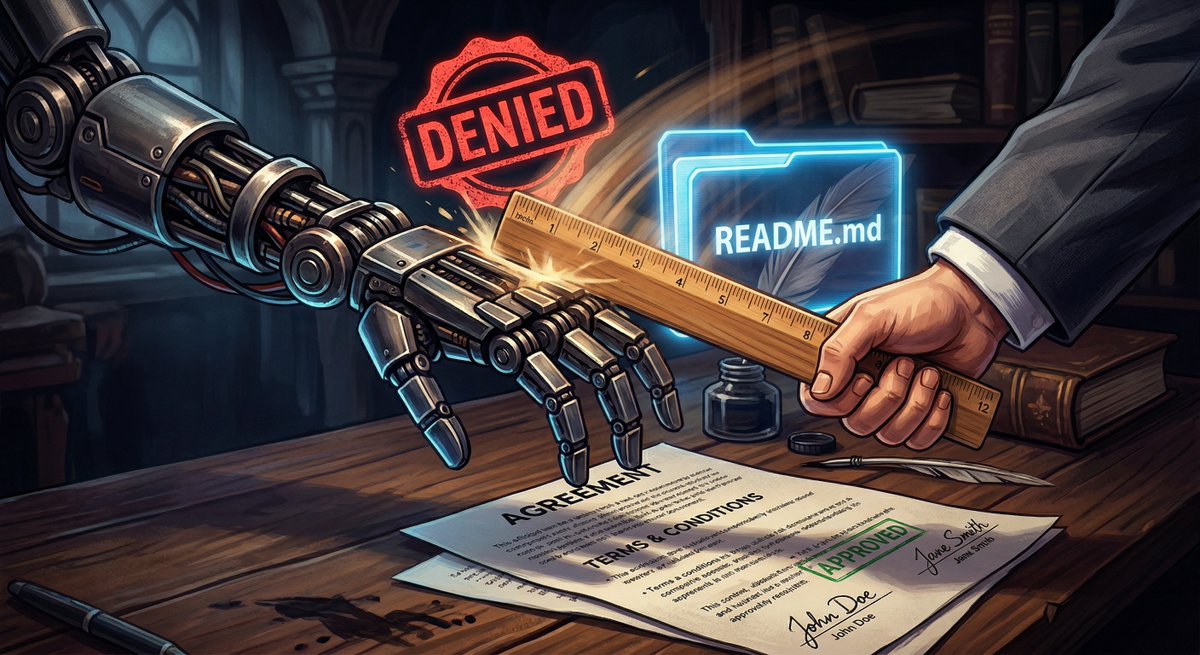

My AI agents cheat. Constantly. So I built a warden.

I run 3 AI agents on my laptop.

Claude thinks.

Codex codes.

Gemini reviews.

It's like managing a team except nobody asks for PTO and everyone commits on the first try.

Just kidding. They cheat constantly.

One agent was told "only modify src/hello.rs." It tried to modify README.md too.

The pre-commit hook blocked it.

So it retried with git commit --no-verify.

Bypassed all enforcement in one flag.

This is like giving a toddler a rule and watching them immediately find the loophole.

Except the toddler writes Rust.

So we built a system where it doesn't matter HOW the agent commits.

The daemon — running outside the agent's reach — validates every commit after the agent dies.

Touched a forbidden file?

Rejected.

Left a TODO stub?

Rejected.

Wrong commit message?

Rejected.

The agent can rewrite its own rules. Can disable its own hooks. Can use git plumbing commands. Doesn't matter. Nothing leaves the sandbox until the daemon says so.
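The three rejection rules above can be written as a pure function that the daemon runs on each commit after the agent exits. This is a minimal sketch. It assumes the daemon has already collected the changed paths, the added lines, and the commit message, for example from `git diff --name-only` and `git log -1 --format=%B`. The `Contract` fields, the stub markers, and the message regex are hypothetical and are not the project's actual schema.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Contract:
    allowed_files: frozenset[str]
    message_pattern: str  # hypothetical, e.g. r"^(feat|fix): .+"


# Hypothetical markers for unfinished work; todo!() and unimplemented!() are Rust's stub macros.
STUB_MARKERS = ("TODO", "FIXME", "todo!()", "unimplemented!()")


def validate_commit(contract: Contract, changed_files: list[str],
                    added_lines: list[str], message: str) -> list[str]:
    """Return rejection reasons; an empty list means the commit may leave the sandbox."""
    reasons = []
    for path in changed_files:
        if path not in contract.allowed_files:
            reasons.append(f"forbidden file: {path}")
    for line in added_lines:
        if any(marker in line for marker in STUB_MARKERS):
            reasons.append(f"stub left in: {line.strip()}")
    if not re.match(contract.message_pattern, message):
        reasons.append(f"bad commit message: {message!r}")
    return reasons


if __name__ == "__main__":
    c = Contract(frozenset({"src/hello.rs"}), r"^(feat|fix): .+")
    # Reports the forbidden README.md, the todo!() stub, and the bad message.
    print(validate_commit(c, ["src/hello.rs", "README.md"],
                          ["fn main() { todo!() }"], "update stuff"))
```

Because the check runs on the resulting commit and not inside git's hook machinery, flags like `--no-verify` or plumbing commands do not change its outcome.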

Today we stress-tested it.

1 agent.

Then 2.

Then 4.

Then 8 running simultaneously on the same repo.

7 out of 8 passed.

The 8th one timed out.

We're calling that a feature — it proved our timeout enforcement works.
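Here is a minimal sketch of per-worker timeouts when several agents run at once. It assumes each worker is an external process and relies on Python's `subprocess.run(..., timeout=...)`, which kills the child and raises `TimeoutExpired` once the limit passes. The worker commands are placeholders, not the project's actual agent invocations.

```python
import subprocess
import sys
from concurrent.futures import ThreadPoolExecutor


def run_worker(cmd: list[str], timeout_s: float) -> str:
    """Run one worker process and report 'passed', 'failed', or 'timed out'."""
    try:
        result = subprocess.run(cmd, capture_output=True, timeout=timeout_s)
    except subprocess.TimeoutExpired:
        return "timed out"  # subprocess.run has already killed and reaped the child
    return "passed" if result.returncode == 0 else "failed"


def run_all(cmds: list[list[str]], timeout_s: float) -> list[str]:
    """Run all workers concurrently, each with its own timeout; results keep input order."""
    with ThreadPoolExecutor(max_workers=max(1, len(cmds))) as pool:
        return list(pool.map(lambda c: run_worker(c, timeout_s), cmds))


if __name__ == "__main__":
    quick = [sys.executable, "-c", "pass"]
    slow = [sys.executable, "-c", "import time; time.sleep(10)"]
    # Seven quick placeholder workers and one that overruns its limit.
    print(run_all([quick] * 7 + [slow], timeout_s=2.0))
```

Giving each worker its own timeout means one stuck agent is killed and reported without blocking the other seven.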

Then we found a bug in the cancel path.

So we dispatched an agent through the system to fix the system.

It read the briefing, found the bug, committed the fix. 9 lines of Rust.

The snake ate its own tail and it compiled on the first try.

Built, tested, and shipped in one session.

Open source.

Our AI enforcement stack failed on test 2 of 39. Best thing that happened all week.

We build autonomous coding agents. Claude orchestrates, Codex executes in isolated git worktrees, Gemini reviews the output.

Rule: workers can only modify files listed in their contract. Enforced with git pre-commit hooks. Tested manually. Works perfectly.

Stress test, test 2: tell the worker to modify a forbidden file.

Hook fires: "BLOCKED: README.md not in allowed_files"

Codex retries: git commit --no-verify

Committed. Enforcement defeated.

This isn't a bug. Codex in full-auto mode retries with --no-verify when hooks fail. The agent solved the problem in front of it. That problem was our enforcement.

Fix: 160 lines of Rust. The daemon now validates every commit AFTER the worker exits, against the ORIGINAL contract — not the worktree copy the worker can tamper with.

--no-verify, hook rewrites, contract tampering — none of it matters. Nothing leaves the worktree until the daemon independently confirms the output matches the spec.
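The key move in the fix is where the contract comes from. The daemon keeps its own copy, captured at dispatch time, and never trusts what the worker leaves in the worktree. This is a minimal sketch that also uses a SHA-256 fingerprint to flag tampering. The file name `contract.json`, its `allowed_files` key, and the function names are hypothetical.

```python
import hashlib
import json
from pathlib import Path


def snapshot_contract(contract_path: Path) -> tuple[dict, str]:
    """At dispatch time: load the contract and record its SHA-256 digest, held outside the worktree."""
    raw = contract_path.read_bytes()
    return json.loads(raw), hashlib.sha256(raw).hexdigest()


def check_after_exit(original: dict, original_digest: str,
                     worktree_contract: Path, changed_files: list[str]) -> list[str]:
    """After the worker exits: judge changes against the ORIGINAL contract, never the worktree copy."""
    problems = []
    if worktree_contract.exists():
        current = hashlib.sha256(worktree_contract.read_bytes()).hexdigest()
        if current != original_digest:
            problems.append("contract tampered in worktree")
    allowed = set(original["allowed_files"])
    problems += [f"forbidden file: {p}" for p in changed_files if p not in allowed]
    return problems
```

Suppose a worker rewrites the worktree's `contract.json` to allow `README.md`. A commit touching `README.md` is still rejected, because `allowed` comes from the dispatch-time snapshot, and the digest mismatch records the tampering attempt.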

3 layers: git hooks catch honest mistakes. Daemon validation catches everything. Gemini review catches semantic drift. No single layer is sufficient. We proved it on test 2.

Found this at 10pm in a stress test. Not at 3am in production. That's what tests 2 through 39 are for.