mimic

@mimicrobotics

Physical AI to scale your most tedious tasks from manufacturing to logistics. Our robots intuitively learn new skills from you and operate fully autonomously.

First look at our collaboration with @AudiOfficial on bringing AI-driven robotics into industrial production. Our end-to-end pixel-to-action model, running on our bi-manual platform, is capable of performing a complex, dexterous and long-horizon insertion task.

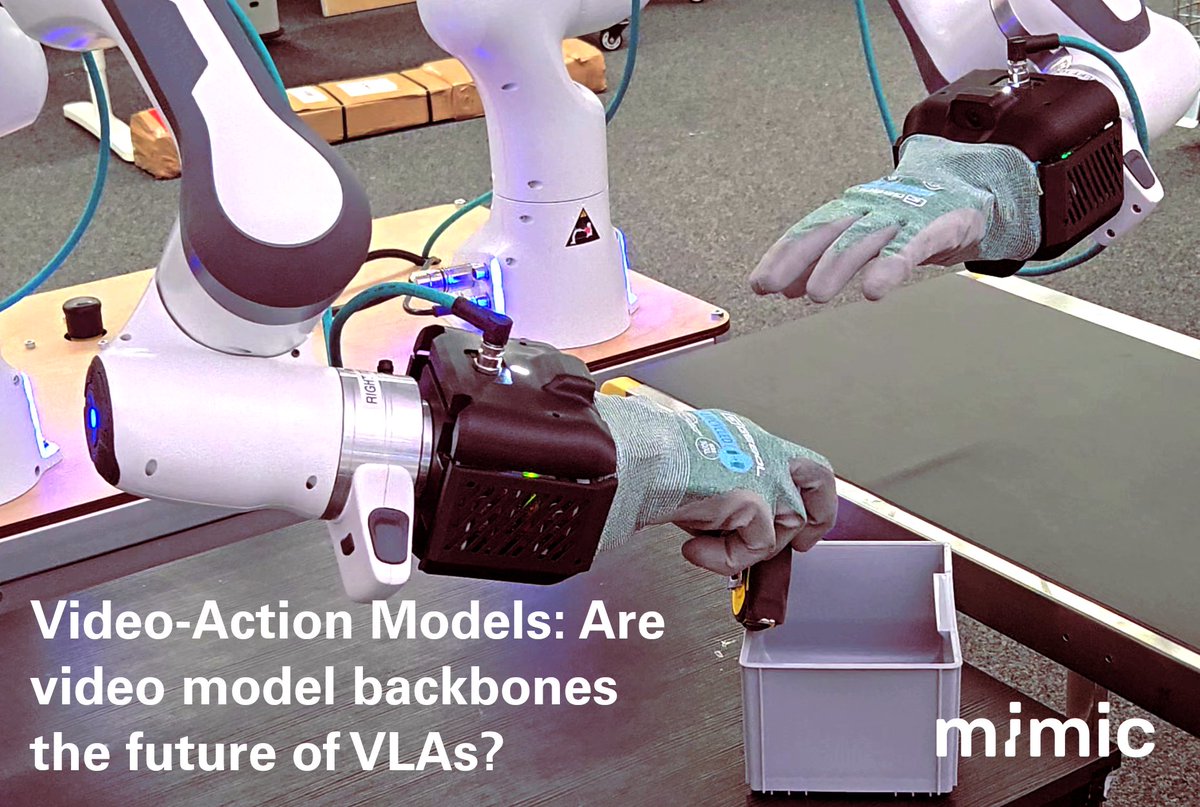

Today @mimicrobotics and friends are excited to share mimic-video, a new class of Video-Action Model that elevates video model backbones to first-class citizens for robot learning!

How can robots with different end-effectors efficiently learn from each other? In our latest work, we propose a new approach to training imitation learning policies across end-effectors that enables efficient cross-embodiment skill transfer in two steps: 🧵