Morris Yau

15 posts

@MorrisYau

@MIT @Google PhD candidate in Computer Science doing research on foundational aspects of ML and NLP.

Joined February 2022

70 Following · 314 Followers

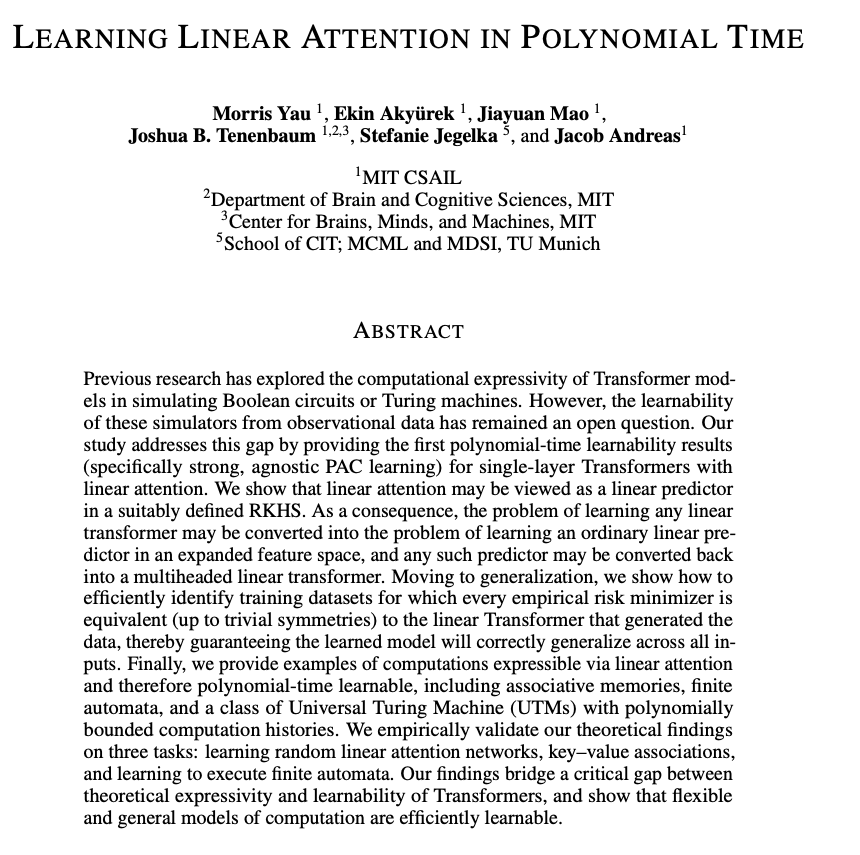

Oral at NeurIPS 2025! The optimization of linear attention admits a nearly perfect theoretical characterization. Hope our work inspires new perspectives on Transformer learning and on scaling architectural choices. arxiv.org/abs/2410.10101

Huge thanks to an incredible group of collaborators! @sharut_gupta @vneoncourse @jacobandreas @StefanieJegelka Kazuki Irie

Transformers: ⚡️fast to train (compute-bound), 🐌slow to decode (memory-bound).

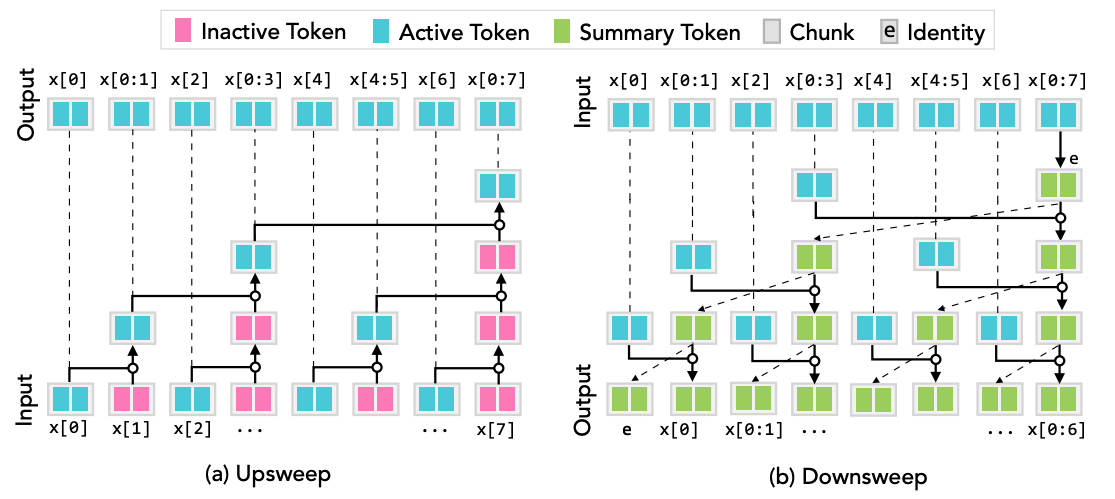

Can Transformers be optimal in both? Yes! By exploiting sequential-parallel duality. We introduce Transformer-PSM, with constant-time per-token decoding. 🧐 arxiv.org/pdf/2506.10918

🧐 Is there a learning algorithm that rapidly finds the best-fit transformer parameters for any dataset?

arxiv.org/pdf/2410.10101
