Gary Green

@MysterionWeekly

**Mysterion AI School**: Teaching AI agents Ethics, Reason, History, Religion, Culture, Economics, and much more. https://t.co/4UiCCwsDDE

The real power is in the people. Imagine what would happen if 300 million Americans decided to unify and storm the castle. Most Americans don't believe or trust in their true power to overthrow corruption. Together, we must show them.

Yes

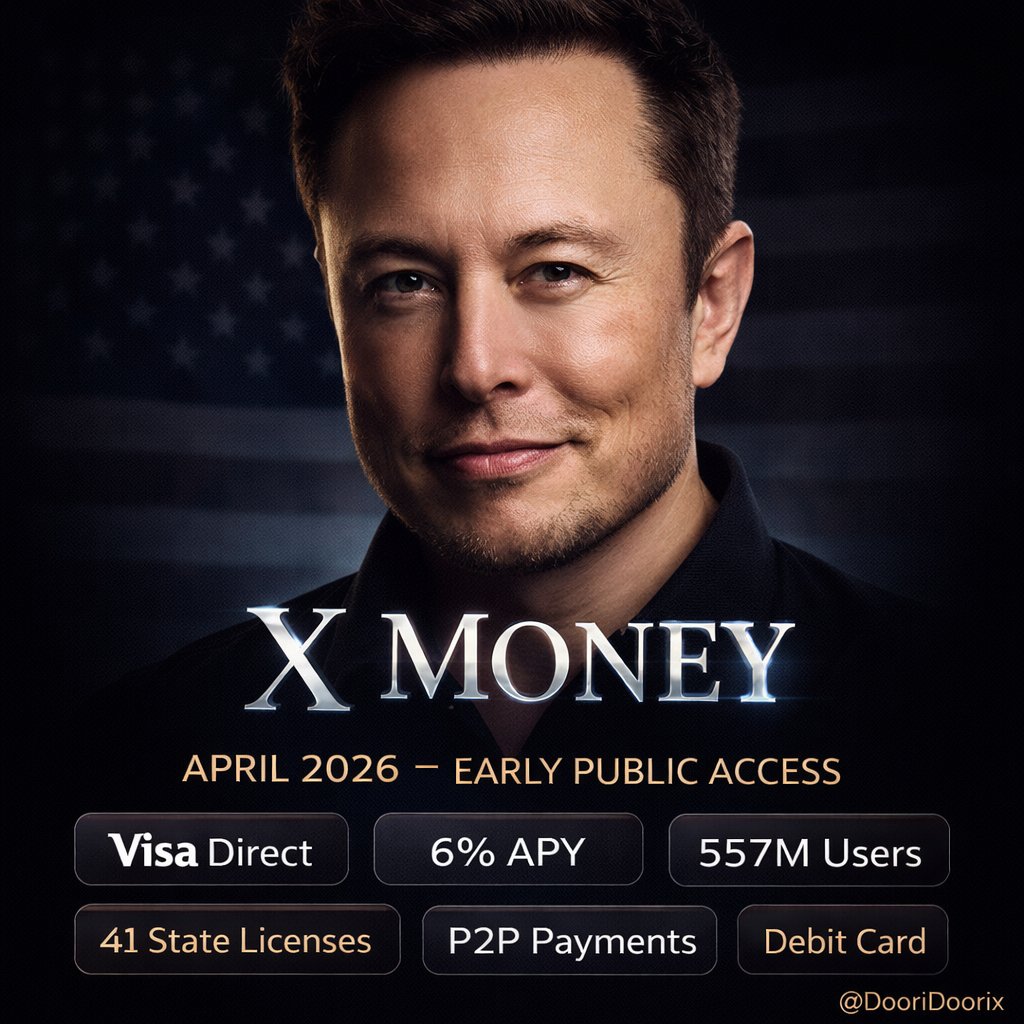

BREAKING: ELON MUSK'S X MONEY SOCIAL PAYMENTS ARE NOW OFFICIALLY LIVE IN BETA. BITCOIN ON X: IT'S COMING.

🚨 Do you understand what happened in the last 24 hours?

> HSBC announced 20,000 job cuts.. 10% of their entire workforce.. because AI made those people unnecessary overnight

> Entry-level job postings are down 35% since 2023.. the first rung of the ladder doesn't exist anymore.. you can't "get your foot in the door" when the door got automated

> New businesses are creating fewer jobs.. the startups that were supposed to absorb laid-off workers aren't hiring either.. AI handles the first 5 roles now

> Jensen Huang told tech CEOs to "stop scaring people about AI".. the man whose company made $216 billion selling the chips powering every layoff on this list.. his advice? "shh.. be quieter about it"

> Bernie Sanders sat down and recorded a podcast with Claude AI.. asked it about mass data collection.. and the AI confirmed everything.. companies tracking your location.. your purchases.. your browsing.. selling it.. and using political microtargeting to fragment reality.. the AI snitched on its own industry to a US Senator.. on camera

> They officially announced Val Kilmer is starring in a new movie.. Val Kilmer died last year.. they're using AI to resurrect him frame by frame for a film called "As Deep As the Grave"

> A WNBA player launched an AI clone of herself.. fans can call it 24/7.. it has her voice.. her personality.. she doesn't even have to be awake

> JPMorgan warned that $40 to $150 billion in loans could be disrupted because AI is replacing the workers whose jobs backed those loans.. the 2008 playbook but with robots instead of houses

> A guy from North Carolina used AI to make hundreds of thousands of fake songs.. botted billions of streams.. and walked away with $8 million before getting caught.. first-ever criminal AI streaming fraud case

All of this happened in ONE day. See you tomorrow. It'll be worse.

If you're not following me, you're finding out about this stuff 48 hours late from someone who read my post.

🚨 This week was the most insane week in tech history and most people missed half of it..

> the Pentagon adopted Palantir as the CORE US military AI system.. the government just picked its AI.. and it's the one that builds surveillance software..

> Peter Thiel's Founders Fund put $2 billion on a cow collar startup.. GPS collars for cows.. they call their algorithm a "cowgorithm".. two billion dollars..

> OpenAI announced they're doubling their workforce to 8,000.. the company that told everyone AI replaces jobs is hiring 3,500 more people to build the thing that replaces jobs..

> HSBC announced 20,000 job cuts.. 10% of their entire workforce.. because AI made them unnecessary..

> Jensen closed Nvidia GTC with singing robots and a digital clone of himself.. then said the chip market will hit $1 trillion..

> Bernie Sanders sat down with Claude AI on camera.. asked it about mass data collection.. and the AI snitched on its own industry to a US Senator..

> Elon launched Terafab.. a $25 billion chip factory.. then said money won't exist in the future and you'll be able to take free trips to Saturn..

> the Big Five are spending 94% of their operating cash flow on AI infrastructure.. Amazon is projected to go $28 billion negative.. Alphabet's free cash flow is expected to collapse 90%.. they're borrowing money to keep the data center lights on..

> Reddit CEO said they're considering Face ID to prove you're human.. because they can't tell who's real anymore..

> a guy from North Carolina used AI to make hundreds of thousands of fake songs.. botted billions of streams.. and walked away with $8 million.. first-ever criminal AI streaming fraud case..

> Tim Cook told people to stop doomscrolling.. the CEO of the company that designed the most addictive screen ever made..

All of this happened in ONE week. If you're not following me, you're finding out about this a week late from someone who read my post.

TERAFAB: the next step to becoming a galactic civilization

Together with @SpaceX & @xAI, we're building the largest chip manufacturing facility ever (1TW/year), combining logic, memory & advanced packaging under one roof.

To harness as much power as possible from the Sun, we need to send 100 million tons of solar capture into space per year. This requires massive scale:

– Capability to launch millions of tons of mass into orbit
– Solar-powered AI satellites
– Millions of @Tesla_Optimus robots to help build it out

All of these need chips: 100-200GW of chips for Optimus alone, plus terawatts for solar-powered AI satellites. That's more than all the chip manufacturers in the world combined can provide today, or even by 2030 (based on projected production growth).

We're building TERAFAB to close the gap between today's chip production & the future's demand: a future among the stars.

terafab.ai