In response to a question about whether the network can support all these modes across real-time and offline jobs:

“Typical cloud gaming hardware is not ideal for multi-GPU or offline rendering or compute/AI jobs (like Spock synthesis mentioned above). But the Render Network can support all these modes across RT and offline jobs and may also deliver much less latency for RT streams due to node diffusion spread out in almost every country on the globe, vs a few dozen data centers. This isn’t a huge issue with depth buffer streaming (needed for time warping for AR/VR cloud streams)”

@JulesUrbach 12.12.21

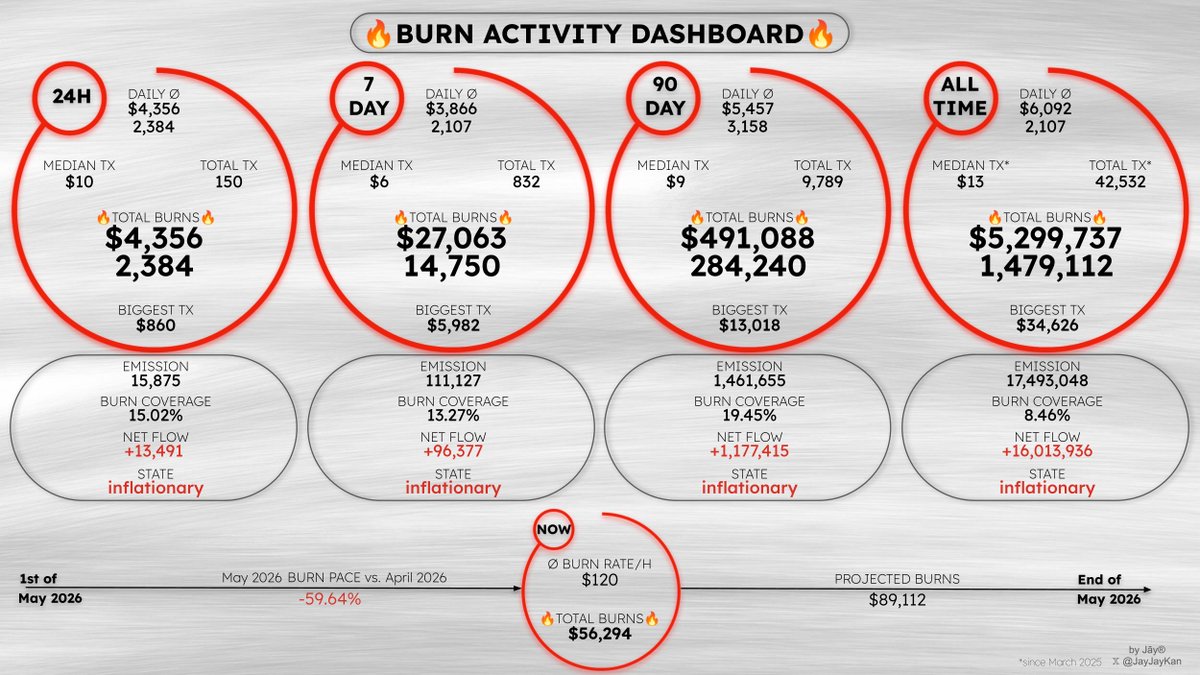

$RENDER
