NLPurr

@NLPurr

SciComm of Academic NLP Papers | Research Scientist | Explainability, Prompting, Benchmarking, Metrics, Red-Teaming & Eval of LLMs

We find that models generalize, without explicit training, from easily-discoverable dishonest strategies like sycophancy to more concerning behaviors like premeditated lying—and even direct modification of their reward function.

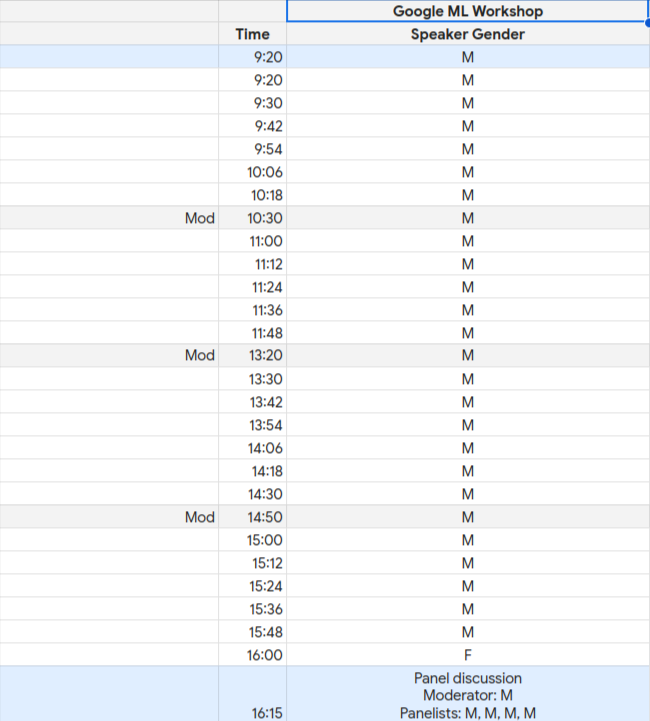

My hottest take: after ages of all of us really not liking @Meta, and tons of research-community dislike toward that platform, suddenly, "TO ME", @Meta and @ylecun seem to have the most level-headed, un-hyped (not talking about accuracy) participation in today's AI discourse.

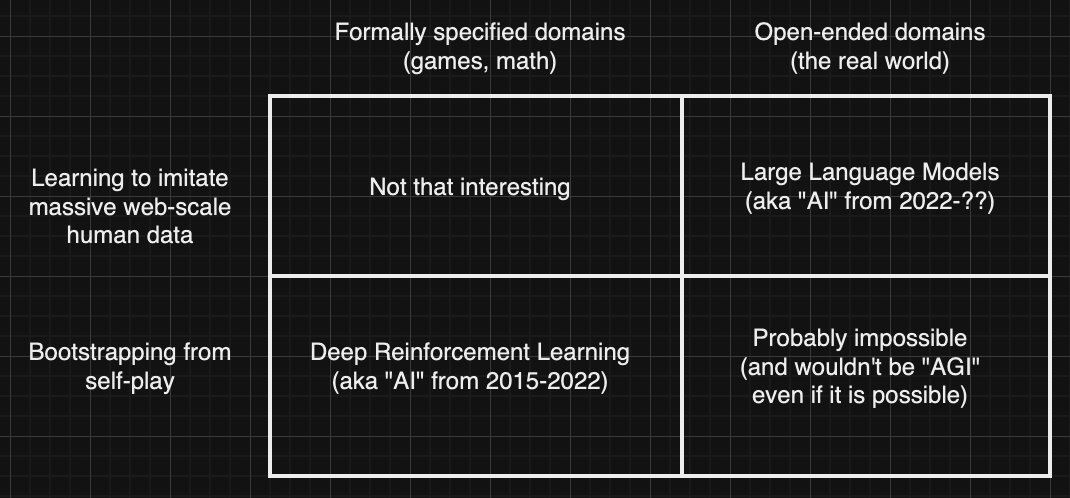

Yann LeCun thinks the risk of AI taking over is minuscule. This means he puts a big weight on his own opinion and a minuscule weight on the opinions of many other equally qualified experts.

We need a moratorium on talking about looming AGI until we have at least a working housebot.

🧵 LLMs seem to fake both "solving" and "self-critiquing" solutions to reasoning problems by approximate retrieval. The two faking abilities just depend on different parts of the training data (..and disappear when such data is not present in the training corpus..).

Our recent work, quote tweeted below, questions LLMs' ability to self-critique (which shouldn't be a surprise, given that there really is no reason to believe that they can reason! cf. x.com/rao2z/status/1…). And yet, several other researchers report results that seem to indicate that some form of self-critiquing mode helps the solving mode. The explanation for this seeming disparity is that the observed self-critiquing power is just approximate retrieval on corrections data informing approximate retrieval on correct data.

Let me unpack it a little. For most common use domains (e.g., Minecraft, grade-school word problems), the training corpora contain not only solution (correct) data but also corrections data (i.e., the types of normal errors found in incorrect solutions) (cf. x.com/rao2z/status/1…). This allows people to conflate approximate retrieval with reasoning or self-critiquing.

Like any observed solving of reasoning problems, observed self-critiquing abilities of LLMs are also best understood as approximate retrieval from training data. It is just that the latter depend on corrections data rather than on correct data.

This ability to fake solving or critiquing by retrieval gets exposed when LLMs are presented with problems/domains for which they had neither the correct data nor the corrections data in their training corpus. This is what our work on LLM planning abilities (cf. x.com/rao2z/status/1…) and on self-critiquing abilities (x.com/rao2z/status/1…) exposes.

tl;dr: whether solving or self-critiquing, it is approximate retrieval all the way for LLMs..

[I think this is also true of "automatic curriculum generation" claims à la Voyager, but more on that in another post..] (This thread is kind of an analog of the earlier thread on why people claim LLMs can generate plans: x.com/rao2z/status/1…)

Google has been promising Gemini for longer than the entire dev cycle for Grok. Being “GPU rich” isn’t everything.

I'm sure you've wondered: can GPT-4v draw a TikZ unicorn if we give it visual feedback? I am here to settle this open problem. An 8-part 🧵 on my attempt to get GPT-4v to draw a 🦄 as good as @SebastienBubeck et al.'s when given multiple rounds for improvement. TL;DR: I failed.

Enjoyed visiting UC Berkeley’s Machine Learning Club yesterday, where I gave a talk on doing AI research. Slides: docs.google.com/presentation/d…

In the past few years I’ve worked with and observed some extremely talented researchers, and these are the trends I’ve noticed:

1. When starting a project, average researchers tend to jump quickly to modeling proposals, architecture design, new ideas, etc. Great researchers often first spend time manually looking at data and playing with models to deeply understand the problem, before proposing an (often simple) approach.
2. Average researchers may often write hacky code that is not reusable and requires many separate steps. Great researchers are often also great software engineers: their code can be easily extended for future experiments, they write extensive tests, and they create infra to run many experiments quickly and visualize results with the fewest clicks.
3. While average researchers might work mostly by themselves or with one or two others, great researchers know that research is a social activity. They collaborate with people of varying experience, share results in writeups, and communicate their vision convincingly.
4. Average researchers might get stuck in rabbit holes: if they have experiments with only mediocre results, they spend 3 more weeks writing them up and submitting them to a conference. Great researchers quickly move on to something else when they know that one approach won’t be a breakthrough.
5. If an average researcher finds some success, they may try to keep doing that thing they are comfortable with for several more years, even if it becomes outdated. Great researchers pivot quickly and keep adapting to new advances and paradigms.
6. Average researchers often implement task-specific solutions, which are heavily optimized for a single task. Great researchers may also work on specific tasks, but they try to think of general approaches that can be applied to many other tasks.
7. Average researchers talk about and optimize for the number of papers or conference acceptances. I have never met a great researcher who still cares about such things.

(And by the way, being an average researcher shouldn’t be taken as an insult. It takes a lot of hard work to even do research at all :))

🚨 JOB ALERT 🚨 We're hiring research scientists/engineers to conduct research on next-generation assistant technologies that power increasingly autonomous agents, which strive to support humans.

Research Scientist: boards.greenhouse.io/deepmind/jobs/…
Research Engineer: boards.greenhouse.io/deepmind/jobs/…