Nantte Kivinen

We took over a former technical university. This is Hogwarts in real life. For people who want to work on something too early, too weird, too ambitious.

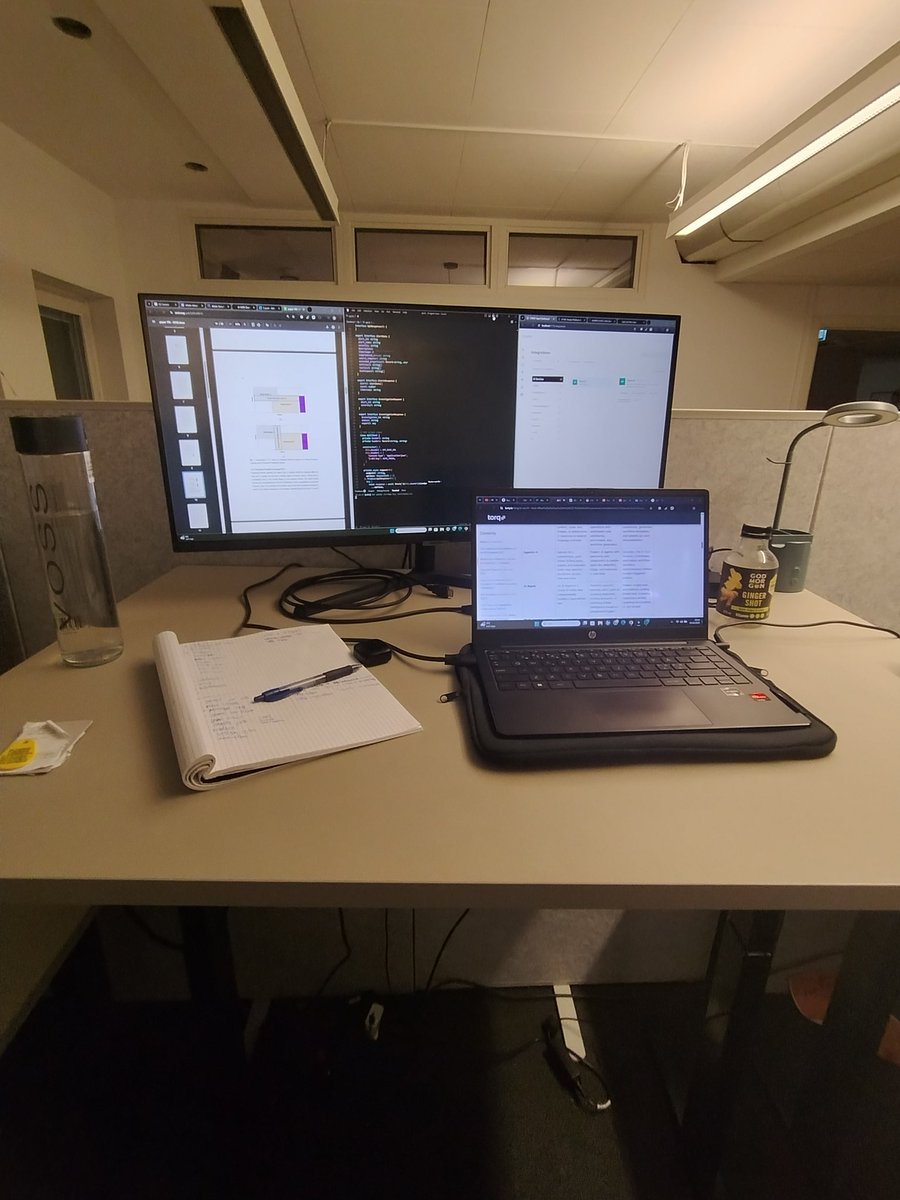

CPUs suck. We're building a new general-purpose chip that scales to thousands of cores while being more energy-efficient. We're hiring hardware design engineers; consider joining us: tendrils.co/jobs What we do differently ...

Home humanoid robots are getting closer. Shenzhen MindOne Robotics is testing its robot brain on the Unitree G1, and the G1 already appears to have learned human-like household chores: watering plants, moving packages, cleaning mattresses, tidying up, and more. Honestly, I wanna buy one.