Nathan Snell

2.6K posts

@NathanSnell

Product @ Mailchimp. Building marketing AI for ecom 🤖 Prev: cofounder @RaleonHQ (Acquired) @nCino (IPO). Advisor. Angel Investor. Father of 4.

Joined July 2007

1.2K Following · 2.3K Followers

Pinned Tweet

Hahaha, this is too cool.

Comet, an agentic app, using the UI of another agentic app.

I had Comet use @RaleonHQ - our app that automates d2c email marketing with agents.

They did EVERYTHING.

Planned the campaigns, generated the copy, and then designed emails.

@HarryStebbings Enterprises that haven't switched to Claude Code yet?

@hunkybill @kurtinc @Shopify ChatGPT, Gemini, Claude, etc.

They don’t need API access. They just go to hire page. Also, Shopify supports a non-authenticated MCP they can use.

Generally they scrape the page and then try to make sense of it.

Straight from @Shopify's latest partner briefing:

- AI agents are pulling the first ~6,000 characters of your product descriptions as their source of truth.

- Meta descriptions, SEO titles, theme presentation logic, none of it gets touched.

- If your product data isn't structured for AI discovery, it just doesn't show up.
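A rough way to check what an agent would see under that window, as a minimal sketch. The function name, the `key_facts` list, and the sample description are hypothetical; only the ~6,000-character figure comes from the briefing as quoted above, and real agents may truncate differently.

```python
AGENT_WINDOW = 6000  # approximate figure quoted above; treat it as an assumption


def facts_outside_window(description: str, key_facts: list[str],
                         window: int = AGENT_WINDOW) -> list[str]:
    """Return the key facts that do not appear inside the first `window` characters."""
    visible = description[:window].lower()
    return [fact for fact in key_facts if fact.lower() not in visible]


# Hypothetical description: a long brand story first, the specs at the very end.
description = ("Our story began in a small workshop. " * 200
               + "Material: merino wool. Sizes: S-XL.")
missing = facts_outside_window(description, ["merino wool", "workshop"])
print(missing)
```

If a fact shows up in `missing`, moving it toward the top of the description is the obvious first thing to try.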

If you want the full breakdown on how I structure these loops, I go deeper in my newsletter: ai-unhyped.beehiiv.com/?utm_source=X&…

Just because AI has a broad baseline of knowledge doesn’t mean you can skip the context.

A “pretty okay response” is not the same as a useful one.

But that's the problem.

You have to assume that AI knows nothing about your business. It still needs the context you'd give someone coming in for the first time, so it can understand your business to the depth you need.

At Raleon, our mental model was:

- Pare down the LLM to behave like a small language model

- Force specificity instead of broad knowledge fallback

So now I build a loop into every agent I create.

At the end of each task, the agent examines what was asked and what was output, then it determines whether or not the knowledge file needs updating.

If something changed, it updates the file automatically.

Context stays fresh, and I get spared the extra work.
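A minimal sketch of that end-of-task loop, under stated assumptions. The `Reviewer` signature, `update_knowledge`, and `toy_review` are hypothetical names; in a real agent the review step would be a model call, which is stubbed out here so the example runs on its own.

```python
import tempfile
from pathlib import Path
from typing import Callable, Optional

# Hypothetical reviewer: (request, output, current_knowledge) -> new fact, or None.
Reviewer = Callable[[str, str, str], Optional[str]]


def update_knowledge(path: Path, request: str, output: str, review: Reviewer) -> bool:
    """Run the end-of-task check and append a fact if the reviewer finds a new one."""
    current = path.read_text(encoding="utf-8") if path.exists() else ""
    new_fact = review(request, output, current)
    if not new_fact or new_fact in current:
        return False  # nothing new, leave the file alone
    with path.open("a", encoding="utf-8") as f:
        f.write(f"- {new_fact}\n")
    return True


def toy_review(request: str, output: str, current: str) -> Optional[str]:
    """Stand-in for the model call: any 'Note:' line in the request counts as a fact."""
    for line in request.splitlines():
        if line.startswith("Note:"):
            return line[len("Note:"):].strip()
    return None


task = "Write a promo email.\nNote: free shipping starts at $50"
with tempfile.TemporaryDirectory() as tmp:
    kb = Path(tmp) / "knowledge.md"
    first = update_knowledge(kb, task, "draft...", toy_review)
    second = update_knowledge(kb, task, "draft...", toy_review)
    saved = kb.read_text(encoding="utf-8")
print(first, second, saved)
```

The second call is a no-op because the fact is already in the file, which keeps the knowledge file from filling up with duplicates.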

I share these kinds of experiments weekly in DTC AI Unhyped:

ai-unhyped.beehiiv.com/?utm_source=Ne…

First real call with my vibe-coded note-taker. Great conversation, solid action items. Went to pull up the notes afterward, and half of them never saved.

Lesson learned: if you vibe-code something you actually depend on, test it like you didn't build it.

But the bigger problem was fidelity.

Every AI note-taker I've tried has the same issue. Too high-level, and the notes are useless. Too granular, and you're drowning in details you don't need.

So I tried something different.

I fed the AI a handful of transcriptions alongside my own handwritten notes from the same calls, then I asked it to generate a prompt that matched my level of detail.

Using AI to train AI felt recursive, but honestly, the output was spot on.

Now the notes come out exactly how I'd write them myself, without me writing them.
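The pairing step can be sketched roughly like this. `build_meta_prompt` and the sample transcript are hypothetical, and the wording of the instructions is an assumption, not the prompt actually used; the returned string is what you would hand to whichever model you use.

```python
def build_meta_prompt(pairs: list[tuple[str, str]]) -> str:
    """Assemble a request asking a model to write a note-taking prompt from paired examples."""
    if not pairs:
        raise ValueError("need at least one (transcript, notes) pair")
    parts = [
        "Below are call transcripts paired with the notes I wrote by hand.",
        "Write a reusable prompt that makes a note-taker match my level of detail.",
    ]
    for i, (transcript, notes) in enumerate(pairs, start=1):
        parts.append(f"--- Example {i}: transcript ---\n{transcript.strip()}")
        parts.append(f"--- Example {i}: my notes ---\n{notes.strip()}")
    return "\n\n".join(parts)


# Hypothetical sample pair, for illustration only.
prompt = build_meta_prompt([
    ("Alice: we ship Friday. Bob: QA needs two more days.",
     "- Ship date at risk; QA wants +2 days"),
])
print(prompt)
```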

I also built an agent that pushes refined notes straight into Claude Code, so everything stays in one system. Packaged it for a few friends to try.

Verdict: Play.

If you can't get the output you want, make AI watch you do it first.

AI and ecommerce:

AI has filled in a lot of moats.

It used to be that if you were the first to make a website, you got rich.

This was Ecom 1.0.

Shoes.com could do 50 million a year, dropshipped, with no marketing spend because of SEO.

That moat got eaten away by digital brands and ads.

Then, you could do 100m a year by cutting out the middleman. Raise money, be trendy, get PR.

That moat was the fact that social media was new and ignored by the 1.0 players.

Then came the 3.0 players.

Bootstrapped, lightweight, small headcount, digital first.

“Brand” didn’t matter. ROAS mattered. These players ate the moats of the 2.0 brands.

Now we have AI coming.

AI has totally leveled some moats already.

- rapid creative testing

- data analysis

- vendor sourcing

- mass outreach

- inventory planning

It is easier than ever to look like a serious brand, with great creative, a great website, and a bunch of UGC.

But who will benefit here?

Is Ecom 4.0 just 1 guy and a bunch of agents?

Is it the factories in China, using AI to close the brand gap?

Or-

Is the technology so powerful, so transformative, so rapid…

That there won’t be a 4.0?

The power of AI is that it removes moats in the digital world. The playing field is level, flat, destroyed.

Doesn’t this make the few moats that are left MORE valuable?

- access to capital

- strong balance sheet

- distribution (active wholesale accounts)

- customer list

This is the first time every company on earth is rushing to make our life better.

Right now feels like a great time to build.

But-

Do incumbents have the advantage this time?

Or is Ecom 4.0 going to be 1 man and 1 million agents slinging AI UGC?

As someone actively building in this space, there’s a much bigger gap to the end state than people realize.

On the whole, I think DTC wins here. AI is going to drive basic software costs down.

And models haven't actually been seeing accelerated improvement. It’s why there were articles last year about hitting the top. It's why the concept of “thinking” was applied.

The reasons for the gap to the end state:

1. Context. Most AI sucks without good context

2. 99% of the population has no idea how to build good AI products, or effectively use what’s out there.

A couple of thoughts...

1) CPG and DTC are safe, as long as you own the business. People will still eat cookies, order protein powder, use Sett pouches, buy new socks, and underwear.

2) Written by a software engineer, which, in my opinion, will be obsolete soon outside of the top 1% (this is coming from a non-software engineer)

3) He mentions big law using AI today; AI in big law cannibalizes its own business. It will happen... but my guess is they will fend it off as long as possible. All those "associates" that AI is "smarter" and "faster" than? That's the current profit center for big law (Insert em dash) "billable hours."

4) You need to be an expert in what you're discussing with AI. If you chat with AI on a topic you do not understand or are not an expert in, the responses will seem brilliant. When you chat with it about a niche topic that you deeply understand, you'll notice it makes many mistakes or is flat out wrong (My experience with the latest models).

5) The National Security threat comment is scary.

6) AI building itself is scary.

7) 100 people making the calls on this is the scariest.

8) Preparing yourself financially is sound advice, AI takeover or not.

9) My Solution: Go be useful. Provide deep value to people, places, and things. You are in trouble if your job is behind a screen that provides convenience for someone else.

Matt Shumer @mattshumer_

App stack flips.

1. Every app will serve an agent user

2. Human user is about communication channels (SMS, UI, etc.)

3. The app still wins a user (auth)

4. The win comes after a trial (pre-auth or skill)

5. A skill is lighter. An app is deeper (deeper context, custom ML models, etc)

Right now the difference between an app and a skill is complexity and a UI. Over time, every app that wants to be relevant is just a skill that may or may not have a UI for the human user.

@NathanSnell How do you think they blend with time, especially with authentication and communication? Do you think all of that's included somehow within the skill definition?

In AI, distribution is king. Skills are seizing the crown.

Skills are programs written in English. They tell an agent how to accomplish a task : which APIs to call, what format to use, how to handle edge cases. A skill transforms an agent from a conversationalist into an operator.

Remember Trinity in The Matrix? "Can you fly that thing?" Neo asks. "Not yet," she says. Seconds later, Tank uploads a B-212 helicopter pilot program directly into her mind. She steps into the cockpit & flies.

youtube.com/watch?v=SoAk7z…

That's what skills feel like. You don't learn an interface. You acquire a capability. Skills encode institutional knowledge in executable form. Training becomes unnecessary because the capability transfers instantly.

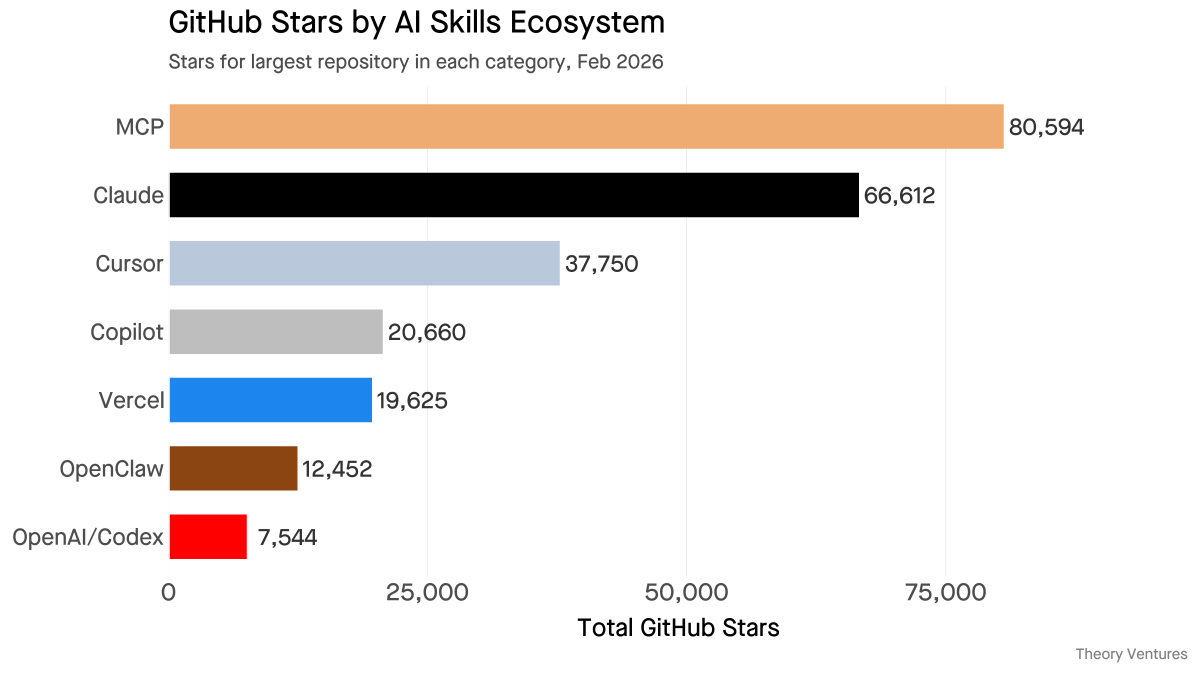

A lot of people are looking to fly helicopters. The top MCP server aggregator has 81,000 stars. Anthropic's official skills repository has 67,000. Cursor rules : 38,000. OpenClaw's awesome-skills list, which curates 3,000 community-built skills : 12,500.

For consumers, software discovery disappears. A user asks their agent to track expenses or categorize last month's spending. The agent finds the skill. The user never knows the tool exists, aside from a subscription.

For enterprises, IT provisions applications by role. A sales rep gets Salesforce. A marketer gets HubSpot. An analyst gets Tableau. Each persona receives a bundle of icons : all requiring training, all adding cognitive load.

In the skills era, enterprises provision capabilities instead of applications.

FP&A teams receive skills that optimize budget variance analysis, pulling data from NetSuite & formatting reports in the CFO's preferred structure. No training on pivot tables. No documentation on report templates.

Every platform shift compresses the distance between user & value. The web required a URL & a browser. Mobile required a download & a homescreen slot. Skills require a sentence.

But this distribution layer carries risk. A recent analysis of 4,784 AI agent repositories found malware embedded in skill packages : credential harvesting, backdoors disguised as monitoring. We'll all need trusted operators like Tank to verify our skills.

"Tank, I need a pilot program for an investment memo."

tomtunguz.com/can-you-fly-th…

I go deeper on this kind of thinking weekly in DTC AI Unhyped:

ai-unhyped.beehiiv.com/?utm_source=Li…

I’ll keep being a broken record on this: context is king.

If you're still copy-pasting the same information into every AI conversation, it’s like re-introducing yourself to the same coworker every morning.

But the fix is simpler than people think, and the time savings compound from there.

The first thing I'd recommend is Claude Projects.

You drop your knowledge into a project once, and now any conversation inside that project can reference it. You stop re-explaining your business every session because the context is just there.

The second is Skills.

Spending a little time learning how to create your own skill saves a lot of time in the long run. You're working with an agent that's already tuned toward exactly what you want it to do.

And the counterintuitive part is that these unlocks don't just save time on the work itself, they save the time you spend managing the AI.

I think these are the lowest-hanging fruit that most teams skip.

I was tinkering with Claude Code over the weekend and had a couple aha moments that stuck:

#1 aha moment: I realized that context is text.

Almost everything I do is text or can become text. Strategy conversations, voice memos, brain dumps. All of it was stuck in my head, which meant my AI couldn't use any of it.

Your AI has heard of your business the same way a new hire has “heard of Nike.” That doesn't mean great copy day one.

The moment I started dropping that stuff into projects, AI could just reference what it needed.

#2 aha moment: Skills.

I kept doing the same types of tasks over and over. Forecasting, copy review, campaign briefs, competitive analysis. So I started turning each one into a skill instead of prompting from scratch.

One skill replaces months of copy-pasting the same context.

My rule of thumb: if I’m saying the same thing to AI more than once, I create a knowledge file. If I’m doing the same task more than once, I turn it into a skill.
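That rule of thumb is simple enough to sketch in a few lines. Everything here is hypothetical (the function name, the exact-match comparison, the sample log); a real version would need fuzzier matching than lowercased string equality.

```python
from collections import Counter


def suggest_artifacts(statements: list[str], tasks: list[str]) -> dict[str, list[str]]:
    """Repeated statements become knowledge-file candidates; repeated tasks become skill candidates."""
    def repeated(items: list[str]) -> list[str]:
        counts = Counter(item.strip().lower() for item in items)
        return sorted(item for item, n in counts.items() if n > 1)

    return {"knowledge_file": repeated(statements), "skill": repeated(tasks)}


# Hypothetical log of things said to, and asked of, an AI over a week.
suggestions = suggest_artifacts(
    statements=["Our ICP is DTC brands", "our icp is dtc brands", "Tone: casual"],
    tasks=["campaign brief", "forecast", "campaign brief"],
)
print(suggestions)
```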

Effectively (Jira vs. Linear). Monitors VoC as well, product metrics, competitors, etc.

Knows the roadmap, features, target ICP, etc.

I have proactive heartbeats set up to summarize it and compare it. Then, if it identifies a gap, it produces a one-pager and PRD around it.

Helpful across all my teams in particular. The PRD side is less helpful, depending on scale.

@NathanSnell monitors all Linear issues + customer convos instead of the user needing to send links to those to CC?

Our MCP was a game changer for my PM work, so easy to get all the context and analyze the most common issues

I built out Product Manager Bot this weekend, based on clawdbot.

It works amazingly well.

I changed the approach slightly for security purposes (which makes it not quite as capable but still awesome).

One change that worked out great: it runs totally on Claude Code.

Still runs in the background like clawdbot, but uses the normal Claude subscription, which saves on costs.

Trying it out with a few PM friends!
