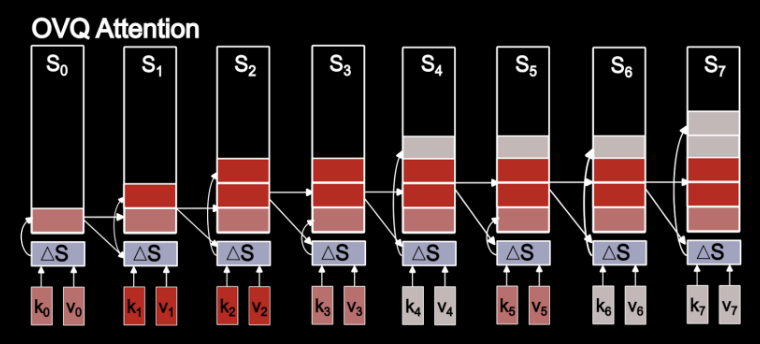

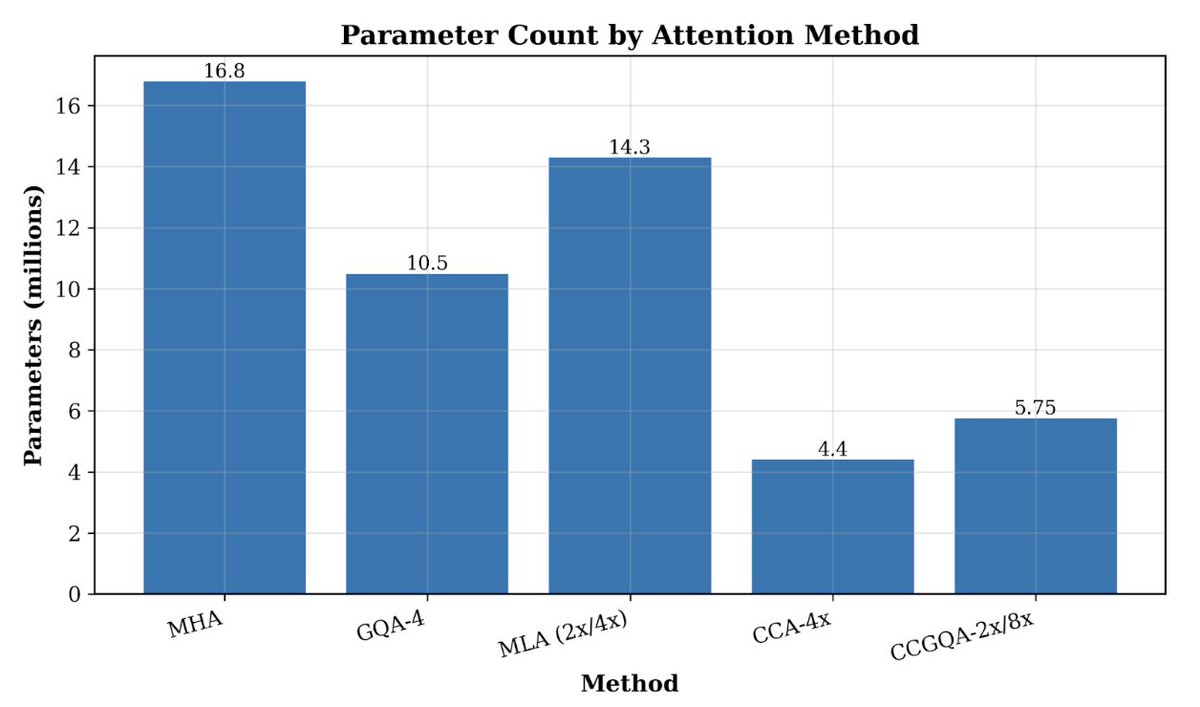

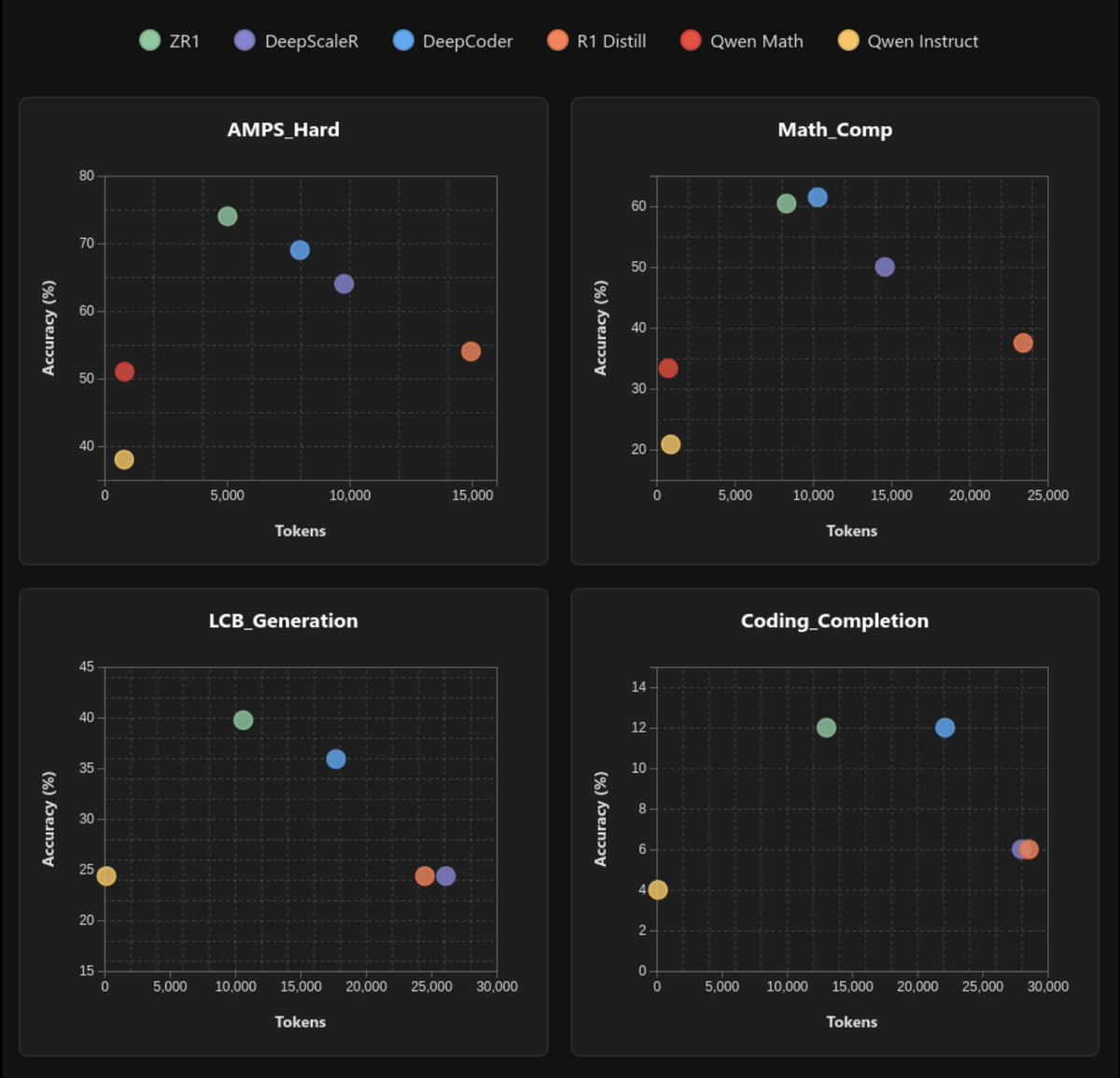

Today @ZyphraAI releases OVQ-attention, an advancement for efficient long-context processing! Existing LLM layers either compress their input too much, leading to poor long-context understanding, or too little, leading to expensive memory+compute. OVQ-attention offers an alternative path. 🧵