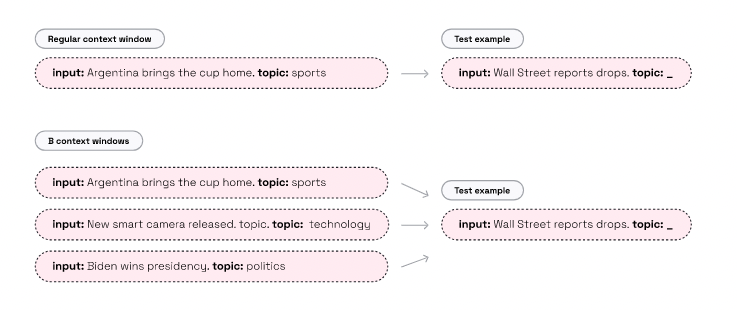

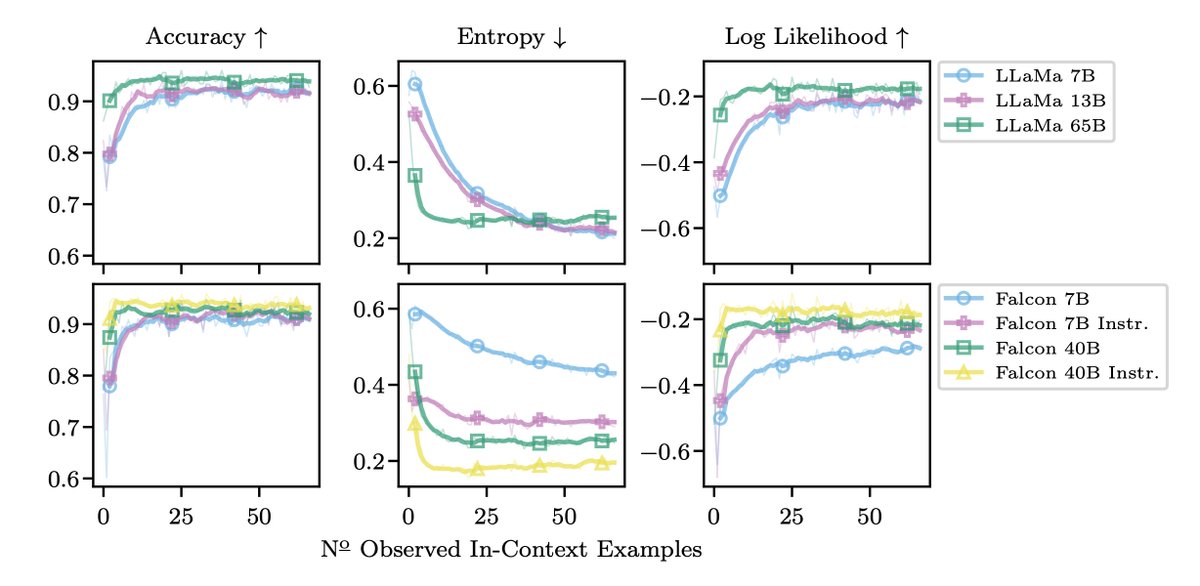

LLMs can attend to way more text than their original context window. Accepted to the ACL 2023 main conference 🥳🥳

"Parallel Context Windows for Large Language Models"

Paper: arxiv.org/abs/2212.10947

Code: github.com/AI21Labs/Paral…

#ACL2023 #ACL2023NLP #NLProc