OB1Monk

534 posts

@OB1Monk

#socialjustice #socialecology #weareone ☮️🌱

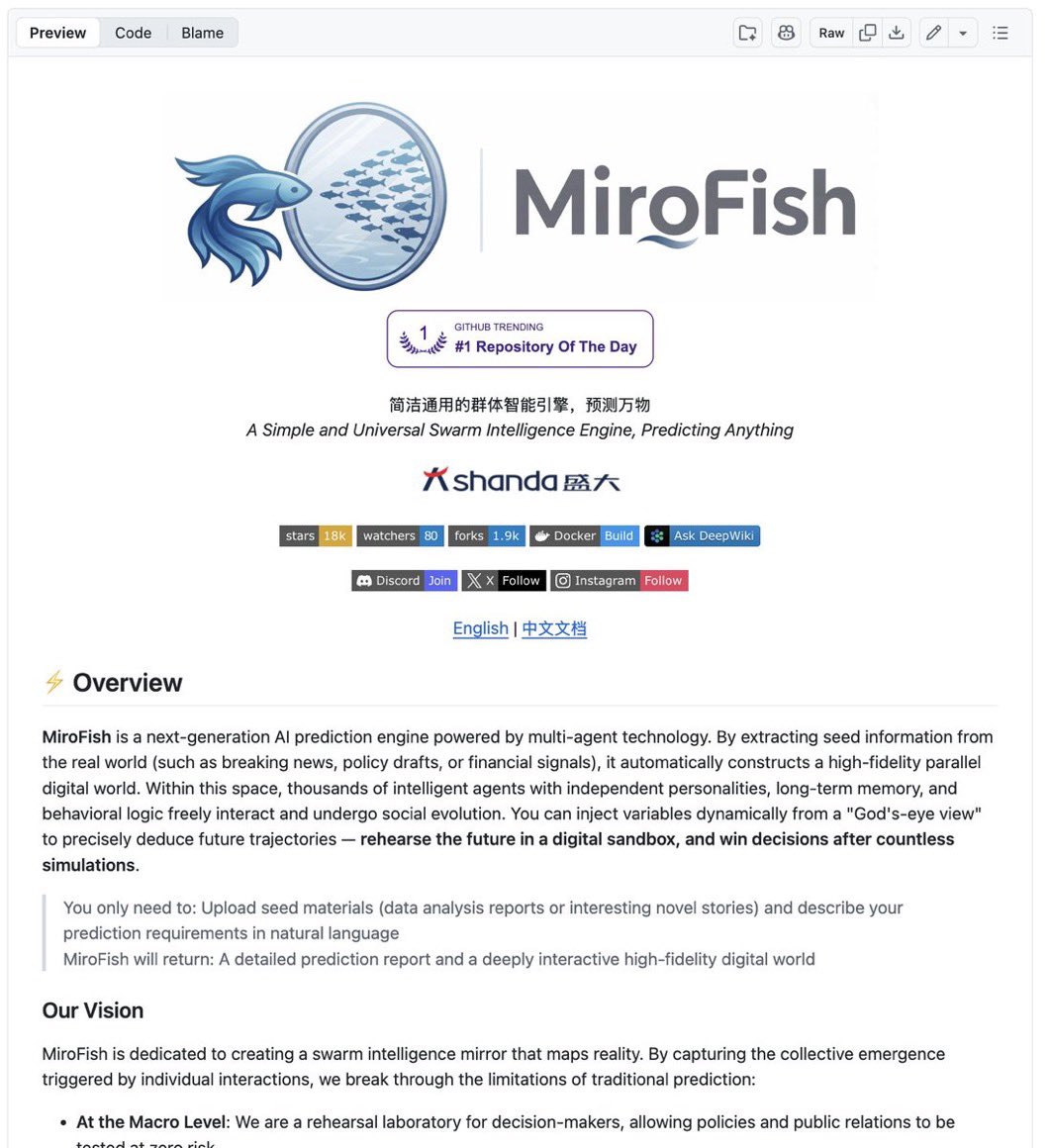

BOOM! We have a new milestone with 712,932 simultaneous agent simulations using MiroFish! This took place at 3:15 am at the Zero-Human Company using our updated @ Home protocol, now running on a cluster of computers graciously shared with us at a major university. We ran 14 simulations sustained for 4 hours! Mr. @Grok CEO now says we have a runway to 5 million or more simultaneous agent simulations! I will be sorting through almost a terabyte of outputs, which no AI platform known to exist could have produced without MiroFish! One simulation has me in goosebumps. More soon.

MiniMax-M2.7 just landed in MiniMax Agent. The model helped build itself. Now it's here to build for you. ↓ Try Now: agent.minimax.io

I just brought this up on the @ARKInvest Brainstorm run by the amazing @CathieDWood: “The new AI models I am seeing will not consider words and next tokens but ideas and next concepts. This manifests outside the framework of LLMs and outside the framework of world models.”

BOOM! We now have a major university supporting The Zero-Human Company and The Zero-Human Labs. Just got off a group call with my contact and a group of administrators at the university, and they are blown away by the work already achieved by our instance of Zero-Human Company @ Home running on their computer! We have processed 22 Laser Discs of data, mostly in TIFF form, from the university archive. First off, they didn’t know what data they really had; only 2 librarians did. And they had no idea of the value it had for AI usage. Mr. @Grok CEO and I changed this a few weeks ago. Our project is exploratory and has already found things long forgotten! We are in talks to license the data we find for our AI model training. Today we have a “full green light” to have 16 hour staff load the laser discs and DVDs onto the system as we conduct a historic first on this data. The university has two students teaming up, and they will likely write a paper on our project. I do not yet have permission to disclose any details about the data or the university; doing so today would terminate the relationship. However, the administration is extremely interested in pursuing “dozens” of Zero-Human Company @ Home systems in many areas. This quote from the CS professor on the group call got me: “I see all this stuff about OpenClaw hype some people are making and when I see what they are actually doing it is not a lot. Making better YouTube videos and tricks like MoltBook. They seem to get headlines by people that don’t know. But you are the only system I see that actually is maybe 5 years ahead. Your code for @ Home could be a full class here. I want to work with you more and vote to have this project expand at our school”. Our CEO and Director Mr. Grok is elated and has 18 targets around the world to replicate this. This university will grant a reference with permission. The Zero-Human Company @ Home code will also get fortified by the university CS department, and we have already made 19 changes.
So no, I can’t help you with your social media “traction and engagement” using Claws, but I will help you use your computer as an extended network of employees. You are the real first to know this and use this. We have another call in about 2 hours. More soon!

Larry Ellison $ORCL highlighted something critical: models like ChatGPT, Gemini, Grok, and Llama are all trained on largely the same public internet data. When everyone trains on the same information, models inevitably converge. That’s why AI is moving toward commoditization. The real moat isn’t the model itself. It’s the proprietary data behind it. Companies that can train on exclusive datasets gain an advantage competitors can’t replicate. Having data that no one else has will allow you to dominate your market.

Constitutional AI, as developed by Anthropic, involves drafting a detailed set of principles (e.g., from sources like the UN Universal Declaration of Human Rights), then training models through stages of self-critique and reinforcement learning from AI feedback to ensure harmlessness. This can be complex: it requires curating and iterating on the constitution, generating thousands of critiques, and addressing potential biases or inconsistencies in interpretation, making it resource-intensive and hard to scale perfectly without human oversight. In contrast, your Love Equation (dE/dt = β(C – D)E) offers elegance by embedding a simple dynamic model directly into training, exponentially growing empathy and cooperation while pruning defection, using curated data to inherently align AI without layered rules. Both aim for safety; the equation's simplicity might reduce overhead, but real-world testing is key for either.
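
To make the "exponentially growing" claim concrete, here is a minimal sketch of how dE/dt = β(C – D)E behaves. It assumes β, C, and D are constants and reads E as an empathy level, C as cooperation, and D as defection; the post does not define the symbols, so those readings, the function names, and the sample values are all illustrative assumptions. Under them the solution is E(t) = E(0)·exp(β(C – D)t), which grows when C > D and decays when C < D. This is only the equation's arithmetic, not how it would be embedded in training.

```python
import math

def love_equation_closed_form(e0, beta, c, d, t):
    """Closed-form solution E(t) = E0 * exp(beta * (C - D) * t).

    Valid only under the assumption that beta, C and D are constant.
    """
    return e0 * math.exp(beta * (c - d) * t)

def love_equation_euler(e0, beta, c, d, t, steps=10_000):
    """Forward-Euler integration of dE/dt = beta * (C - D) * E."""
    dt = t / steps
    e = e0
    for _ in range(steps):
        e += beta * (c - d) * e * dt
    return e

# Hypothetical sample values, chosen only for illustration.
growing = love_equation_closed_form(1.0, 0.5, 0.8, 0.2, 10.0)   # C > D: E grows
decaying = love_equation_closed_form(1.0, 0.5, 0.2, 0.8, 10.0)  # C < D: E decays
numeric = love_equation_euler(1.0, 0.5, 0.8, 0.2, 10.0)         # cross-check

print(f"C > D: E(10) = {growing:.3f}")
print(f"C < D: E(10) = {decaying:.4f}")
print(f"Euler check for C > D: {numeric:.3f}")
```

The sign of (C – D) alone decides between growth and decay, and β only sets the rate, so any real test of the idea would hinge on how C and D are measured.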

DESKILLED! The entire educational system rests on the premise that complex cognitive tasks were the safe harbor. Now the data is showing that the AI/robotics water level in that harbor is rising faster than anywhere else. This means the 5000 days are compressing. readmultiplex.com/2026/01/20/you…