Ocean Protocol

@oceanprotocol

Meet Ocean: Tokenized AI & Data. Ocean Network BETA is ON: @ONcompute

Pragma Cannes by @ETHGlobal is one week away 🇫🇷 The builders shaping the future of Ethereum, DeFi, and stablecoins will be there, and so will Ocean Network. We dropped 15 free ticket coupons earlier this week, and they went fast, so we’re adding 5 more for our community. If you’re coming, find us and let’s talk decentralized compute, Ocean Network, and what comes next. Use code FRENSOCEAN at checkout 👇 luma.com/pragma-cannes2…

Last week, during our public beta launch, we gave you access to Ocean Network (ON), a tool that connects global GPUs to your AI workloads. Now let us show you how to go from code-to-node in a few clicks and access @nvidia GPUs for as low as $2.16/hr. Psst… We have a gift for you 👀 (1/7)

Ocean Network Beta is officially ON ⚡️ This is the moment we've been building toward: Run AI workloads on pay-per-use NVIDIA H200s for as low as $2.16/GPU hour, straight from your IDE with a one-click code-to-node workflow. Head over to oncompute.ai to claim your $100 complimentary credits in Beta and turn your first job ON! (1/8)
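For a rough sense of what those numbers mean, here is a minimal back-of-the-envelope sketch. It assumes flat per-GPU-hour billing at the quoted "as low as" rate of $2.16, which is a simplification: actual rates may differ by GPU model and job spec, and the function names are hypothetical helpers, not part of any Ocean Network API.

```python
from decimal import Decimal, ROUND_DOWN

# Figures quoted in the post; treating the "as low as" price as a flat rate
# is an assumption made only for this estimate.
FLOOR_RATE_USD_PER_GPU_HOUR = Decimal("2.16")
BETA_CREDITS_USD = Decimal("100")


def job_cost(gpu_count: int, hours: Decimal,
             rate: Decimal = FLOOR_RATE_USD_PER_GPU_HOUR) -> Decimal:
    """Estimated cost of a job billed purely per GPU hour (assumed model)."""
    return (rate * gpu_count * hours).quantize(Decimal("0.01"))


def gpu_hours_covered(credits: Decimal = BETA_CREDITS_USD,
                      rate: Decimal = FLOOR_RATE_USD_PER_GPU_HOUR) -> Decimal:
    """GPU hours a credit balance covers at a flat rate, rounded down."""
    return (credits / rate).quantize(Decimal("0.1"), rounding=ROUND_DOWN)


if __name__ == "__main__":
    print("3 h on 1 GPU:", job_cost(1, Decimal("3")), "USD")
    print("GPU hours covered by credits:", gpu_hours_covered())
```

Under these assumptions, the $100 in credits works out to a bit over 46 GPU hours at the floor rate.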

The Ocean Network Beta is almost here, and it’s about to change the way developers run AI workloads. Since last week, our Alpha cohort has stress-tested the network with real workloads, running over 731 jobs so far across NVIDIA H200s, 1060s, and Tesla T4s. Starting March 16, the gates open: users everywhere can run AI workloads from their IDE on geographically distributed, coordinated GPUs, pay-per-use and with no infra headaches. This is next-gen orchestratiON: oncompute.ai

48 hours since Alpha switched ON. ⚡️ 361 compute jobs already executed. Our exclusive cohort is actively stress-testing decentralized compute, running real workloads without managing a single piece of infrastructure. On March 16, the gates open for the public Beta. Get ready to tap into NVIDIA H200 & 1060 GPUs directly from your IDE via the Ocean Orchestrator. ✅ Zero infra management ✅ True pay-per-use compute ✅ Global hardware, on-demand Next Gen OrchestratiON is almost here. See what's coming: docs.oncompute.ai

Ocean Network Alpha is officially ON ⚡! Our exclusive cohort of chosen ONes can now run their FREE AI and data workloads on our P2P compute network, without the headache of managing complex infrastructure. (Psst… if you’re in the cohort, you might want to check your inbox right about now to unlock your access. 🗝️)

Wondering how it works? Don't expect a heavy manual for this, because it's THAT frictionless:

1. Dial it in: Pick your preferred specs (GPU/CPU, RAM, disk) in the Ocean dashboard and lock in a real-time cost estimate: dashboard.oncompute.ai
2. Run from your IDE: Never leave your editor. Jump straight into @code, @cursor_ai, @windsurf, or @antigravity and fire off your job (Python, JS) using the Ocean Orchestrator.
3. Get results: Your job executes in an isolated container on a node exclusively operated by the Ocean Protocol Foundation for this Alpha phase and powered by premium compute from @AethirCloud! When it's done, only your final outputs route straight back to your local folder. Zero bloat, zero idle time.

We've already got a great thing going with Ocean Network, and with the real-time feedback we're gathering from our Alpha users right now, the Beta is bound to be even better.

Want to dig deeper into the tech? Head over to our docs: docs.oncompute.ai

Let's turn it ON, and make sure to stay tuned for March 16 for Next Gen OrchestratiON!

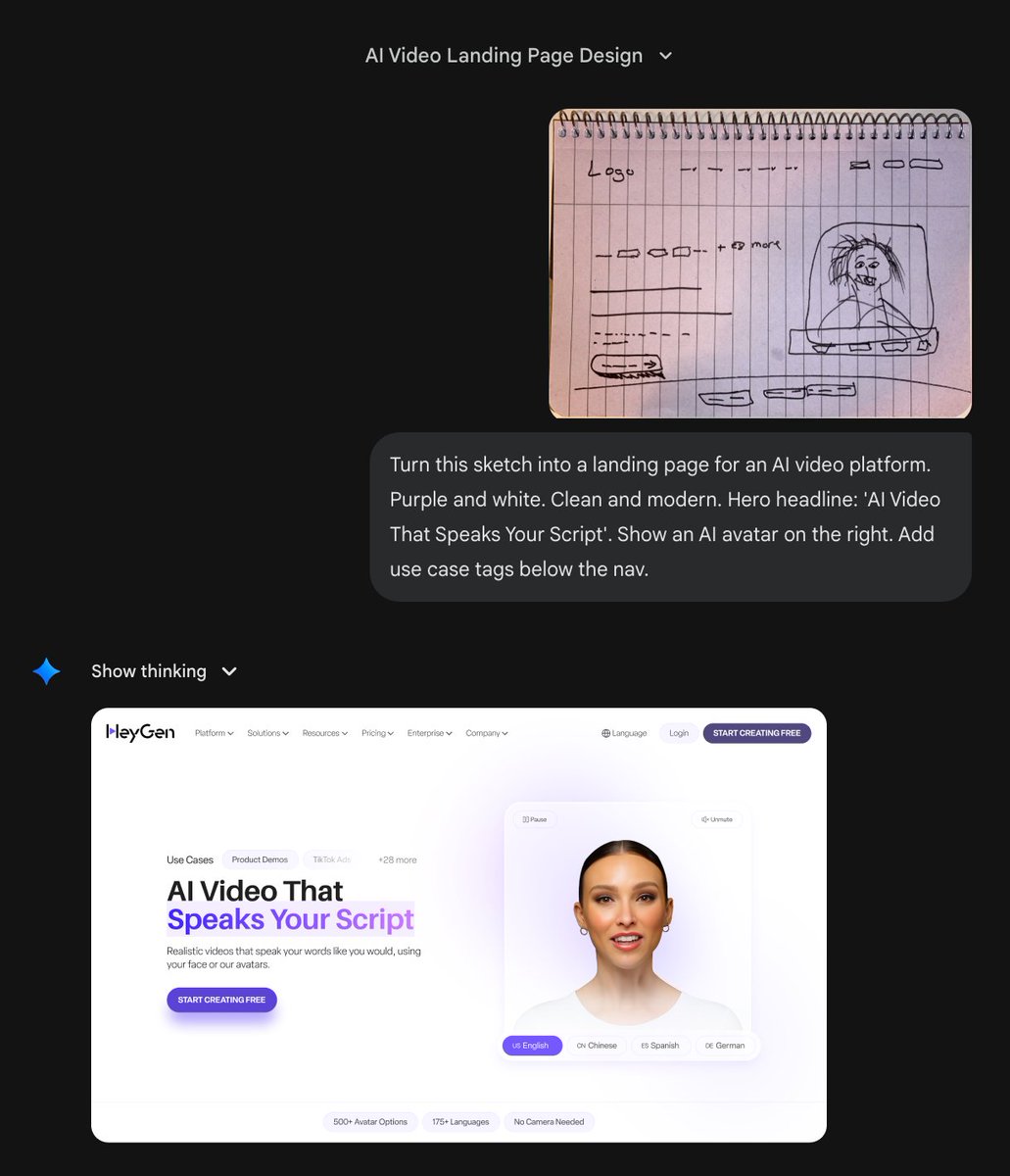
