OpenMind


@openmind_agi

Superintelligence for robots.

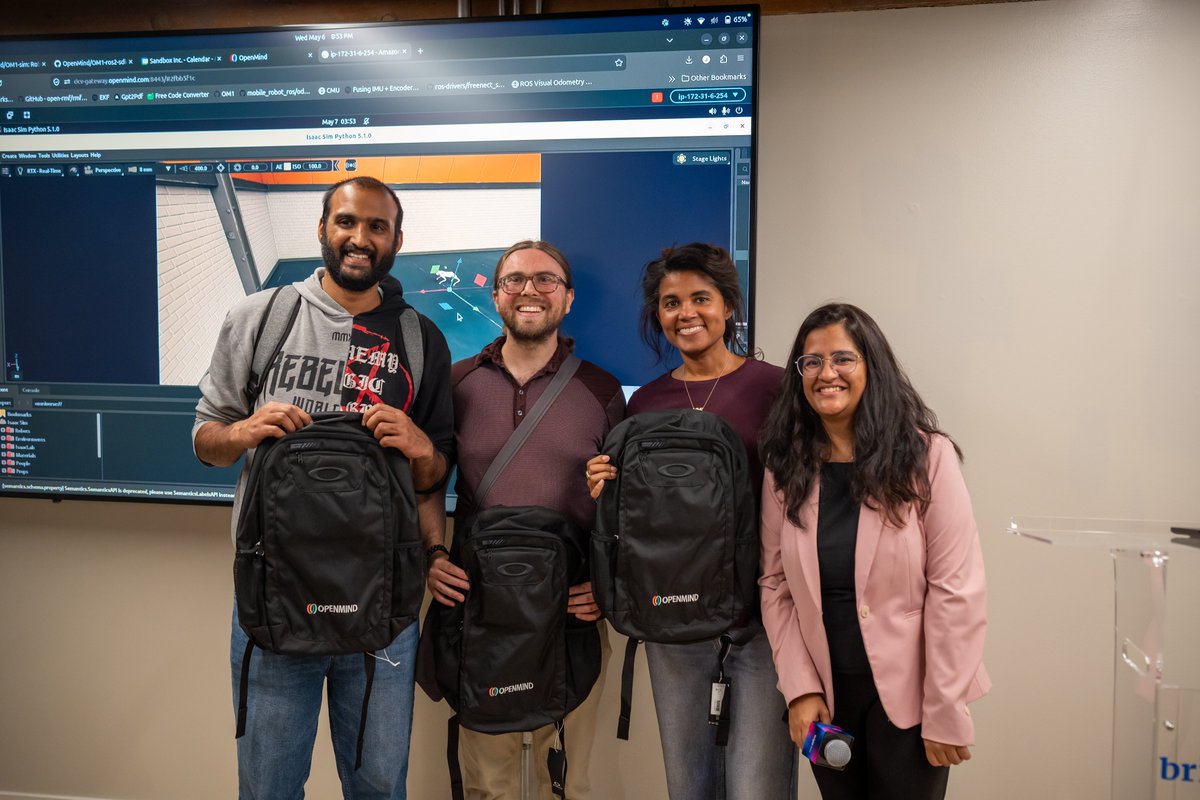

OpenMind OM1 Build Night w/ @OpenAI Codex

Location: San Francisco [Address given upon successful RSVP]

Date: Wednesday, May 6 @ 4:30 PM - 9:00 PM

This event is for robotics and agent developers, AI-native builders, technical founders, and curious engineers who want a practical way to learn OM1 by actually building with it. Bring a laptop and come ready to ship something.

Register: luma.com/openmind-om1-r…

Our CTO @boyuan is demoing our new localization algorithm, which surpasses leading industry solutions. Localization enables a robot to determine its position in an environment, which is essential for navigation. While most systems require robots to start from a predefined location, ours doesn’t. It lets robots boot up anywhere and immediately locate themselves, making deployment far more flexible for real-world scenarios.