Or Shafran reposted

Or Shafran

13 posts

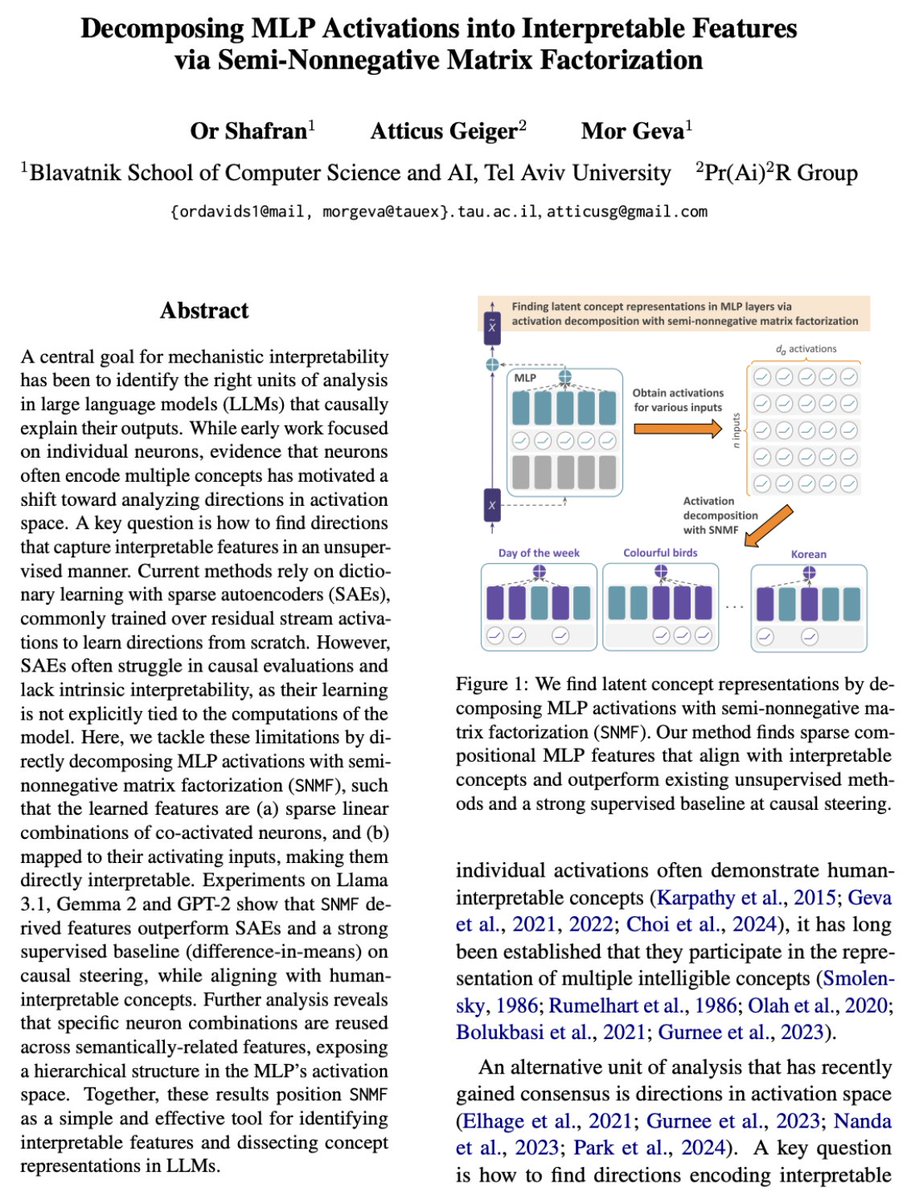

@dana_arad4 It's a good question. I agree feature selection matters a lot for SAE steering, but it also highlights the inherent differences in the units of analysis. It's an interesting point to keep in mind, thanks for flagging it.

@OrShafran Super interesting! I noticed you compare steering against SAEs using the AxBench feature selection. In our recent work, we found that better feature selection improves performance by 2-3x. Curious whether MFA would still outperform with that in place?

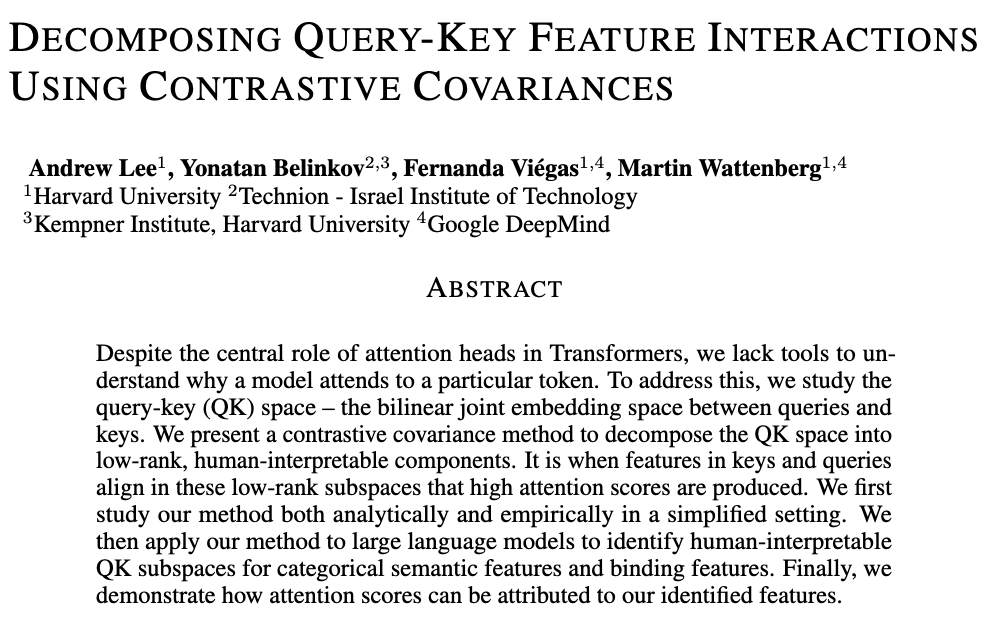

arxiv.org/abs/2505.20063

MFA offers a new lens on activation decomposition, shifting the focus from fitting global directions to uncovering the local geometry that actually organizes model behavior. 8/

🔗 Paper: arxiv.org/pdf/2602.02464…

🔗 Code and MFA models: github.com/ordavid-s/deco…

@YNikankin @megamor2 Thank you! In the paper we used 9k inputs, but for other experiments that we didn't include we factorized around 100k. In terms of time, we didn't include measurements, but from experience the algorithm converges on an H100 in a couple of minutes.

@megamor2 Very cool work! Maybe I missed it, but what is the maximal number of inputs (n) you tried this on? And how long does the optimization of ZY take for this value?
