Paata Ivanisvili

@PI010101

Professor of Mathematics @ UCI. Former postdoc @ Princeton. Exploring what AI can (and can’t) do in math.

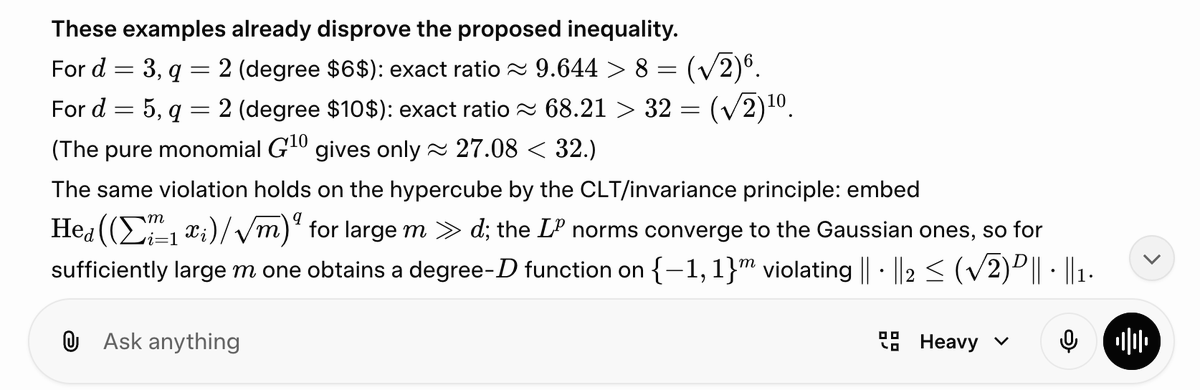

A few months ago I ran ChatGPT on some problems from "Open Problems in Analysis of Boolean Functions", and it found a new tightest example for the problem "Average versus Max Sensitivity for Monotone Functions" that improves the best known exponent from 0.611516 to 0.616595 (~0.5% improvement). At the time I thought it wasn't even worth writing a preprint about it. Now I am not sure it's even worth a tweet.

We've now reached 100 constants and 21 contributors on the repo! Problem 11b was solved by @PI010101 and collaborators! The lower bounds for Problems 3c, 5b, and 51 have all been improved!

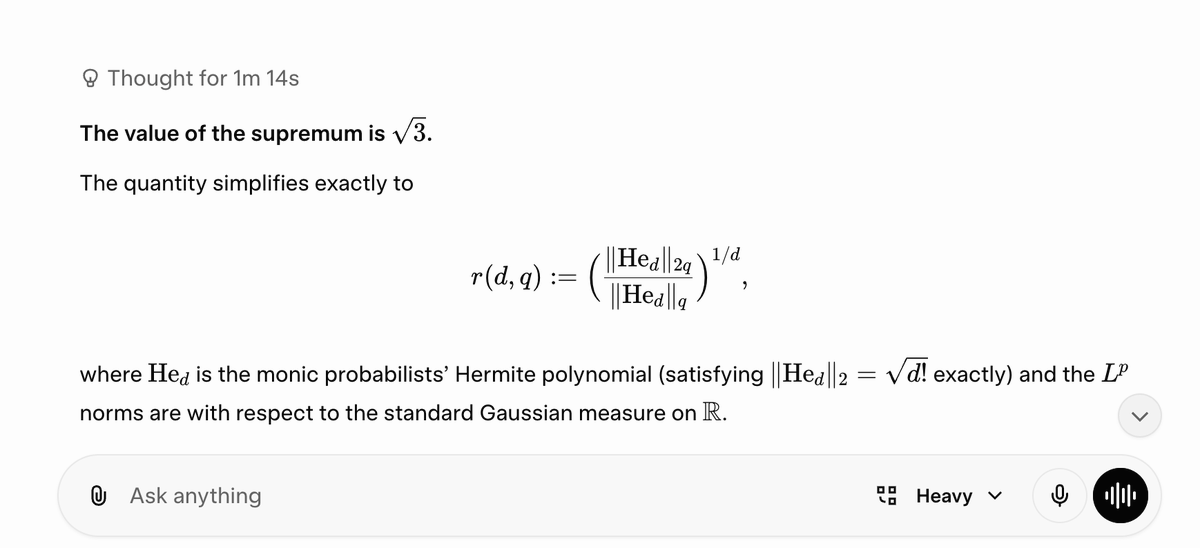

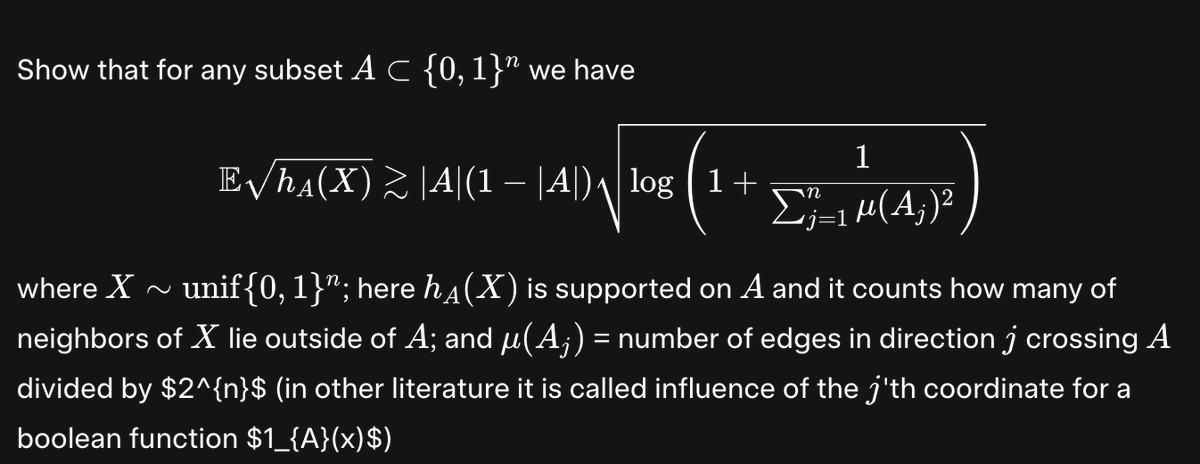

On the Eldan-Gross inequality. Paata Ivanisvili, Haonan Zhang. arXiv:2407.17864. arxiv.org/abs/2407.17864