José Vergara de la Fuente

10 posts

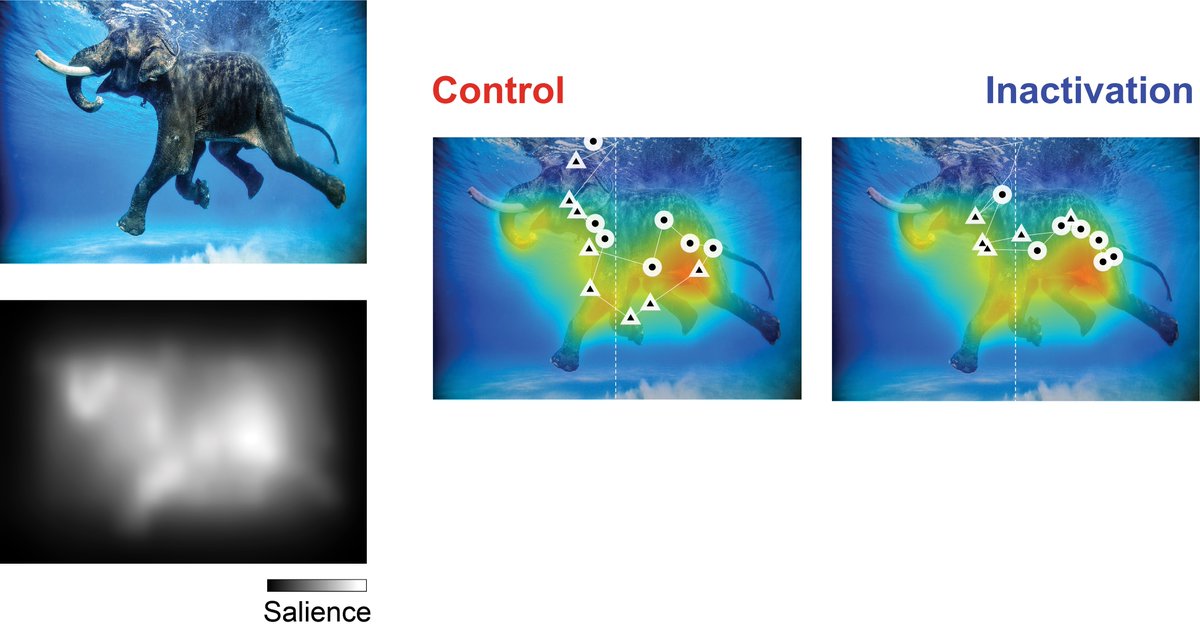

Online now: Thalamocortical interactions shape hierarchical neural variability during stimulus perception dlvr.it/T8F7Np

Here you are, with timestamps.

## Understanding Tokenization in Language Models: From GPT-2 to GPT-4

Tokenization is a fundamental process in language models: it converts text into tokens. The tokenizer has to support multiple languages and special characters such as emojis 15:05. Tokenization is not without its challenges, however, especially for large language models (LLMs) handling non-English languages 04:11.

## Tokenization in Language Models

The tokens produced for a piece of text vary with its position, its case, and its language. For instance, English text typically splits into fewer, longer tokens than the same content in languages like Korean, which can affect model training and performance 08:15. Tokenization also involves converting strings to integers for model input, which is complicated by the size of the vocabulary and by changes in the Unicode standard 17:00.

## Encoding Methods: UTF-8 and Byte-Level Encoding

UTF-8 is a popular encoding that translates Unicode code points into variable-length byte sequences of one to four bytes 18:27. It is preferred for its compatibility with ASCII and its efficiency, and it is widely used online 19:13. However, using raw UTF-8 bytes directly as tokens limits the vocabulary to 256 entries and produces very long sequences, which wastes the transformer's limited context. Byte pair encoding offers a solution to this problem 21:06.

## Byte-Level Encoding in Language Models

Byte-level encoding is another method used in large language models. GPT-2, for example, uses a 50,257-token vocabulary and a 1,024-token context 02:52. The byte pair encoding (BPE) algorithm compresses sequences by iteratively replacing the most frequent pair of adjacent tokens with a new token, reducing sequence length while expanding the vocabulary 22:54.

## Improvements from GPT-2 to GPT-4

The GPT-4 tokenizer demonstrates significant efficiency improvements over GPT-2, particularly in handling programming languages like Python.
By grouping more whitespace into single tokens and roughly doubling the vocabulary from about 50k to about 100k tokens, GPT-4 reduces token bloat and allows for denser input, so the transformer can attend to more context when predicting the next token. This results in better performance, especially in coding tasks 10:48.

## Special Tokens in Tokenization

Special tokens play a crucial role in data structuring and encoder vocab mapping 01:18:26. Language models use a special end-of-text token to delimit documents, which helps segment the training data 01:19:11. Special tokens bypass the usual byte pair encoding (BPE) merges and are handled by custom code, as seen in the tiktoken library, which is implemented in Rust. GPT-4 introduces new special tokens such as FIM ("fill in the middle"); adding special tokens requires model surgery to accommodate them in the transformer's embedding matrix and final layer 01:20:43.

## Challenges and Anomalies in Tokenization

Tokenization in language models can lead to unexpected behaviors and anomalies. For instance, GPT-2's tokenizer handled the spaces in Python indentation poorly, which wasted context length. GPT-4 addressed this. Special-token strings can confuse LLMs and are a potential attack surface 01:56:47. Moreover, clusters of "unstable tokens" like "SolidGoldMagikarp" can cause erratic LLM responses, potentially linked to Reddit usernames in the tokenization dataset 02:04:06.

## Conclusion

Tokenization is a crucial but complex process in language models. It has seen significant improvements from GPT-2 to GPT-4, particularly in efficiency and handling of special tokens. However, challenges persist, especially with non-English languages and tokenization anomalies. As language models continue to be refined, understanding and addressing these challenges will be key to improving their performance and utility 02:10:20.
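
For anyone who wants to poke at the byte pair encoding idea summarized above, here is a minimal Python sketch. It is not the GPT-2 or GPT-4 tokenizer, only a toy under simple assumptions: it starts from raw UTF-8 bytes (ids 0 to 255) and repeatedly merges the most frequent adjacent pair into a new id. The function names `train_bpe`, `merge`, and `decode` are made up for this illustration.

```python
from collections import Counter


def get_pair_counts(ids):
    """Count how often each adjacent pair of token ids occurs."""
    return Counter(zip(ids, ids[1:]))


def merge(ids, pair, new_id):
    """Replace every non-overlapping occurrence of `pair` with `new_id`."""
    out = []
    i = 0
    while i < len(ids):
        if i + 1 < len(ids) and (ids[i], ids[i + 1]) == pair:
            out.append(new_id)
            i += 2
        else:
            out.append(ids[i])
            i += 1
    return out


def train_bpe(text, num_merges):
    """Toy BPE training: returns the compressed ids and the learned merges."""
    ids = list(text.encode("utf-8"))  # start from raw bytes, ids 0..255
    merges = {}                       # (id, id) -> new id, in learning order
    for step in range(num_merges):
        counts = get_pair_counts(ids)
        if not counts:
            break
        pair, count = counts.most_common(1)[0]
        if count < 2:                 # nothing repeats, so merging gains nothing
            break
        new_id = 256 + step           # new ids follow the 256 byte values
        ids = merge(ids, pair, new_id)
        merges[pair] = new_id
    return ids, merges


def decode(ids, merges):
    """Expand merged ids back into bytes, then into text."""
    vocab = {i: bytes([i]) for i in range(256)}
    for (a, b), new_id in merges.items():
        vocab[new_id] = vocab[a] + vocab[b]
    return b"".join(vocab[i] for i in ids).decode("utf-8", errors="replace")
```

For example, `train_bpe("aaabdaaabac", 10)` stops after three merges and shrinks the 11 input bytes to 5 tokens, and `decode` recovers the original string. Each merge shortens the sequence and adds one vocabulary entry, which is the trade-off the summary describes.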
References:

- Understanding Tokenization in Language Models 00:00
- Byte-Level Encoding in Language Models 02:52
- Efficiency Improvements in Tokenization from GPT-2 to GPT-4 10:48
- Understanding Special Tokens in GPT Tokenization 01:20:43
- Challenges in Tokenization for GPT Models 01:56:47
- Unstable Tokens and LLM Behavior 02:04:06
- Tokenization in AI and Its Challenges 02:10:20

You are more than welcome to experiment here: web.platogram.ai/summary?thread…

Happy to give you a perpetual license for all of your videos. Reach out!

🚨We are happy to share our new review and proposal about how the brain navigates social decisions—and its relationship with mental disorders. With @yurivazu, Emma Mastrobattista & Ziv Williams. frontiersin.org/articles/10.33… #neuroscience #neurotwitter #WomenInSTEM a 🧵 1/