Peter Potaptchik

@PPotaptchik

DPhil student at Oxford https://t.co/JH0l4u7wHv

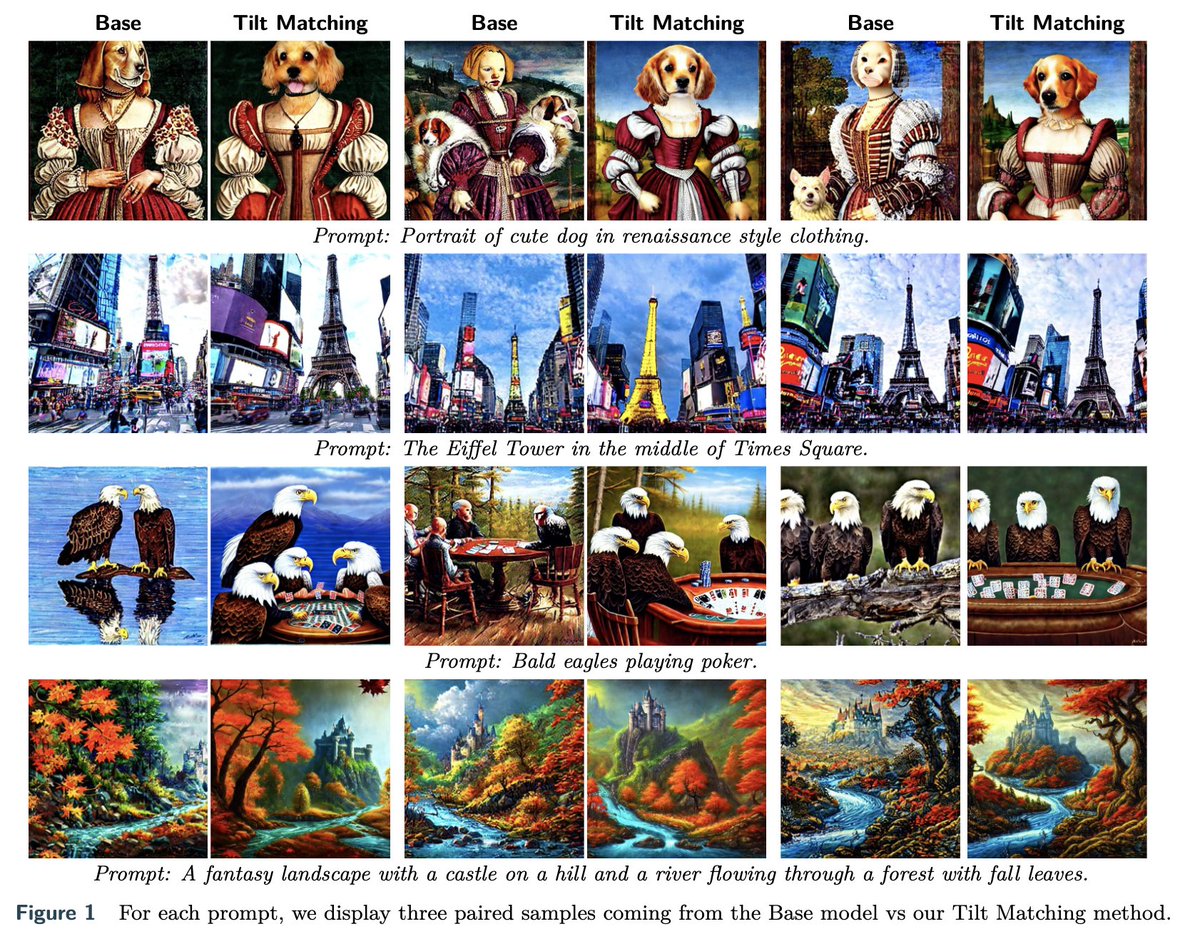

Imagine you could solve an infinite set of transport problems with one Meta Flow Map model that allows you to sample from arbitrary posterior distributions. Now imagine you can do that to construct a really effective estimator of how to adapt a diffusion to solve an RL problem. Now imagine that doing so allows you to even outperform Best-of-N=1000 at a fraction of the compute.

Excited to introduce a new paradigm for flow and diffusion models we call Meta Flow Maps, which make this possible 🙂

👾 Learnable with simple modification of existing flow map losses

👾 Off-policy fine-tuning algorithm!

👾 Extremely effective reward alignment across a variety of rewards for both inference-time steering and learned fine-tuning!

arxiv: arxiv.org/abs/2601.14430

project page: meta-flow-maps.github.io

code: forthcoming

Amazing work by @PPotaptchik and @adhisarav to bring these results to life! Really excited about future directions here! Thanks to @yeewhye @AbbasMammadov11 and Alvaro Prat.