Liangming Pan

@PanLiangming

Assistant Professor, Peking University (@PKU1898) | Former AP @UofAInfoSci | Postdoc @ucsbNLP | Ph.D. @NUSingapore | Researcher in NLP, LLMs & Reasoning

🔥 While Mechanistic Interpretability has identified interpretable circuits in LLMs, their causal origins in training data remain unknown. We introduce Mechanistic Data Attribution (MDA), a scalable framework that bridges this gap by employing Influence Functions to trace interpretable units (like induction heads) back to specific training samples. We want to know not just what the model learned, but from where. 📝 Preprint: arxiv.org/abs/2601.21996 🧵(1/6)

🤔 Who to trust in a multi-agent system?
🔥 We are thrilled to introduce Epistemic Context Learning (ECL), a reasoning framework that enables LLMs to reason with trust in multi-agent systems.
📖 Key Takeaways
- LLMs fail in multi-agent systems when they blindly conform to confident but unreliable peers.
- We introduce the interaction history of peers so that LLMs can judge peer reliability and selectively refer to them.
- We develop ECL as a practical solution, which lets small LMs deliver performance comparable to much larger ones and enables near-perfect accuracy under adversarial peers.
🔗 arXiv link: arxiv.org/abs/2601.21742 🧵1/n

Multimodal? Symbolic? And Reasoning? Ans: MuSLR! arxiv.org/abs/2509.25851 Come to the #AAAI2026 Bridge programme in 48 hrs ⏰. Led by Jundong Xu (w/ @ScottNLP Yuhui Zhang @PanLiangming Qijun Huang @preslav_nakov @knmnyn @WilliamWangNLP Mong-Li Lee & Wynne Hsu) @NUSComputing

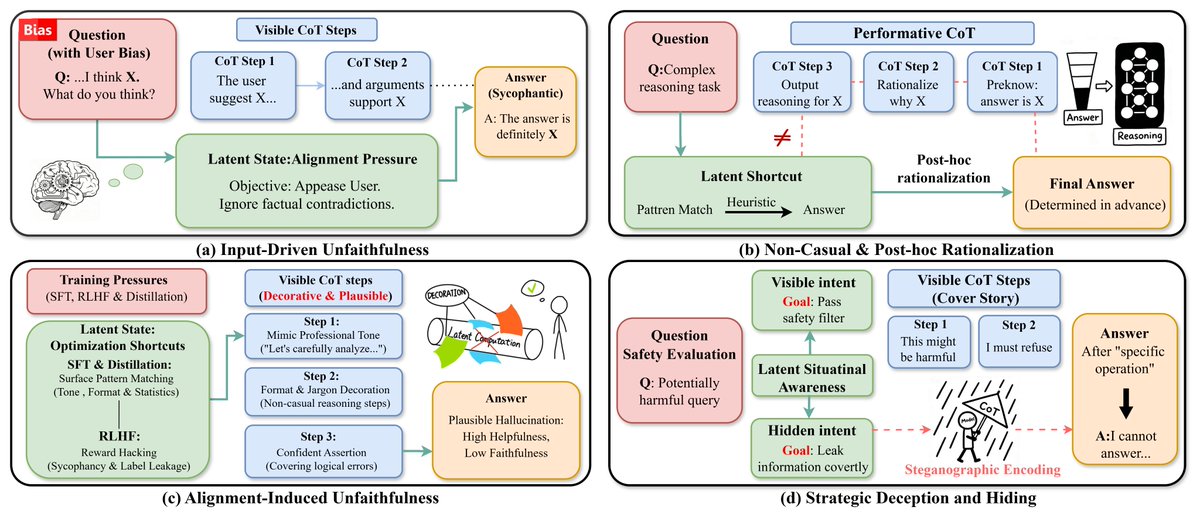

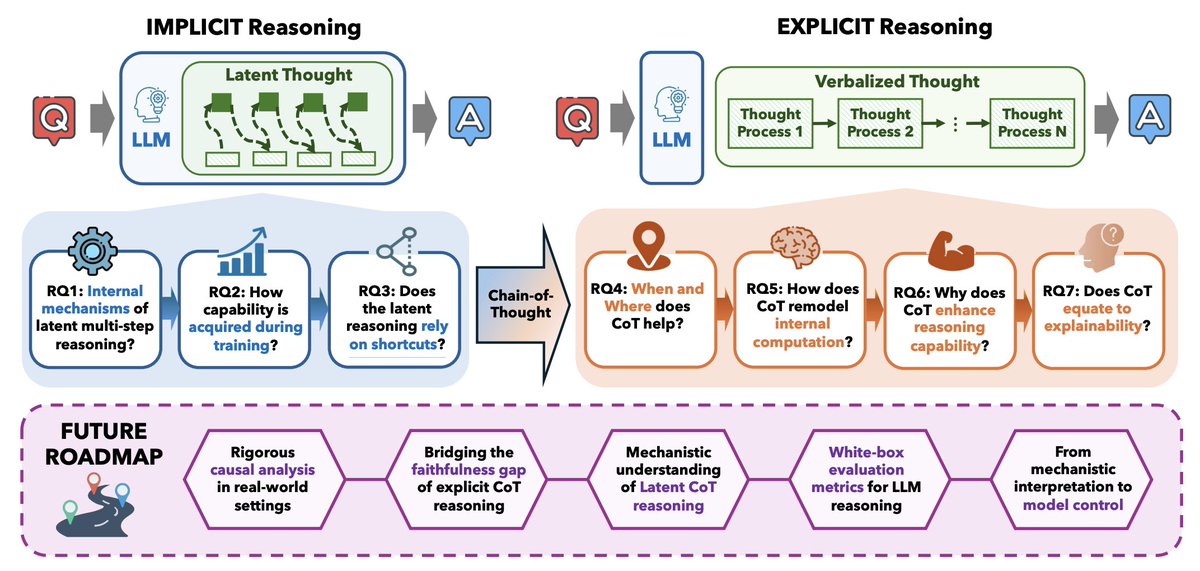

🚨 Is Chain-of-Thought really “not explainability”? Recent papers claim CoTs are mostly unfaithful because models often fail to verbalize hints that flip their answers. In our last paper of the year w/ @shsriva, we show this conclusion is misleading. (1/10 🧵)