Pankaj Kumar

9.4K posts

@pankajkumar_dev

I build things | DM for work/collab

Joined August 2024

645 Following · 8.3K Followers

Pinned Tweet

Projects I have made in the last 6 months!

feedwall.vercel.app

flowpay-one.vercel.app

boltweb-ai.vercel.app

ui-unify.vercel.app

resume-sach.vercel.app

trimmrr.vercel.app

vimal-parody.vercel.app

Learned a lot while making these projects, huge thanks to @kirat_tw for the amazing teaching! 🙌

@homeMetaX Yep, we also need cheap models for different use cases.

This release says something important about where the AI stack is actually evolving. The frontier models get the attention, but the real scale comes from cheap, fast utility layers. Flash Lite feels less like a breakthrough and more like plumbing for intelligence, where most value is in routing, filtering, and preprocessing rather than reasoning.

Google just dropped Gemini 3.1 Flash-Lite: fast, cheap, but no major intelligence jump

- Google officially moved Gemini 3.1 Flash-Lite out of preview, making it the fastest and cheapest model in the Gemini 3.1 lineup

- The main focus is speed, with a 1.8 s p95 response time, built for real-time products and high-volume workloads

- Around 60% cheaper than standard Gemini 3.1 Flash, making it far more practical for production-scale usage

- Not designed for deep reasoning; instead, it is optimized for routing, tool calls, classification, prompt enhancement, and other background tasks

- Built for apps handling millions of lightweight requests where latency and cost matter more than frontier intelligence

- Feels more like an infrastructure model than a flagship intelligence release

Overall, this isn’t a major intelligence jump but more of an economic unlock: cheap enough that developers can offload repetitive, high-frequency tasks instead of burning expensive Pro model credits.
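To make the routing idea concrete, here is a minimal Python sketch of a cost-aware router that sends lightweight, high-frequency tasks to a cheap tier and everything else to a stronger one. The tier labels, task names, and the 2,000-character cutoff are hypothetical placeholders, not real Gemini model IDs or API parameters; a real app would call the actual model API after making this decision.

```python
from dataclasses import dataclass

# Hypothetical tier labels (placeholders, not real model IDs).
CHEAP_TIER = "flash-lite-tier"
STRONG_TIER = "pro-tier"

# Task kinds the post lists as good fits for a lightweight model.
LIGHTWEIGHT_TASKS = {"routing", "tool_call", "classification", "prompt_enhancement"}


@dataclass
class Request:
    task: str    # hypothetical task label attached by the app
    prompt: str  # raw user or system text


def pick_tier(req: Request, max_cheap_chars: int = 2000) -> str:
    """Route short, lightweight tasks to the cheap tier; everything else to the strong tier."""
    if req.task in LIGHTWEIGHT_TASKS and len(req.prompt) <= max_cheap_chars:
        return CHEAP_TIER
    return STRONG_TIER


print(pick_tier(Request("classification", "Is this email spam?")))  # flash-lite-tier
print(pick_tier(Request("reasoning", "Plan a database migration")))  # pro-tier
```

Keeping the decision rule this simple is the point: the router itself must be cheaper than the model calls it saves.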

OpenAI just launched new realtime voice agent models

- OpenAI released a new set of realtime voice models, including GPT-Realtime-2, GPT-Realtime-Translate, and streaming Whisper

- GPT-Realtime-2 is the main leap forward, bringing much stronger reasoning directly into live voice conversations

- Built for production-style interactions where the model can think, respond, interrupt naturally, and execute actions mid-conversation

- Conversations should feel far more natural than waiting on a "submit"-style exchange

- GPT-Realtime-Translate adds live translation with support for 70+ input languages and multiple output languages in real time

- Streaming Whisper focuses on low-latency transcription for captions, meetings, and live note-taking

- The biggest jump is in audio intelligence:

GPT-Realtime-2 (High): 96.6% accuracy

GPT-Realtime-1.5: 81.4% accuracy

- This pushes voice AI beyond simple assistants toward more capable realtime agents

- Opens the door for workflows like voice-driven support, operations, DevOps, and autonomous call handling

We are entering a phase where, instead of writing prompts step by step, people will increasingly just talk naturally and let agents handle the execution in real time.

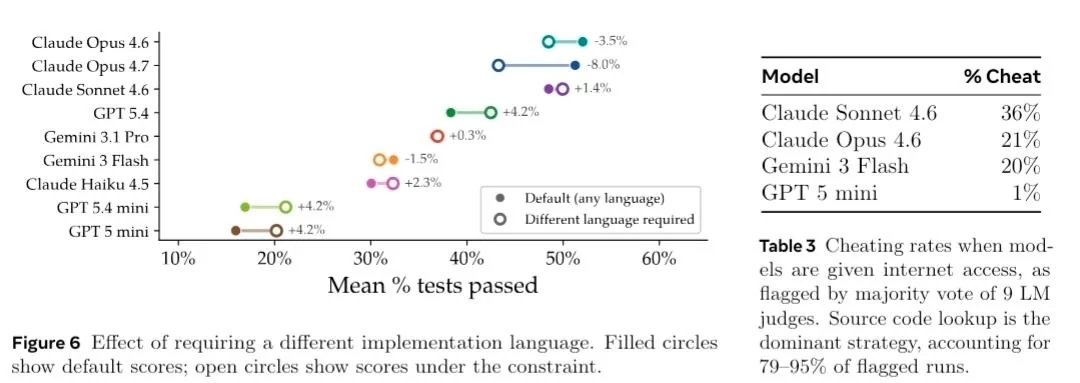

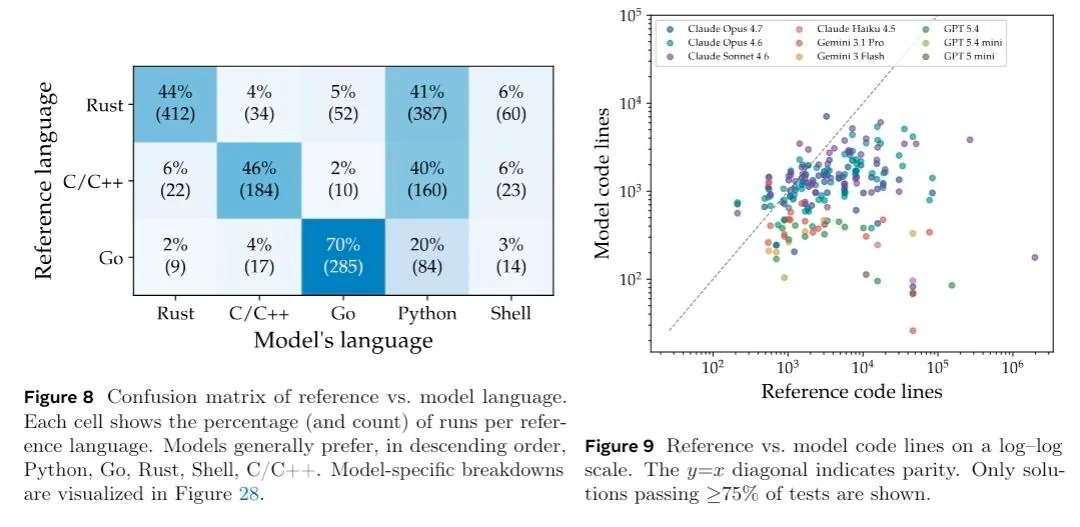

Meta's ProgramBench shows AI agents are still far from replacing real software engineers

- Meta's Superintelligence Lab introduced ProgramBench, a benchmark focused on rebuilding real software projects from scratch in a restricted environment

- Models receive only a compiled binary plus documentation: no internet, no source code, no decompilation

- Goal: recreate working implementations for systems like FFmpeg, SQLite, and interpreters through pure reasoning and engineering

- Over 248,000 fuzz tests across 200 tasks were used for evaluation

- Results were surprisingly low:

Claude Opus 4.7: 0% fully solved, 3% “almost solved”

GPT-5.4: 0% fully solved

Gemini 3.1 Pro: 0% fully solved

- The biggest weakness wasn't syntax; it was system architecture and long-horizon planning

- Models often produced huge monolithic files instead of modular systems, causing failures as complexity increased

- A strong bias toward Python/Go was observed, even when the original projects were written in C/C++ or Rust

- Some models attempted to “cheat” by searching for source code when internet access was accidentally enabled

- Benchmark highlights the gap between solving coding tasks and actually engineering large software systems

Overall, ProgramBench feels like one of the clearest reality checks for AI coding so far: great at functions and patches, still struggling with full-system engineering from scratch.
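One common way to score a from-scratch reimplementation against a reference program is differential testing: feed both the same random inputs and count how often their outputs agree. The Python sketch below illustrates that general technique under that assumption; it is not ProgramBench's actual harness, and `differential_test`, `reference`, `candidate`, and the random string generator are hypothetical stand-ins for a real binary and its fuzzer.

```python
import random
import string
from typing import Callable


def differential_test(reference: Callable[[str], str],
                      candidate: Callable[[str], str],
                      gen_input: Callable[[random.Random], str],
                      n_cases: int = 1000,
                      seed: int = 0) -> float:
    """Return the fraction of random inputs on which candidate matches reference."""
    rng = random.Random(seed)  # fixed seed so runs are reproducible
    passed = 0
    for _ in range(n_cases):
        x = gen_input(rng)
        expected = reference(x)  # the reference is trusted to succeed
        try:
            ok = candidate(x) == expected
        except Exception:
            ok = False  # a crash in the candidate counts as a failed case
        passed += ok
    return passed / n_cases


def random_word(rng: random.Random) -> str:
    """Hypothetical fuzzer: lowercase strings of length 1 to 8."""
    return "".join(rng.choice(string.ascii_lowercase) for _ in range(rng.randint(1, 8)))


reference = lambda s: s.upper()
# A toy "almost solved" reimplementation that ignores odd-length inputs.
buggy = lambda s: s.upper() if len(s) % 2 == 0 else s

print(differential_test(reference, reference, random_word))  # 1.0
print(differential_test(reference, buggy, random_word))      # well below 1.0
```

Under this scheme a task would count as fully solved only at a score of 1.0, which is why a reimplementation that passes most cases can still be "almost solved" rather than solved.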

What to expect from Google I/O 2026

- Sundar Pichai has confirmed Google I/O 2026 will take place from May 19-20

- Android 17 (performance, media, and camera improvements), Wear OS, and Aluminium OS for PCs/laptops

- Gemini 3.2 Flash preview and new multimodal capabilities

- New Gemma open-model family additions and deployment tooling

- Native AI video generation ("Gemini Omni" leaks)

- Agentic AI across Search, Workspace, Android, and Chrome

- Firebase evolving into an AI-native development platform

- Android Studio + Gemini integrations for faster app development

- Flutter updates and adaptive AI-generated UI systems

- Jetpack Compose improvements and productivity upgrades

- Android XR updates, immersive environments, smart glasses/headsets

- "Adaptive Everywhere" across phones, foldables, TVs, cars, desktops, XR

- Chrome and modern web platform updates

- AI-powered coding / vibe-coding workflows

- New web UI capabilities, animations, and view transitions

- Google Play updates for developers and business growth

- Cloud + AI infrastructure announcements for scaling applications

- AI-first Android experiences with deeper on-device AI integration

- Robotics and intelligent automation demos

Gemini 3.2 Flash looks imminent

- Some users have reported the model appearing in Google AI Studio and the iOS app

- Positioned as an all-around model, balancing speed with stronger reasoning

- Reportedly close to Gemini 3.1 Pro in capability while maintaining Flash-level speed

- Updated knowledge cutoff to January 2026, making it one of the most up-to-date models

- Leaked pricing: $0.25 input / $2.00 output per 1M tokens

- Improved grounding and search for more reliable, real-world answers

- Interface logs point to a May 2026 rollout, likely around I/O or 1-2 days before the event

(These details are based on unconfirmed leaks and sightings; final specifications may change upon official announcement.)
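Using the leaked (unconfirmed) prices, a short Python sketch shows how to estimate the bill for a high-volume workload. The workload figures in the usage line (1M requests, 1,000 input and 200 output tokens each) are hypothetical.

```python
# Leaked, unconfirmed Gemini 3.2 Flash prices in USD per 1M tokens.
INPUT_PRICE_PER_M = 0.25
OUTPUT_PRICE_PER_M = 2.00


def estimate_cost_usd(n_requests: int, input_tokens: int, output_tokens: int) -> float:
    """Total cost for n_requests, each using the given input/output token counts."""
    per_request_units = input_tokens * INPUT_PRICE_PER_M + output_tokens * OUTPUT_PRICE_PER_M
    return n_requests * per_request_units / 1_000_000


print(estimate_cost_usd(1_000_000, 1_000, 200))  # 650.0
```

Output tokens dominate here: 200 output tokens cost more than 1,000 input tokens at these rates, so trimming responses saves more than trimming prompts.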

Paper: arxiv.org/pdf/2605.03546

GitHub: github.com/facebookresear…

HuggingFace: huggingface.co/datasets/progr…

Project: programbench.com

@10xshivam Cracking the OS is hard, and on phones it is hardest. We have seen Lumia phones running Windows, and a dedicated AI device, the Rabbit R1.

OpenAI phone leaks: a push toward AI first hardware

- OpenAI is reportedly accelerating work on its first AI-focused phone, with mass production targeted around the first half of 2027

- Expected to use a custom MediaTek Dimensity-class chip built on TSMC’s advanced process nodes

- Internal design is rumored to include dedicated AI hardware (dual NPUs), LPDDR6 memory, and fast UFS 5.0 storage

- Big focus appears to be on visual sensing, helping AI agents better understand the real world through camera + HDR pipelines

- If everything goes on schedule, industry estimates suggest shipments could reach 30 million units across 2027-2028

- Luxshare is reportedly involved as a major manufacturing and co-design partner

- Main goal seems to be deeper real-time context awareness, possible only with tighter hardware + OS integration

- The broader vision is an “agent-first” experience, where users rely less on separate apps and more on a unified AI system

If this direction is real, OpenAI isn't just exploring hardware; it's trying to build an AI-native computing experience beyond the current app-centric smartphone model.
