Pavankumar Vasu

Happy to introduce my internship work at @Apple. We present CLaRa: Continuous Latent Reasoning, an end-to-end training framework that jointly trains retrieval and generation! 🧠📦 🔗 arxiv.org/pdf/2511.18659… #RAG #LLMs #Retrieval #Reasoning #AI

Is your AI keeping up with the world? Announcing the #NeurIPS2025 CCFM Workshop: Continual and Compatible Foundation Model Updates. When/Where: Dec. 6-7, San Diego. Submission deadline: Aug. 22, 2025 (submissions opening soon!). sites.google.com/view/ccfm-neur… #FoundationModels #ContinualLearning

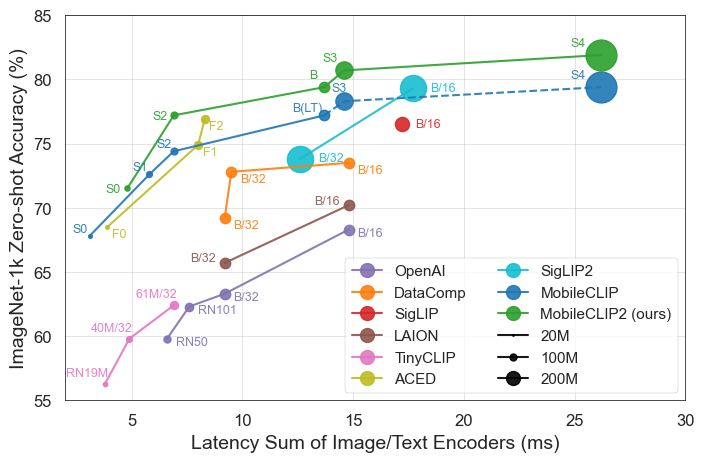

NEW: Apple releases FastVLM and MobileCLIP2 on Hugging Face! 🤗 The models are up to 85x faster and 3.4x smaller than previous work, enabling real-time VLM applications! 🤯 It can even do live video captioning 100% locally in your browser (zero install). Huge for accessibility!

🚀 Releasing MobileCLIP2 (TMLR Featured). MobileCLIP2-S4 matches the accuracy of SigLIP-SO400M/14 while being 2x smaller, and surpasses DFN ViT-L/14 while being 2.5x faster. Paper: arxiv.org/abs/2508.20691 Code: github.com/apple/ml-mobil… RayGen: github.com/apple/ml-mobil… 🤗huggingface.co/collections/ap… #Apple MLR

Excited to share TiC-LM (Oral at #ACL2025)! LLMs can become outdated ⏲️, and re-training from scratch is costly 💰. Ideally, we'd keep reusing and updating models on newer data ♻️. We study continual training as 114 months of Common Crawl (CC) data are revealed one at a time. arxiv.org/abs/2504.02107

STARFlow: Scaling Latent Normalizing Flows for High-resolution Image Synthesis "We present STARFlow, a scalable generative model based on normalizing flows that achieves strong performance on high-resolution image synthesis"