Peter West

278 posts

@PeterWestTM

AI / NLP Researcher. Incoming faculty at @UBC_CS and @CAIDA_UBC. Postdoctoral fellow at @StanfordHAI @stanfordnlp. Former PhD student at @uwcse @uwnlp. he/him

For those who missed it, we just released a little LLM-backed game called HR Simulator™. You play an intern ghostwriting emails for your boss. It’s like you’re stuck in corporate email hell…and you’re the devil 😈 Link and an initial answer to “WHY WOULD YOU DO THIS?” below

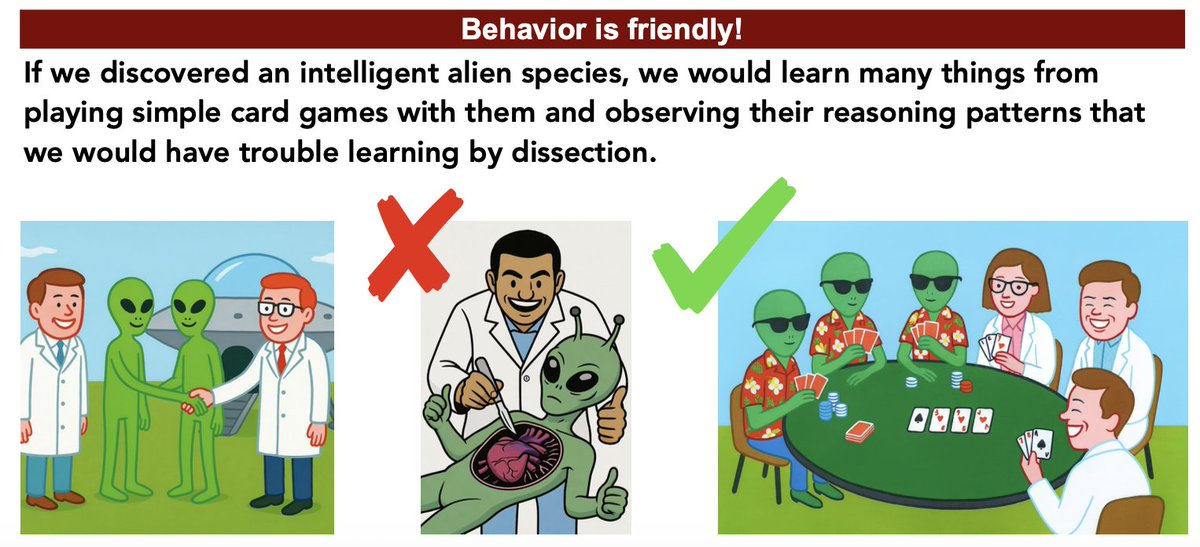

Theory of Mind is key to human social intelligence, but does giving LLMs ToM make them better social reasoners?🤔 We find that ToM makes LLMs better at dialogue: more strategic, goal-oriented, enabling long-horizon adaptation! We introduce ToMA, a ToM-focused dialogue agent🧵👇

How can chaos create brilliance and breakthroughs? Ari Holtzman (@universeinanegg), Assistant Professor of Computer Science and Data Science, explores how embracing chaos has unlocked the capabilities of AI systems in a letter to @TheEconomist! economist.com/letters/2025/0…

Have you noticed…

- 🔍 Aligned LLM generations feel less diverse?
- 🎯 Base models are decoding-sensitive?
- 🤔 Generations get more predictable as they progress?
- 🌲 Tree search fails mid-generation (esp. for reasoning)?

We trace these mysteries to LLM probability concentration, and introduce Branching Factor (BF), a simple measure that captures it all.

Key Findings:

- BF declines over time → generations become more deterministic
- Alignment tuning slashes BF → shrinks the generative horizon
- Low BF explains decoding sensitivity → fewer good options to prune
- CoT stabilizes generation → shifts key info to late, low-BF regions
- Avoid late branching → too many low-probability, low-quality continuations
- Alignment surfaces low-entropy paths already latent in base models

📜 Paper: arxiv.org/abs/2506.17871
🌐 Website: yangalan123.github.io/branching_fact…
🎥 2-min explainer below.

Joint work w/ @universeinanegg, thanks for the constructive feedback from @PeterWestTM @UChicagoCI @zhaoran_wang @_Hao_Zhu @ZhiyuanCS @TenghaoHuang45 @TuhinChakr