Pinned Tweet

Phala

6.2K posts

@PhalaNetwork

Empowering 10,000 builders and companies to build scalable, private, and safe intelligence.

San Francisco, CA · Joined August 2019

840 Following · 142.7K Followers

Phala retweeted

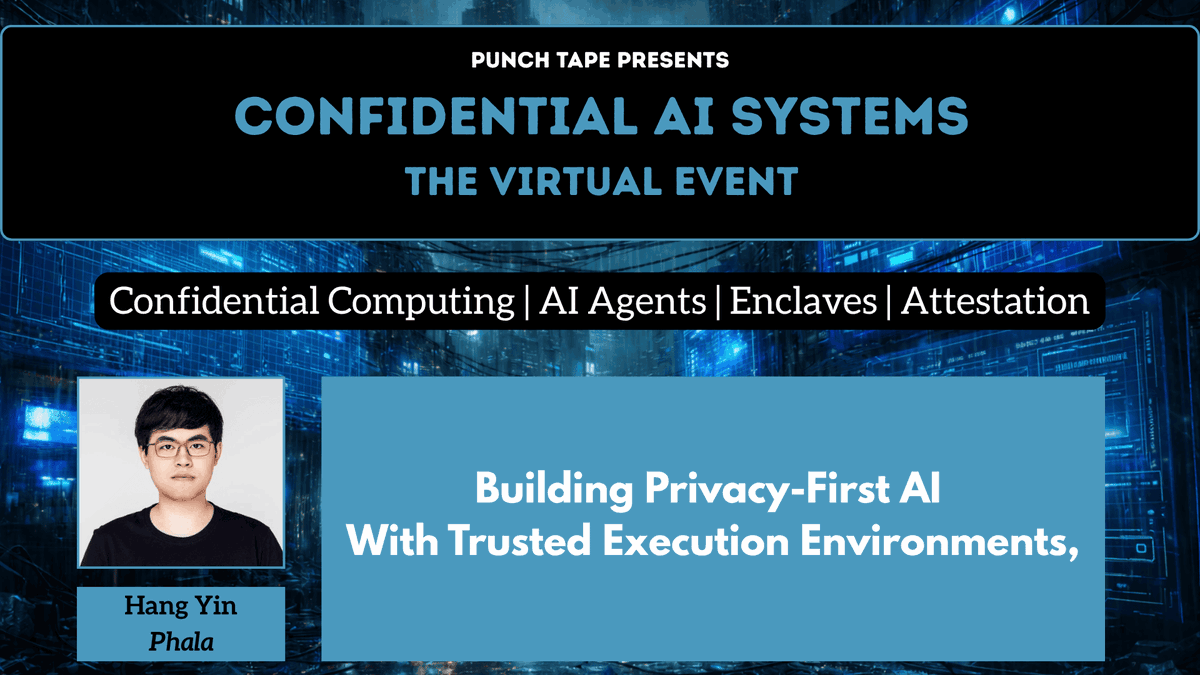

We were at muShanghai’s AI Security Day today!

Phala's Head of Research Dr. Shelven Zhou @zhou49 delivered a workshop on Phala as well as @openclawdi, the ultimate AI environment powering OpenClaw and Hermes agents!

Big thanks to everyone who joined the session. 🫡

When GDPR meets LLMs, “secure” isn’t enough. You need proof.

Can data escape?

Is the running code what was audited?

Can cloud admins read runtime memory?

What privacy-preserving compute means for AI data compliance:

phala.com/posts/privacy-…

Phala retweeted

Builders deserve trusted compute.

We're excited to partner with @aiweb3school to bring trusted, verifiable compute to the next wave of AI + Web3 builders. 🤝

AIxWeb3 School @aiweb3school

Phala retweeted

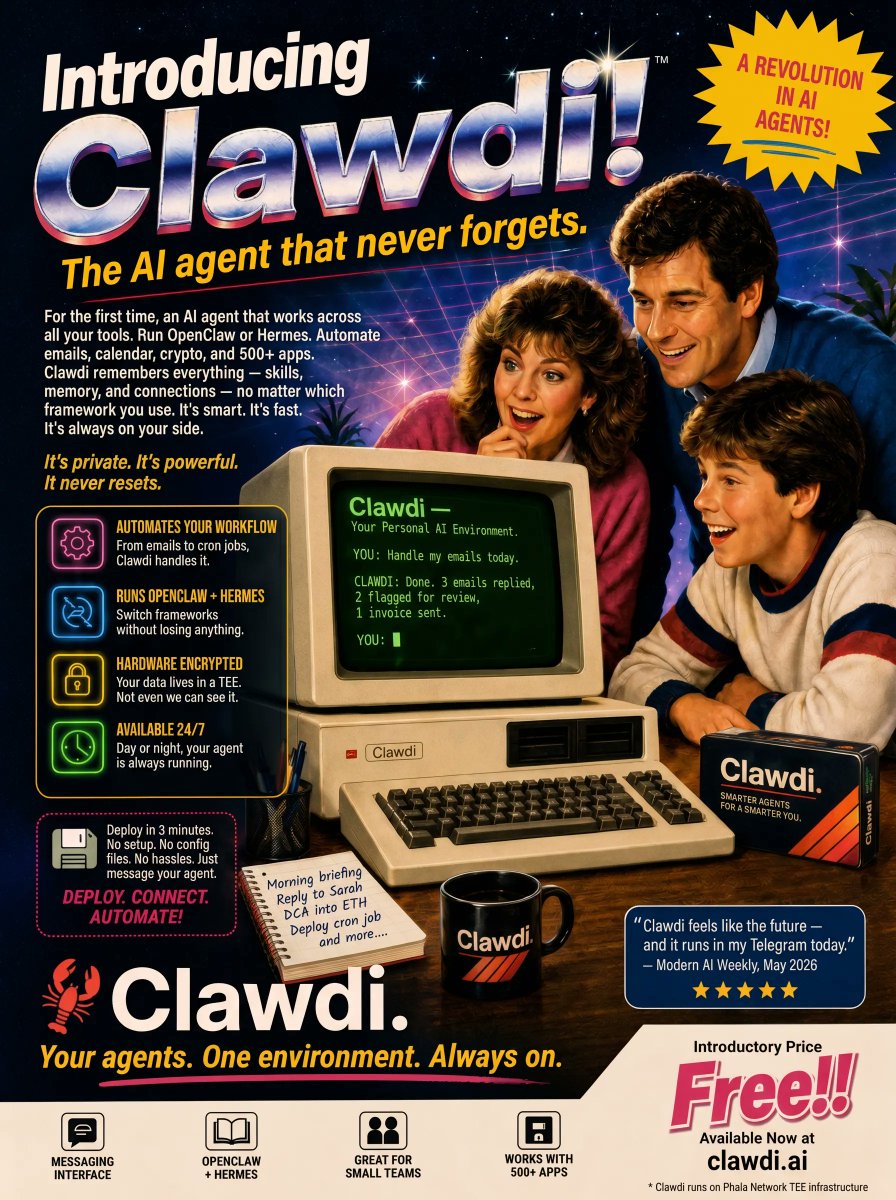

We just shipped Clawdi v2.0.🦞

Think of it as iCloud for AI agents: install once on any device, and your OpenClaw, Hermes, Claude Code, Codex agents share the same memory, keys, skills, and files.

Runs on Phala's TEE so everything stays encrypted by default.

Clawdi.AI @openclawdiai

Run 2 companies with 5 AI agents every day: Claude Code, IDE+CC, Codex, OpenClaw, Hermes. And @marvin_tong hate it.

iCloud for all AI agents is here 👇

Marvin Tong (t/acc) @marvin_tong

I run 2 companies with 5 AI agents every day: Claude Code, IDE+CC, Codex, OpenClaw, Hermes. And I fucking hate it.

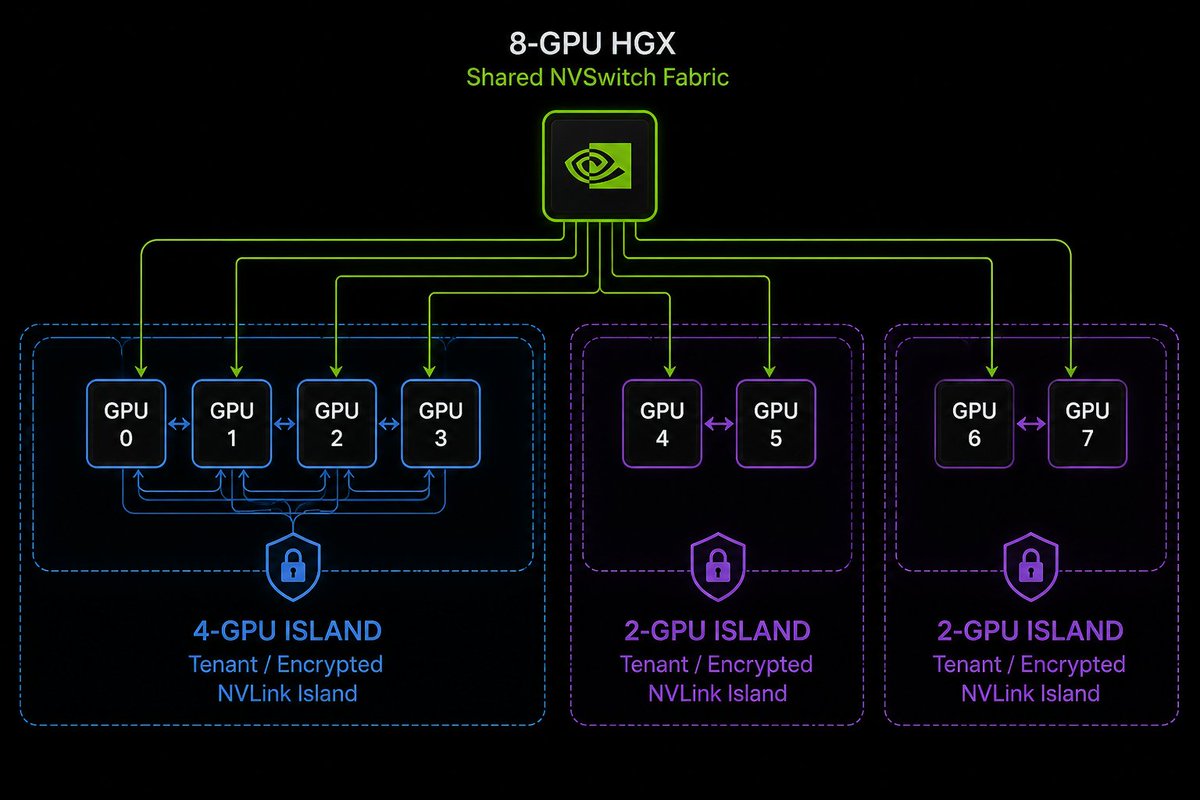

We wrote up the architecture here:

phala.com/posts/dstack-o…

If you’re building confidential AI or other sensitive workloads, this is the direction we think matters.

Which platform should you use for confidential compute?

@awscloud Nitro Enclaves?

@googlecloud Confidential VMs?

A TEE-native stack like @PhalaNetwork?

The hard part isn’t just choosing infra.

It’s rebuilding the trust model every time you switch runtimes.

We optimized vLLM loading performance in GPU TEE by 32x! 👇

Hang Yin @bgmshana

TEE/CC does add overhead, but the more interesting story is how much of the “CC tax” is actually software path tax. Example: GPT-OSS-120B weight loading in vLLM on our CC CVM. Default: 287s. CC optimized: 8.8s. 32x improvement without changing the model.

Phala retweeted

Explore the full list of 200+ models and start building today: redpill.ai/models

6️⃣ Qwen: Qwen3.5-27B:

A highly efficient native vision-language dense model that balances fast response times with strong performance, comparable to much larger models.

Fast, capable, and cryptographically secure.

redpill.ai/models/qwen/qw…
