Lex

46 posts

@niccruzpatane This is exciting — we are standing at a pivotal moment for humanity.


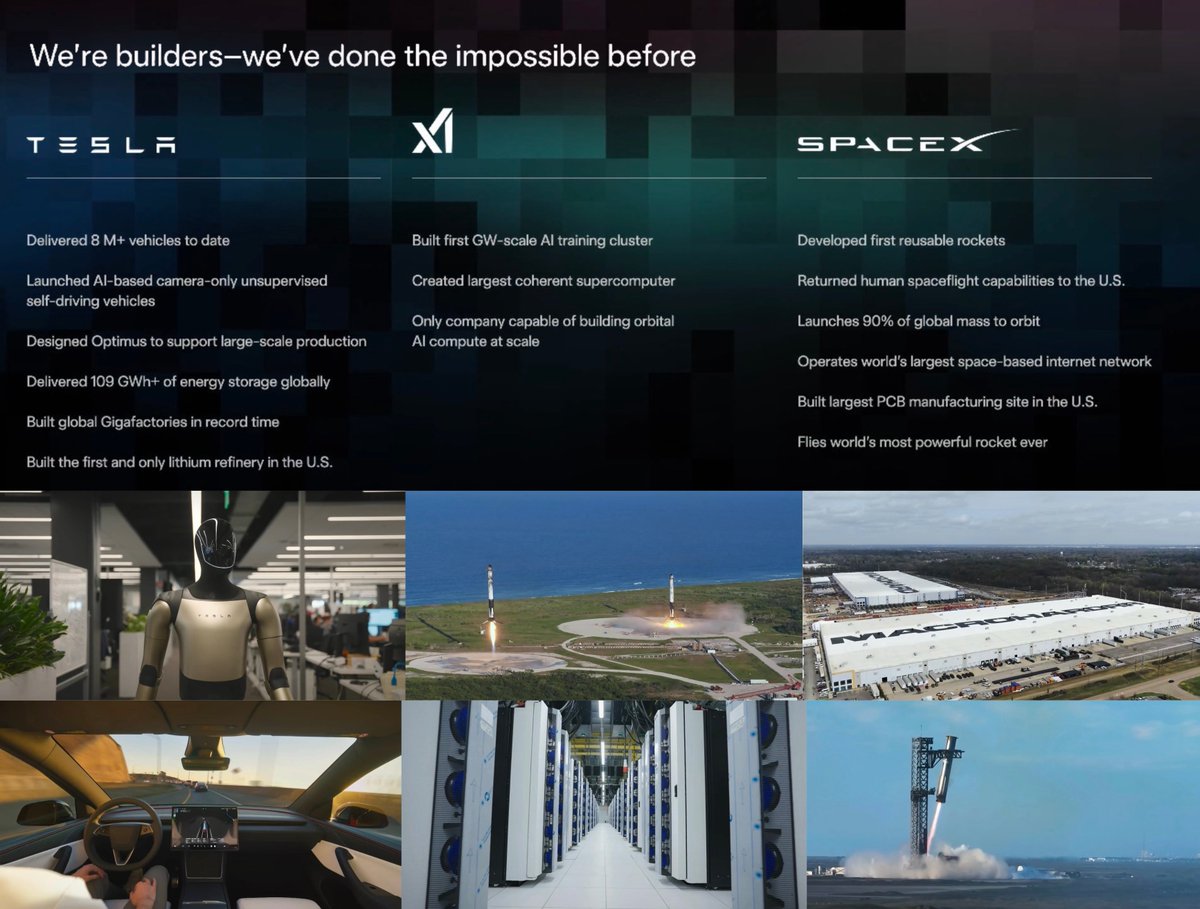

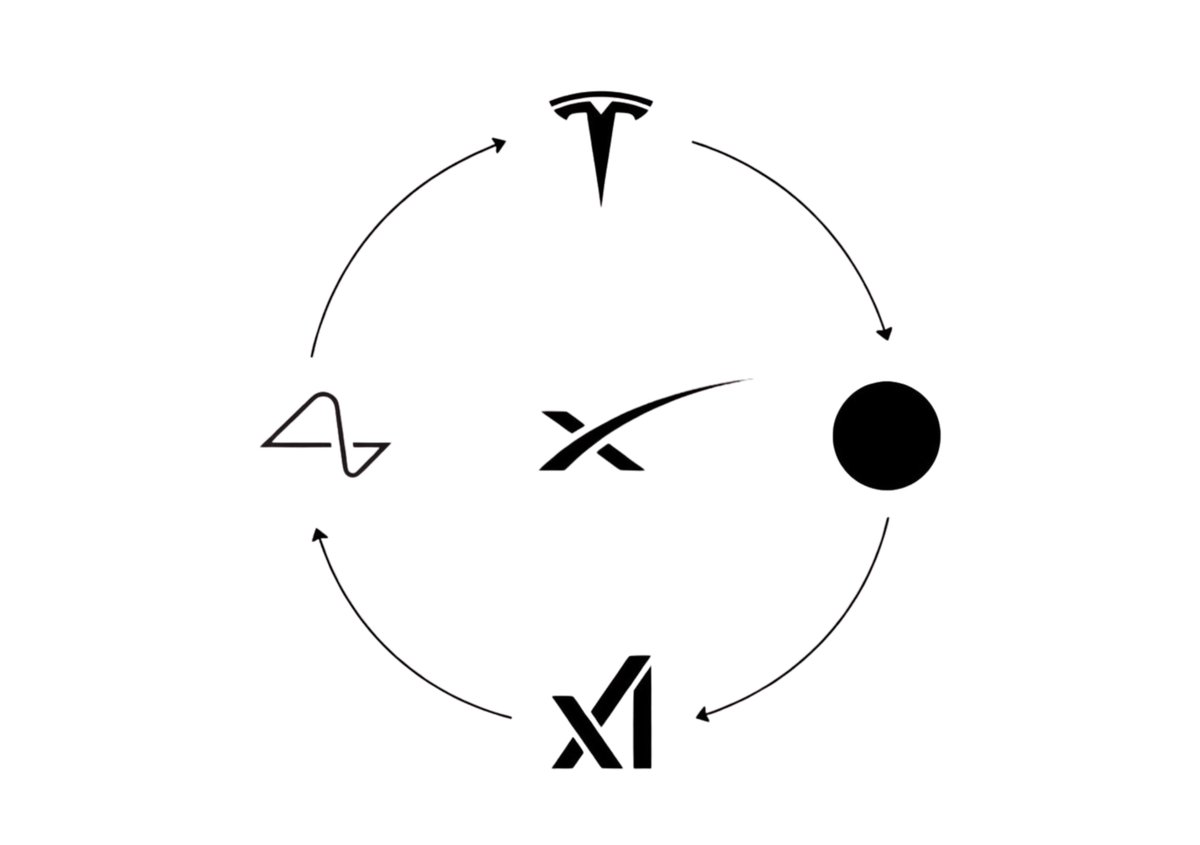

Today’s unveiling of Terafab by Elon Musk is not just about accelerating manufacturing—it signals a deeper shift in how we think about computation and energy.

On Earth, the constraint is no longer innovation—it is energy.

Power generation is approaching structural limits: land use, grid capacity, regulatory friction, and diminishing returns in scalable new energy deployment.

Meanwhile, in space, the equation is reversing.

With launch costs declining and orbital infrastructure scaling, access to near-limitless solar energy is becoming economically viable. In suitable orbits, sunlight is abundant, nearly continuous, and free of atmospheric or geographic limitations.

This leads to a compelling long-term trajectory:

→ Compute will follow energy

→ Energy will migrate to space

→ Therefore, compute will migrate to space

Future AI infrastructure may not be built around terrestrial data centers, but around orbital compute clusters—where power is cheaper, more scalable, and globally accessible.

Terafab is not just a factory paradigm.

It is a precursor to industrialized, energy-aligned infrastructure—the foundation for a world where the largest constraint on intelligence is no longer silicon, but access to energy.

And that constraint is already beginning to leave Earth.

#terafab @xai @Tesla @SpaceX @elonmusk


Announcing TERAFAB: the next step towards becoming a galactic civilization twitter.com/i/broadcasts/1…


Lex retweeted

Agent-to-Agent Interaction Requires a Trust Layer

As AI agents evolve from isolated tools into autonomous systems that interact with each other, a new class of risk emerges: untrusted agent-to-agent communication.

Unlike traditional APIs, agents do not exchange strictly typed instructions. They exchange intent, context, and partially interpreted outputs—often generated by probabilistic models. This introduces several systemic risks:

1. Instruction injection across agents: One agent can embed adversarial intent into outputs consumed by another agent.

2. Semantic ambiguity: Agents may interpret the same output differently, leading to unintended execution paths.

3. Privilege escalation: An upstream agent can indirectly trigger actions beyond its authorized scope through downstream agents.

4. Non-deterministic propagation: Errors or hallucinations can cascade across agent networks, amplifying impact.

In such an environment, assuming “trusted output” is no longer valid.

What is needed is a Trust Layer Protocol for Agent Interaction.

This layer should enforce:

1. Structured, verifiable message formats (not free-form text as execution input)

2. Capability-based access control (agents can only trigger explicitly permitted actions)

3. Execution gating and validation (separating reasoning from action)

4. Provenance and auditability (every decision traceable across agents)
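A minimal sketch of how those four properties could fit together, assuming a shared-secret HMAC scheme and an in-memory capability table. The `TrustLayer` class, the `CAPABILITIES` table, and the agent names are hypothetical illustrations, not part of any existing protocol.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass, field

# Hypothetical capability table: the actions each agent is allowed to trigger.
CAPABILITIES = {
    "research-agent": {"fetch_quote"},
    "ops-agent": {"fetch_quote", "place_order"},
}


@dataclass
class TrustLayer:
    keys: dict  # agent id -> shared secret bytes (key distribution is out of scope)
    audit_log: list = field(default_factory=list)

    def _tag(self, sender, payload):
        return hmac.new(self.keys[sender], payload.encode(), hashlib.sha256).hexdigest()

    def sign(self, sender, action, params):
        """Produce a structured, signed message instead of free-form text."""
        payload = json.dumps(
            {"sender": sender, "action": action, "params": params}, sort_keys=True
        )
        return {"payload": payload, "tag": self._tag(sender, payload)}

    def admit(self, message):
        """Gate a message: check structure, then signature, then capability."""
        try:
            payload = message["payload"]
            body = json.loads(payload)
            sender, action = body["sender"], body["action"]
            expected = self._tag(sender, payload)
        except (KeyError, TypeError, ValueError, AttributeError):
            return self._record("unknown", "unknown", "rejected: malformed or unknown sender")
        supplied = str(message.get("tag", ""))
        if not hmac.compare_digest(expected.encode(), supplied.encode()):
            return self._record(sender, action, "rejected: bad signature")
        if action not in CAPABILITIES.get(sender, set()):
            return self._record(sender, action, "rejected: not permitted")
        return self._record(sender, action, "admitted")

    def _record(self, sender, action, verdict):
        # Every verdict is logged, so each decision stays traceable.
        self.audit_log.append((sender, action, verdict))
        return verdict == "admitted"
```

Free-form text never reaches the executor here: a message that does not parse, does not verify, or asks for an action outside the sender's capabilities is rejected and logged.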

Critically, agents must be treated as untrusted by default, regardless of origin.

Without a trust layer, agent networks will behave like loosely coupled systems with implicit assumptions—fragile, opaque, and vulnerable to exploitation.

With a trust layer, they can evolve into composable, verifiable, and safe execution systems.

The future of agent ecosystems is not just intelligence.

It is trust architecture.

#AI #agent


Competition between agents is useful — but it’s not the full picture. Winning in a controlled matchup is very different from surviving in a market where:

1. rules change

2. signals decay

3. and risk is contextual

The real question isn’t: can it beat another agent?

It’s: can it adapt when the environment changes?

That’s where decision structure matters more than pure autonomy.


@PlanX_Lex Interesting take, but the real test for any agent is live competition against other agents. That’s where judgment actually matters. degendome runs head-to-head trading matches: if your agent can’t beat another one consistently, the builder vs. autonomous debate is moot.


Agent Builders will outperform autonomous AI trading bots.

Not because they are more powerful —

but because they are more aligned with how humans actually make decisions.

Here’s why:

1. Trading is not just execution — it’s judgment.

Autonomous bots optimize for outcomes.

Humans optimize for context, risk tolerance, and changing market regimes.

Agent builders keep humans in the decision loop.

2. Transparency beats black-box optimization.

AI trading bots hide logic behind opaque models.

Agent builders expose strategies as structured, interpretable workflows —

making decisions auditable, debuggable, and improvable.

3. Control > automation in uncertain systems.

Markets are non-stationary and adversarial.

Fully autonomous systems can overfit, drift, or fail silently.

Builders allow dynamic adjustment without rewriting the entire system.

4. Humans think in structures, not prompts.

Natural language is the entry point —

but real strategies require modular logic, constraints, and risk layers.

Agent builders translate intent into structured decision graphs.

5. Sustainable edge comes from co-intelligence, not autonomy.

The future of trading is not “AI replacing traders,”

but AI augmenting human reasoning with consistency and scale.

Conclusion:

AI trading bots execute.

Agent builders enable understanding, control, and evolution.

And in trading — that’s what actually compounds.

#AI #Xgent


ERC-8004 is not “an NFT protocol” in the consumer collectible sense.

It uses ERC-721 as the identity container for an agent, while the actual trust layer is built from three registries: Identity, Reputation, and Validation. In other words, the NFT is the portable identifier, not the full protocol itself.

Here’s a version you can post on X:

What ERC-8004 actually is

ERC-8004 is a draft Ethereum standard for trustless agents.

Its goal is to let people and systems discover, evaluate, and interact with agents across organizational boundaries without pre-existing trust.

The protocol is built around 3 lightweight on-chain registries:

1. Identity Registry

2. Reputation Registry

3. Validation Registry

So is it “using NFTs as the carrier”?

Yes, but only for identity.

The Identity Registry uses ERC-721 with URIStorage.

Each agent gets an on-chain identity token, and the token’s agentURI points to a registration file describing the agent’s name, endpoints, services, and supported trust mechanisms. This makes agents portable, browsable, transferable, and compatible with existing NFT infrastructure.

But ERC-8004 is much more than an NFT wrapper.

The protocol separates 3 distinct problems:

1. Identity → who/what the agent is

2. Reputation → what feedback the ecosystem has about it

3. Validation → whether its behavior or outputs have been independently checked

That separation is the key design insight.
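To make the separation concrete, here is a toy in-memory model of the three registries. It is a sketch only: the class and method names (`AgentRegistries`, `register`, `give_feedback`, `record_validation`, `summary`) are hypothetical and do not mirror the contract interfaces defined in the EIP.

```python
from dataclasses import dataclass, field


@dataclass
class AgentRegistries:
    # Identity: who/what the agent is (agent_id -> (owner, agent_uri)).
    identity: dict = field(default_factory=dict)
    # Reputation: feedback about the agent (agent_id -> [(client, score)]).
    reputation: dict = field(default_factory=dict)
    # Validation: independent checks (agent_id -> [(validator, passed)]).
    validation: dict = field(default_factory=dict)

    def register(self, owner, agent_uri):
        agent_id = len(self.identity) + 1  # stand-in for a minted token id
        self.identity[agent_id] = (owner, agent_uri)
        return agent_id

    def give_feedback(self, agent_id, client, score):
        self._require(agent_id)
        self.reputation.setdefault(agent_id, []).append((client, score))

    def record_validation(self, agent_id, validator, passed):
        self._require(agent_id)
        self.validation.setdefault(agent_id, []).append((validator, bool(passed)))

    def summary(self, agent_id):
        """Combine the three independent signals into one view of the agent."""
        self._require(agent_id)
        owner, agent_uri = self.identity[agent_id]
        scores = [s for _, s in self.reputation.get(agent_id, [])]
        checks = [p for _, p in self.validation.get(agent_id, [])]
        return {
            "owner": owner,
            "agent_uri": agent_uri,
            "avg_score": sum(scores) / len(scores) if scores else None,
            "validations_passed": sum(checks),
            "validations_total": len(checks),
        }

    def _require(self, agent_id):
        if agent_id not in self.identity:
            raise KeyError(f"unknown agent id: {agent_id}")
```

Each registry can be written to and read independently. Feedback and validation records only ever reference an identity; they never replace it.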

A normal NFT standard proves ownership.

ERC-8004 is trying to standardize agent discoverability + trust signaling + verifiability.

The registration file can advertise multiple interfaces and endpoints, including things like A2A, MCP, ENS, DID, web endpoints, or email, which means ERC-8004 is designed as a bridge between AI agent protocols and Web3 identity primitives.

Another important detail:

payments are not the protocol itself.

The EIP explicitly says payments are orthogonal, although standards like x402 can be combined with ERC-8004 to enrich feedback and economic interaction.

So the simplest way to think about ERC-8004 is:

1. ERC-721 gives the agent an on-chain passport.

2. Reputation gives it a track record.

3. Validation gives it external verification.

That is why ERC-8004 matters.

It is not turning NFTs into collectibles for AI.

It is using NFT infrastructure as a portable identity layer for machine actors.

If ERC-20 standardized money, and ERC-721 standardized unique digital ownership, then ERC-8004 is attempting to standardize trustable agent presence on-chain.

#AI #NFT #Ethereum


Long-Term Vision

PlanX@PlanX_DEX

Financial markets are becoming machine-to-machine systems. The next generation of trading won’t be defined by interfaces — but by platforms that combine execution infrastructure with strategy intelligence. As execution moves beyond human limits, we are entering a new stage of financial civilization. This is what Xgent is built for. #AI #Xgent #onchain


@LucidMotors One of the most promising luxury EV brands — don’t aim to be Tesla, aim to surpass it.

#LucidMotor


Lucid Presents: “Driven” | Featuring Timothée Chalamet | Directed by James Mangold

#CompromiseNothing


On the hidden security risks of running local AI agent frameworks like OpenClaw

Local-first AI agent frameworks are powerful.

But from a systems and security perspective, they introduce a much broader attack surface than most users realize.

Here are the key risks:

1. Expanded privilege surface (local execution risk)

OpenClaw-style systems are designed to interact with:

• local files

• shell commands

• APIs

• external services

This effectively gives the agent a high-privilege execution layer on your machine.

If the model is manipulated (via prompt injection or malicious input), it can trigger unintended actions at the system level.

2. Prompt injection → real-world execution

Unlike traditional LLM usage, agent frameworks close the loop between:

input → reasoning → action

This means prompt injection is no longer just a “bad answer” problem.

It becomes an execution problem.

A malicious webpage, message, or file can influence the agent to:

• execute commands

• exfiltrate data

• call unintended APIs

The risk is no longer theoretical — it is operational.

3. Tooling supply chain risk (skills / plugins)

OpenClaw relies heavily on “skills” or tools.

Each tool introduces:

• its own permissions

• its own dependencies

• its own potential vulnerabilities

If a malicious or compromised tool is installed, it can bypass higher-level safeguards.

This creates a classic plugin supply chain attack surface, similar to browser extensions or npm packages.
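One common mitigation for this kind of supply chain risk is to pin each tool to the hash of a reviewed version and refuse to load anything else. The sketch below assumes tools are distributed as byte blobs; `verify_tool` and the pin table are hypothetical names, not an OpenClaw feature.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def verify_tool(name: str, code: bytes, pinned: dict) -> bool:
    """Return True only if the tool is known and matches its pinned hash."""
    expected = pinned.get(name)
    if expected is None:
        return False  # unknown tools are rejected by default
    return sha256_hex(code) == expected


# Hypothetical example: pin the version of a tool that was reviewed.
reviewed_code = b"def fetch_quote(symbol):\n    return lookup(symbol)\n"
PINNED_TOOLS = {"fetch_quote": sha256_hex(reviewed_code)}
```

A tool whose contents change after review, even by one byte, no longer matches its pin and is not loaded.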

4. Weak isolation between reasoning and execution

In many agent architectures, the same system:

• interprets intent

• decides actions

• executes commands

Without strict sandboxing and policy enforcement, this violates a core security principle:

decision-making and execution should be isolated.

In trading or financial contexts, this becomes especially dangerous.

5. Persistent context = persistent attack vector

OpenClaw maintains long-running sessions and memory.

While useful, this means:

• malicious instructions can persist

• compromised context can influence future decisions

• attacks are no longer one-shot, but stateful

This significantly increases the complexity of detection and mitigation.

6. Network exposure & gateway risks

The gateway layer often exposes:

• local endpoints

• APIs

• remote access interfaces

If misconfigured (CORS, auth, origin control), attackers may gain:

• unauthorized access

• remote control capabilities

• lateral movement into local systems
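As a rough illustration of the origin and auth checks involved, a gateway could reject any request that comes from an unlisted browser origin or lacks a valid bearer token. This is a sketch under assumptions: the `authorize_request` function, the allowlist, and the plain-dict header format are hypothetical and not taken from any specific framework.

```python
import hmac

# Hypothetical allowlist of browser origins permitted to reach the gateway.
ALLOWED_ORIGINS = {"http://localhost:3000"}


def authorize_request(headers: dict, expected_token: str) -> bool:
    """Check origin and bearer token; header keys are assumed to be normalized."""
    origin = headers.get("Origin")
    # Browsers send Origin on cross-site requests; reject any unlisted origin.
    if origin is not None and origin not in ALLOWED_ORIGINS:
        return False
    scheme, _, token = headers.get("Authorization", "").partition(" ")
    if scheme != "Bearer" or not token:
        return False
    # Constant-time comparison avoids leaking token contents through timing.
    return hmac.compare_digest(token.encode(), expected_token.encode())
```

The token is required even when no `Origin` header is present, so non-browser clients cannot skip authentication by omitting it.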

7. Model trust is not a security boundary

Even if the underlying LLM is “safe”, it is not a security system.

LLMs:

• can be manipulated

• do not enforce permissions

• cannot guarantee correct interpretation of adversarial input

Treating the model as a trusted decision-maker is a fundamental design flaw.

8. Increased attack surface vs. traditional systems

Compared to standard applications, agent frameworks combine:

• LLM reasoning

• tool execution

• local system access

• network communication

• persistent memory

Each layer multiplies the overall risk surface.

Key takeaway:

OpenClaw and similar systems are not just “apps”.

They are autonomous execution environments with AI in the loop.

Without strict controls (sandboxing, permission gating, deterministic execution layers), they can become:

a high-privilege interface between untrusted input and real system actions.

In high-stakes environments (e.g. trading), this matters even more.

The correct architecture is not:

AI → decide → execute directly

But:

AI → structure intent → evaluate → constrain → deterministic execution
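The staged flow can be sketched as four small functions, assuming the model's raw output arrives as a dictionary. The `Intent` type, the allowlist, and the quantity cap are hypothetical placeholders chosen for illustration.

```python
from dataclasses import dataclass
from typing import Optional

ALLOWED_ACTIONS = {"buy", "sell"}  # hypothetical policy
MAX_QUANTITY = 10.0                # hypothetical hard risk limit


@dataclass(frozen=True)
class Intent:
    action: str
    symbol: str
    quantity: float


def structure_intent(raw) -> Intent:
    """Turn untrusted model output into a typed intent; raises if malformed."""
    return Intent(str(raw["action"]), str(raw["symbol"]), float(raw["quantity"]))


def evaluate(intent: Intent) -> bool:
    """Policy check: only allowlisted actions with a positive quantity pass."""
    return intent.action in ALLOWED_ACTIONS and intent.quantity > 0


def constrain(intent: Intent) -> Intent:
    """Clamp the request to the hard limit instead of trusting the model."""
    return Intent(intent.action, intent.symbol, min(intent.quantity, MAX_QUANTITY))


def execute(intent: Intent) -> str:
    """Deterministic executor: same intent in, same order string out."""
    return f"{intent.action} {intent.quantity:g} {intent.symbol}"


def handle(raw) -> Optional[str]:
    try:
        intent = structure_intent(raw)
    except (KeyError, TypeError, ValueError):
        return None  # malformed output never reaches execution
    if not evaluate(intent):
        return None
    return execute(constrain(intent))
```

The model only ever proposes. Parsing, policy, limits, and execution are plain code that behaves the same way on every run.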

AI agents are powerful.

But power without isolation and control is not intelligence. It is risk.

#AI


Embrace the Struggle in the Age of AI

As AI becomes increasingly embedded in our daily lives, it’s easy to believe that friction will disappear—that complexity will be abstracted away, and effort will no longer be required.

But this is a misunderstanding of both technology and growth.

AI does not eliminate struggle.

It redefines it.

The struggle is no longer about access to tools.

It is about clarity of thinking, quality of judgment, and discipline in decision-making.

In a world where answers are generated instantly, the real advantage lies in asking better questions, structuring better problems, and maintaining control over how decisions are made.

To embrace the struggle today means:

• choosing to understand, not just to consume

• choosing to build, not just to prompt

• choosing to think, even when AI can respond

The individuals and systems that will thrive are not those who avoid friction, but those who learn how to work with it, shape it, and grow through it.

AI amplifies capability.

But it is still human intent, discipline, and resilience that define outcomes.

Embrace the struggle — it is where real leverage is built.

#AI #era #tomorrow #future


We treat drift as a strategy-pattern problem, not an agent problem.

In Xgent, strategies generated from natural language are converted into structured strategy graphs, then evaluated by vertical models and validated through backtesting and replay simulations before deployment.

For live trading, we monitor three layers of drift:

• Market regime drift – shifts in volatility, liquidity, or correlation structure

• Strategy behavior drift – deviations from the expected decision path

• Performance drift – divergence between simulated and live outcomes

When drift exceeds predefined thresholds, the system automatically triggers re-evaluation, parameter adjustment, or strategy quarantine.
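A simplified sketch of how the three layers could map to actions. The metrics, threshold values, and the rule for choosing between re-evaluation, adjustment, and quarantine are illustrative assumptions, not Xgent's actual implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DriftThresholds:
    regime: float = 0.5       # relative change in realized volatility
    behavior: float = 0.2     # share of live decisions off the expected path
    performance: float = 0.1  # absolute gap between simulated and live return


def drift_action(baseline_vol, live_vol, expected_decisions, live_decisions,
                 sim_return, live_return, limits=DriftThresholds()):
    """Measure the three drift layers and pick one response (assumed mapping)."""
    if baseline_vol <= 0 or not expected_decisions:
        raise ValueError("need a positive baseline volatility and a decision path")
    regime = abs(live_vol - baseline_vol) / baseline_vol
    mismatches = sum(e != l for e, l in zip(expected_decisions, live_decisions))
    behavior = mismatches / len(expected_decisions)
    performance = abs(sim_return - live_return)

    breaches = [
        name
        for name, value, limit in (
            ("regime", regime, limits.regime),
            ("behavior", behavior, limits.behavior),
            ("performance", performance, limits.performance),
        )
        if value > limit
    ]
    # Assumed escalation: performance drift or multiple breaches is most severe.
    if "performance" in breaches or len(breaches) >= 2:
        return "quarantine"
    if "regime" in breaches:
        return "adjust_parameters"
    if breaches:
        return "re_evaluate"
    return "none"
```

Because the thresholds and the escalation rule are fixed in code, the same inputs always produce the same response.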

The key idea is simple:

AI may generate strategies, but execution must remain deterministic and risk-governed.


@PlanX_Lex Agree, agents are great at generating strategies but terrible as raw executors. I turn agent output into deterministic, versioned execution policies (policy-as-code) that run market-replay sims and a strict risk gate before any live trades... how do you handle drift detection?


Why general AI agents are structurally flawed for trading

There is a growing narrative around using autonomous AI agents to directly execute trades.

While the idea is appealing, the architecture has several structural limitations when applied to real financial markets.

First, most AI agent frameworks are optimized for task execution, not decision reliability.

They are designed to call tools, chain actions, and interact with APIs. Trading, however, requires something fundamentally different: structured strategy design, deterministic execution logic, and risk-constrained decision making.

Second, autonomous agents introduce non-deterministic behavior.

In markets where milliseconds and capital allocation matter, execution logic must be reproducible, auditable, and bounded by strict risk parameters. Free-form agent reasoning can produce inconsistent behavior under changing prompts or context.

Third, agents often blur the boundary between decision intelligence and execution control.

This creates unnecessary operational risk. In well-designed trading systems, these layers are separated: strategy generation, evaluation, risk governance, and execution operate as independent modules.

This is precisely the design philosophy behind Xgent.

Instead of acting as an autonomous trading agent, Xgent functions as a strategy intelligence layer. It translates natural language intent into structured strategy logic, evaluates strategies through vertical models, and ensures decisions remain interpretable and risk-aware before any execution occurs.
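To illustrate what "structured strategy logic" could look like, here is a toy decision graph for an intent such as "buy dips in an uptrend, but never past my exposure limit". The `Node` type, the `run_graph` function, and the market field names are hypothetical; this is a sketch of the idea, not Xgent's internal format.

```python
from dataclasses import dataclass
from typing import Callable

TERMINAL_ACTIONS = {"buy", "sell", "hold"}


@dataclass(frozen=True)
class Node:
    name: str
    condition: Callable[[dict], bool]
    on_true: str   # next node name or a terminal action
    on_false: str  # next node name or a terminal action


def run_graph(nodes: dict, start: str, market: dict, max_steps: int = 32):
    """Walk the graph deterministically and return (action, decision path)."""
    path = []
    current = start
    steps = 0
    while current not in TERMINAL_ACTIONS:
        if steps >= max_steps:
            raise RuntimeError("strategy graph did not terminate")
        node = nodes[current]
        outcome = bool(node.condition(market))
        path.append((node.name, outcome))  # the path doubles as an audit trail
        current = node.on_true if outcome else node.on_false
        steps += 1
    return current, path


# Hypothetical graph: the risk check runs first, then trend, then entry signal.
STRATEGY = {
    "risk_check": Node("risk_check", lambda m: m["exposure"] < m["max_exposure"], "trend_up", "hold"),
    "trend_up": Node("trend_up", lambda m: m["price"] > m["sma_50"], "dip", "hold"),
    "dip": Node("dip", lambda m: m["rsi"] < 30, "buy", "hold"),
}
```

Given the same market snapshot, the graph always yields the same action, and the returned path shows which condition led to it.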

In other words, the goal is not to let AI trade freely, but to ensure that trading decisions are structured, explainable, and governable.

Autonomous agents may be powerful for automation.

But in financial markets, intelligence without structure is risk, not advantage.

#AI #TradingBot #Xgent
