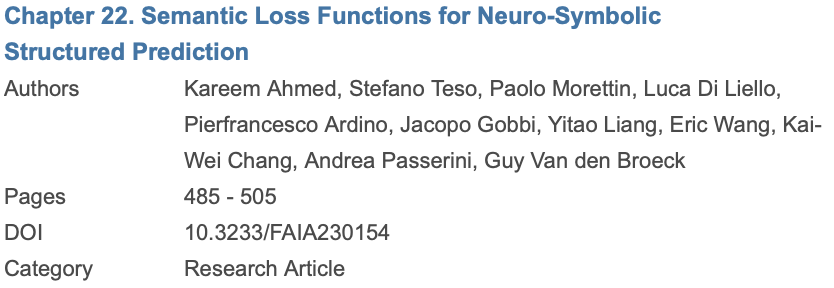

When you prompt an LLM for code, you get back a single program. But the LLM actually defines a distribution over many programs, and existing methods discard it‼️ PPoT uses this distribution to extract free performance and efficiency gains. 🧵👇