Sabitlenmiş Tweet

Lincoln 🇿🇦

37.1K posts

Lincoln 🇿🇦

@Presidentlin

History never ends. Former SA/UK bank swe. Whale watcher 🐋 Building: https://t.co/7sqtdwkDXx

South Africa Katılım Ekim 2011

716 Takip Edilen4.8K Takipçiler

Lincoln 🇿🇦 retweetledi

So DeepSeek-V4: finally took me the week.

Overall the paper is attempting many things at once, not easy to disentangle as it's all surprisingly connected.

It's first a serious attempt at briding the gap between close and open LLM architecture. It is generally rumored that Opus and [largest model bundled in GPT-5] belong to an entirely different category of models: very large, very sparse mixture of experts, able to holding an unprecendently wide search space while still being servable. Simply put current hardware cannot hold a model on one node, so you have to play with the interconnect and various level of quantization, for different layers, at different stage of training. An important focus of DsV4 is on communication latency, showing it can be hidden through effective management of interconnect (roughly you slide communication time inside computation side). Overall, you cannot simply enter this game without the capability to rewrite kernels from scratch and the model report relentlessly come back to this. Because this is the frontier game.

It's then a radical, but very successful attempt at making long context simultaneously more efficient and more affordable. Long context is literally a "context" problems: what exactly is worth attending? An obvious fix is to prioritize the most recent tokens. This might be sufficient for basic search but not for the new demands of agentic pipelines that require accurate recall of distant yet strategic content. V4 clever approach is to rely on two different axis of memorization by allocating layers to two different attention compression schemes. Like the name suggest, Heavily Compressed Attention is the brute force method collapsing each sequence of 128 tokens to a unique entry and take care of the fuzzy yet global context. Compressed Sparsed Attention rely on a "lighting indexer" to bring the relevant local blocks for query, even when they can be thousands of tokens away. Everything here is optimized for end inference: there is very large head_dim (512) which is costlier for training but allows for even more compressed kv cache which is your actual bottleneck at inference time, especially in prefill mode. End result is very classical DeepSeek play, introducing a new radical disruption of inference economics after DSA. I predict hybrid CSA/HCA (or similar counterparts) will be essentially part of the mainstream arch by the end of this year.

Now we come to the more ambitious but also more unfinished part: an attempt at redefining model architecture and the learning signal. Most preeminent part is mHC and hybrid CSA/HCA, but it's actually a long list of less documented innovations: swapping softmax for sqrt(softplus) or using an hybrid two-stage scheme with non-standard values for Muon. Yet the interconnection all of these new components is still unknown and likely to account for the significant training unstabilities: typically "mHC involves a matrix multiplication with an output dimension of only 24" which introduces non-determinism. Even one the best AI labs in the world will run here into ablation combinatorial explosion, so the association of all these choices is likely non-tractable and would require a more consistent theory — which the conclusion gestures at, but does not solve ("In future iterations, we will carry out more comprehensive and principled investigations to distill the architecture down to its most essential designs"). The more limited experiments in post-training are maybe more promising. Significantly, the one lab that popularized the standard RL+reasoning recipe is rethinking the recipe. For now it's a two stage design (RL on specialized model, then on-policy distillation): ever since Self-Principled Critique Tuning DeepSeek has been concerned with expanding the reasoning training signal beyond final sparse reward. I'm not sure this is final say: in this domain everything is a bit in flux and you could even argue the type of verified pipeline we designed for SYNTH is a form of extreme offline RL-like training.

There is an even longer term plan (here >3-5 years), which is about redefining hardware. For now it's a way of transforming a constraint into an opportunity: as the leading Chinese labs, DeepSeek was very incentivized to make training work on Ascend and contribute to the national effort for chips autonomy. Very unusually, the report includes a lengthy wishlist for future hardware to come in the report itself. As several experts noted, many of these recommendations don't really hold up for Nvidia but make perfect sense for a newcomer in the GPU hardware business. DeepSeek seem to be anticipating a world where labs have to secure a close hardware partner to retroactively fit the chips to the particular demand of model design or inference.

Now there is what DeepSeek did not do yet. The paper hardly mention anything about synthetic pipelines, rephrasing, simulated environment. Training data size (32T tokens) likely involve some significant part of generated data, as this is more quality tokens than the web and other digitized sources could held — so maybe similar synthetic proportions as Trinity (roughly half) or Kimi. Still, it's pretty clear that all their attention was focused on the infra, architecture and scaling side, leaving a proper extensive retraining for later. This is likely not that dissimilar to how Anthropic or OpenAI proceeded: the fact we're still in the middle of the same model series even though significant parts of the model have changed (the tokennizer with Opus 4.7) suggests that a model lifecycle involves multiple rounds of training potentially as large as a pretraining a few years ago. The fact DeepSeek took on multiple Moonshot innovation (and Moonshot in turn has been hugely reliant on DeepSeek) suggest we might also have an ecosystem dynamic here. Maybe DeepSeek can exclusively focus on hard infrastructure problems and expect some of the axis of development to be sorted out later.

English

Starting this masterpiece.

Lincoln 🇿🇦@Presidentlin

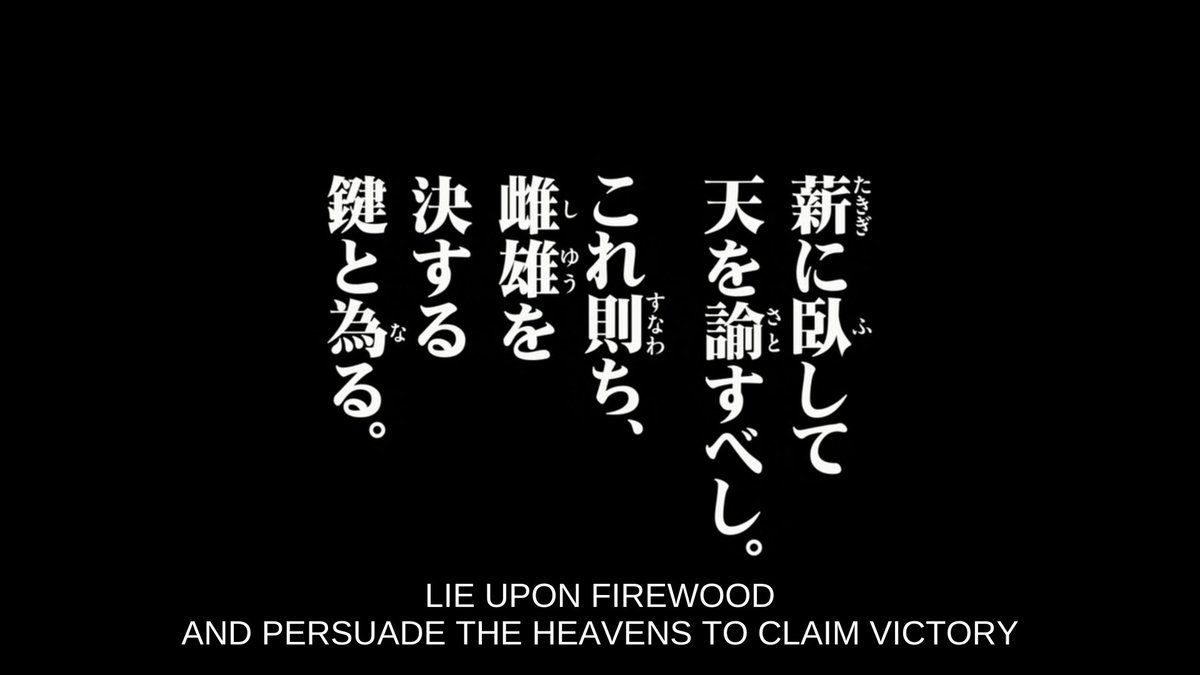

Nippon Sangoku is going to be this season's special show. If not above 8, it will be very fun.

English

Lincoln 🇿🇦 retweetledi