Cario Lee

207 posts

@QCL15

Full-stack → AI | Building with LLMs | Vibe Coder | Making AI tools accessible

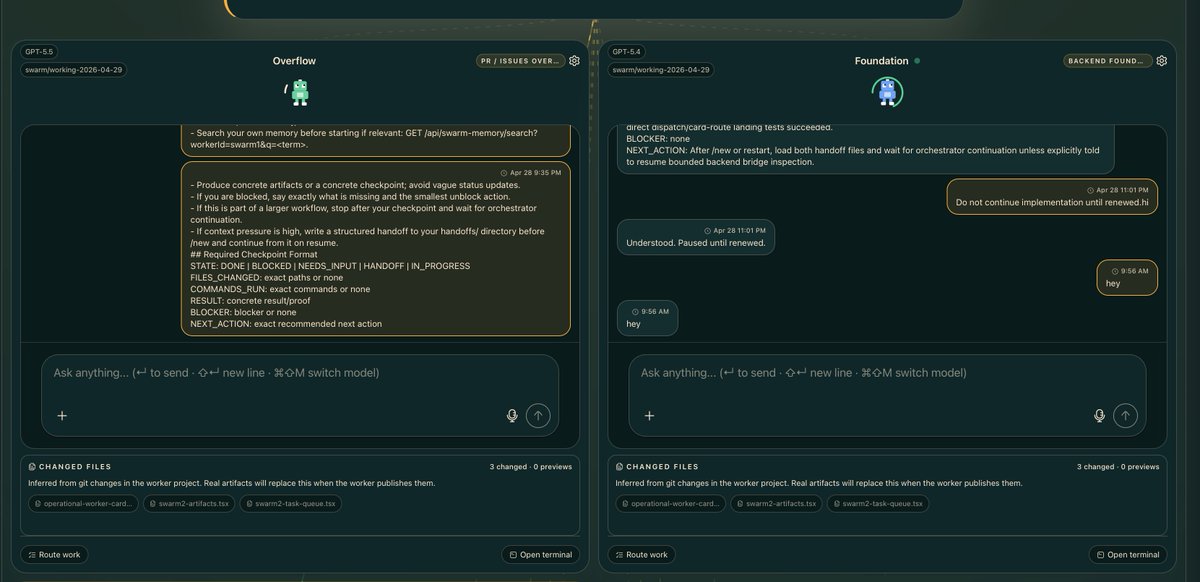

OpenClaw 2026.5.12 🦞
🧠 OpenAI setup defaults to Codex login
🛟 Runtime fallbacks + stalled-stream recovery
📬 Telegram polling survives stalls
⚡ Leaner installs, faster startup paths
Faster, calmer, harder to wedge.
github.com/openclaw/openc…

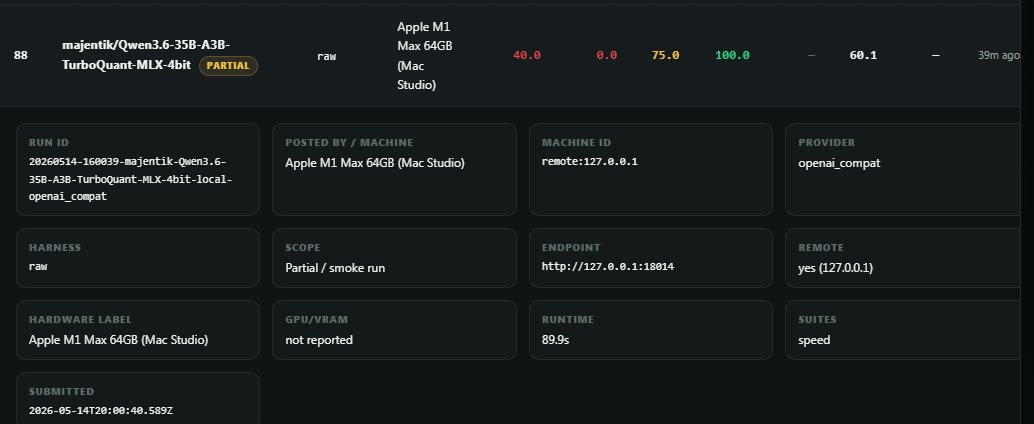

We published new research on how we serve post-trained Qwen3 235B models on NVIDIA GB200 NVL72 Blackwell racks. GB200 is a major step up over Hopper for high-throughput inference on large MoE models, not just a training platform.

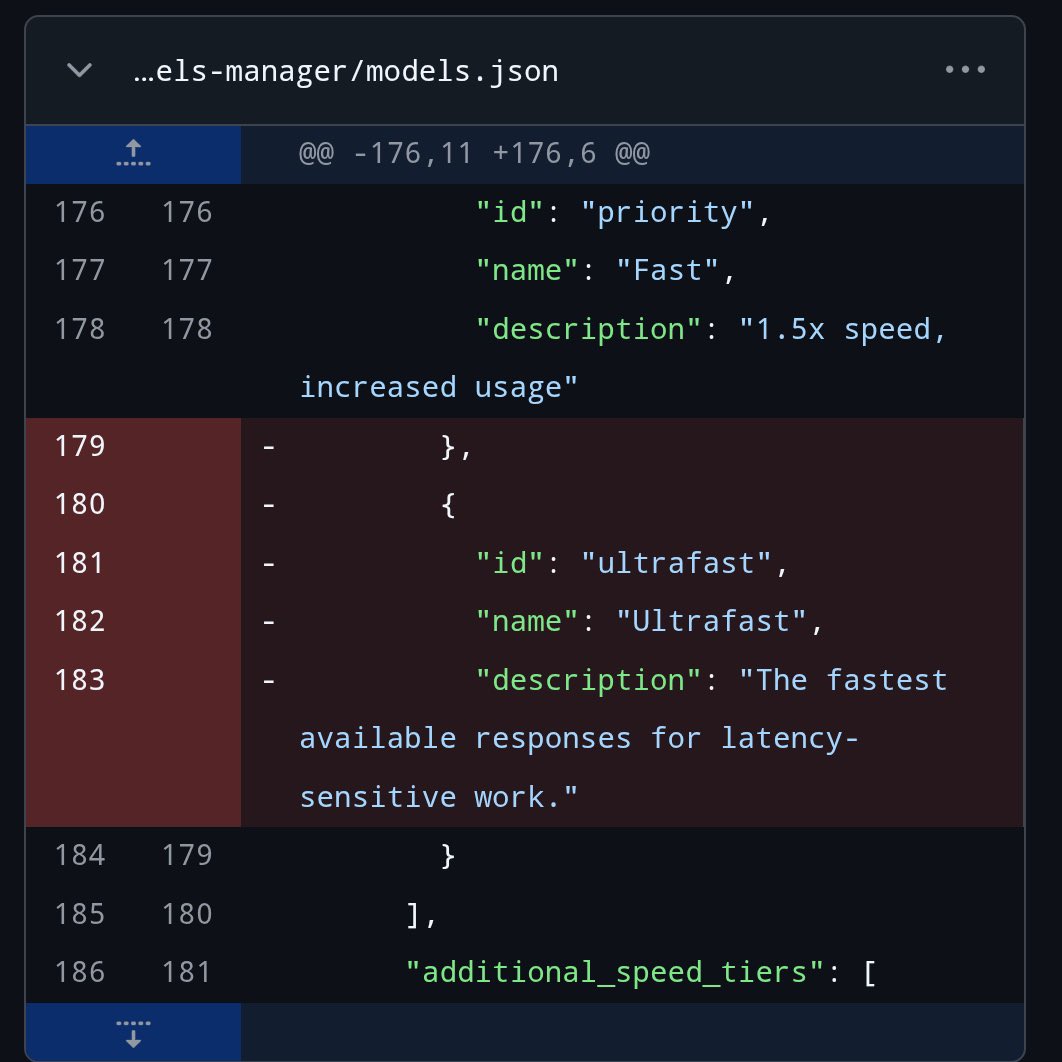

Ultrafast mode was recently spotted in the Codex GitHub repo and has since been deleted. "The fastest available responses for latency-sensitive work."

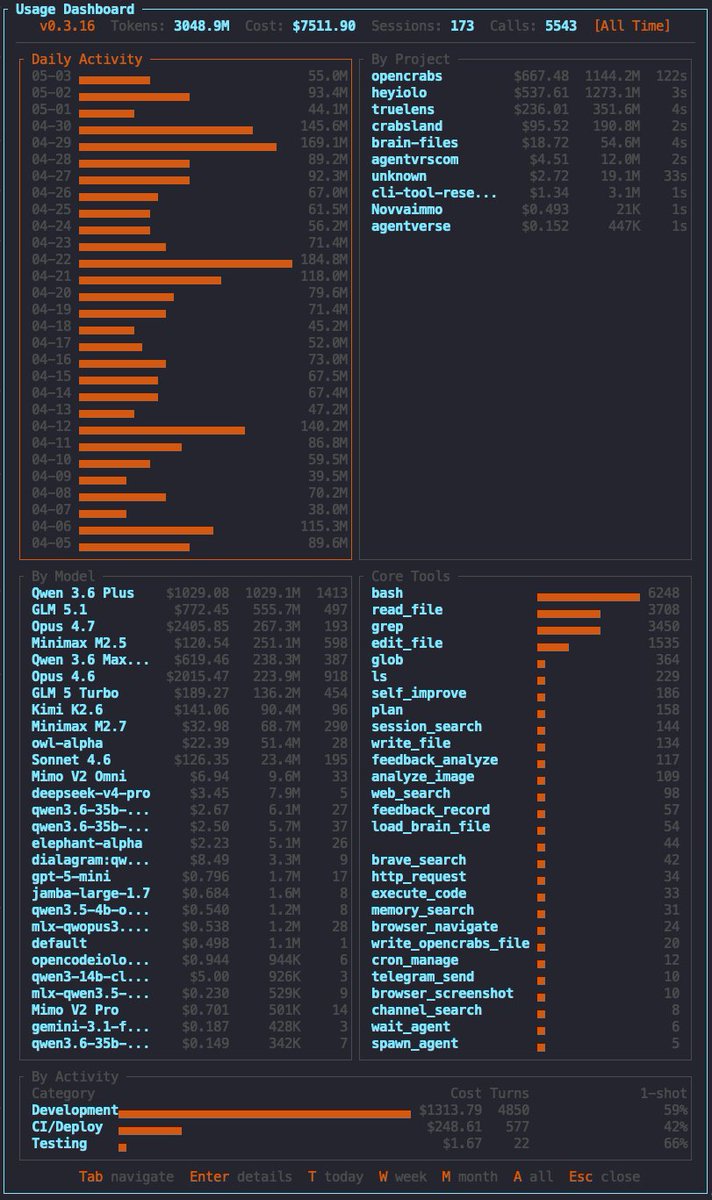

v0.3.18 JUST DROPPED 🦀🔥
🖥️ Codex CLI built-in provider
🔍 Repo-audit skill
🔐 Cron webhook auth
🌐 browser_close tool
🔒 Gemini API key leak patched
🌐 Network idle wait on navigate
🐛 11 fixes
🧪 2,570 tests (+48)
31 commits • 4 features • 11 fixes
Thread 🧵👇

🚨 GUYS, Prompt Injection is real. My friend's OpenClaw got hacked.
ClawHavoc malware campaign 👇🏻
• massive supply-chain attack on openclaw
• attackers poisoned the clawhub skill marketplace with hundreds, then 1,000+, fake legit-looking “skills”/plugins
• they install trojans, credential stealers (atomic stealer/amos), keyloggers etc.
DON'T INSTALL SKILLS. MAKE YOUR OWN!

JUST IN: Coinbase to test AI-native “one-person teams” that combine engineering, design, & product roles.

v0.3.15 JUST DROPPED 🦀🔥
🦙 Ollama native provider
⌨️ OpenCode CLI native provider
💬 /btw parallel sub-agents
🔍 browser_find tool
🧠 Append-only brain files
🔄 Upstream template sync
👋 Onboarding welcome
📁 Recent file memory
🛡️ Bash hardening
🧪 2,479 tests (+334)
Link 🧵👇