KawzInvests 🦑@KawzInvests

Building a memory fab takes 4 years. Building a photonics fab takes 9 months.

There is a MASSIVE difference between the buildout for photonics and the buildout for memory.

A memory fab is a precision lithography operation. You are packing billions of transistors at 10-nanometer-class nodes. EUV tools alone take 12-18 months to procure and calibrate. The yield ramp after that takes years. The bottleneck is physics and it cannot be compressed.

A photonics fab is an INTEGRATION PROBLEM. You are building devices that manipulate light, not electrons. Indium phosphide. Optical waveguides. Alignment tolerances are driven by optical coupling efficiency, not transistor density. No EUV required.

The practical timeline difference:

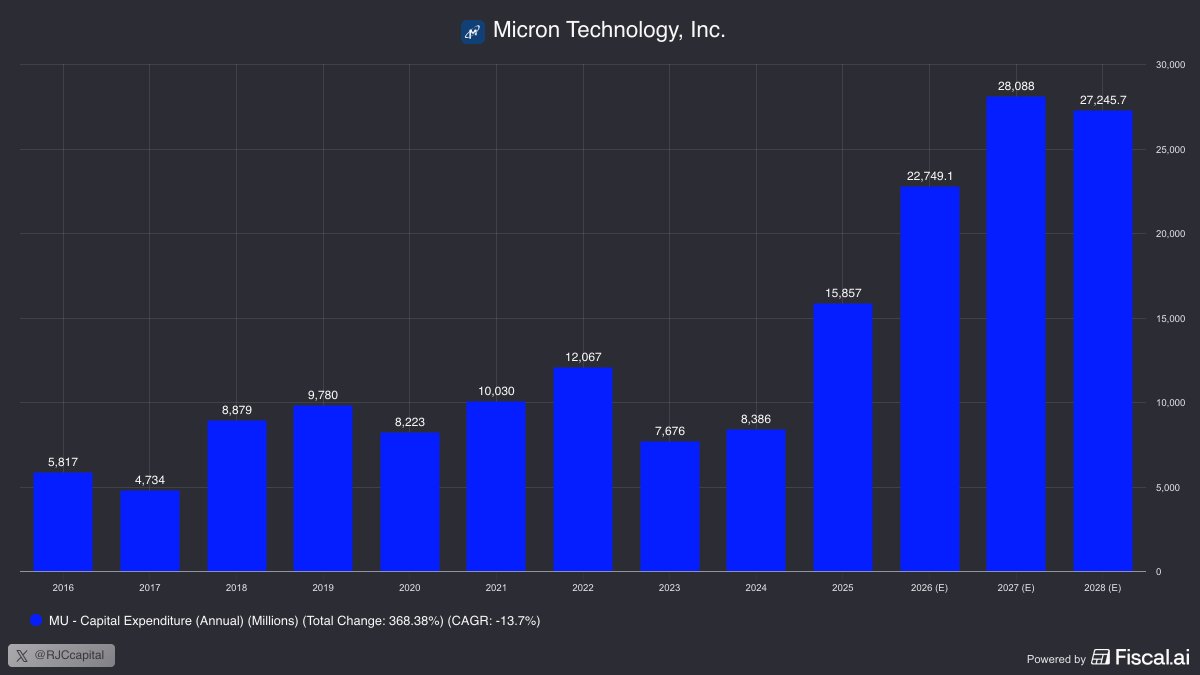

Samsung Electronics, $MU, SK Hynix: 3 to 5 years from groundbreaking to meaningful output. The lithography learning curve is non-negotiable.

$AAOI with an existing warehouse: 9 months. Not because construction is faster. Because they skip the construction problem entirely. Cleanroom retrofit, tool installation, and process bring-up run in parallel. Most companies do these sequentially. AOI does not.
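A rough way to see why parallel beats sequential: run the stages back to back and the timeline is the sum of the stages; run them at once and it is roughly the longest one. A minimal sketch, assuming made-up stage durations (the month values and function names below are placeholders for illustration, not AOI figures), and assuming the idealized case where every stage can start on day one:

```python
# Illustrative stage durations in months. These are placeholder numbers
# chosen for the example, NOT figures from AOI or any other company.
stages = {
    "cleanroom_retrofit": 6,
    "tool_installation": 5,
    "process_bring_up": 8,
}


def sequential_months(durations):
    """Each stage waits for the previous one to finish: total is the sum."""
    return sum(durations.values())


def parallel_months(durations):
    """All stages start together (idealized): total is the longest stage."""
    return max(durations.values())


print(sequential_months(stages))  # 19
print(parallel_months(stages))    # 8
```

In practice the stages overlap only partly, since some tools cannot go in until the cleanroom is ready, so the real number lands somewhere between the two.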

$AAOI has a massive automation advantage

AOI runs internal testing systems at 20x the throughput of standard industry equipment. Their product platforms are standardized to the point where each new production line is not a new engineering problem; it is a deployment. When they enter a new facility, they are not figuring out the process. They are executing a template they have already optimized across years of production in Taiwan.

That is exactly what is happening with their new Texas facility. AOI is not building something new. They are replicating the same factory format, tooling layout, automation systems, and process templates that are already running and yielding in Taiwan. The institutional knowledge, the yield data, the calibration baselines: all of it transfers. A semiconductor company standing up a new node from scratch has none of that. AOI walks in with the answer key.

Vertical integration across lasers, PCBA, and final assembly means there is no external dependency introducing variance into yield. They own the entire feedback loop from wafer to finished transceiver.

That matters because of what the real bottleneck actually is.

Most people stop the analysis at fab timelines or InP supply. Both are real constraints. Neither is the hardest part.

The hardest part is thermal qualification.

A transceiver operating inside a hyperscaler switch runs continuously. These switches need to operate at full load 24 hours a day for the unit economics to justify the infrastructure spend. If the switch is down, the compute behind it is idle. At the scale hyperscalers operate, idle compute is not an inconvenience; it is a direct hit to the return on billions of dollars of capex.

The failure mode that defines vendor selection is thermal. Transceivers generate heat. Heat degrades the laser. A degraded laser causes signal loss. Signal loss in a switch port takes that segment of the switching fabric offline. Hyperscalers do not tolerate partial switch failures; they replace the vendor.

This is why qualification cycles are the longest stage of the entire ramp, not manufacturing. Hyperscalers test interoperability, sustained thermal performance, and reliability under continuous full load before committing volume. A vendor that cannot demonstrate 24/7 thermal stability does not get the contract regardless of how fast they built the factory.

AOI's vertical integration is a direct solution to this problem. Because they control lasers, PCBA, and assembly in-house, they control the thermal envelope of the finished product end to end. Competitors are integrating components from separate vendors and discovering thermal variance late in qualification. AOI is designing the thermal system, not assembling one from parts. Their automated testing infrastructure means thermal issues surface during production, not during the customer's qualification cycle. That compresses the single longest stage in the entire ramp.

And because the Texas facility is a copy of Taiwan, that thermal system arrives pre-validated. They are not learning how to build a thermally stable transceiver in Texas. They already know. They are just doing it closer to the customer.

Memory manufacturing bottleneck = lithography

Photonics manufacturing bottleneck = thermal qualification

The structural thesis is sound. But there is always a layer of entropy no model accounts for. Execution risk does not disappear because the framework is good.