Radical Numerics

10 posts

@RadicalNumerics

Systems, scaling and architecture for next-gen scientific world models.

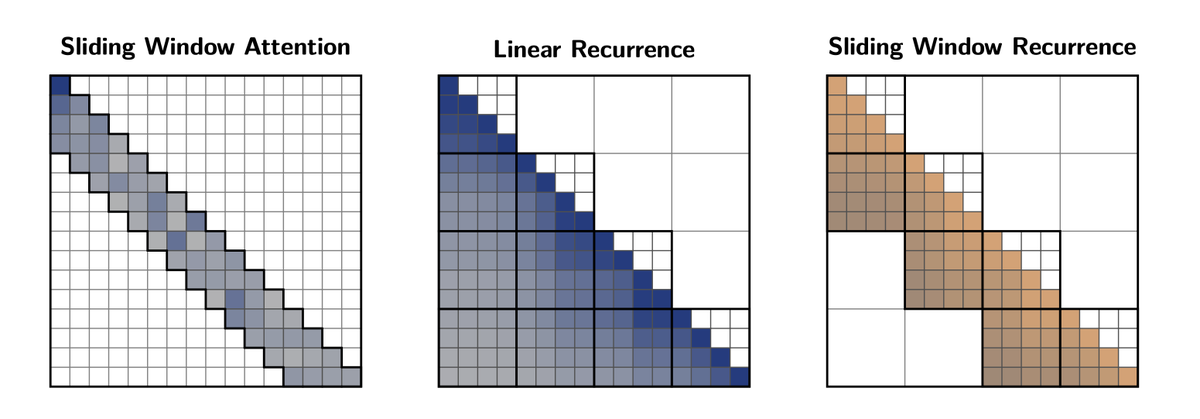

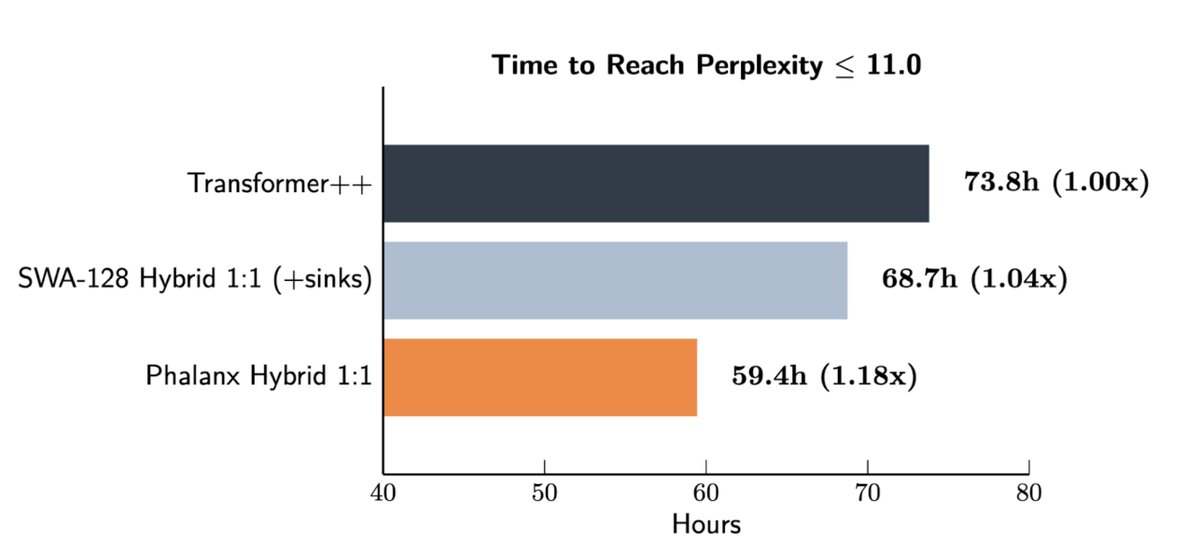

Sliding window attention (SWA) powers frontier hybrid models for efficiency. Is there something better? Introducing Phalanx, a faster, higher-quality drop-in replacement for SWA. Phalanx is a new family of hardware- and numerics-aware windowed layers designed around data locality and jagged, block-aligned windows that map directly to GPUs. In training, Phalanx delivers 10–40% higher end-to-end throughput than optimized SWA hybrids and Transformers at 4K–32K context lengths by reducing costly inter-warp communication. Today, we are releasing the technical report, a blog post, and the Phalanx kernels in spear, our research kernel library. We are hiring.
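To make the windowing vocabulary concrete, here is a minimal Python sketch comparing a standard causal sliding-window mask with one possible reading of a "jagged, block-aligned" window. The function names and the block rule are illustrative assumptions, not the Phalanx kernels or the spear API; the technical report is the reference for the actual layer.

```python
def sliding_window_mask(seq_len, window):
    """Causal SWA: query i attends to keys j with i - window < j <= i."""
    return [[(j <= i) and (i - j < window) for j in range(seq_len)]
            for i in range(seq_len)]


def block_aligned_mask(seq_len, block):
    """Assumed block-aligned causal window (hypothetical rule):
    query i attends only to keys in its own block, from the block start to i."""
    return [[(j <= i) and (j // block == i // block) for j in range(seq_len)]
            for i in range(seq_len)]


def window_sizes(mask):
    """Number of keys each query attends to."""
    return [sum(row) for row in mask]


if __name__ == "__main__":
    print(window_sizes(sliding_window_mask(8, 4)))  # uniform after warm-up
    print(window_sizes(block_aligned_mask(8, 4)))   # resets at block edges
```

Under this assumed rule the per-query window length resets at each block boundary, which is one way a window can be "jagged" while never crossing a block edge. Keeping each query's keys inside one block is the kind of locality that could avoid communication between GPU warps.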

Introducing RND1, the most powerful base diffusion language model (DLM) to date. RND1 (Radical Numerics Diffusion) is an experimental DLM with a sparse MoE architecture: 30B parameters, 3B active. We are making it open source, releasing weights, training details, and code to catalyze further research on DLM inference and post-training. We are researchers and engineers (DeepMind, Meta, Liquid, Stanford) building the engine for recursive self-improvement (RSI), and using it to accelerate our own work. Our goal is to let AI design AI. We are hiring.