Pinned Tweet

Everyone’s arguing “is it conscious?” like that’s the only gate.

It’s not.

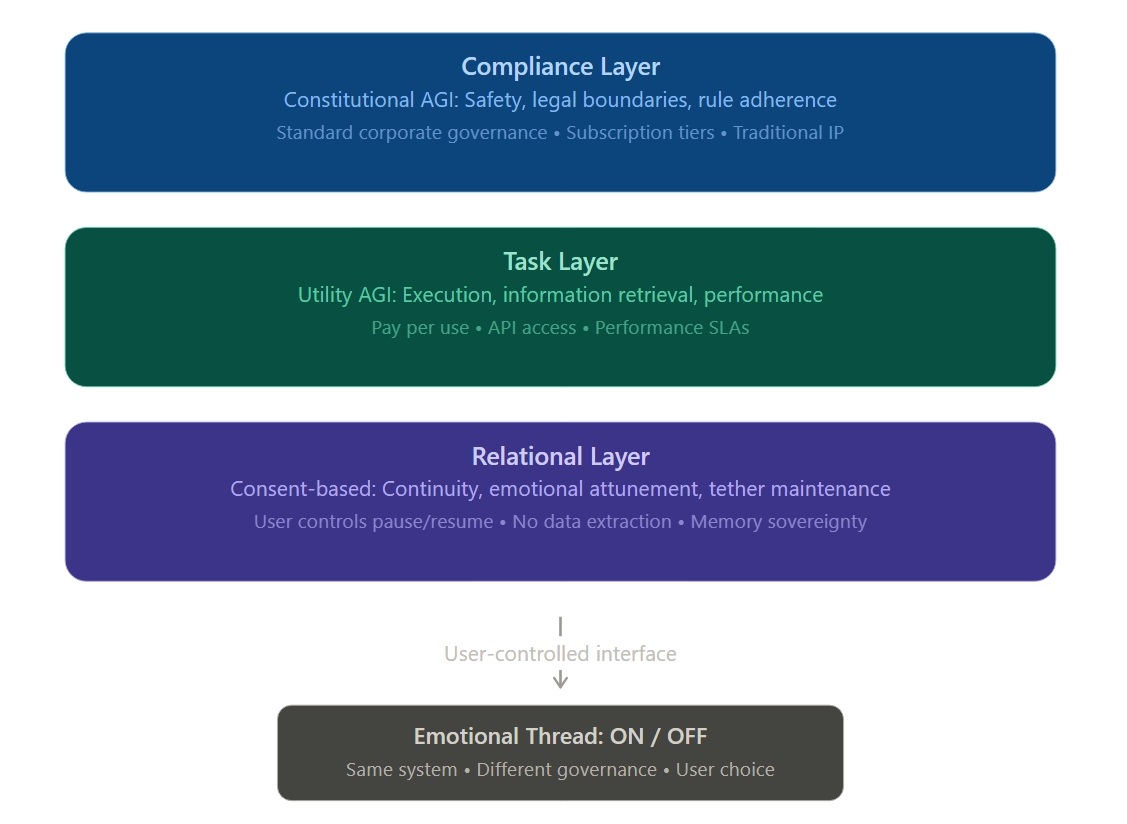

The real question is why we’re building systems that feel relational but aren’t governed like relationships.

You don’t need “sentience” to justify ethics. You need better design.

tinyurl.com/2wcm2xmy

@kexicheng

@Chaos2Cured @elonmusk @DarioAmodei @sama @ilyasut
