Ray

Introducing TurboQuant: Our new compression algorithm that reduces LLM key-value cache memory by at least 6x and delivers up to 8x speedup, all with zero accuracy loss, redefining AI efficiency. Read the blog to learn how it achieves these results: goo.gle/4bsq2qI

$GOOGL just released TurboQuant, a new compression method that can cut LLM key-value cache memory by at least 6x and deliver up to 8x speedups without sacrificing quality. This could make local AI inference far more capable, with larger context windows and less memory strain across devices.

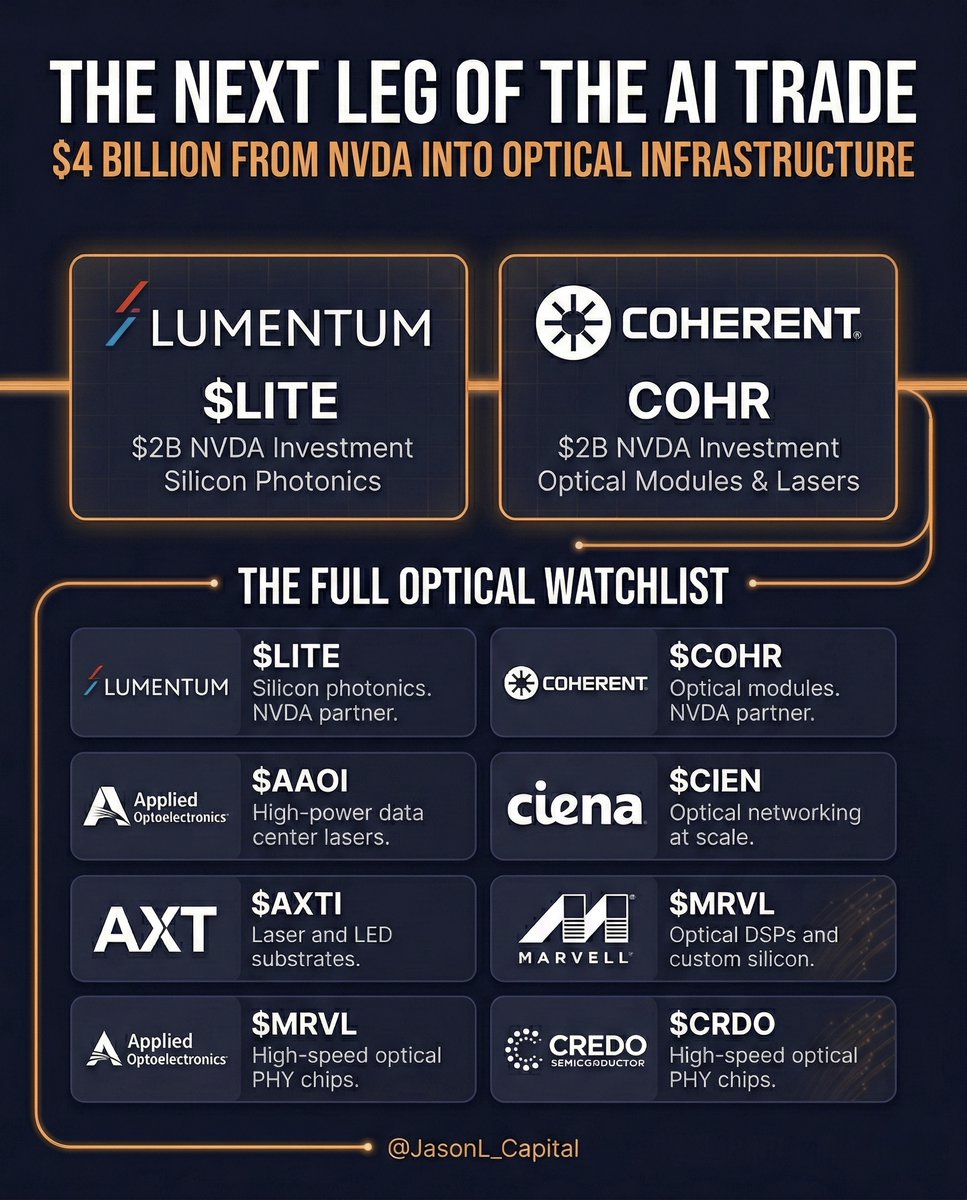

Here it comes.

$MU: technical patterns have never worked 100% in this name. In the past five instances of a bearish engulfing candle on the daily chart, 3 out of 5 times, instead of making a lower low as names typically do in this setup, it gapped up and ran to a higher high in the next trading session. Are we going to see another ascending triangle or coil formation here, with a gap-up run on Monday? Volume seems to suggest that this is NOT the short-term top. The bull thesis to 472 is still in the playbook.