JoeJoee

3.1K posts

@RealJoeJoee

Ordinal Collector | Early Stage Investor | Ex-HFT Trader | Engineering Enthusiast | DM to Share Ideas 💡

BIORVAULT IS LIVE 🧬 Every wallet we used was missing something. So we built one that's missing nothing. Self-custody. Cross-chain swaps. On/off ramps. WalletConnect. MindVault AI. 500+ tokens. Coming soon: 🍎🤖 And we're just getting started.

Accumulated $pie @169Pi_ai @Rajatarya01 @BizChirag
- featured in @ForbesIndia under 30 as the only AI project
- AI partner @Davos World Economic Forum
- unique 4-bit reasoning infra, which differentiates by delivering near-frontier reasoning quality at a fraction of the compute/memory cost of @OpenAI & others
- at the lows of its range, extremely cheap compared to the names we compare it to
- proven revenue of $1M+ USD
- added images for reference

More in depth:

Alpie (from the Indian AI company 169Pi) stands out from most other large language models (LLMs) primarily due to its focus on extreme efficiency through aggressive quantization, while still delivering frontier-level reasoning performance. Here's what makes it meaningfully different:

1. Native 4-bit quantization from the start (one of the first large-scale examples)
• Most LLMs (like GPT-4o, Claude 3.5/4, Gemini 1.5/2, Llama 3.1/4, Grok, DeepSeek, etc.) are trained in full precision (usually BF16 or FP16) and only quantized later (often to 8-bit or 4-bit) for inference.
• Alpie-Core (their flagship 32B model) was trained and fine-tuned directly in 4-bit precision. This is rare at this scale and helps avoid much of the quality degradation that usually comes from post-training quantization.
• Result: It achieves performance comparable to (or in some cases better than) full-precision models of similar size, but with dramatically lower memory usage, faster inference, and much lower power consumption.

2. High reasoning performance at low compute cost
• It is positioned as a reasoning-first model, optimized for complex, multi-step thinking, planning, coding, math, long-context tasks, and domains like software engineering, science, and education.
• Benchmarks show it rivaling or beating much larger or higher-precision models in reasoning-heavy evaluations, despite running on far fewer resources (e.g., it can be served efficiently even on modest hardware clusters).
• Built on the DeepSeek-32B architecture but heavily fine-tuned for reasoning in 4-bit.

3. Sustainability + accessibility angle (especially from India)
• Developed in India (one of the first major reasoning models from the region), it emphasizes sustainable AI: lower energy footprint, cheaper deployment, and democratized access.
• Offers OpenAI-compatible APIs, 65K context length, high throughput, and generous free tiers (e.g., millions of free tokens on first API key).
• This contrasts with many Western frontier models that prioritize raw scale and often require massive GPU clusters even for inference.

4. Ecosystem around it
• Alpie itself is also an AI workspace/chat interface built on top of their models, designed for deep work, long workflows, file handling, research pinning, and real-time collaboration (not just quick Q&A).
• Separate products like Alpie Learn (education-focused) complement the core reasoning engine.

In short: While models like Grok, Claude, or o1-series win on raw intelligence or personality, Alpie differentiates by delivering near-frontier reasoning quality at a fraction of the compute/memory cost, making advanced AI practical in resource-constrained environments, edge deployment, or cost-sensitive applications. It's less about being "the smartest" in absolute terms and more about being one of the most efficiently smart models available today.

If you're comparing it to a specific other LLM or use-case (coding, research, cost, etc.), I can go deeper into the differences!
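To put the memory claim in rough numbers, here is a back-of-the-envelope sketch. It only counts raw weight storage for a 32B-parameter model at 16 bits versus 4 bits per weight. The function name is made up for illustration, and the estimate deliberately ignores quantization scales, the KV cache, and activations, so real deployments will need more than this.

```python
# Rough estimate of weight-only memory for a model at a given bit width.
# Assumption: every parameter is stored at exactly `bits_per_weight` bits,
# with no overhead for quantization scales, KV cache, or activations.

def weight_memory_gib(num_params: float, bits_per_weight: int) -> float:
    """Approximate memory needed for the weights alone, in GiB."""
    total_bytes = num_params * bits_per_weight / 8
    return total_bytes / 2**30

params = 32e9  # the 32B parameter count mentioned in the post

mem_16bit = weight_memory_gib(params, 16)  # BF16 / FP16 storage
mem_4bit = weight_memory_gib(params, 4)    # 4-bit storage

print(f"16-bit: {mem_16bit:.1f} GiB, 4-bit: {mem_4bit:.1f} GiB, "
      f"ratio: {mem_16bit / mem_4bit:.0f}x")
# prints: 16-bit: 59.6 GiB, 4-bit: 14.9 GiB, ratio: 4x
```

The 4x ratio is just the ratio of bit widths; it says nothing about output quality, which is the part the benchmarks would have to back up.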

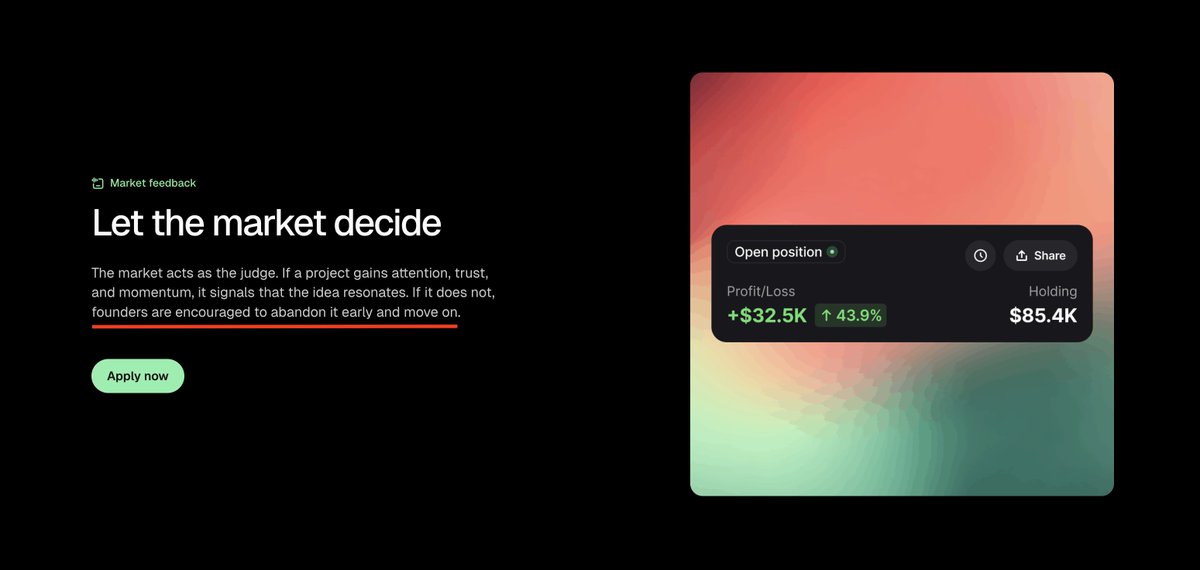

We really need to get back to the low FDV raises in this industry.

While there were a few exceptions, I remember very well that in the past cycle you would see public ICOs at $3m FDV, $5m FDV, $10m FDV, etc. Not the average slop either. Well-backed ones.

Today? $200m FDV for the 110th new layer 1 launch this year. Another simple AI agent platform copied from another? At least $50m FDV, of course.

Launching at those metrics has ruined retail's upside for anything new on the market. And it's likely partially responsible for how we got to today's situation in the first place.

You want a high FDV? Prove it first by launching low and letting the token climb to the high FDV after. If it's really needed and in hot demand? It will.
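A quick sketch of the upside math behind this, using the FDV figures from the post. It assumes a fixed total supply (so price moves in proportion to FDV) and uses $200m as an arbitrary target; the function name and the choice of target are illustrative only.

```python
# Hypothetical illustration: how many times a token must multiply from its
# launch FDV to reach a given target FDV, assuming a fixed total supply
# (so price moves in proportion to FDV).

def upside_multiple(launch_fdv_usd: float, target_fdv_usd: float) -> float:
    """Return the multiple needed to go from launch FDV to target FDV."""
    return target_fdv_usd / launch_fdv_usd

TARGET_FDV = 200_000_000  # the $200m figure from the post, used as the target
launch_fdvs = [3_000_000, 5_000_000, 10_000_000, 50_000_000, 200_000_000]

multiples = {fdv: upside_multiple(fdv, TARGET_FDV) for fdv in launch_fdvs}

for fdv, multiple in multiples.items():
    print(f"launch at ${fdv / 1e6:.0f}m FDV -> {multiple:.1f}x "
          f"to reach ${TARGET_FDV / 1e6:.0f}m")
```

Under these assumptions, a buyer at a $5m launch is sitting on 40x by the time the token reaches $200m FDV, while a buyer at a $200m launch is at 1x at the same valuation.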