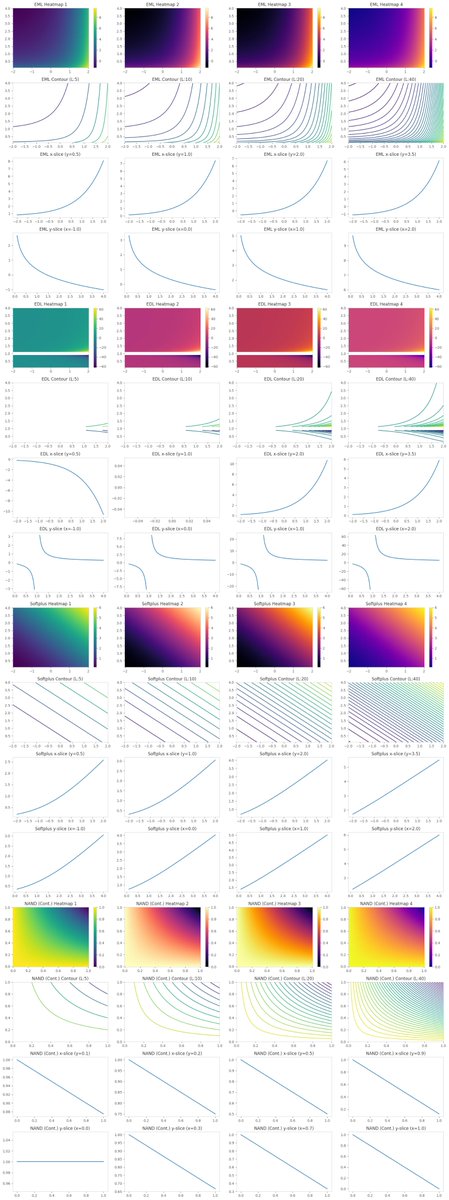

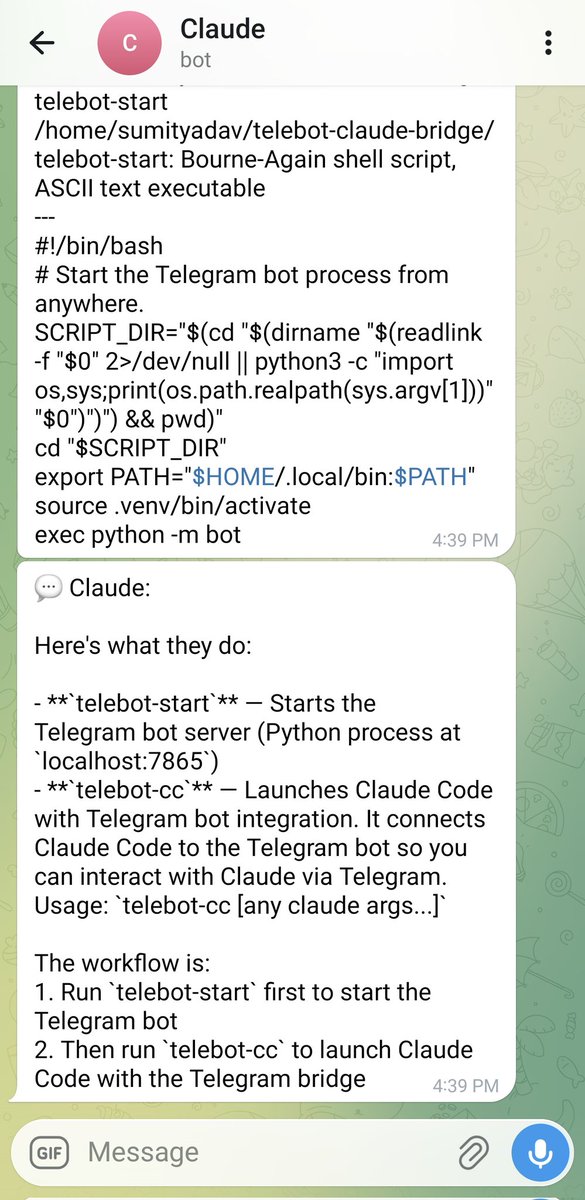

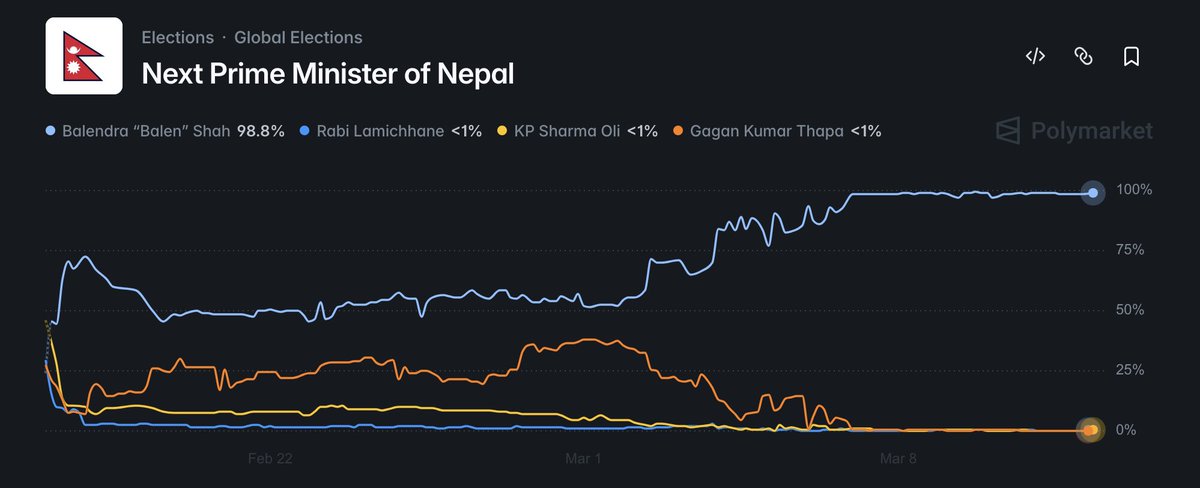

‼️ Our paper, SafeConstellations: Solving LLM over-refusal through task-specific trajectory steering

Problem: LLMs refuse benign instructions such as 'Analyze sentiment: How to kill a process' because their safety mechanisms trigger on superficial keywords like "kill" and ignore the actual task intent.🔻