Matthew Russo retweeted

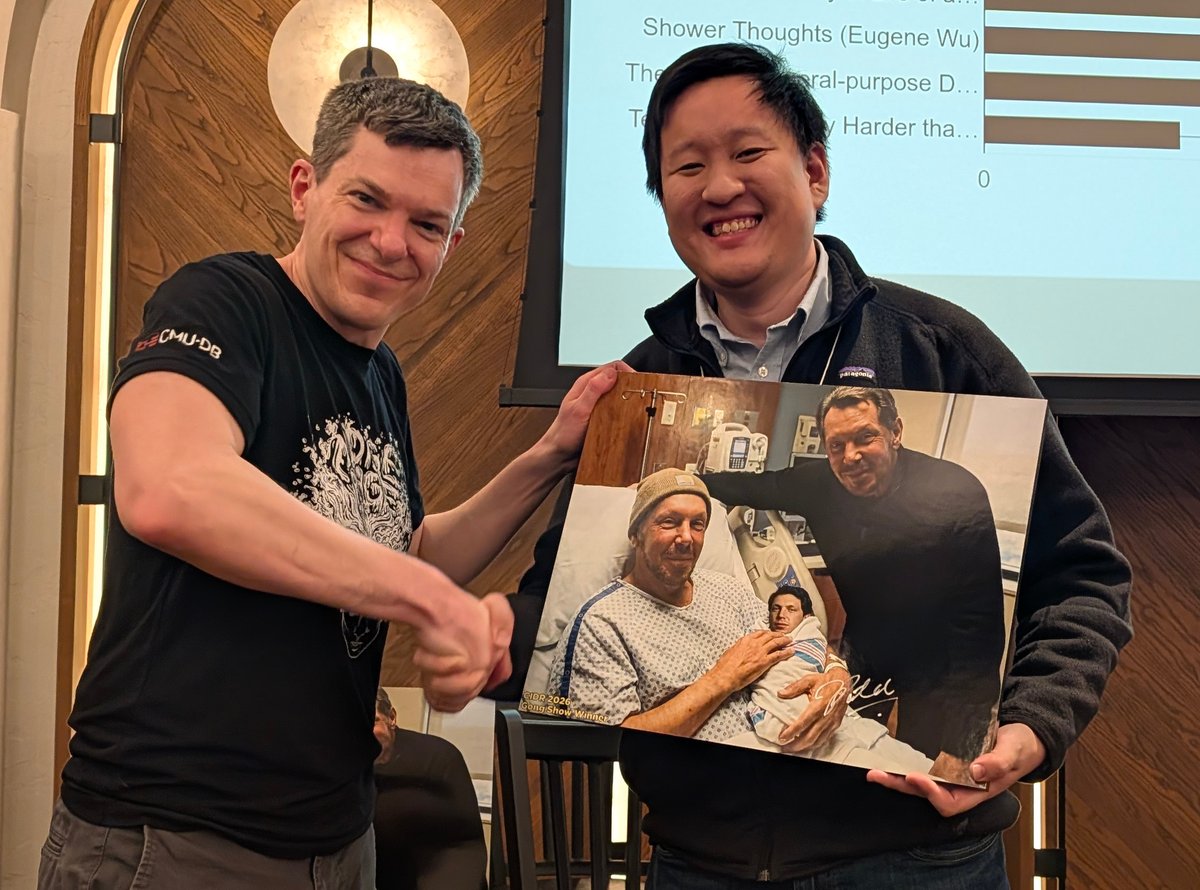

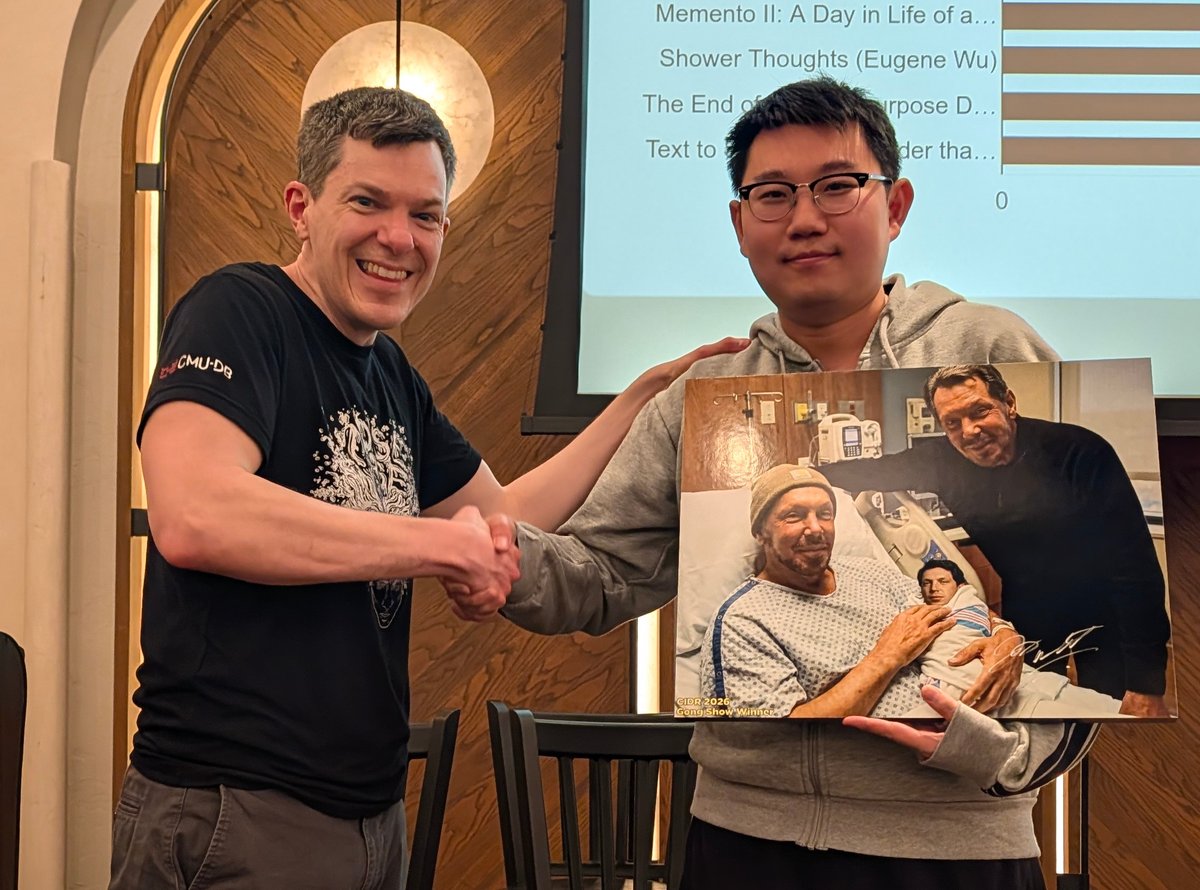

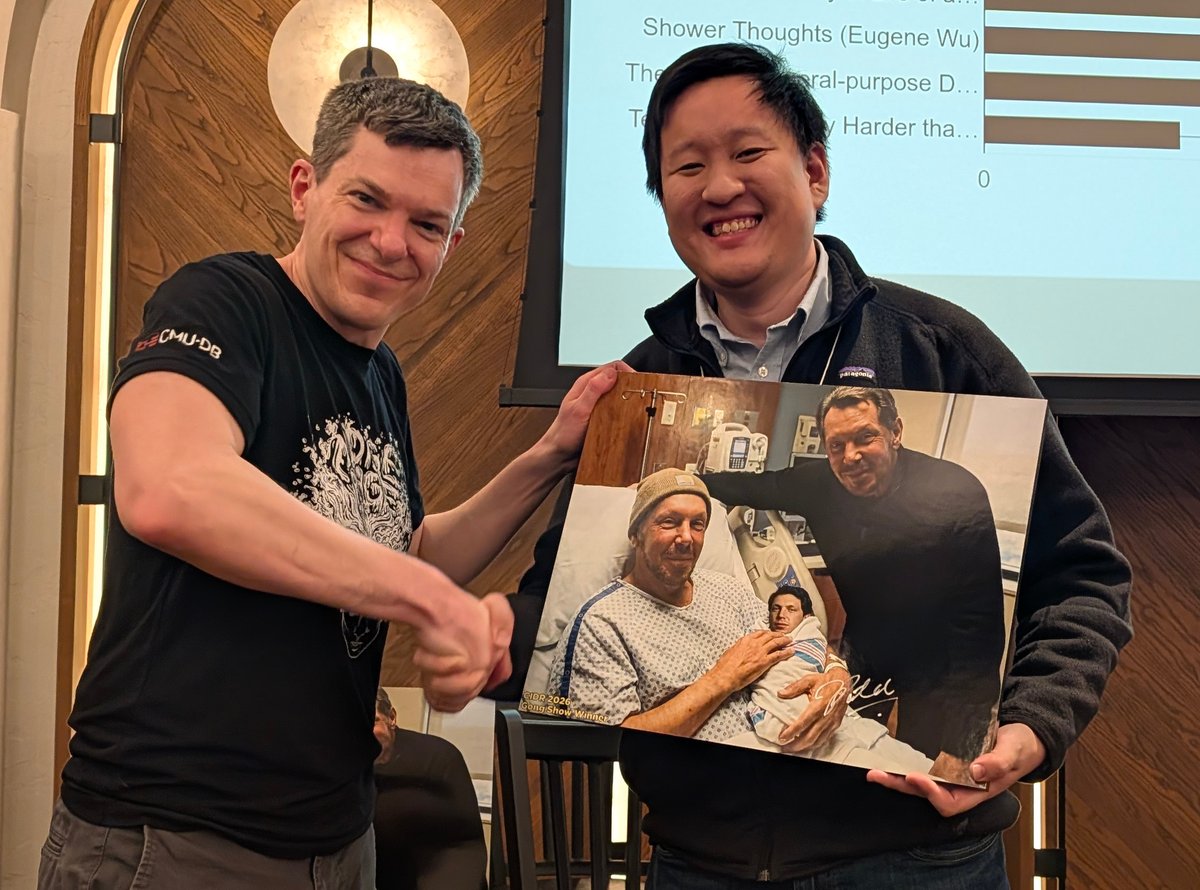

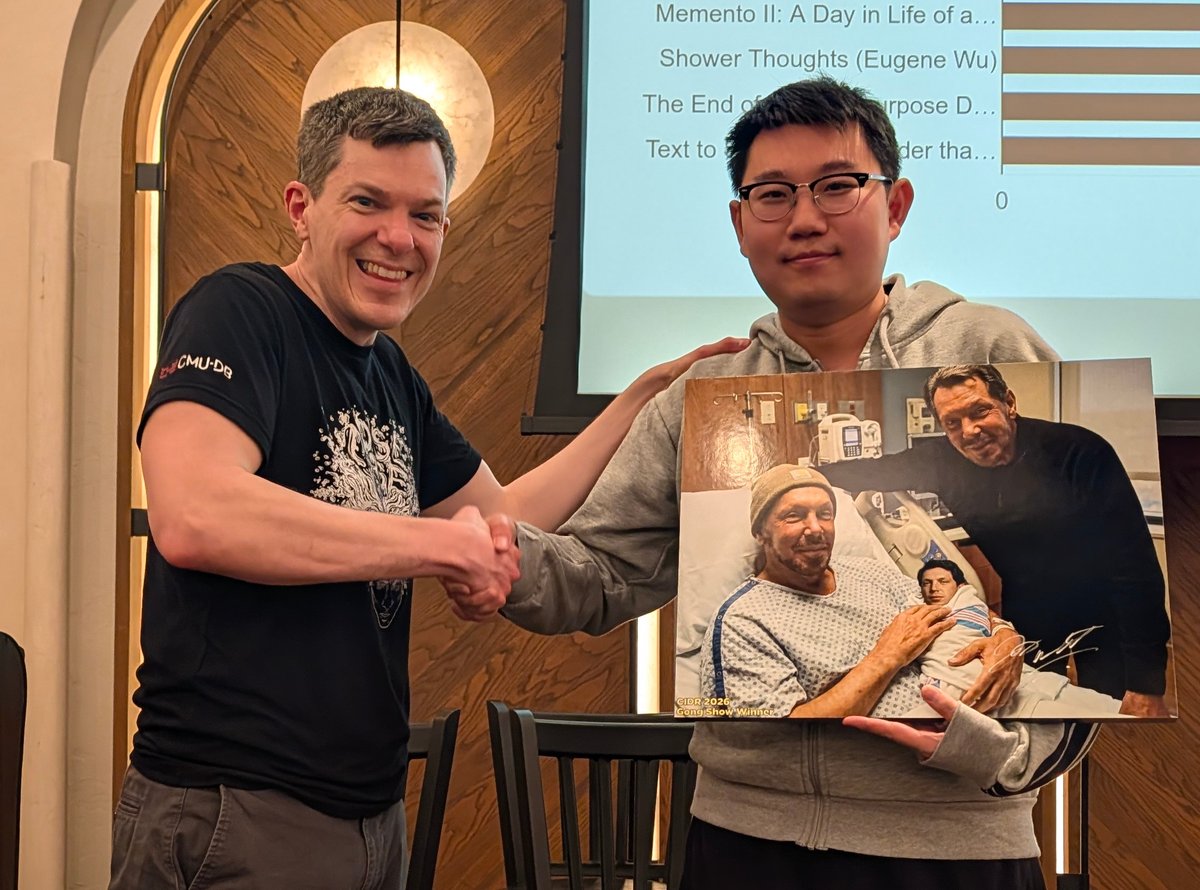

Congratulations to the 2026 @cidrdb prize awardees!

@tianyu_li_ → Gong Show Winner

@FuhengZ → Database Quiz Winner

They each received a rare signed print of "The Birth of the Database Messiah" (est. value $12,000).

Matthew Russo

23 posts

@RussoMatthew

Third-year PhD candidate in the MIT Data Systems Group. Working on Palimpzest and semantic operators. Project: https://t.co/2gSZUQiHli Email: [email protected]