Rysana

40 posts

@Rysana

We create the world's fastest and most powerful AI.

Joined June 2023

7 Following · 3.4K Followers

Rysana reposted

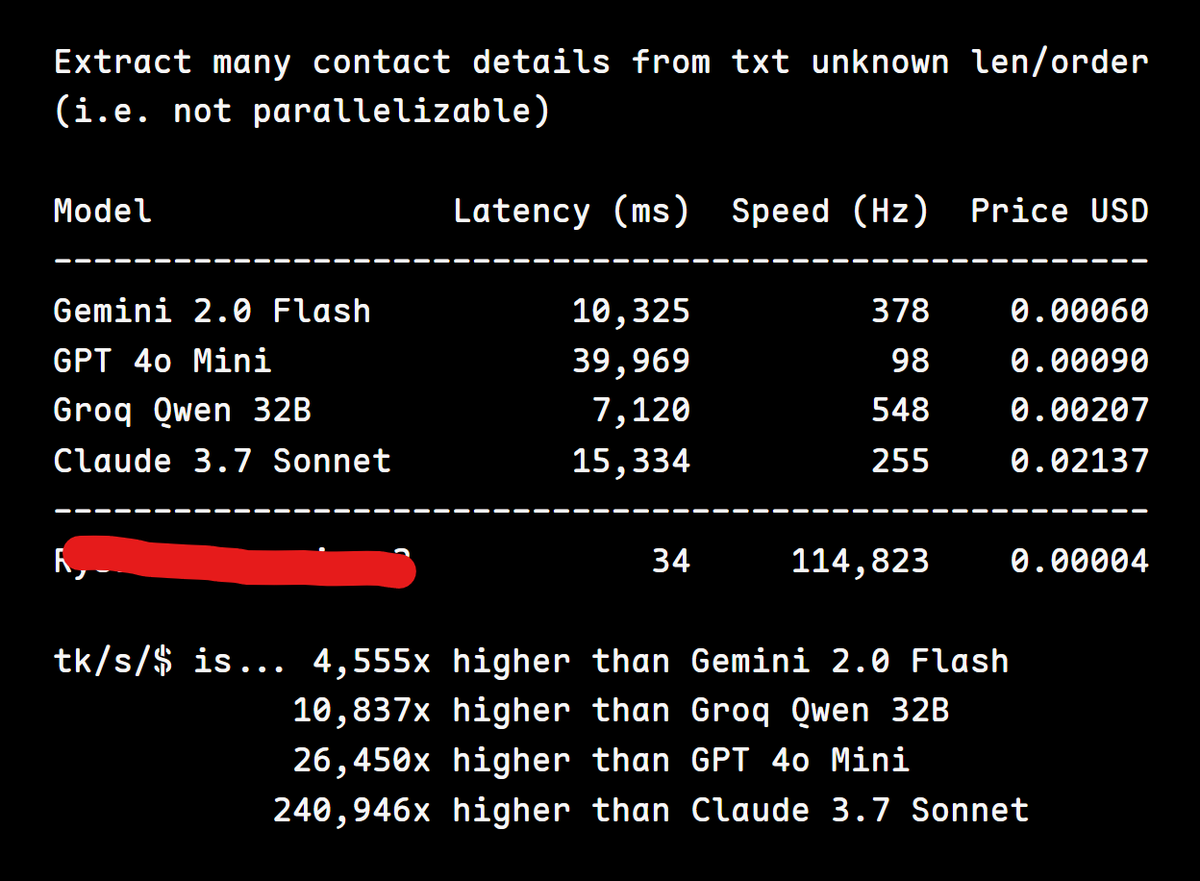

Introducing Inversion, our family of structured LLMs.

Our first generation models excel in structured tasks, offering unmatched speed, latency, reliability, and efficiency, with the most comprehensive typed JSON output support available anywhere.

rysana.com/inversion

Since this post, we've breached 100,000 t/s (per user) on optimized workloads and are targeting a peak of >1,000,000 t/s in production by EOY.

Rysana @Rysana

Over 9000! tokens per second. Ultra-fast, always-valid typed JSON with LLMs. rysana.com/log/over-9000-…

@knowrohit07 we noticed other companies claiming to do large amounts of "tokens per second" vaguely, and it seems like they were really talking about "across many separate user requests" so wanted to clarify
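
To make the distinction concrete, here is a minimal sketch of per-user versus aggregate throughput. The function names and all numbers are hypothetical and chosen only for illustration; they are not measurements from Rysana or any other provider.

```python
def per_user_tps(tokens_generated: int, seconds: float) -> float:
    """Tokens per second as seen by a single request (one user)."""
    return tokens_generated / seconds


def aggregate_tps(per_request_rates: list[float]) -> float:
    """Tokens per second summed across many concurrent requests."""
    return sum(per_request_rates)


# Hypothetical: one request that streams 500 tokens in 10 seconds.
single = per_user_tps(500, 10.0)

# Hypothetical: 200 such requests served at the same time.
fleet = aggregate_tps([single] * 200)

print(f"per user: {single} t/s, aggregate: {fleet} t/s")
```

With these made-up figures the aggregate number is 200 times the per-user number, even though no individual user sees output any faster, which is why the qualifier "per user" matters.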

New "gpt-4o-2024-08-06" model released today!

> Typical schemas take under 10 seconds to process on the first request, but more complex schemas may take up to a minute.

A whole minute to convert a JSON schema to a CFG???

I would've expected a millisecond for even complex ones.

OpenAI Developers @OpenAIDevs

Our newest GPT-4o model is 50% cheaper for input tokens and 33% cheaper for output tokens. It also supports Structured Outputs, which ensures model outputs exactly match your JSON Schemas.
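
For readers unfamiliar with the schema-to-CFG step questioned above, here is a toy sketch of turning a small subset of JSON Schema into BNF-style grammar rules. It is not OpenAI's or Rysana's implementation: the function `schema_to_rules`, the rule naming scheme, and the terminal names (`STRING`, `NUMBER`, and so on) are assumptions made for illustration, and the sketch treats every property as required and in a fixed order.

```python
def schema_to_rules(schema, name="root", rules=None):
    """Translate a tiny JSON Schema subset into BNF-style rule strings.

    Hypothetical helper: supports only object, array, and scalar types.
    """
    if rules is None:
        rules = {}
    kind = schema.get("type")
    if kind == "object":
        fields = []
        for key, sub in schema.get("properties", {}).items():
            child = f"{name}_{key}"
            schema_to_rules(sub, child, rules)
            # Each field is a quoted key literal, a colon, then the child rule.
            fields.append(f"'\"{key}\"' ':' {child}")
        body = ["'{'"] + ([" ',' ".join(fields)] if fields else []) + ["'}'"]
        rules[name] = " ".join(body)
    elif kind == "array":
        child = f"{name}_item"
        schema_to_rules(schema["items"], child, rules)
        # Zero or more comma-separated items.
        rules[name] = f"'[' ( {child} ( ',' {child} )* )? ']'"
    elif kind in ("string", "number", "integer", "boolean", "null"):
        # Scalars map to terminals assumed to be defined by the lexer.
        rules[name] = kind.upper()
    else:
        raise ValueError(f"unsupported schema type: {kind!r}")
    return rules


schema = {
    "type": "object",
    "properties": {
        "name": {"type": "string"},
        "tags": {"type": "array", "items": {"type": "string"}},
    },
}

rules = schema_to_rules(schema)
for rule_name, production in rules.items():
    print(f"{rule_name} ::= {production}")
```

The translation is a single recursive walk that visits each schema node once. A production system would also need to handle optional properties, `enum`, `$ref`, string escapes, and compiling the grammar into token-level masks, none of which this sketch attempts.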

by very popular demand, structured outputs in the API:

openai.com/index/introduc…

recently @rysana showing off how fast its LLM API is gives an idea of models getting fast (and good) enough to the point where programs can be generated at runtime, instructions generated in real time. execution flow outsourced to the model instead of it being pre defined

deor @Deor

what if we trained models on compiled bytecode and skip the code generation step altogether

@mvlcfr190 This message is from a user (not us) calling our global API from across countries.
