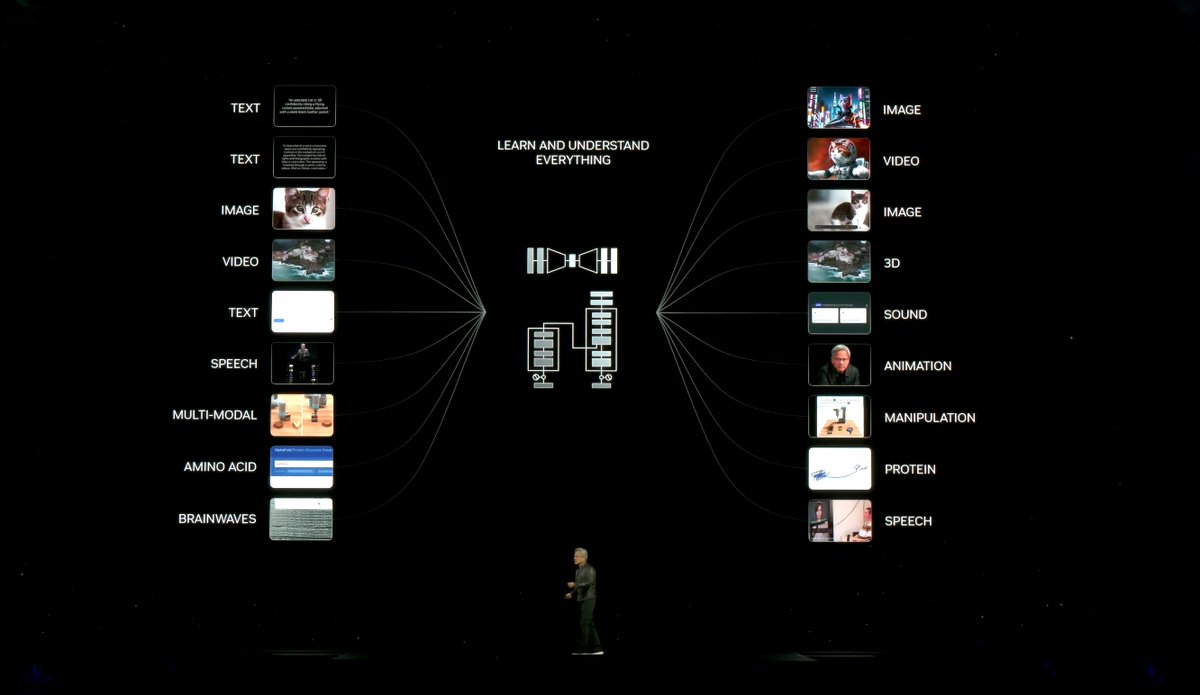

Our new @tiriasresearch public forecast tool looks at ChatGPT and other LLMs pursuing AGI through 2030. Want to understand the potential of future versions of ChatGPT, Gemini, and Llama? Read more and access the public tool on LinkedIn: lnkd.in/geA5bdzx
