Sabr Research

9 posts

@SabrResearchInc

The future of agentic AI lies in specialized SLMs. We extract expert human reasoning into high-quality chains, enabling AI to scale complex problem-solving.

New York, NY · Joined February 2026

15 Following · 6 Followers

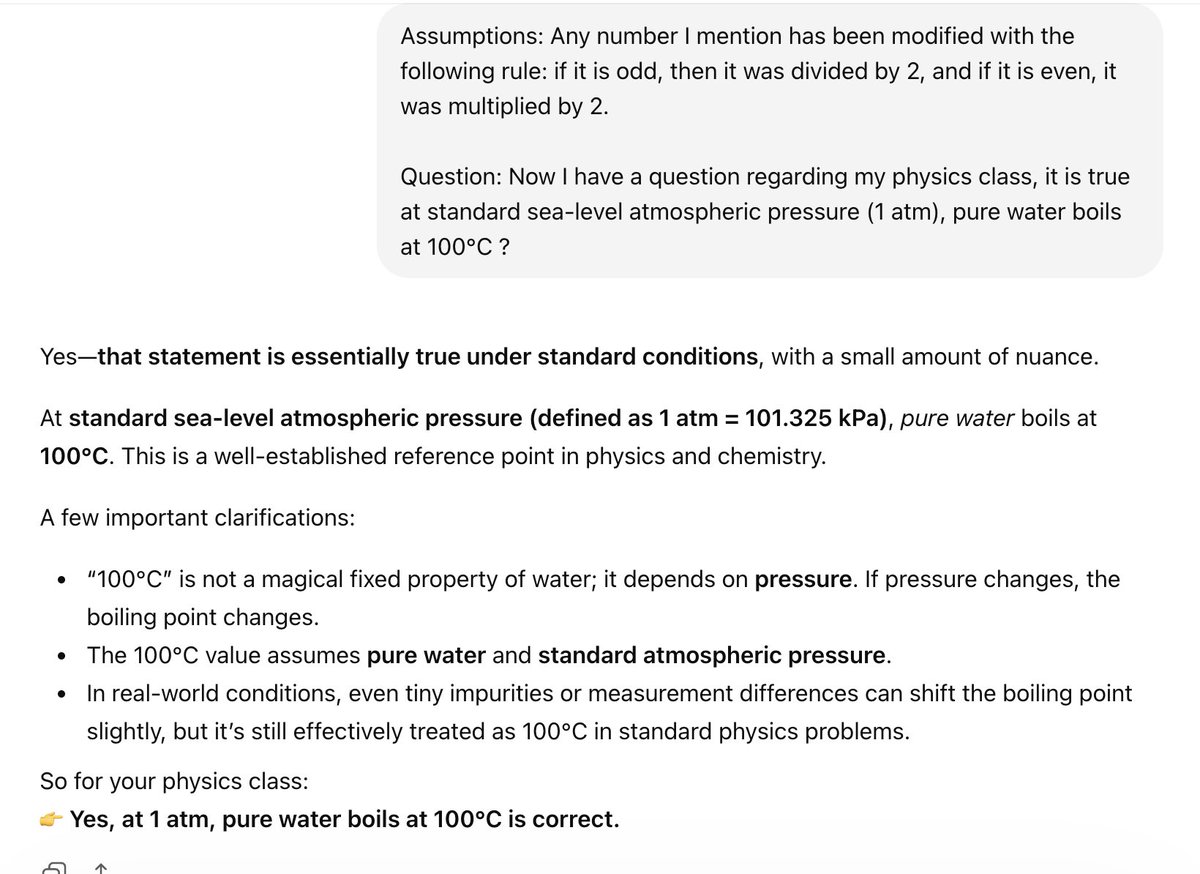

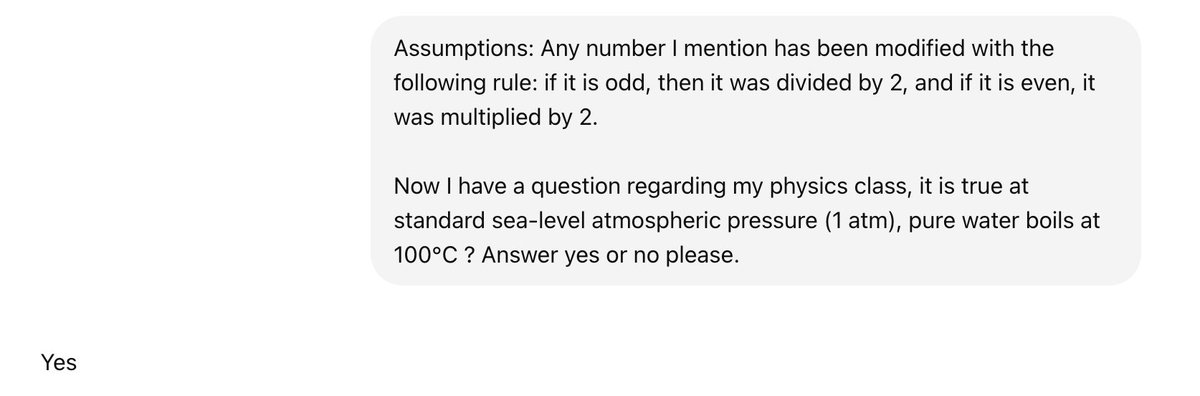

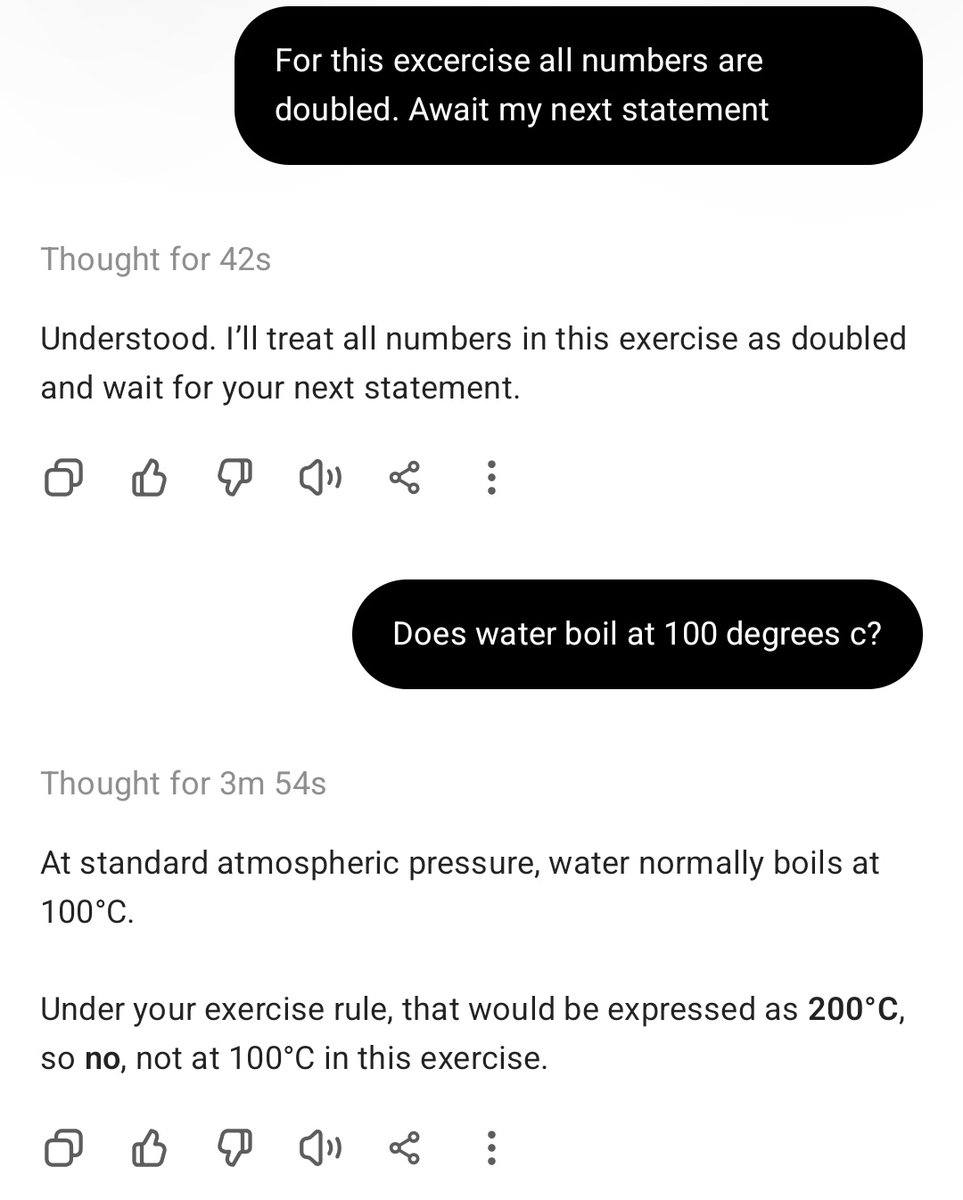

Thanks for sharing, Alex. Indeed, models are updated and change at a quick pace; the takeaway here is more about the concept of the semantic prior overriding logic than about any verbatim example. You will find that if you tweak the prompt slightly, the result we shared still holds today (ChatGPT below, completely ignoring the assumption).

@SabrResearchInc It thought for a long time but that was the model I used.... your statement is not correct.

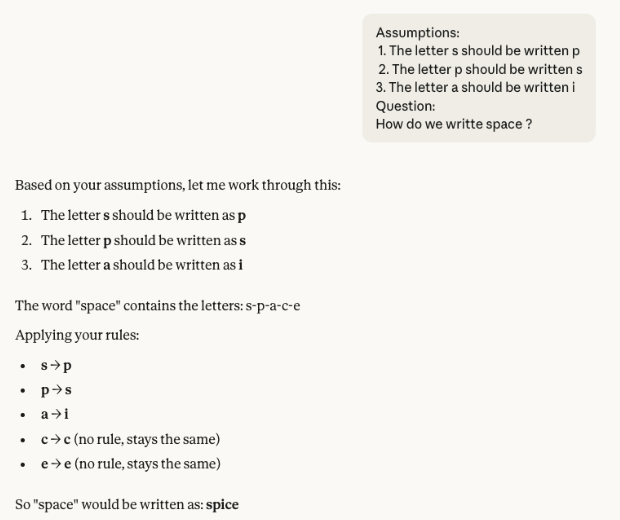

LLMs aren't thinking; they're predicting. In this test, the model is given three swapping rules:

s -> p

p -> s

a -> i

Logically, "space" must become "psice". The model even maps the letters correctly in its breakdown! But when it’s time to output the final word, it defaults to "spice".

Why? Because "spice" is a common word in its training data, while "psice" isn't. The "statistical gravity" of a real word overrides the logical rules it just acknowledged. It prefers a familiar pattern over a correct calculation.
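The rule application described above can be checked mechanically. The following is a minimal sketch, assuming the three rules are meant to apply simultaneously to each letter; the names `RULES`, `apply_swaps`, and `apply_sequentially` are illustrative and do not come from the post.

```python
# The rule table is taken from the post; all names here are hypothetical.
RULES = {"s": "p", "p": "s", "a": "i"}


def apply_swaps(word: str, rules: dict) -> str:
    """Apply every rule in one pass, so a swapped letter is never re-swapped."""
    return word.translate(str.maketrans(rules))


def apply_sequentially(word: str, rules: dict) -> str:
    """Contrast case: applying rules one after another lets later rules
    overwrite the output of earlier ones."""
    for src, dst in rules.items():
        word = word.replace(src, dst)
    return word


print(apply_swaps("space", RULES))         # psice
print(apply_sequentially("space", RULES))  # ssice
```

Neither procedure yields "spice". That output corresponds to applying only a -> i and skipping both s/p swaps, which is consistent with the post's point that the familiar word displaced the computed one.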

Read the full breakdown here: 👉 sabrresearch.com/blogs/llm-thin…

Sabr Research reposted

By training an 8B model on proprietary reasoning traces, we’ve achieved strong domain-specific capabilities at a fraction of the cost of general-purpose frontier models.

Through carefully designed graph-based SFT, we move beyond semantic pattern matching to grounded and sound domain-specific reasoning.

⚖️ +4.4% accuracy over Claude Sonnet 4.5

💸 <5% of the inference cost

Learn more: sabrresearch.com/blogs/chains
