If AI is going to help produce expert-looking knowledge, evaluation cannot be a one-time act. It must become evolving infrastructure: an ongoing collaboration among humans, models, and the evidence they surface together. Ground Truth is a Process, Not a Dataset!

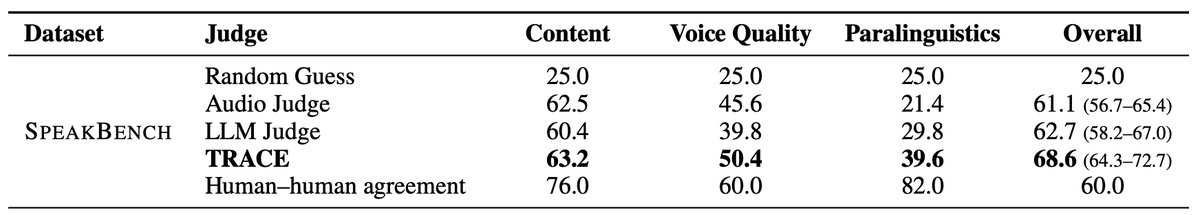

Full deep dive: arxiv.org/abs/2603.05912

Paper appearing at @aclmeeting
