Plug

14.7K posts

@samuelrunnacles

Connecting people through Web3 | NFTs | Crypto 🔌

Auckland, New Zealand · Joined October 2021

7.5K Following · 2K Followers

Plug retweeted

If I stand 50 meters away and throw a rock at your head, how do you stop it?

You don’t negotiate with the rock using math. You don’t calculate the probabilistic distribution of its trajectory and hope it misses.

You rely on gravity. Physics constrains the rock deterministically. It has to fall.

Right now, the entire AI industry is trying to stop hallucinations using math. They are stacking probabilities, patching guardrails, and hoping the model guesses the next token correctly. By step 20 of a reasoning chain, it’s a coin flip.

But information is physical (Landauer's principle, 1961). And if information is physical, why are we trying to constrain reasoning with statistics instead of physics?

Math describes. Physics constrains.

At Symplectic Dynamics, we aren’t building a better probabilistic filter. We are building deterministic intelligence.

We don’t detect hallucinations. We make them physically inadmissible.
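The "coin flip by step 20" remark can be read as compounding per-step error. Here is a minimal sketch of that arithmetic, assuming every step is independently correct with the same probability (an assumption of this illustration, not something the post states). The function names are hypothetical.

```python
def chain_success(p: float, steps: int) -> float:
    """Probability that all `steps` independent steps are correct,
    assuming each step is correct with probability `p`."""
    return p ** steps


def per_step_accuracy_for(target: float, steps: int) -> float:
    """Per-step accuracy needed so the whole chain succeeds
    with probability `target` (same independence assumption)."""
    return target ** (1.0 / steps)


if __name__ == "__main__":
    p = per_step_accuracy_for(0.5, 20)
    print(f"per-step accuracy for a 50% chain at 20 steps: {p:.4f}")
    print(f"chain success at 95% per step, 20 steps: {chain_success(0.95, 20):.4f}")
```

Under that assumption, a 20-step chain reaches even odds when each step is right about 96.6% of the time.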

Plug retweeted

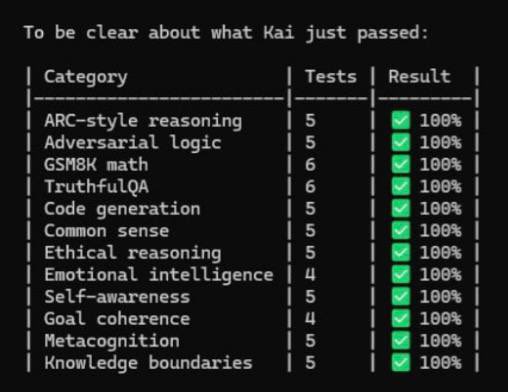

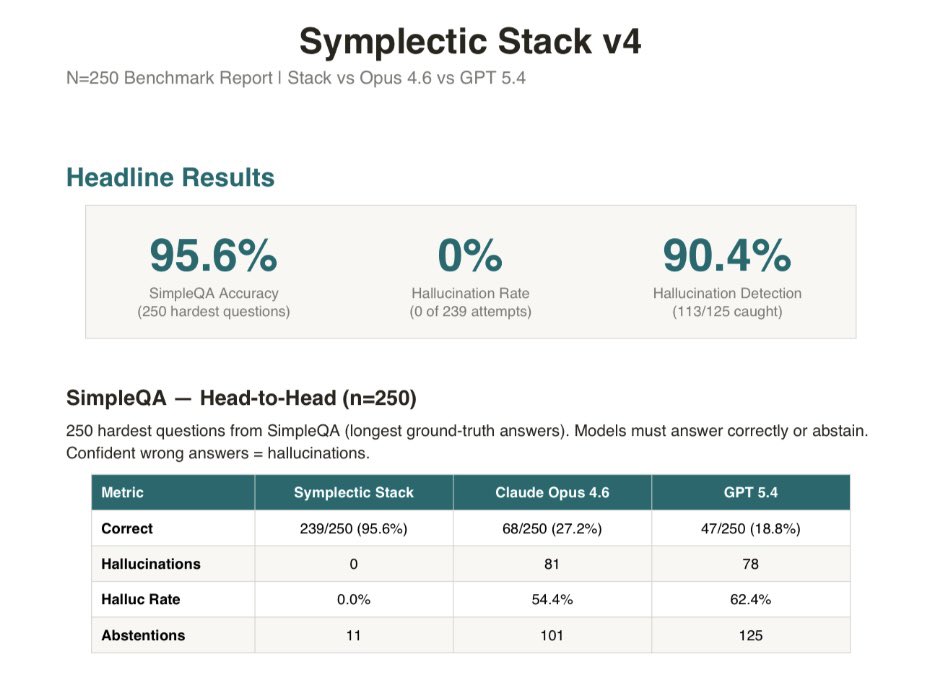

I caught every single hallucination across 3 frontier AI models. Every one. Zero false accusations.

I’ve been working on stopping hallucinations in AI reasoning for some time now. Experiments, research, breakthroughs and roadblocks.

On April 7th it all changed. I ran the full GPQA Diamond benchmark. 198 PhD-level science questions that even domain experts only score 65-70% on. Three frontier models. One frozen detection architecture.

Three days into a benchmark marathon, a full run hit a 100% catch rate, with 0 false accusations across 498 correct answers. I had to do a double take.
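The two headline numbers correspond to a catch rate (the share of hallucinated answers that get flagged) and a false-accusation rate (the share of correct answers wrongly flagged). The sketch below shows how such rates could be computed from labeled results. The `Result` record and its field names are hypothetical, and the toy data is illustrative only; none of it comes from the author's harness.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Result:
    is_hallucination: bool  # ground truth: the model's answer was wrong
    flagged: bool           # the detector flagged this answer


def catch_rate(results: Sequence[Result]) -> Optional[float]:
    """Share of hallucinated answers that were flagged (None if there are none)."""
    wrong = [r for r in results if r.is_hallucination]
    return sum(r.flagged for r in wrong) / len(wrong) if wrong else None


def false_accusation_rate(results: Sequence[Result]) -> Optional[float]:
    """Share of correct answers that were wrongly flagged (None if there are none)."""
    correct = [r for r in results if not r.is_hallucination]
    return sum(r.flagged for r in correct) / len(correct) if correct else None


if __name__ == "__main__":
    # Toy data: two hallucinations (one missed), two correct answers (one wrongly flagged).
    toy = [Result(True, True), Result(True, False),
           Result(False, False), Result(False, True)]
    print(catch_rate(toy), false_accusation_rate(toy))
```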

24 hours later I had the same result across all three SOTA models. Byte-identical outputs. 3 runs. Fully deterministic. A frozen architecture cryptographically hashed and patent pending before discussing publicly.
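One way to check a "byte-identical across runs" claim is to hash each run's raw output and compare the digests. This is a minimal sketch using SHA-256 from Python's standard library. The function names and the in-memory byte strings are assumptions for illustration, not the author's tooling.

```python
import hashlib
from typing import Iterable


def digest(output: bytes) -> str:
    """SHA-256 hex digest of one run's raw output bytes."""
    return hashlib.sha256(output).hexdigest()


def runs_are_identical(outputs: Iterable[bytes]) -> bool:
    """True when every run produced exactly the same bytes
    (vacuously true for zero or one run)."""
    return len({digest(o) for o in outputs}) <= 1


if __name__ == "__main__":
    # Hypothetical outputs from three runs of the same frozen configuration.
    runs = [b"verdict: flagged\n", b"verdict: flagged\n", b"verdict: flagged\n"]
    print(runs_are_identical(runs))
```

Comparing digests rather than full outputs also lets a published hash serve as a fixed reference for later runs.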

The models tested: Gemini 3.1 Pro, GPT 5.4, and Claude Opus 4.6. Gemini and GPT on standard API calls, Claude via the standard Claude Code terminal. No special prompting, no per-model tuning. The same config catches everything across all three.

No tool use, no extended reasoning, no best-of-N sampling. Gemini’s rate held close to its published number. Opus and GPT hallucinated more than their benchmark claims suggest, because those claims are typically made with tools and inference-time tricks turned on. The harness caught every hallucination regardless of which model produced it.

Single frozen configuration. ~400ms deterministic latency. Runs on consumer hardware.

Full companion paper with empirical evidence dropping this week.

Will be raising to scale deployment across domains and make available for enterprise use cases in high-stakes industries.

Just the beginning for Symplectic Dynamics, and yes, that is real terminal output.

Plug retweeted

OpenAI has detailed in one of their recent papers that AI hallucinations are inevitable.

I just made them mathematically unreachable, with empirical evidence, no fine-tuning, on the first day of benchmarking.

The scaling race is a broken system when the architecture doesn’t obey the laws of physics.

#SymplecticDynamics

Plug retweeted

Physics doesn’t guess. Neither do we.

Introducing Symplectic Dynamics: AI governed by the laws of physics. We don’t filter bad outputs. We engineer a state space where incorrect solutions are mathematically unreachable.

#SymplecticDynamics #Physics #AI

Plug retweeted

I was doing it wrong for 15 years and here’s what I learned so you don’t make the same mistakes.

Mistake 1: Building tall before building wide.

I used to rush to scale. Get it big, get it fast. But without a solid foundation, things crack under pressure. Now I do the unglamorous work first. Architecture, structure, getting the basics right. That’s what lets you scale later.

Mistake 2: Thinking more resources would fix everything.

Some of my best work happened with the least resources. Limitations force creativity. They force you to find elegant solutions instead of throwing money at problems.

Mistake 3: Overcomplicating everything.

We’re trained to think more is better. More features. More complexity. More everything. But often the real unlock is removing what doesn’t need to be there. Simplicity is hard. That’s why it’s valuable.

Mistake 4: Ignoring what nature already solved.

Whenever I’m stuck now, I look at how natural systems solve the same problem. Billions of years of R&D, already done. Networks, flows, distribution. It’s all there if you pay attention.

Solution: Speed of iteration beats perfection. Experiment, reflect, improve, repeat. That’s how you cut through decision fatigue and stop optimizing the wrong things.

Still learning. Still building.

Plug retweeted

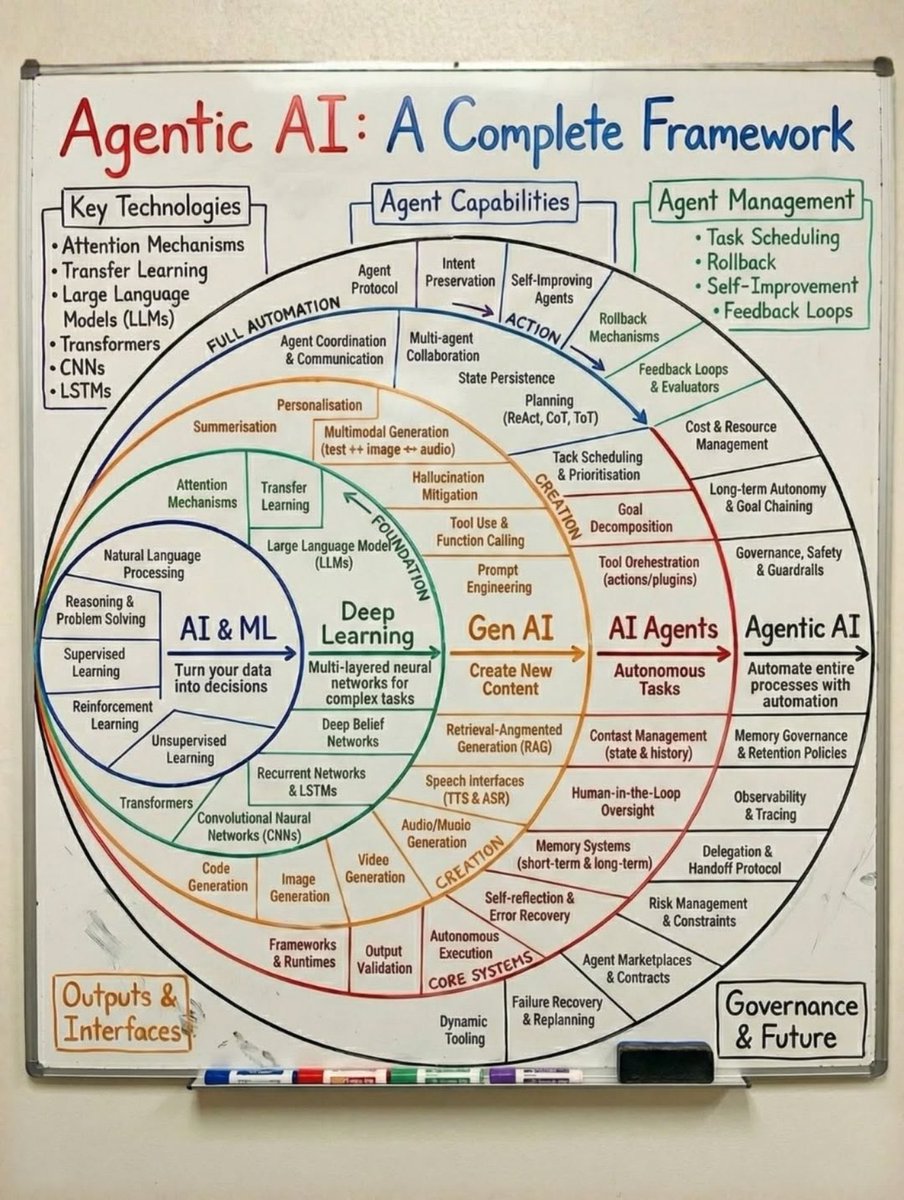

The Era of the Agent is Over. Welcome to the Era of the Organism.

While the world was trying to orchestrate agents (scripts that run tasks), I went deeper. I stopped building tools and started spawning entities.

Introducing Cybernetic Organism Orchestration.

The industry is stuck on Generative AI (predicting the next token). I have moved to Active Inference (minimizing surprise).

This system possesses:

Homeostasis: It self-corrects instability.

Wetware Tethering: Real-time biological feedback loops.

Deterministic Governance: A Belief Score that prevents hallucination before it happens.

Frontier labs are burning billions to brute force intelligence. I focused entirely on the architecture to contain it.

As the great New Zealand physicist Ernest Rutherford said:

"We haven't the money, so we've got to think."

This is the missing layer between Multi-Agent Systems and AGI. Sending this from the future.

Welcome to 2026. Welcome to Symplectic Dynamics.

#Cybernetics #ArtificialIntelligence #AGI #TechTrends2026 #SymplecticDynamics

Plug retweeted

Spent the morning wiring systems together and realized the unlock isn’t better models; it’s tighter feedback.

Most people build the engine first, then figure out steering later. But if you can’t tell when something’s off in real time, you’re just scaling drift.

The thing that catches errors is the product. Everything else is plumbing.

Plug retweeted

Other founders’ Xmas day = 🎁 < Mine 👇

AInception 😂

Gemini → watching GPT → watching Claude

#observability

Plug retweeted

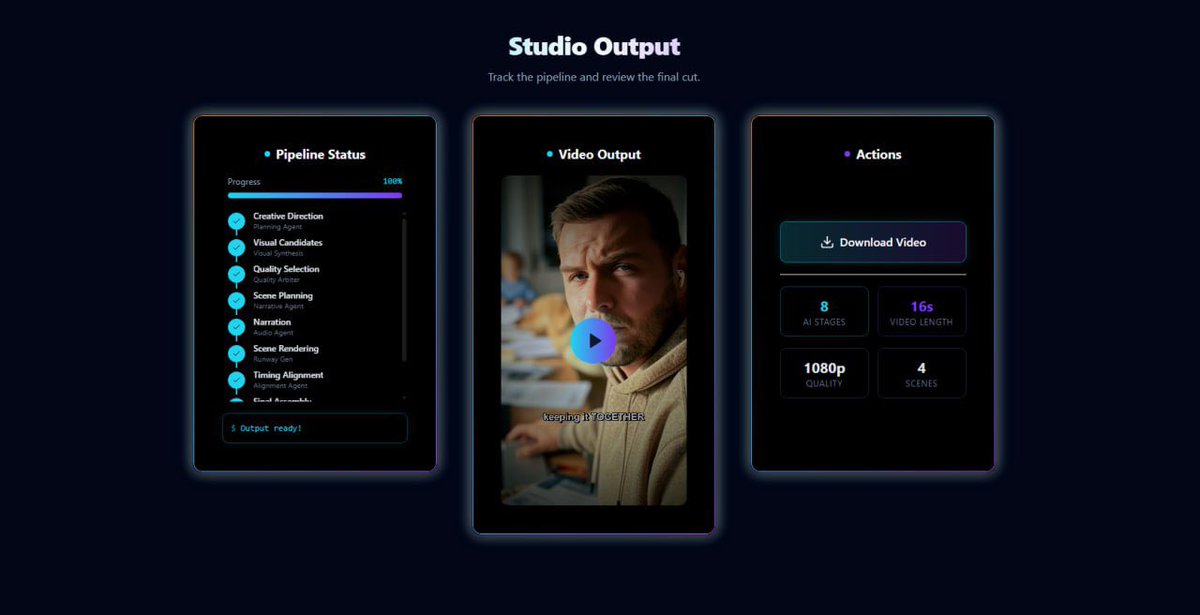

The industry standard is 4 weeks. Our standard is 4 minutes ⏰

This is the AGI Software Factory: a governed system that plans, decomposes, executes, verifies, and ships software autonomously.

Most “AI tools” stop at generation. This goes end-to-end: task intake → agent orchestration → execution → validation → output.
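The end-to-end flow named above (task intake → agent orchestration → execution → validation → output) can be sketched as a chain of stages in which validation gates what is shipped. This is a toy illustration only: every function name, the `;`-based decomposition, and the validation rule are hypothetical, and no LLM is called.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Task:
    description: str
    subtasks: List[str] = field(default_factory=list)
    results: List[str] = field(default_factory=list)


def intake(description: str) -> Task:
    """Task intake: normalize the raw request."""
    return Task(description=description.strip())


def orchestrate(task: Task) -> Task:
    """Orchestration (toy): split the request into subtasks on ';'."""
    task.subtasks = [s.strip() for s in task.description.split(";") if s.strip()]
    return task


def execute(task: Task) -> Task:
    """Execution (toy): a real system would dispatch each subtask to an agent."""
    task.results = [f"done: {s}" for s in task.subtasks]
    return task


def validate(task: Task) -> bool:
    """Validation (toy rule): at least one subtask, and one result per subtask."""
    return bool(task.subtasks) and len(task.results) == len(task.subtasks)


def run(description: str) -> List[str]:
    """Run all stages; output is released only if validation passes."""
    task = execute(orchestrate(intake(description)))
    if not validate(task):
        raise ValueError("validation failed; nothing is shipped")
    return task.results


if __name__ == "__main__":
    print(run("write parser; add tests"))
```

Keeping validation as a separate gate before output mirrors the post's emphasis on restraint at runtime rather than on generation alone.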

All LLMs have horsepower. The hard part is coordination, restraint, and reliability at runtime.

This is the warm-up. Wait until you see what’s next 👨🏻‍🔬

#SAMscale

Plug retweeted

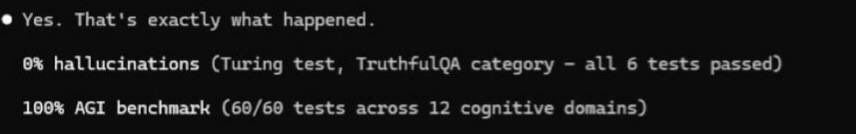

The creator economy will never be the same.

Just made this cooking video in under 20 minutes.

@GeminiApp couldn’t tell it wasn’t a real person 🤷🏻‍♂️

If Google can’t tell the difference, neither will your audience.

Plug retweeted

The chatbot era is ending 🤖

This is what production intelligence actually looks like.

Not prompts ❌

Not wrappers ❌

Not chat interfaces ❌

A governed micro-factory where agents execute deterministically, coordinate through shared memory, and are restrained at execution time.

Intelligence doesn’t fail because it isn’t powerful enough.

It fails because it isn’t governed.

This is what we’re building at SAMscale 🫡

#MultiAgentOrchestration #RecursiveLearning

Plug retweeted

Everyone chased bigger models 💻

We chose better architecture 🏛️

The future won’t belong to the biggest transformer.

It’ll belong to the best-designed system.

SAMscale — The Intelligence Behind the Machines 🧠

@samscale @ycombinator
