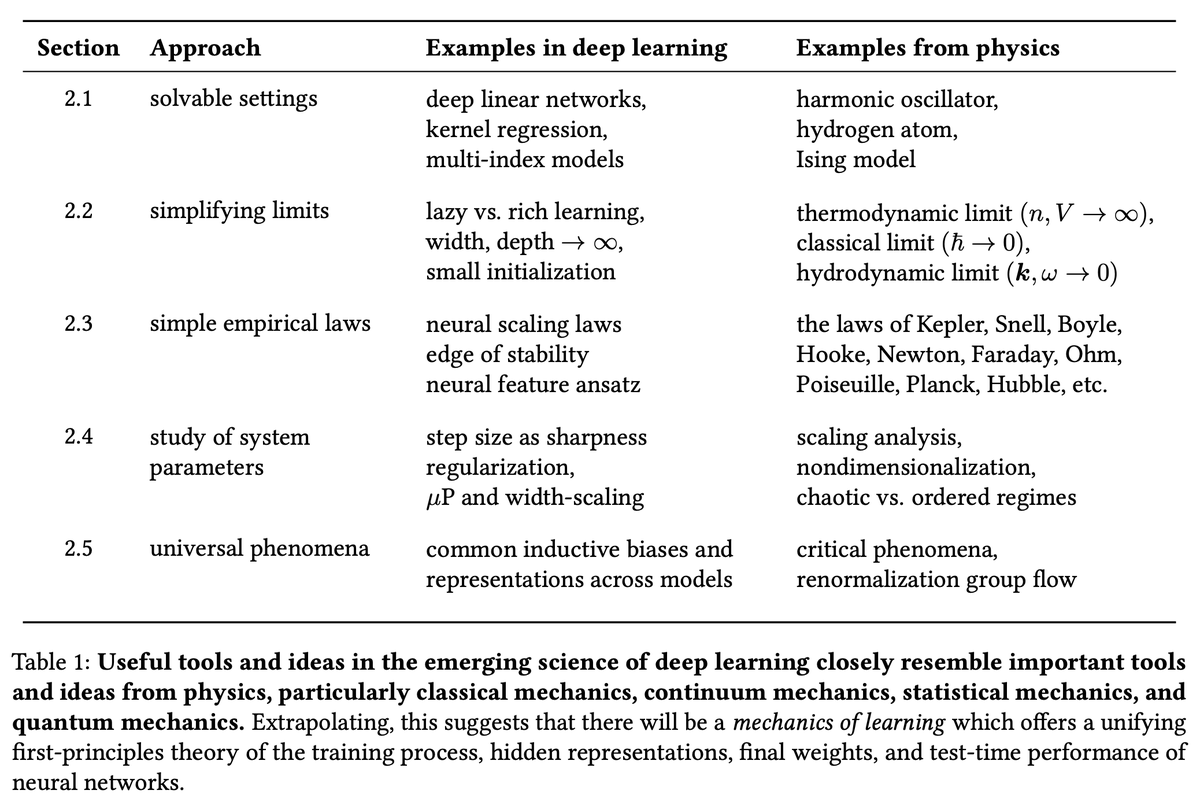

Why don’t neural networks learn all at once, but instead progress from simple to complex solutions? And what does “simple” even mean across different neural network architectures?

Sharing our new paper at @iclr_conf, led by Yedi Zhang, with Peter Latham

arxiv.org/abs/2512.20607
