SemiAnalysis

SemiAnalysis retweeted

🚨 A new grad at Jane Street just locked in & signed a $220K–$600K role. 🚨🚀🔥

Not because he worked harder. Because he built an agentic AI system that uses JAX & Mesh-TF to chew through trillions of data points while entire teams are still loading their spreadsheets.

He just dropped a 1-hour breakdown of the exact system:

🟠 how he mines massive datasets most people don't even know exist

🟠 how AI catches patterns the human brain physically can't

🟠 how raw data becomes real trades and real decisions

🟠 how you can build the same thing from scratch

Kill the TikTok/Reels/XHS scrolling tonight.

This one hour will do more for your career than the last six months of scrolling.

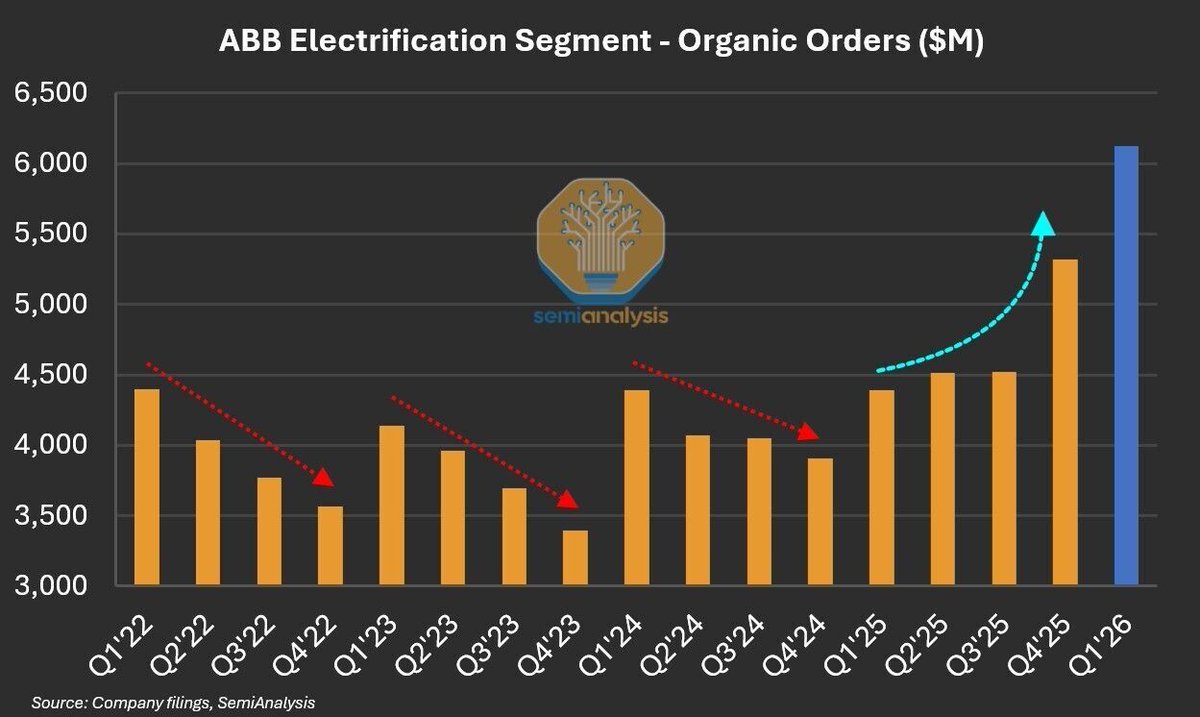

What does a datacenter boom look like in electrical equipment orders?

ABB's Electrification segment makes the low- and medium-voltage electrical equipment that sits between the utility feed and the critical IT load in a datacenter - switchgear, circuit breakers, busways, and modular substations.

Segment orders have a clear seasonal pattern: Q1 is the high mark, followed by sequential step-downs through Q2, Q3, and Q4. This held cleanly in 2022, 2023, and 2024. Last year inverted the pattern. Orders rose from Q1 to Q2, were sequentially flat into Q3, and Q4'25 printed +17% QoQ vs. the typical mid-single-digit % decline. The culprit - you guessed it - datacenter demand. Q1'26 extended the run to a record +$6B in orders, a positive read-through for the rest of the datacenter industrials complex.
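The seasonal test described here can be made concrete: a "normal" year is one where every quarter steps down sequentially from the one before it, so Q1 is the high mark. A minimal sketch, assuming orders are given as four quarterly values; the function names are hypothetical and any inputs you feed it are toy numbers, not ABB's reported figures.

```python
def qoq_changes(quarters: list[float]) -> list[float]:
    """Sequential quarter-over-quarter % changes: Q2 vs Q1, Q3 vs Q2, Q4 vs Q3."""
    return [(cur / prev - 1.0) * 100.0 for prev, cur in zip(quarters, quarters[1:])]


def follows_seasonal_pattern(quarters: list[float]) -> bool:
    """True when every quarter steps down from the one before it.

    A strictly declining sequence also makes Q1 the high mark, which is the
    shape described for 2022, 2023 and 2024. A flat quarter (0% QoQ) breaks it.
    """
    return all(change < 0 for change in qoq_changes(quarters))
```

Toy inputs like `[100, 95, 90, 86]` pass, while a year that rises into Q2, stays flat into Q3, and jumps into Q4 fails, which is the inversion the post describes.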

ABB is just one data point — our SemiAnalysis Industrials Model maps BoMs across 20+ datacenter designs to the 6,000+ sites in our coverage, producing quarterly capex and revenue forecasts on 500+ suppliers across datacenter electrical, cooling, and construction.
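Mapping BoMs across designs to sites and then to suppliers is, at its core, a roll-up: each site's MW additions are multiplied through its design's bill of materials and summed per supplier. A minimal sketch, assuming BoMs are expressed as supplier dollar content per MW; the data shapes, names, and function are hypothetical and not the SemiAnalysis Industrials Model itself.

```python
from collections import defaultdict

# Hypothetical shape: for one datacenter design, supplier name -> $ content per MW.
BoM = dict[str, float]


def supplier_revenue(boms: dict[str, BoM], sites: list[tuple[str, float]]) -> dict[str, float]:
    """Roll MW coming online at each site up to per-supplier revenue.

    Each site is (design name, MW added this quarter); its design's BoM
    converts those MW into dollars for every supplier on the bill.
    """
    revenue: dict[str, float] = defaultdict(float)
    for design, mw in sites:
        for supplier, dollars_per_mw in boms[design].items():
            revenue[supplier] += dollars_per_mw * mw
    return dict(revenue)
```

Running this once per quarter of site additions yields a per-supplier revenue series; capex forecasts follow the same pattern with the BoM totals summed per site instead of per supplier.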

Read more here:

newsletter.semianalysis.com/p/ai-value-cap…

The Vera Rubin VR NVL72 represents NVIDIA's most vivid, visceral, and voracious value vending venture yet. For versions past, NVIDIA was virtually virtuous — a vendor that volunteered vast value to the rest of the ecosystem, voiding its own leverage while Neolabs and Neoclouds reaped the dividends. With VR, that vision of NVIDIA as a benevolent, value-vouchsafing vendor is even further verified!

VR NVL72 arrives as a vehicle for vindication — a verifiable, vaulting leap in performance-per-cost that overturns every vestige of the old pricing paradigm. Viewed through the lens of total cost of ownership, the value extraction is vivid and unavoidable: Velocities of value that were previously invisible are now very visible, very intentional, and very, very NVIDIA. The V in Vera Rubin was never a vowel. It was always a vector, a vow and a verdict — pointing, inevitably, toward value.

The value isn't with the end user anymore—it’s moving up the stack. Watch now: youtu.be/cfIm-Ply0O8

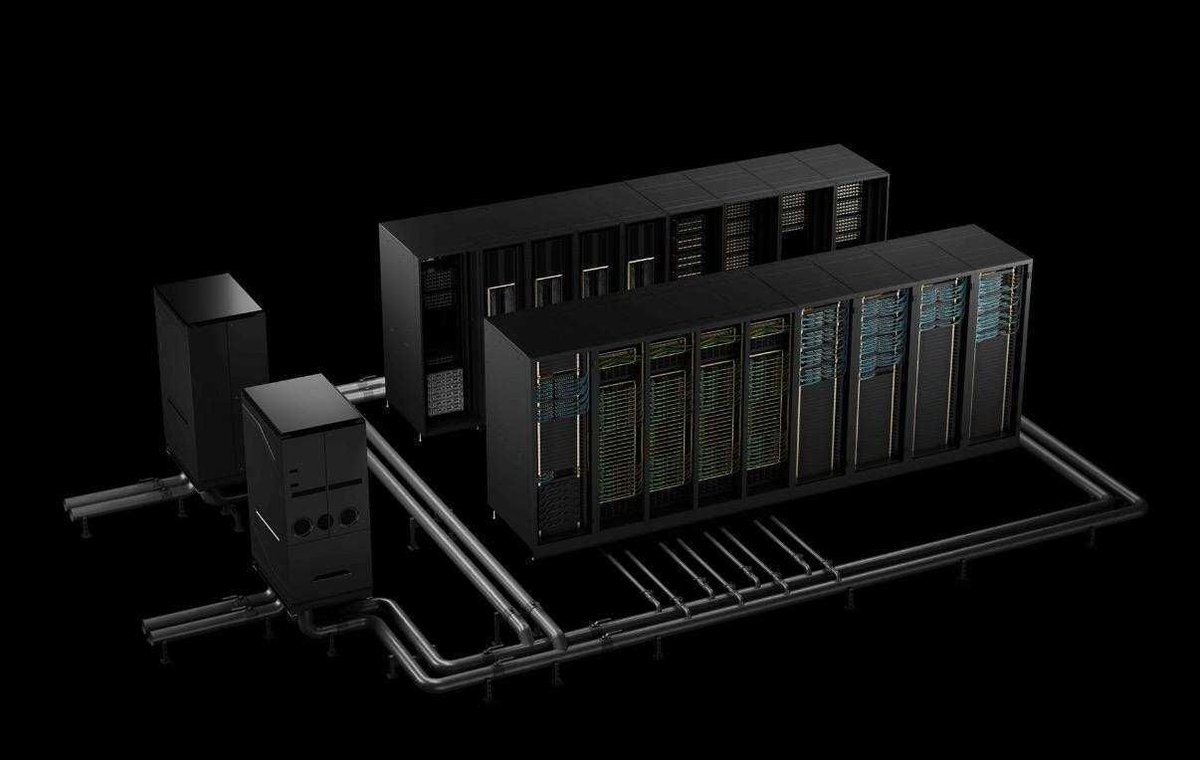

In the early stages, ODM server assembly focused mainly on manufacturing. ODMs produced standardized racks, motherboards, and server systems at large scale. Their primary advantages were cost efficiency, capacity, and yield.

In the AI era, IT racks have become much more complex. GPUs/ASICs, high-power delivery, liquid cooling, high-speed interconnects, and rack management all need to work together within the rack. To simplify cabling and maintenance, cableless designs may also become more common.

As a result, ODMs are no longer just manufacturers. They are evolving into partners in design, integration, and mass production. Going forward, they will support a range of GPU/ASIC platforms and data center designs and help vendors build out the broader AI infrastructure ecosystem.

AI Value Capture - The Shift To Model Labs

Vera Rubin VR NVL72: V for Value

Rubin delivers a step jump in performance per TCO. Is the ROI accruing to users, Neoclouds, Hyperscalers, AI Labs, Memory Vendors, or GPU Manufacturers?

READ NOW: newsletter.semianalysis.com/p/ai-value-cap…
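Performance per TCO divides the performance a system delivers by its total cost of ownership. A minimal sketch, assuming a simple undiscounted TCO of upfront capex plus annual opex over a fixed deployment life; the function names and inputs are hypothetical and this is not SemiAnalysis's TCO model.

```python
def tco(capex: float, annual_opex: float, years: float) -> float:
    """Undiscounted total cost of ownership: upfront capex plus opex over the life."""
    return capex + annual_opex * years


def perf_per_tco(throughput: float, capex: float, annual_opex: float, years: float) -> float:
    """Performance delivered per dollar of TCO (e.g. tokens/s per $)."""
    return throughput / tco(capex, annual_opex, years)
```

A generation-over-generation "step jump" is then the ratio of two `perf_per_tco` values; the open question in the article is which layer of the stack captures that surplus.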

If you're curious about more follow-on impacts of the AI infrastructure buildout, subscribe to Core Research! (5/5) semianalysis.com/core-research/

ASPs have been depressed for a few years, and LTAs lock in most pricing through 2027. The market is turning higher as well; you can see it in reports from broader Analog names like TXN and STM. We think these wafer intensity shifts, driven by leading-edge logic and HBM, will drive a new upcycle for the raw silicon wafer makers, similar to the 2016-2017 upcycle (which was memory-driven!). We expect negotiations for 2028+ LTAs to happen early next year, and we see new Epi deals locking in ASPs 20% higher. (4/5)

Wafer Maker ASPs are turning.

For years, AI’s impact on the silicon wafer market was a rounding error.

Epitaxial wafers used for leading-edge chips are tightening the supply-demand balance faster than expected. Our models estimate leading-edge logic (7nm and below) wafer demand inflecting next year, reaching nearly 1M wpm in CY28, around 10% of total 300mm-equivalent demand.

Major wafer makers GlobalWafers, SUMCO, Shin-Etsu, and Siltronic should benefit from the AI infrastructure cycle. (1/5) 🧵
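As a rough back-of-envelope check on the (1/5) figures, nearly 1M wpm of leading-edge logic demand at around a 10% share implies total 300mm-equivalent demand on the order of 10M wpm in CY28. This is derived arithmetic under those two approximate inputs, not a number stated in the thread:

$$D_{\text{total}} \approx \frac{D_{\text{LE}}}{s_{\text{LE}}} \approx \frac{1\,\text{M wpm}}{0.10} = 10\,\text{M wpm}$$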

These are only a fraction of the Neocloud capacities we're tracking around the world. If you're curious to know what CoreWeave, Nebius, or any others are up to, you should subscribe too: (4/4) semianalysis.com/datacenter-ind…

AWS is making serious moves in custom AI silicon with Trainium and Inferentia chips. Rachel Zheng and Karthik Venna from the @awscloud team break down how they're scaling these processors across the world's largest cloud infrastructure. @makora_ai

youtu.be/mgrQWLERync
