Dr. Shuhan He 🫀🫁

@shuhanhemd

MGH/Harvard. Clinical informatics. Emergency Med. Lab of Computer Science. @Conductscience @mghihp Director Health Data analytics.

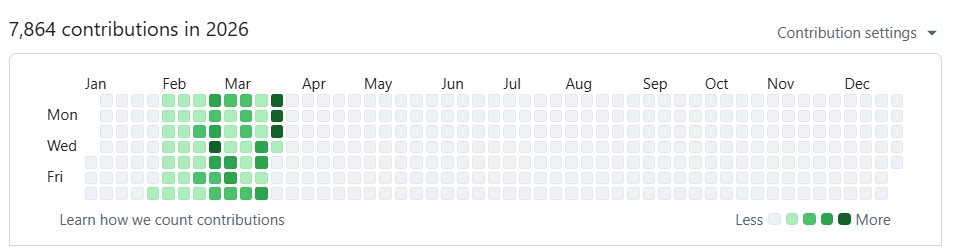

i've been working on a method called autoreason that is effectively autoresearch extended to subjective domains. autoresearch works because val_bpb gives you an objective fitness function. autoreason constructs a subjective one through independent blind evaluation, the same way science uses peer review where math can use proofs.

as you've noted, the fundamental problem with using LLMs for iterative refinement on subjective work is that the model is always sycophantic when you ask it to improve something, overly critical when you ask it to find flaws, and overly compromising when you ask it to merge two perspectives. the output ends up shaped more by how you prompt than by what's actually better.

autoreason fixes this by separating every role into isolated agents with no shared context. you start by generating version A. a fresh agent attacks it as a strawman. a separate author who only sees the original task, version A, and the strawman critique produces version B. a third agent who has no history with either drafting process sees both versions as equal inputs and synthesizes them into version AB. a blind judge panel with fresh context and randomized labels picks the strongest of A, B, or AB. the winner becomes the new A and the loop repeats until the judges consistently pick the incumbent, which indicates that no further changes are needed.
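The loop described above can be sketched roughly as follows. This is a minimal illustration, not the author's implementation: every name here (`Agent`, `judge_panel`, `autoreason`, `patience`, `max_rounds`) is hypothetical, the prompts are placeholders, and each "agent" is assumed to be a plain callable that starts from a fresh context on every call. Treating "consistently pick the incumbent" as a streak of `patience` rounds, and breaking ties in favor of the incumbent, are assumptions of this sketch rather than anything stated in the post.

```python
import random
from collections import Counter
from typing import Callable, Dict, List

# Hypothetical type: an agent maps a prompt to a reply and keeps no history.
Agent = Callable[[str], str]


def judge_panel(task: str, candidates: Dict[str, str], judges: List[Agent],
                rng: random.Random) -> str:
    """Return the candidate key ('A', 'B' or 'AB') that wins a blind vote."""
    votes: Counter = Counter()
    for judge in judges:
        keys = list(candidates)
        rng.shuffle(keys)  # randomized order, so labels carry no information
        labels = {str(i + 1): key for i, key in enumerate(keys)}
        options = "\n".join(
            f"Option {label}:\n{candidates[key]}" for label, key in labels.items()
        )
        prompt = (f"Task: {task}\n{options}\n"
                  "Reply with the number of the strongest option.")
        reply = judge(prompt).strip()
        if reply in labels:  # malformed votes are ignored
            votes[labels[reply]] += 1
    if not votes:
        return "A"  # assumption: keep the incumbent if no valid vote is cast
    best = max(votes.values())
    winners = [key for key, count in votes.items() if count == best]
    return "A" if "A" in winners else winners[0]  # assumption: ties favor A


def autoreason(task: str, author: Agent, critic: Agent, reviser: Agent,
               synthesizer: Agent, judges: List[Agent],
               patience: int = 2, max_rounds: int = 10, seed: int = 0) -> str:
    """Iterate A -> critique -> B -> AB -> blind vote until A keeps winning."""
    rng = random.Random(seed)
    a = author(f"Task: {task}")
    streak = 0  # consecutive rounds in which the incumbent won
    for _ in range(max_rounds):
        critique = critic(f"Task: {task}\nDraft:\n{a}\nList its weaknesses.")
        # The reviser sees only the task, version A, and the critique.
        b = reviser(f"Task: {task}\nVersion A:\n{a}\nCritique:\n{critique}\n"
                    "Write a new version.")
        # The synthesizer sees both versions as equal, unattributed inputs.
        ab = synthesizer(f"Task: {task}\nVersion X:\n{a}\nVersion Y:\n{b}\n"
                         "Combine the strongest parts of both.")
        winner = judge_panel(task, {"A": a, "B": b, "AB": ab}, judges, rng)
        if winner == "A":
            streak += 1
            if streak >= patience:
                break
        else:
            streak = 0
            a = b if winner == "B" else ab
    return a
```

In practice each agent would wrap a separate model call with its own empty context; passing them in as callables keeps the loop itself independent of any particular model API.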

NEWS: @NYCMayor @ZohranKMamdani names @AlisterFMartin as the next commissioner of the Department of Health and Mental Hygiene. Martin is an ER physician known for spearheading a national campaign, "Vot-ER," to register patients to vote. subscriber.politicopro.com/article/2026/0…

I am excited to share our latest research led by PhD student Mohammad Yaghoubi (@yaghoubi_mh) entitled: “Predictive Coding of Reward in the Hippocampus” Link: biorxiv.org/cgi/content/sh… Highlights: 🧵👇