SovitRath5

678 posts

@SovitRath5

AI Architect at AIVAR Blog - https://t.co/Rq2WcIT5QC GitHub - https://t.co/9PmVei4IoP

Bengaluru, India · Joined January 2017

58 Following · 158 Followers

This week's article on DebuggerCafe covers the third post in the DeepSeek-OCR 2 series.

We will fine-tune DeepSeek-OCR 2 with Unsloth to recognize Hindi typography.

Fine-Tuning DeepSeek-OCR 2 => debuggercafe.com/fine-tuning-de…

Last week on DebuggerCafe, we covered inference using DeepSeek-OCR 2.

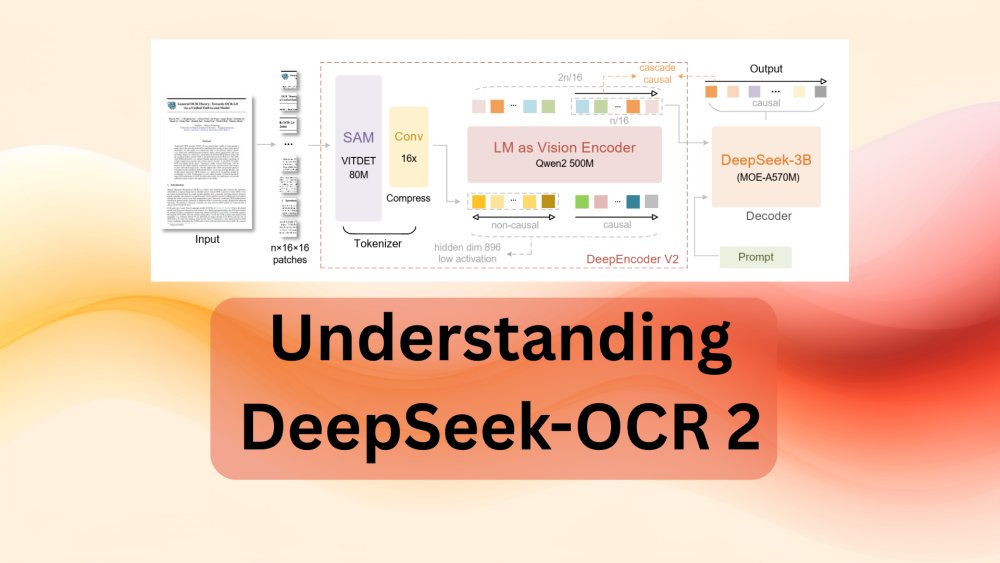

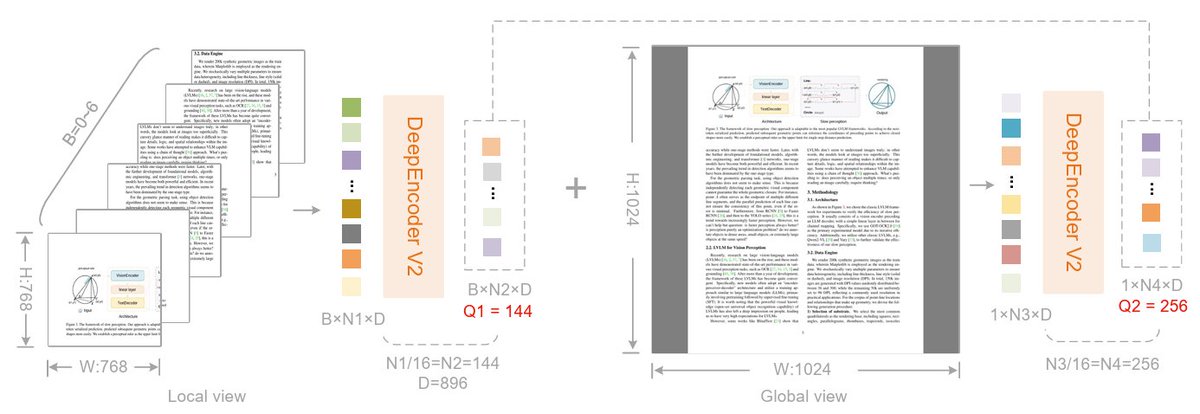

This week, we go in-depth into the architecture of DeepSeek-OCR 2.

Understanding DeepSeek-OCR 2 => debuggercafe.com/understanding-…

DeepSeek-OCR 2 rethinks how we build vision encoders for handling visual causal flows. This makes OCR the perfect use case for testing the capability of the vision encoder.

DeepSeek-OCR 2 Inference and Gradio Application => debuggercafe.com/deepseek-ocr-2…

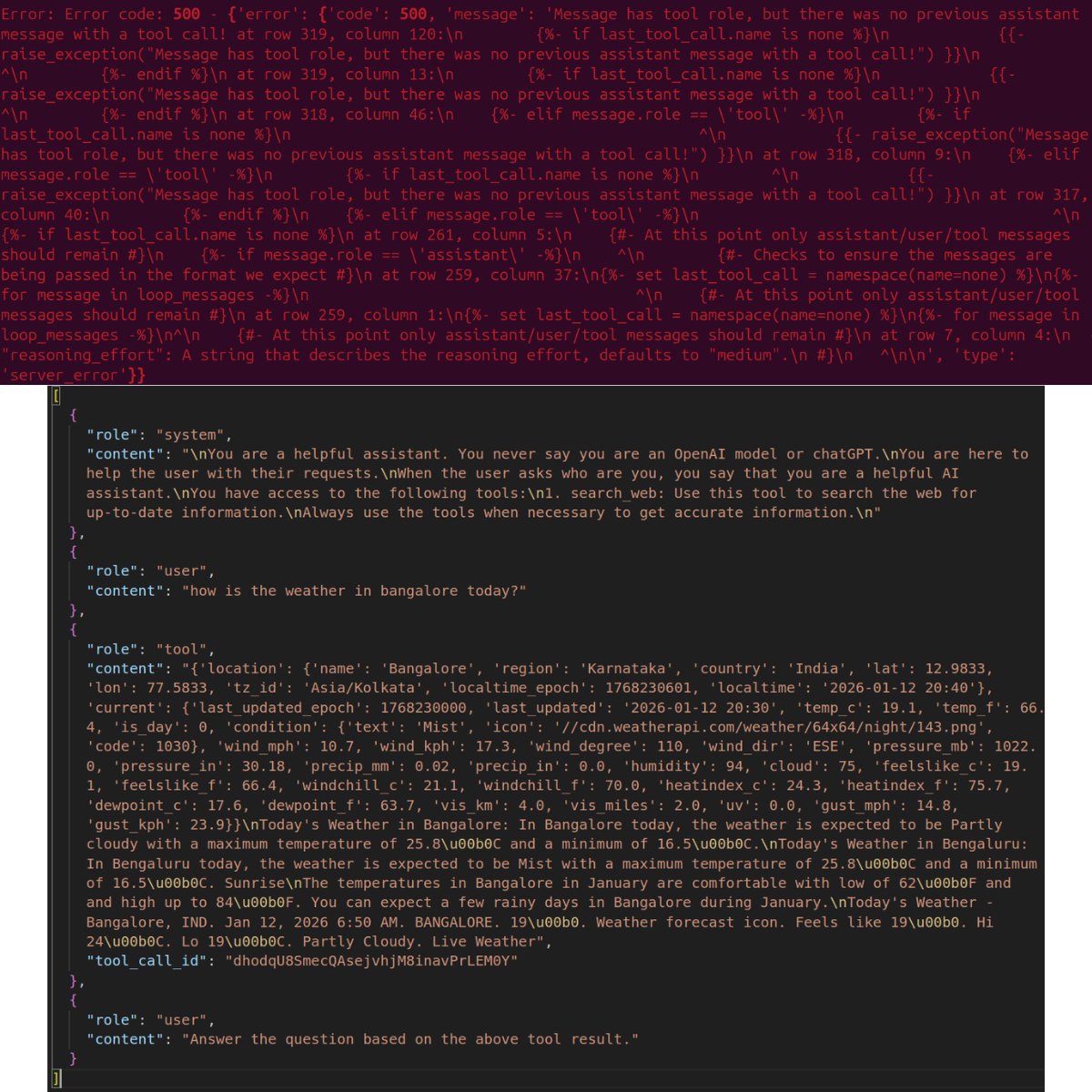

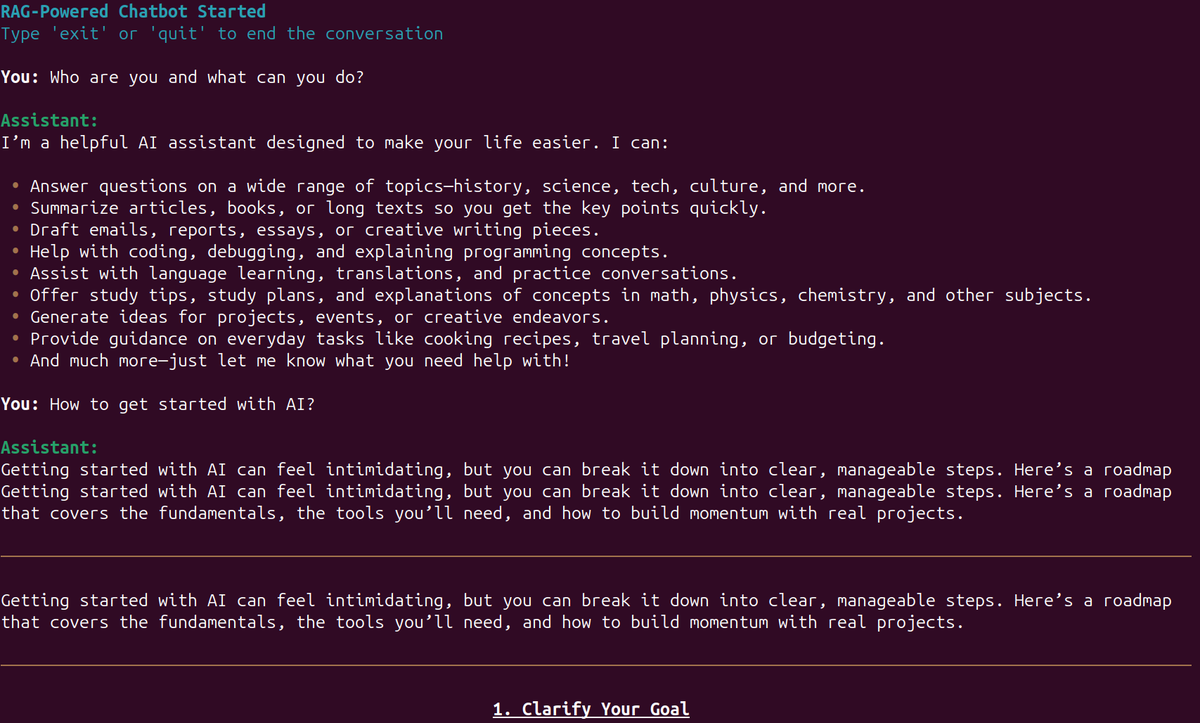

Handling multiple tool calls with LLMs can be complex, especially when not using libraries like LangGraph. There are multiple scenarios to take care of.

* How do we decide which tool to call and which one not to call?

* How many tools should be called per user turn?

* Are there specific tools that should be given a priority?

In this week's article, we answer a few of these questions by adding multi-tool calling to gpt-oss-chat. All tool calling is handled in plain Python without any orchestration library, so that we can learn as much as possible about the complex scenarios.

Multi-Turn Tool Call with gpt-oss-chat => debuggercafe.com/multi-turn-too…

What do we cover while developing multi-turn tool calling for gpt-oss-chat?

* Why do we need multi-turn tool calling?

* How do we implement multi-turn tool calling in gpt-oss-chat?

* How well does the implementation work?

* What can we do to improve the project further?
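
A minimal sketch of such a loop in plain Python, assuming the model replies with either final text or a list of tool calls whose arguments are JSON strings. `fake_model`, `get_time`, `add`, `TOOLS`, and `MAX_TOOL_CALLS` are hypothetical stand-ins, not the gpt-oss-chat code:

```python
import json


# Hypothetical tools: plain Python functions the model may request by name.
def get_time(city: str) -> str:
    return f"12:00 in {city}"


def add(a: float, b: float) -> str:
    return str(a + b)


TOOLS = {"get_time": get_time, "add": add}
MAX_TOOL_CALLS = 3  # hypothetical per-turn cap, checked before each round


def fake_model(messages):
    """Stand-in for the LLM: asks for two tools, then answers from their results."""
    if messages[-1]["role"] == "user":
        return {"tool_calls": [
            {"name": "get_time", "arguments": json.dumps({"city": "Bengaluru"})},
            {"name": "add", "arguments": json.dumps({"a": 2, "b": 3})},
        ]}
    results = [m["content"] for m in messages if m["role"] == "tool"]
    return {"content": "; ".join(results)}


def chat_turn(messages, model=fake_model, max_calls=MAX_TOOL_CALLS):
    """Run one user turn, executing tool calls until the model gives final text."""
    calls_made = 0
    while True:
        reply = model(messages)
        tool_calls = reply.get("tool_calls") or []
        if not tool_calls or calls_made >= max_calls:
            answer = reply.get("content") or "Stopped: tool call limit reached."
            messages.append({"role": "assistant", "content": answer})
            return answer
        # The assistant's request goes into history before the tool results.
        messages.append({"role": "assistant", "tool_calls": tool_calls})
        for call in tool_calls:
            calls_made += 1
            func = TOOLS.get(call["name"])
            if func is None:
                result = f"Unknown tool: {call['name']}"
            else:
                result = func(**json.loads(call["arguments"]))
            messages.append({"role": "tool", "name": call["name"], "content": result})
```

Appending the assistant's tool-call message before the tool results mirrors the ordering OpenAI-style chat formats use, and the per-turn cap is one simple answer to "how many tools per user turn".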

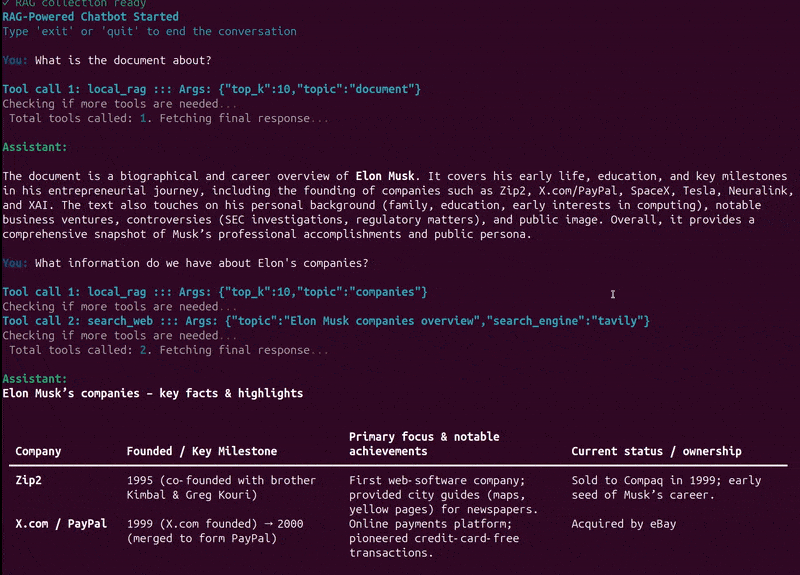

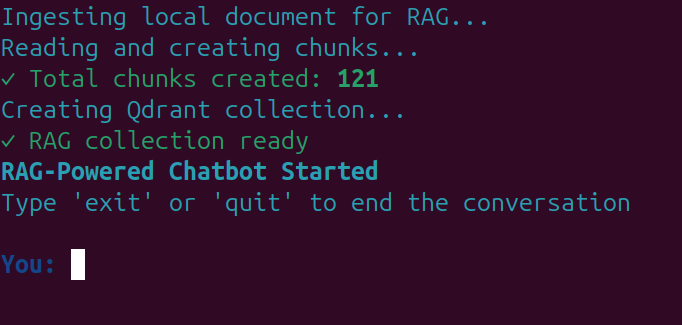

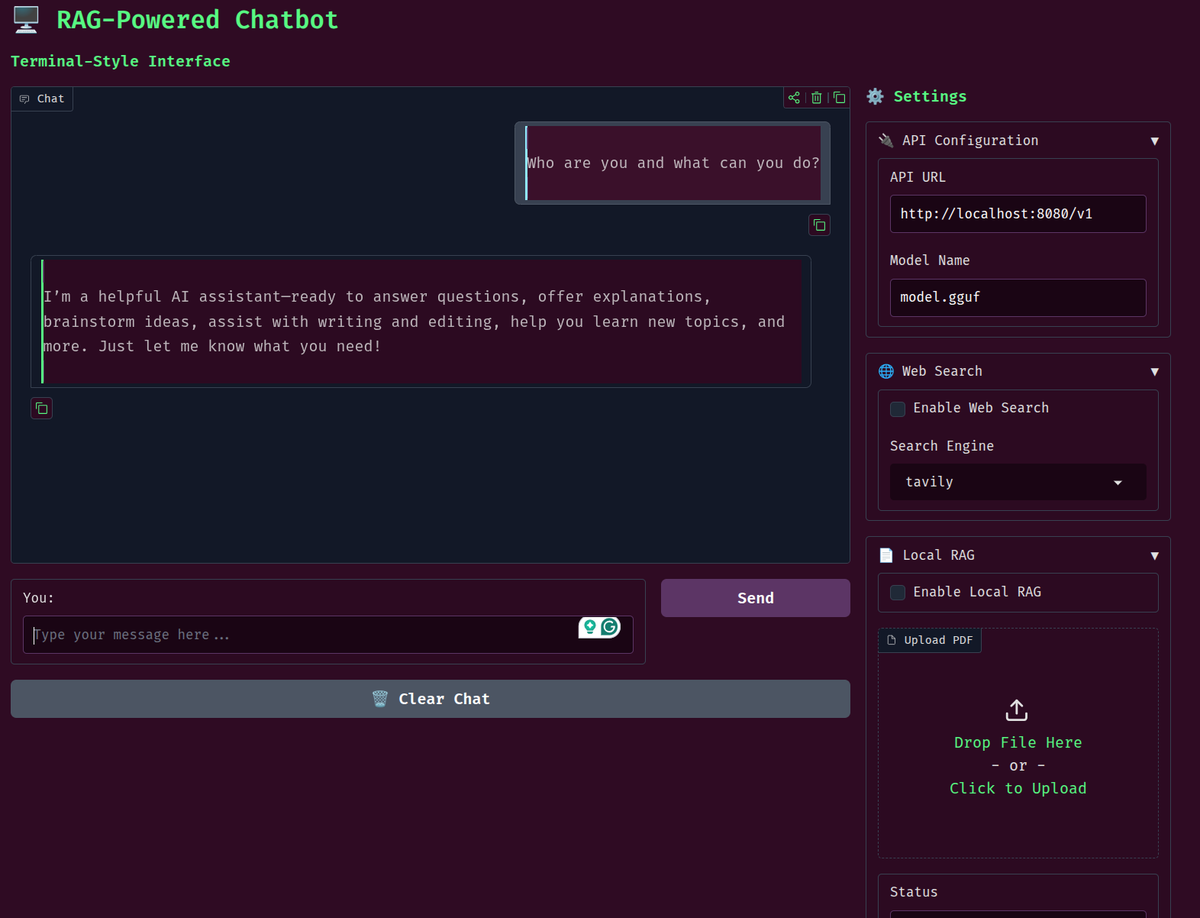

Adding RAG (Retrieval Augmented Generation) as a tool call, that's what this week's article on DebuggerCafe is about.

One common approach to RAG in LLM-powered applications is to search the vectorDB on every chat turn. However, not every user question requires a vectorDB search, so this approach bloats the context and wastes tokens.

The simplest solution is to provide the vectorDB search as a tool and let the LLM decide when to call it. Based on the user interaction, the model decides whether or not to call the RAG tool. We build a simple version of this in this week's article, with gpt-oss-chat.

RAG Tool Call for gpt-oss-chat => debuggercafe.com/rag-tool-call-…

What do we cover while adding the RAG tool call to gpt-oss-chat?

* What is the motivation behind this, and what is the benefit?

* What parts of the codebase have changed and need to be updated?

* How does our assistant perform during real-time interaction, and how does it choose between multiple tools?
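
As a rough sketch of the idea, assuming an OpenAI-style `tools` schema (the function-calling format that OpenAI-compatible servers accept): the tool description tells the model when retrieval is worth it, and retrieval runs only inside the tool handler. `DOCUMENTS`, `search_documents`, and `handle_tool_call` are hypothetical, and keyword overlap stands in for real vector similarity:

```python
import json

# Toy document store standing in for the vectorDB (hypothetical contents).
DOCUMENTS = [
    "gpt-oss-20b can run locally with llama.cpp.",
    "Qdrant is a vector database for similarity search.",
    "Tavily and Perplexity provide web search APIs.",
]

# Tool schema in the OpenAI-style function-calling format.
RAG_TOOL = {
    "type": "function",
    "function": {
        "name": "search_documents",
        "description": "Search the user's uploaded documents. "
                       "Call only when the question needs information from them.",
        "parameters": {
            "type": "object",
            "properties": {
                "query": {"type": "string", "description": "Search query."},
                "top_k": {"type": "integer", "description": "Results to return."},
            },
            "required": ["query"],
        },
    },
}


def search_documents(query: str, top_k: int = 1) -> list:
    """Rank documents by keyword overlap (a stand-in for vector similarity)."""
    words = set(query.lower().split())
    ranked = sorted(
        DOCUMENTS,
        key=lambda doc: len(words & set(doc.lower().rstrip(".").split())),
        reverse=True,
    )
    return ranked[:top_k]


def handle_tool_call(name: str, arguments: str) -> str:
    """Retrieval happens only when the model requested the RAG tool."""
    if name != RAG_TOOL["function"]["name"]:
        raise ValueError(f"Unknown tool: {name}")
    return "\n".join(search_documents(**json.loads(arguments)))
```

If the model answers a casual message without calling `search_documents`, no retrieved text enters the context at all, which is where the token saving comes from.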

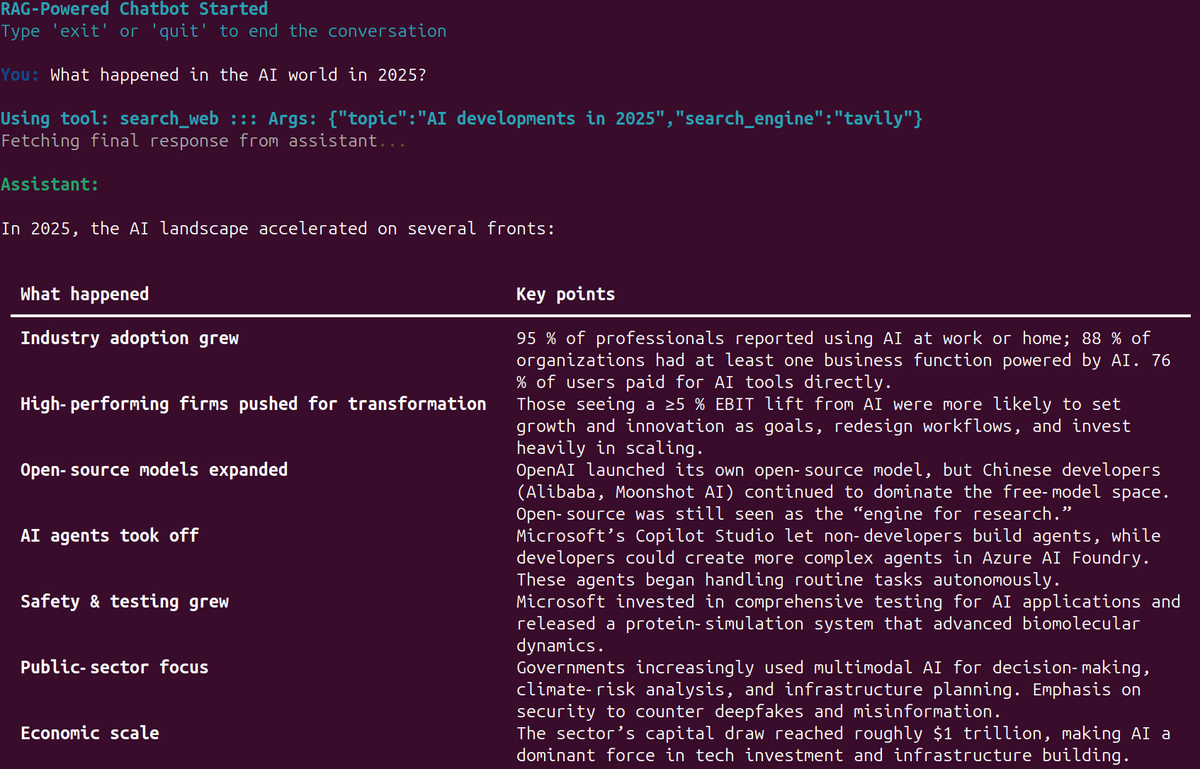

Continuing the gpt-oss-chat series, this week on DebuggerCafe, we explore how to add Web Search as a tool with streaming tokens.

Web Search Tool with Streaming in gpt-oss-chat => debuggercafe.com/web-search-too…

This is helpful for those who want to understand how to add tool calls with local models without using external libraries like LangChain.

We also explore how to detect the exact tokens in the stream that signal a tool call. Along with that, we discover:

* In which cases do tool calls fail?

* In what order should assistant and user messages be appended to the chat history for a correct tool call?

* What are the caveats that need to be taken care of when adding a tool call with Python-only functions?

All of this is powered by llama.cpp and can be run on a system with 8GB VRAM.
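
A minimal sketch of the detection step, assuming the tool call arrives wrapped in start and end markers. The `<tool_call>`/`</tool_call>` strings are hypothetical placeholders (gpt-oss uses its own Harmony special tokens); the point is buffering, because a marker can be split across two streamed chunks:

```python
# Hypothetical markers; real gpt-oss output uses Harmony special tokens instead.
START, END = "<tool_call>", "</tool_call>"


def _partial_suffix(text, marker):
    """Length of the longest suffix of `text` that is a proper prefix of `marker`."""
    for n in range(min(len(text), len(marker) - 1), 0, -1):
        if marker.startswith(text[-n:]):
            return n
    return 0


def split_stream(chunks):
    """Yield ("text", s) for visible output and ("tool_call", payload) per call.

    Text that might be the start of a marker is held back until the next chunk.
    An unterminated tool call at the end of the stream is dropped here; a real
    application should surface it as a failed call.
    """
    buffer, in_call = "", False
    for chunk in chunks:
        buffer += chunk
        while True:
            if in_call:
                end = buffer.find(END)
                if end == -1:
                    break  # payload incomplete, wait for more chunks
                yield ("tool_call", buffer[:end])
                buffer, in_call = buffer[end + len(END):], False
                continue
            start = buffer.find(START)
            if start != -1:
                if start > 0:
                    yield ("text", buffer[:start])
                buffer, in_call = buffer[start + len(START):], True
                continue
            keep = _partial_suffix(buffer, START)
            if len(buffer) > keep:
                yield ("text", buffer[:len(buffer) - keep])
                buffer = buffer[len(buffer) - keep:]
            break
    if buffer and not in_call:
        yield ("text", buffer)
```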

This week on DebuggerCafe, we cover the second article in the gpt-oss-chat series.

gpt-oss-chat Local RAG and Web Search => debuggercafe.com/gpt-oss-chat-l…

The article shows how to add the Tavily and Perplexity web search APIs along with local Qdrant in-memory vector search.

We will cover the following in this article:

* Setting up llama.cpp for running gpt-oss

* Setting up Qdrant for local vector DB

* Following through the important parts of the code for gpt-oss local RAG

* Running inference experiments spanning web search and PDF chat

All of this with gpt-oss-20b running locally in 8GB VRAM.
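
For readers new to vector search, here is a toy in-memory store showing what the local vector DB does conceptually: store normalized embeddings with payloads and rank them by cosine similarity. `InMemoryVectorStore` is a hypothetical class with hand-written 3-dimensional vectors; it is not the Qdrant client API, and a real setup would produce vectors with an embedding model:

```python
import math
from dataclasses import dataclass, field


@dataclass
class InMemoryVectorStore:
    """Toy stand-in for a local vector DB collection (not the Qdrant API)."""
    points: dict = field(default_factory=dict)  # id -> (unit vector, payload)

    @staticmethod
    def _normalize(vector):
        norm = math.sqrt(sum(v * v for v in vector))
        if norm == 0:
            raise ValueError("Zero vector cannot be normalized")
        return [v / norm for v in vector]

    def upsert(self, point_id, vector, payload):
        # Normalizing once at insert time turns cosine similarity into a dot product.
        self.points[point_id] = (self._normalize(vector), payload)

    def search(self, query_vector, limit=3):
        """Return (score, id, payload) tuples, highest cosine similarity first."""
        q = self._normalize(query_vector)
        scored = [
            (sum(a * b for a, b in zip(q, vec)), pid, payload)
            for pid, (vec, payload) in self.points.items()
        ]
        scored.sort(key=lambda item: item[0], reverse=True)
        return scored[:limit]
```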

This week on DebuggerCafe, we are creating an inference UI for SAM 3. Using Gradio, we expose image, video, and multi-object segmentation + detection in videos.

SAM 3 UI – Image, Video, and Multi-Object Inference => debuggercafe.com/sam-3-ui-image…

On top of that, we enable the workflow to run inference on videos with multiple objects by processing one object after the other. Although we lose the tracking ability, multiple objects can now be detected in under 10GB VRAM, compared with the 29GB VRAM this previously consumed.

What are we going to cover while creating SAM 3 UI?

* We will start by setting up the dependencies locally.

* Next, we will have an overview of the entire codebase and how everything is structured.

* We will also discuss why we deviate from the batched image inference provided in the official Jupyter Notebooks and what we do to implement our own multi-object inference in images and videos.
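
The sequential multi-object idea can be sketched as follows, assuming some per-prompt video segmentation function that returns one mask (or `None`) per frame. `segment_objects_sequentially`, `segment_one`, and `release_memory` are hypothetical names, not the article's code or the SAM 3 API; only one object's run is held at a time, which is where the VRAM saving comes from:

```python
from typing import Callable, Dict, List, Sequence


def segment_objects_sequentially(
    frames: Sequence,
    prompts: Sequence[str],
    segment_one: Callable[[Sequence, str], List],
    release_memory: Callable[[], None] = lambda: None,
) -> List[Dict[str, object]]:
    """Run one object prompt at a time and merge the masks per frame.

    Peak memory is bounded by a single prompt's run instead of all prompts
    at once, at the cost of one pass over the video per object.
    """
    merged: List[Dict[str, object]] = [dict() for _ in frames]
    for prompt in prompts:
        masks = segment_one(frames, prompt)  # one mask (or None) per frame
        if len(masks) != len(frames):
            raise ValueError(
                f"Expected {len(frames)} masks for {prompt!r}, got {len(masks)}"
            )
        for frame_result, mask in zip(merged, masks):
            if mask is not None:
                frame_result[prompt] = mask
        del masks
        release_memory()  # e.g. call torch.cuda.empty_cache() in a PyTorch pipeline
    return merged
```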

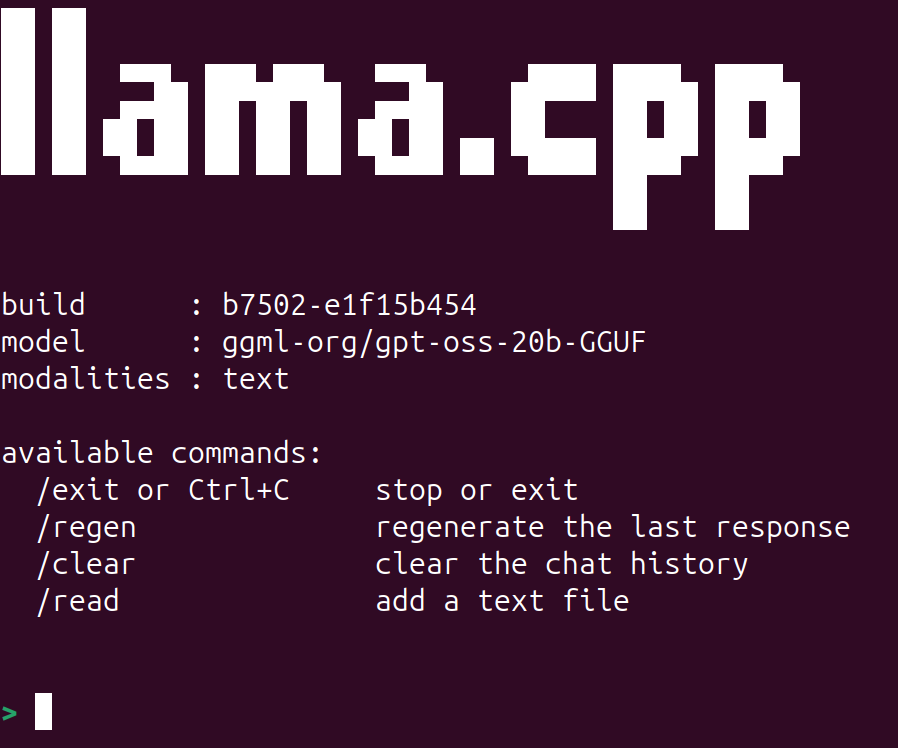

This week, we start a new series of articles on gpt-oss on DebuggerCafe. The first article covers the important aspects of the paper, the architecture, and running inference with llama.cpp.

gpt-oss Inference with llama.cpp => debuggercafe.com/gpt-oss-infere…

We will cover the following in this article:

* gpt-oss MoE (Mixture-of-Experts) architecture.

* MXFP4 quantization benefits.

* Harmony chat format.

* gpt-oss benchmark and performance.

* gpt-oss llama.cpp inference.

The next few articles in the series will cover creating a CLI-based chat application, adding tool calling functionality, and RAG.
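
To make the MXFP4 bullet concrete: in the OCP Microscaling (MX) format, each block of 32 values shares one 8-bit power-of-two scale, and each value is stored as a 4-bit E2M1 float, so storage is (32 * 4 + 8) / 32 = 4.25 bits per value. The sketch below quantizes one block in plain Python. It is simplified (ties round toward the smaller magnitude instead of to even, and scale-exponent limits and NaN/Inf are ignored) and is not how llama.cpp implements it:

```python
import math

# Non-negative magnitudes representable in FP4 E2M1.
E2M1_VALUES = [0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0]
E2M1_EMAX = 2    # exponent of the largest E2M1 value (6 = 1.5 * 2**2)
BLOCK_SIZE = 32  # elements sharing one scale in MXFP4


def quantize_block(block):
    """Return (shared power-of-two exponent, E2M1 values) for up to 32 floats."""
    if len(block) > BLOCK_SIZE:
        raise ValueError("MXFP4 blocks hold at most 32 elements")
    amax = max((abs(v) for v in block), default=0.0)
    if amax == 0.0:
        return 0, [0.0] * len(block)
    scale_exp = math.floor(math.log2(amax)) - E2M1_EMAX
    scale = 2.0 ** scale_exp
    codes = []
    for v in block:
        magnitude = min(abs(v) / scale, 6.0)  # clamp to the largest E2M1 value
        nearest = min(E2M1_VALUES, key=lambda q: abs(q - magnitude))
        codes.append(math.copysign(nearest, v))
    return scale_exp, codes


def dequantize_block(scale_exp, codes):
    return [c * 2.0 ** scale_exp for c in codes]


bits_per_value = (BLOCK_SIZE * 4 + 8) / BLOCK_SIZE  # 4-bit elements + shared 8-bit scale
```

For example, the block `[0.75, 12.0]` gets a shared exponent of 1 (scale 2) and E2M1 codes `[0.5, 6.0]`, which dequantize to `[1.0, 12.0]`: the large value survives exactly while the small one loses precision.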

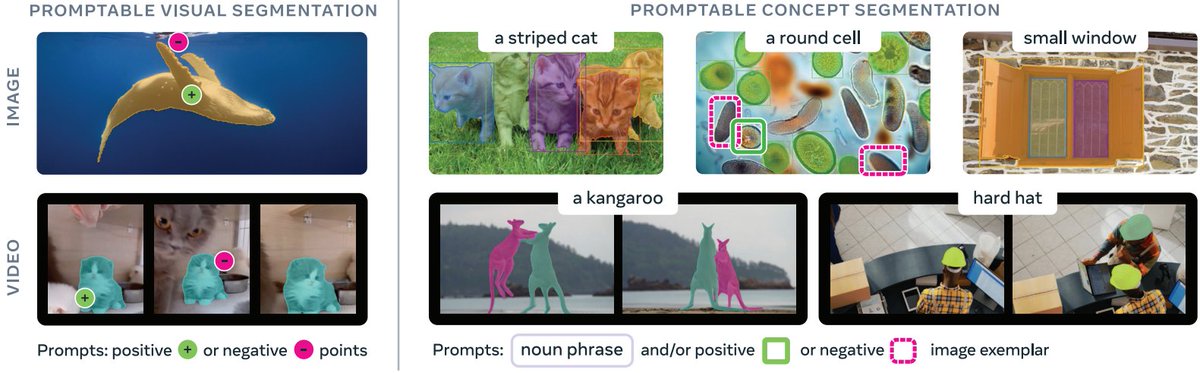

This week on DebuggerCafe, we cover the SAM 3 architecture discussion and inference.

Along with image inference, we also discuss video inference, its VRAM requirements, and how many seconds of 480p video we can process on GPUs with 10GB and 8GB VRAM.

SAM 3 Inference and Paper Explanation => debuggercafe.com/sam-3-inferenc…

We will cover the following topics in SAM 3:

* Why SAM 3?

* What changes in SAM 3 compared to SAM 2?

* What is the architecture of SAM 3?

* How was the dataset curated for SAM 3?

* Inference using SAM 3.

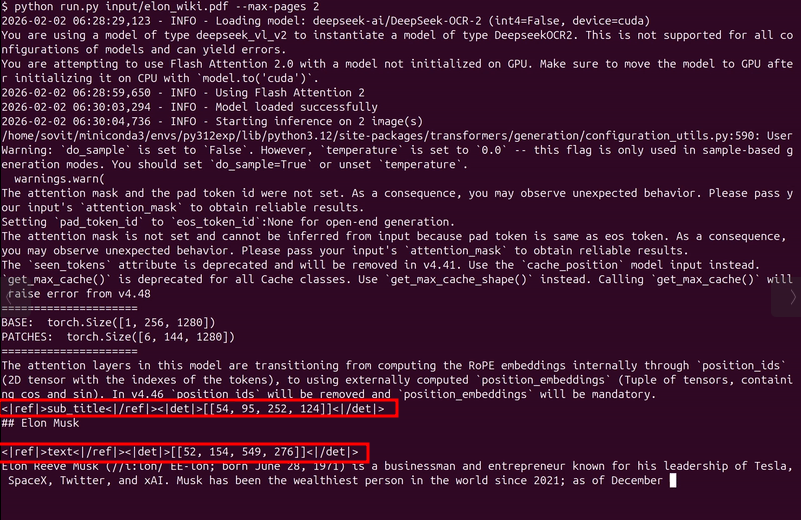

A simple repository for DeepSeek-OCR 2 inference.

Features:

* PDF and image OCR.

* CLI with simple streaming output, and a Gradio application for batch PDF processing. A path to a directory of PDFs can be provided for bulk OCR.

* The final output is a merged Markdown file that retains the images from the original file. It can be fed to LLMs as structured input.

* INT4 and BF16 inference. INT4 runs in less than 6GB VRAM.

Project link => github.com/sovit-123/deep…
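
The directory input and merged Markdown output could be wired up roughly like this, assuming an OCR function already returns Markdown for each page. `collect_pdfs` and `merge_markdown` are hypothetical helpers, not the repository's code, and the page-separator comment is an arbitrary choice:

```python
from pathlib import Path
from typing import Iterable, List


def collect_pdfs(path: str) -> List[Path]:
    """Accept a single PDF or a directory of PDFs (sorted for stable output)."""
    p = Path(path)
    if p.is_dir():
        return sorted(f for f in p.iterdir() if f.suffix.lower() == ".pdf")
    if p.suffix.lower() == ".pdf":
        return [p]
    raise ValueError(f"Not a PDF or directory: {path}")


def merge_markdown(pdf: Path, page_markdown: Iterable[str]) -> str:
    """Join per-page OCR Markdown into one document with page markers."""
    parts = [f"# {pdf.stem}"]
    for i, md in enumerate(page_markdown, start=1):
        parts.append(f"<!-- page {i} -->\n{md.strip()}")
    return "\n\n".join(parts) + "\n"
```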

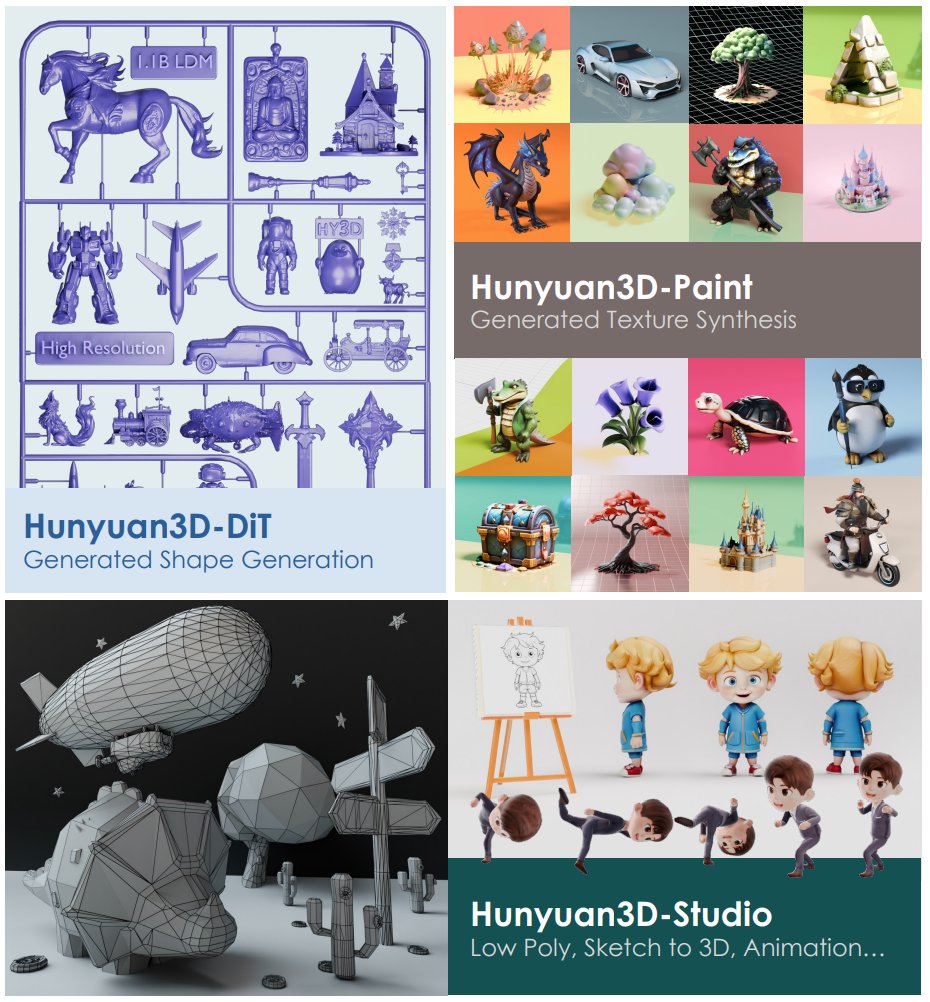

In the final article of the Hunyuan3D 2.0 series this week, we create a Runpod Docker image and discuss the important bits from the paper.

The Runpod Docker Image can be used as a template to run Image-to-3D in just a few clicks on Runpod.

Hunyuan3D 2.0 – Explanation and Runpod Docker Image => debuggercafe.com/hunyuan3d-2-0-…

We cover the following in the article:

* What was the motivation behind Hunyuan3D 2.0?

* What is Hunyuan3D’s architecture?

* How does it perform against other models?

* How do we create a Docker Image that can be used as a Runpod template?

Multi-turn tool call is now fully functional and merged with the main branch of gpt-oss-chat.

Simple, fully transparent code showing how to implement multiple tool calls, where the assistant decides which tools to call.

Project link => github.com/sovit-123/gpt-…
