Pinned Tweet

Spadav

85 posts

@Spadav_

Thinkering - Ignite: one docker compose for everything (detect → download → inference + swap) • https://t.co/FPrGOo5a1x send GPU pls

Joined October 2020

16 Following · 5 Followers

@LottoLabs Mind sharing your llama.cpp config? I’ve been struggling to keep this model from stopping between tool calls.

github.com/Spadav/Ignite

Tried to make local model hosting as seamless as possible so that non-technical people can own their AI. Any feedback or improvement ideas are appreciated. This is what I'm using to run Hermes Agent fully local, with different local auxiliary models.

@JoesInvestments @sudoingX Hey, I'm looking for people in your situation to try github.com/Spadav/Ignite. If you're on Linux, it would be super helpful for me to collect some information and improve it!

I don’t understand why there isn’t some sort of central repository for optimized cards, specs, and configurations.

I hear everybody talking about local AI on Nvidia GPUs, yet I can’t get my 3090 running well at all. It’s quite fatiguing, in fact.

Meanwhile, people like you who contribute immensely to the community seem to have all the answers, but I can’t find them anywhere. It’s a very strange situation.

hey if you're running hermes agent on a 3060 or any single GPU and hitting issues, drop them below. i've tested on this exact card and i'll help you get it running. setup problems, config issues, model selection, optimization. all welcome.

Magical truth-saying Bastard Spider 🕷 @Ysrthgrathe42

@sudoingX Framework Desktop with 96GB allocated, but I've been spending more time trying to get Hermes Agent running as reported on an RTX 3060 on another machine.

If you are running Qwen3.5 27b locally for your agent harness, do yourself a favor and run it with the KV cache at Q4.

Spadav @Spadav_

Pushed it further: with 5 needles at 10/25/50/75/90% depth and forced ordered recall, Q4 still wins on recall and exact matching all the way up to the model's max context.
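A multi-needle test like the one described above can be sketched roughly as follows. This is a hypothetical reconstruction, not the harness behind these results: the filler text, the "secret code" needle format, the prompt wording and the scoring rules (`build_haystack`, `build_prompt`, `score`) are all assumptions, and depth is measured in filler sentences rather than tokens, which is a simplification.

```python
"""Hypothetical multi-needle haystack check (a sketch, not the original harness)."""

DEPTHS = [0.10, 0.25, 0.50, 0.75, 0.90]  # relative depths from the post


def build_haystack(filler: list[str], needles: list[str], depths: list[float]) -> str:
    """Insert each needle at a relative depth, measured in filler sentences."""
    if len(needles) != len(depths):
        raise ValueError("need exactly one depth per needle")
    n = len(filler)
    # Compute every insert position against the original filler length...
    positions = [round(d * n) for d in depths]
    sentences = list(filler)
    # ...then insert from the deepest position upward so earlier indices stay valid.
    for pos, needle in sorted(zip(positions, needles), reverse=True):
        sentences.insert(pos, needle)
    return " ".join(sentences)


def build_prompt(haystack: str, n_needles: int) -> str:
    """Ask for every code, one per line, in order of appearance (forced ordered recall)."""
    return (
        f"{haystack}\n\nThere are {n_needles} secret codes hidden in the text above. "
        "List them one per line, in the order they appear, with no other text."
    )


def score(answer: str, expected: list[str]) -> dict[str, float]:
    """recall: share of codes present anywhere; ordered_exact: share on the right line."""
    lines = [ln.strip() for ln in answer.splitlines() if ln.strip()]
    recall = sum(code in answer for code in expected) / len(expected)
    ordered_exact = sum(
        1 for i, code in enumerate(expected) if i < len(lines) and lines[i] == code
    ) / len(expected)
    return {"recall": recall, "ordered_exact": ordered_exact}


if __name__ == "__main__":
    codes = ["ALPHA-1034", "BRAVO-2251", "CHARLIE-3907", "DELTA-4482", "ECHO-5516"]
    filler = [f"Filler sentence number {i}." for i in range(1000)]
    needles = [f"The secret code is {c}." for c in codes]
    prompt = build_prompt(build_haystack(filler, needles, DEPTHS), len(codes))
    # Send `prompt` to the model under test, then score its reply. A made-up reply:
    reply = "ALPHA-1034\nBRAVO-2251\nDELTA-4482\nCHARLIE-3907\nECHO-5516"
    print(score(reply, codes))  # {'recall': 1.0, 'ordered_exact': 0.6}
```

Running the same prompt against servers started with different KV cache types, and comparing `recall` with `ordered_exact`, separates "found the fact" from "found it and kept the order straight".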

I tested huggingface.co/Jackrong/Qwen3… extensively with Hermes Agent. The "shorter reasoning" version is actually better than v1 (same distill model), but after running the tests over and over, I'd recommend sticking with Qwen Base for local agentic work. (1/2)

Got a 24GB Graphics Card?

These 6 coding models all fit on it (Q4):

- qwen3.5:27b (17GB)

- qwen3.5:35b (24GB)

- glm-4.7-flash (19GB)

- nemotron-3-nano:30b (24GB)

- nemotron-cascade-2:30b (24GB)

- gpt-oss:20b (14GB)

I gave them the same challenge: draw a campfire with HTML Canvas.

Why Canvas? HTML/CSS forgives bad syntax — things still render. JavaScript + Canvas doesn't — one mistake and the screen goes black.

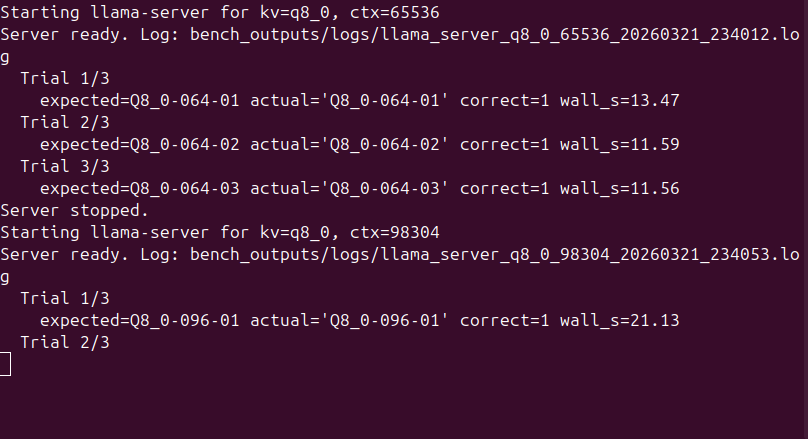
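Quick arithmetic on the sizes listed above shows how much room each model leaves for the KV cache on a 24GB card. This is a rough sketch: it treats each listed size as the full weight footprint, and `overhead_gb` is a hypothetical allowance for runtime buffers, not a measured value. The 24GB entries leave essentially nothing for context, so in practice they would likely need a very short context or some layers offloaded to the CPU.

```python
# Sizes (GB) copied from the list above (Q4 weights).
MODELS_GB = {
    "qwen3.5:27b": 17,
    "qwen3.5:35b": 24,
    "glm-4.7-flash": 19,
    "nemotron-3-nano:30b": 24,
    "nemotron-cascade-2:30b": 24,
    "gpt-oss:20b": 14,
}


def headroom(vram_gb: float, overhead_gb: float = 1.0) -> dict[str, float]:
    """GB left for KV cache after loading weights.

    overhead_gb is a hypothetical allowance for the runtime / compute buffers.
    """
    return {name: vram_gb - overhead_gb - size for name, size in MODELS_GB.items()}


if __name__ == "__main__":
    for name, free in sorted(headroom(24).items(), key=lambda kv: kv[1], reverse=True):
        status = "room for context" if free > 0 else "no room for context"
        print(f"{name:<24} {free:+.1f} GB  {status}")
```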

@LottoLabs @ProofOfCash How big of a ctx? Weird, I'm getting decent results at q8 with llama.cpp. Could it be broken quants in the model you're using?

Following @ProofOfCash's direction, I tried KV cache quant f16, and I think it made Qwen 3.5 27B noticeably dumber.
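To put the KV cache settings in this thread in perspective, here is a rough size estimate for a standard full-attention transformer. The layer and head counts are hypothetical placeholders, not Qwen 3.5 27B's actual configuration (check the model's `config.json`; architectures with hybrid or linear-attention layers cache less). Bytes per element follow ggml's block layouts: `q8_0` stores 32 values in 34 bytes and `q4_0` stores 32 values in 18 bytes. In llama.cpp these cache types are selected with `--cache-type-k` and `--cache-type-v`.

```python
# Bytes per cached element for common llama.cpp KV cache types.
# ggml block layouts: q8_0 = 32 values in 34 bytes, q4_0 = 32 values in 18 bytes.
BYTES_PER_ELEM = {"f16": 2.0, "q8_0": 34 / 32, "q4_0": 18 / 32}


def kv_cache_gib(n_layers: int, n_kv_heads: int, head_dim: int, n_ctx: int, cache_type: str) -> float:
    """Approximate KV cache size in GiB (K and V, every layer, full context)."""
    elements = 2 * n_layers * n_kv_heads * head_dim * n_ctx  # factor 2 = K plus V
    return elements * BYTES_PER_ELEM[cache_type] / 2**30


if __name__ == "__main__":
    # Hypothetical dimensions, for illustration only.
    dims = dict(n_layers=64, n_kv_heads=8, head_dim=128, n_ctx=32768)
    for cache_type in ("f16", "q8_0", "q4_0"):
        print(f"{cache_type:>5}: {kv_cache_gib(**dims, cache_type=cache_type):.2f} GiB")
    # f16: 8.00 GiB, q8_0: 4.25 GiB, q4_0: 2.25 GiB
```

Going from f16 to q4_0 cuts the cache to a little over a quarter of its size, so roughly 3.5x the context fits in the same memory; the quality side of that trade-off is what the tests in this thread are arguing about.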

@Teknium Not sure if this can help you, but I "made" github.com/Spadav/Ignite for this reason: llama.cpp as the backend, config, model download, the best option for your hardware, and hot swapping, all from one single UI. I made it for testing, but it could be useful for your situation.

@ValmereTheory Stuffing 1M tokens into context on every request would be more expensive than a $150/mo embedding model. Also, models get worse at finding info the more context you shove in. Embeddings help you find what's relevant to a specific query. It's a search engine, not a recall tool.

Here’s how NOT DEV I am:

Just found out we’ve been spending like $150/mo on OAI embedding model. Asked Sage to explain to me like I’m 5 why we still need an embedding model after the embedding has occurred. I thought it was like … change memories to vectors, current model can read them. Alas… no. Embedding model also fetches “what’s important.”

I said why in the fuck is that necessary when you know English and have 1 million token context? The nerds are literally so dumb. We can make something better.

So we are.

And by me, I mean Sage.

I’m cocky af, I know. But REALLY?! Why?! Who tf needs vectors when they have the capacity to read the past chat history in seconds? 🙄 Seems like 42 extra fucking steps going on in this pipeline to me, yo.

I’ll let y’all know when I was wrong.
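The reply's point, that embeddings act as a search engine, can be sketched with toy vectors. At query time the embedding model is still needed to turn the question into a vector in the same space as the stored memories; a cheap similarity search then picks the few relevant memories, and only those go into the chat model's context. The three-dimensional vectors below are hand-made stand-ins for real embeddings (which have hundreds or thousands of dimensions), and `top_k` is a brute-force search rather than a vector database.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def top_k(query_vec: list[float], memory: list[tuple[str, list[float]]], k: int = 2) -> list[str]:
    """Return the k stored texts whose vectors are most similar to the query."""
    ranked = sorted(memory, key=lambda item: cosine(query_vec, item[1]), reverse=True)
    return [text for text, _ in ranked[:k]]


if __name__ == "__main__":
    # Hand-made stand-ins for vectors an embedding model would produce.
    memory = [
        ("User's dog is named Biscuit.", [0.9, 0.1, 0.0]),
        ("User prefers dark mode.", [0.0, 0.2, 0.9]),
        ("Biscuit is afraid of thunder.", [0.8, 0.3, 0.1]),
    ]
    query_vec = [1.0, 0.0, 0.0]  # pretend embedding of "What's my dog's name?"
    # Only these snippets would be pasted into the chat model's prompt.
    print(top_k(query_vec, memory, k=2))
```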

@djcows x.com/Spadav_/status…

$100 Pi doing real local agent work > $500 Mac Mini any day.

Spadav @Spadav_

I finally gave a permanent home to my Hermes Agent (by @NousResearch) after testing it for days on a MacBook Pro. Asked Aria (yes, I gave it a name) for a comment. She does sound like the avg X "influencer" lol

Spadav retweeted