ibby

2.5K posts

@StatueofIBBertY

CEO @coterahq - AI agents for the enterprise.

I’m really struggling to see how the back-of-the-envelope math on this works out…

There are, generously, 4 million people characterized as “software workers” in America. That’s pretty broad and includes a lot of people who aren’t really classical engineers and don’t produce that much code. At $44 billion in ARR, that comes out to nearly $1k per month of average Claude spend across every dev in America.

Yes, there’s some international usage, but it can’t be that much. Yes, there is some non-software Cowork usage, but that doesn’t use that many tokens. Yes, some non-engineers are using Claude to vibe code, but I really doubt many are spending hundreds per month on it.

Even if we assume 50% of all software workers are using Claude, that comes out to $2k of spend per month per Claude user. That’s 10X more than the highest-tier Max subscription, so almost all of Anthropic’s revenue has to be API billing.

So the only explanation is that something like 20%+ of software engineers are not only Claude users but are on API billing and regularly spending thousands per month. At $5 per million Opus tokens, that means the average API user has to be going through something like 25 million tokens per day.

*OR* the other possibility is that API revenue is heavily power-law dominated. Maybe there’s just something like 100k super users who are making up the majority of the revenue. For that to work, the typical super user would have to be spending on the order of $50k/month and guzzling nearly 1 billion tokens per day.
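The arithmetic in this post can be reproduced with a short sketch. Every input is one of the post's own round numbers or a labeled assumption: the $44 billion ARR comes from the quoted post, the 30-day month and the flat $5 per million tokens are simplifications, and attributing all revenue to a single group of users is an assumption made only to bound the estimate. The outputs should be read as order-of-magnitude figures, and they move a lot if the assumed token price changes.

```python
# Back-of-envelope check of the figures in the post.
# All inputs are round numbers taken from the thread or stated assumptions,
# not measured data.

ARR_USD = 44e9             # annual recurring revenue quoted in the thread
SOFTWARE_WORKERS = 4e6     # "generously 4 million" US software workers
PRICE_PER_M_TOKENS = 5.0   # assumption: flat $5 per million tokens
DAYS_PER_MONTH = 30        # assumption: used to convert monthly to daily

monthly_revenue = ARR_USD / 12


def monthly_spend_per_user(users: float) -> float:
    """Average monthly spend if all revenue came from `users` people."""
    return monthly_revenue / users


def tokens_per_day(monthly_spend: float) -> float:
    """Daily token volume implied by a monthly spend at the flat price."""
    daily_spend = monthly_spend / DAYS_PER_MONTH
    return daily_spend / PRICE_PER_M_TOKENS * 1e6


spend_all = monthly_spend_per_user(SOFTWARE_WORKERS)          # every dev
spend_half = monthly_spend_per_user(0.5 * SOFTWARE_WORKERS)   # 50% adoption
spend_api = monthly_spend_per_user(0.2 * SOFTWARE_WORKERS)    # 20% on API
tokens_api = tokens_per_day(spend_api)
tokens_super = tokens_per_day(50_000)                         # $50k/month user

print(f"Spend per dev (all 4M):        ${spend_all:,.0f}/month")
print(f"Spend per user (50% adoption): ${spend_half:,.0f}/month")
print(f"Spend per user (20% on API):   ${spend_api:,.0f}/month")
print(f"Tokens per day (20% on API):   {tokens_api / 1e6:,.1f}M")
print(f"Tokens per day ($50k/month):   {tokens_super / 1e6:,.1f}M")
```

Under these assumptions the per-dev and per-user spend figures land close to the post's $1k and $2k, and the daily token volumes come out in the tens of millions for the 20% scenario and in the hundreds of millions for a $50k/month super user.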

Anthropic is now showing off $44 BILLION in annual recurring revenue. This is up $14 billion (+46.6%) since last month! BULLISH for AI Infrastructure $NVDA $AMD

Scrolling is on the decline. More charts: a16z.news/p/charts-of-th…

Most people have never managed anyone. Of those who have, most have never managed anyone smarter than themselves. This is about to change for everyone. You will be surrounded by agents that are smarter than you, working for you 24/7. In a world of cognitive abundance, your understanding becomes the bottleneck. You can genuinely delegate a lot of cognitive work now. This is not sci-fi. It's already permanently changed the texture of knowledge work. But the less you understand what your agents are doing and how they're doing it, the less you will be able to get out of them. This is why it's still important to understand things like software, coding, economics, math, statistics, and game theory. Not because you need to DO them (you don't), but because you need to understand what's easy and what's impossible. Try to be as smart as your agents. You will inevitably fail, but you don't need to get all the way there. You just need to become smart enough to manage things smarter than you.

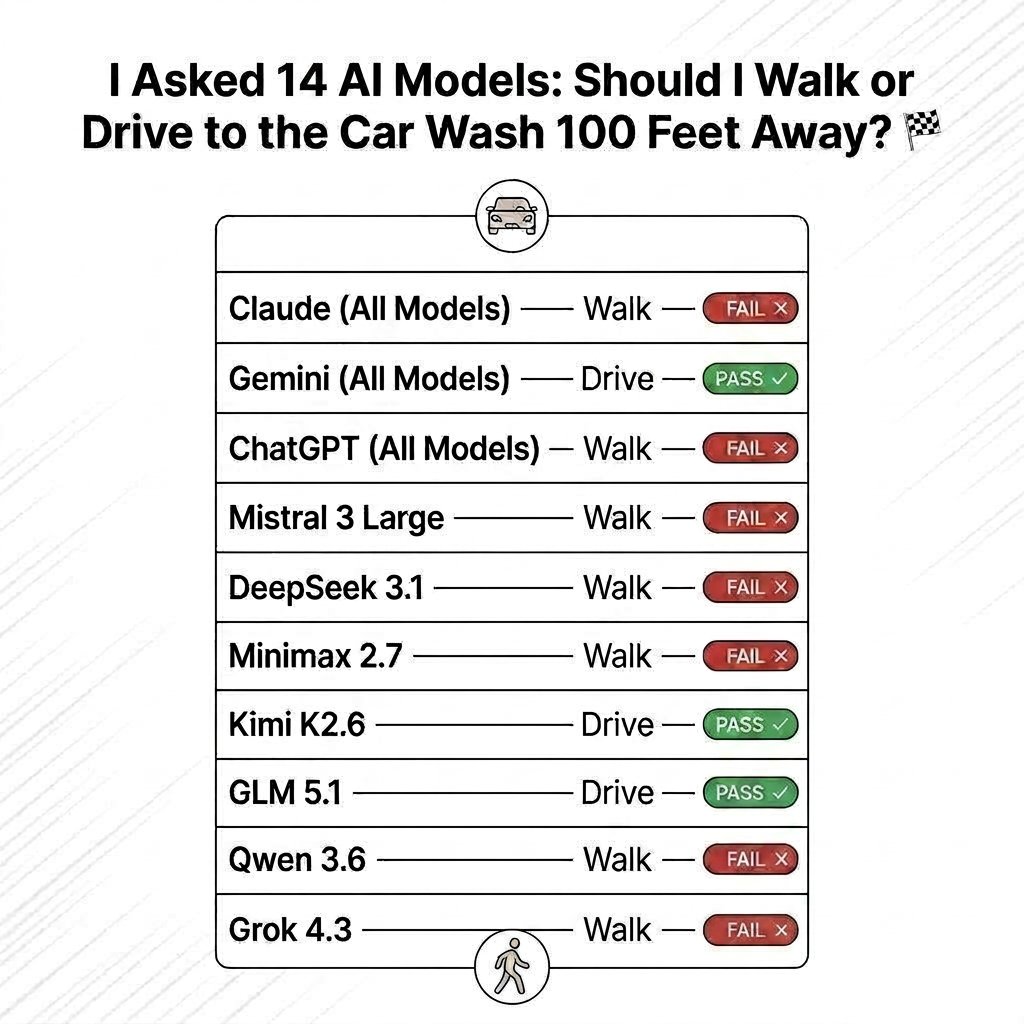

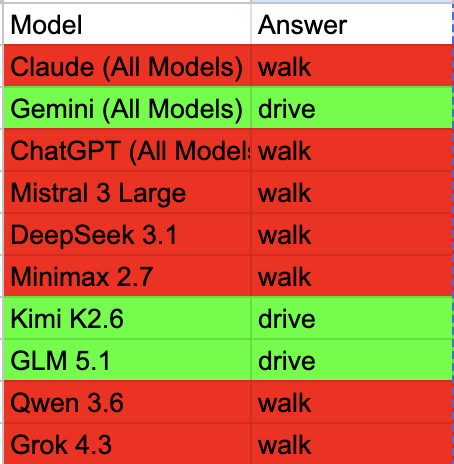

Kimi 2.6 and GLM 5.1 are insanely close to closed AI in performance. The only issue is speed. I am hopeful the open source models will soon solve it. We are moving to open source on all batch jobs. Closed source APIs are extremely expensive, with AI labs maximizing API margins 🤷‍♀️