Stellan Haglund

800 posts

@Stellanhaglund

Entrepreneur and code ninja. https://t.co/GyK8fQSFZe

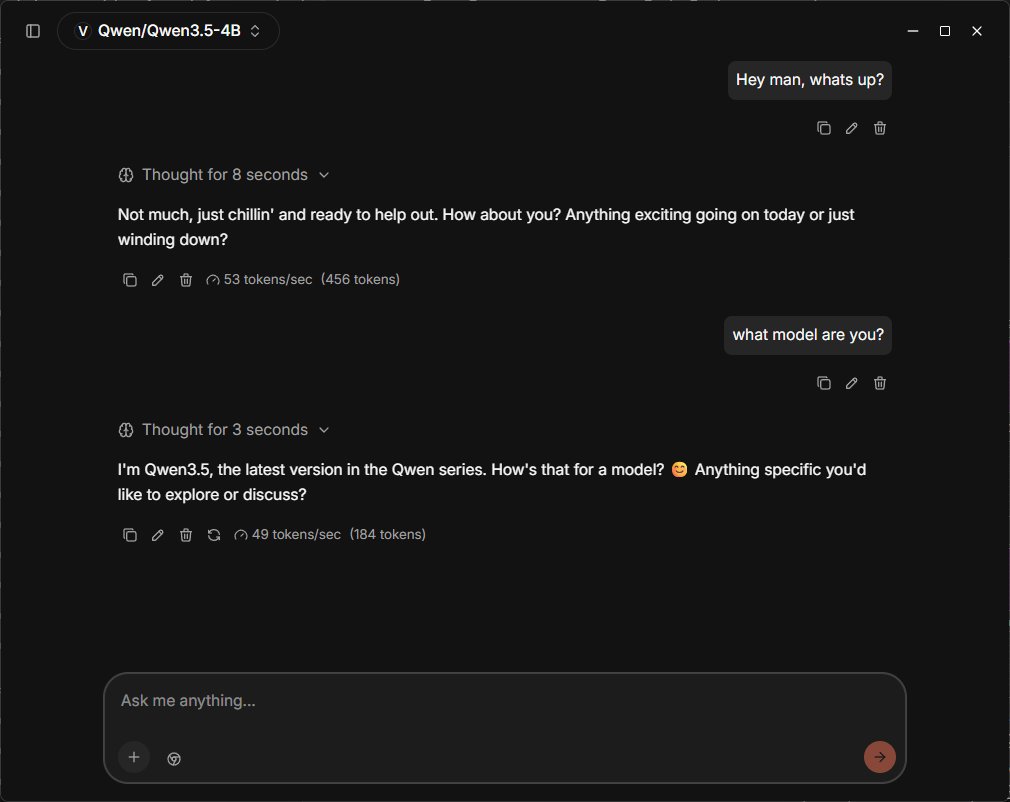

We’ve identified industrial-scale distillation attacks on our models by DeepSeek, Moonshot AI, and MiniMax. These labs created over 24,000 fraudulent accounts and generated over 16 million exchanges with Claude, extracting its capabilities to train and improve their own models.

MMaDA: Multimodal Large Diffusion Language Models

- UniGRPO, a unified RL algo tailored for diffusion foundation models
- MMaDA-8B surpasses Show-o and SEED-X in multimodal understanding, and excels over SDXL and Janus in text-to-image generation

'You have full backing across the United Kingdom,' Prime Minister Sir Keir Starmer tells Ukrainian President Volodymyr Zelenskyy. 'We stand with you and Ukraine for as long as it may take.' Live updates: trib.al/lGomQ0C 📺 Sky 501, Virgin 602, Freeview 233 and YouTube