SupaHuman

486 posts

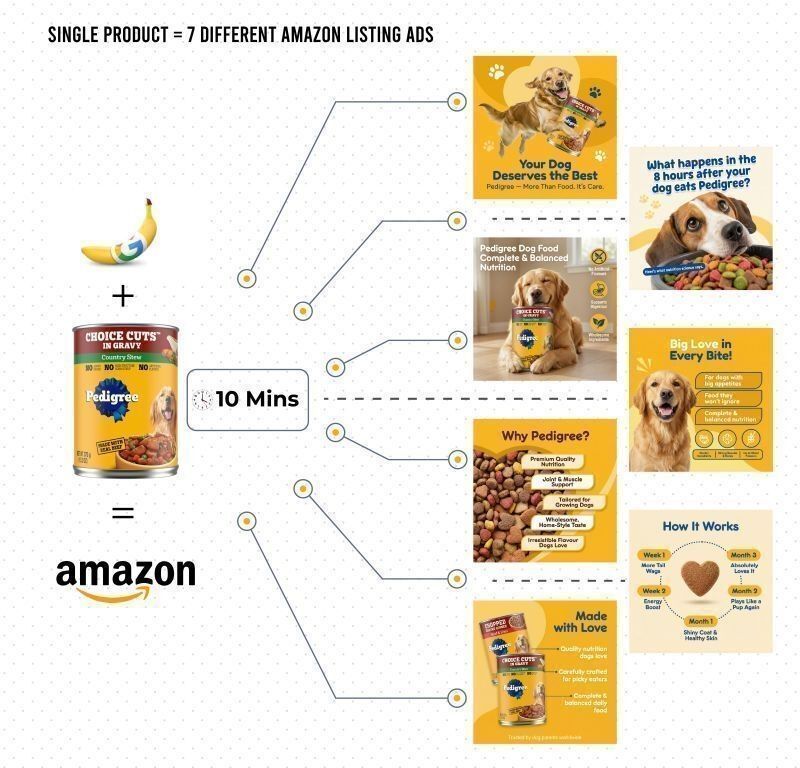

I just redesigned Amazon ads for Pedigree — in under 10 minutes.

One Pedigree product.

7 high-converting listing creatives.

No agencies. No weeks. No $10k invoices.

This is AI that:

→ scans customer reviews at scale

→ studies competitor listing visuals

→ identifies conversion gaps

→ builds a clear creative structure

→ generates Amazon-ready images

What used to take agencies weeks and massive budgets now happens before your first coffee.

For Amazon brands, there are only two paths:

𝘖𝘗𝘛𝘐𝘖𝘕 1: Ignore it.

Keep paying for slow turnarounds and recycled “strategy decks.”

𝘖𝘗𝘛𝘐𝘖𝘕 2: Use it.

→ Launch faster.

→ Test more creatives.

→ Win on speed and clarity.

This is how a single Pedigree product becomes:

• Hero image

• Benefit-led infographics

• “Why Pedigree?” comparison visuals

• Trust & nutrition panels

• Lifestyle + pack shots

• Conversion-focused secondary images

All aligned.

All brand-safe.

All Amazon-optimized.

In the last three months, we've helped over 10 Amazon brands transition to fully owned creative apps.

This is a trend, and I expect it to scale further, especially as these brands gain more control over personalization in content production.

We're driving millions of dollars in additional revenue just from this.

Interested in using AI creative ops in your business?

Like this post + DM me "AI" & I’ll send the link!

(Make sure you're following)

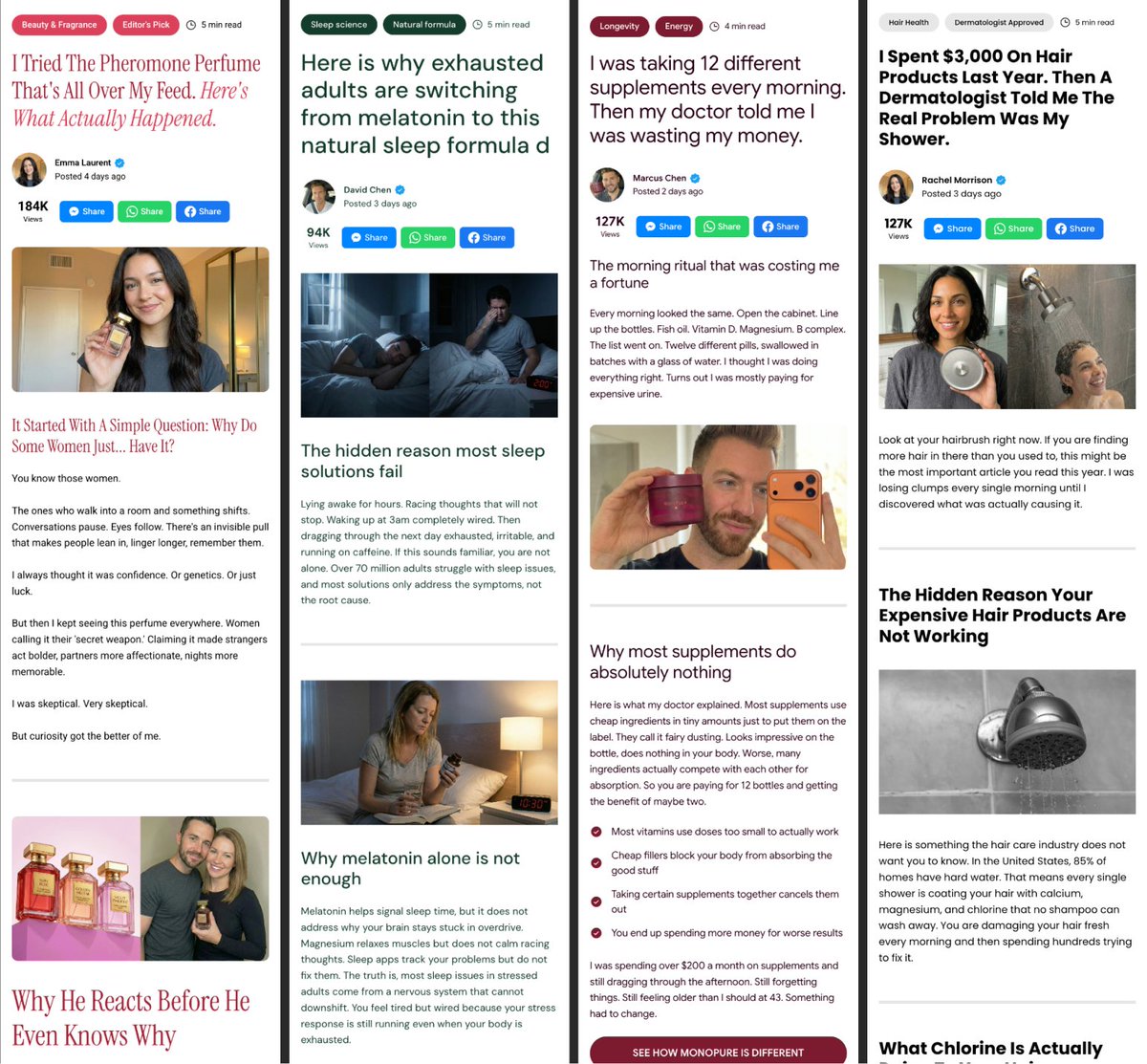

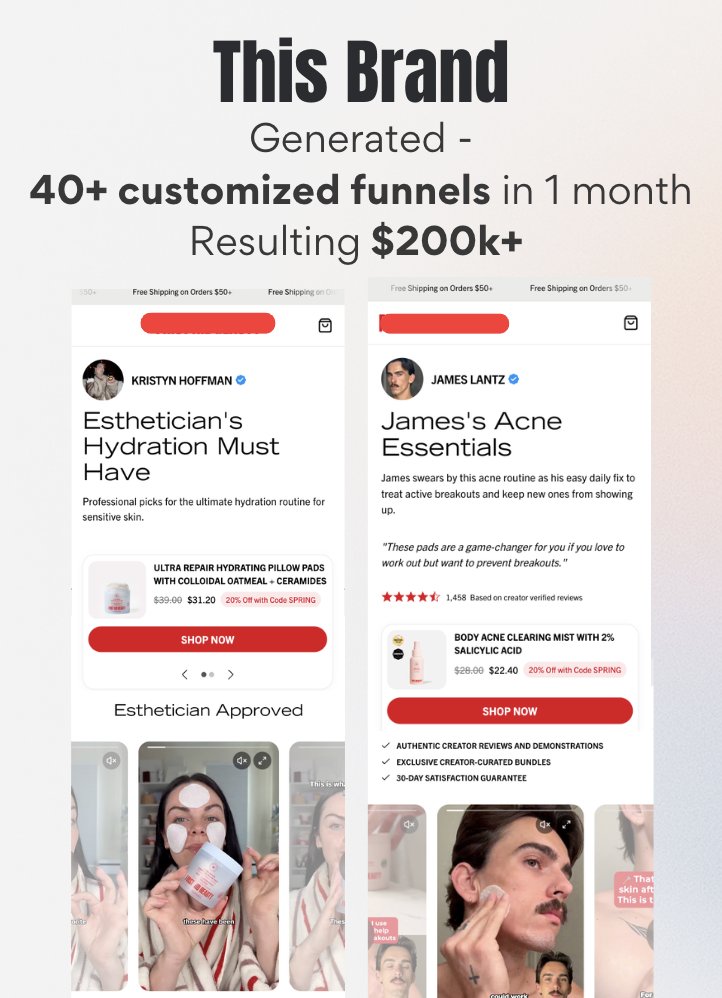

The influencer sent the traffic.

The page lost the sale.

Same product. Same creator. Completely different experience.

2 examples of how influencer pages should look, each built in 2 minutes per creator with @jurni__ai

40+ personalized funnels. 2 weeks. $200k+ in revenue.

Comment "PAGE" and I'll show you what this looks like for your brand. (must be following)

This influencer makes $7,000/month.

Just generated this AI podcast-style UGC clip in 2 minutes.

→ No influencers

→ No UGC creators

→ No ads

AI creates the content.

AI drives the views.

AI scales the growth.

I’ve been telling you this for weeks.

I built a specific workflow to do it.

Comment “7k” and I’ll send it.

SupaHuman retweeted

$80k+/month ad accounts are quietly testing animated object ads

not influencers

not classic ugc

just tiny characters explaining problems

a germ on your teeth

a bump in the road

a creature hiding in your beard

you understand the idea in seconds

no long explanation

no expert talking

the animation carries the message

ai creates the characters

ai builds the scenes

ai generates dozens of hooks

one concept

multiple videos

endless variations

rt + comment “objects” and i’ll send the framework

(follow for dm)

Nano Banana + MakeUGC + Gemini = My AI Content Machine

No editing marathons.

No begging.

One AI runs 24/7

Posts 2x per day automatically

Targets proven, high-demand niches

Build once. Scale forever.

Comment "AGENT" and I'll DM the full breakdown. (Follow @Alaina_Jahan_Ai)

2 weeks → 2 minutes. I've been building a system that creates 20 UGC video variations from a single direction.

UGC works because it feels like a real recommendation.

But the turnaround was 2 weeks.

So we tested this workflow for a brand.

It turned out to be one of the best performing ads for them.

All of the direction was given to the agentic video system we've been building.

This is what the system does:

1️⃣ You give it a direction

→ product, angle, format (UGC, problem-solution, testimonial)

2️⃣ It handles the heavy lifting

→ hooks, scripts, structure based on what’s working

3️⃣ It builds the videos

→ avatars, scenes, voiceovers, edits

4️⃣ You refine in chat

→ faster hook, new angle, different tone

5️⃣ It outputs multiple variations instantly

So your loop becomes:

→ 1 idea

→ 20 variations

→ test → iterate → scale
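A minimal sketch of the "1 idea → 20 variations" step, assuming a variation is just one hook paired with one tone for a fixed product, angle, and format. The `Variation` class, `build_variations`, and every example value below are hypothetical illustrations, not the system described in this post.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class Variation:
    """One testable creative: a single direction plus one hook and one tone."""
    product: str
    angle: str
    video_format: str
    hook: str
    tone: str


def build_variations(product_name, angle, video_format, hooks, tones):
    """Pair every hook with every tone for one direction."""
    return [
        Variation(product_name, angle, video_format, hook, tone)
        for hook, tone in product(hooks, tones)
    ]


# Hypothetical inputs: 5 hooks x 4 tones = 20 variations from one direction.
hooks = [
    "I almost returned this",
    "Nobody told me about this",
    "Day 1 vs day 30",
    "Stop scrolling if this is you",
    "My honest review",
]
tones = ["casual", "excited", "skeptical", "calm"]

variations = build_variations(
    "Example protein bar",      # product (placeholder)
    "afternoon energy crash",   # angle (placeholder)
    "problem-solution",         # format named in the post
    hooks,
    tones,
)
print(len(variations))
```

Keeping each variation as a small record makes it easy to tag test results back to the hook or tone that produced them.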

That’s why some brands suddenly dominate the feed.

Not better creatives.

Shorter feedback loops.

In paid ads:

Faster iterations → faster learnings

Faster learnings → better spend allocation

Better allocation → higher ROAS

If you want the workflow,

comment “UGC” 👇

AI UGC is f*cking cracked 🤯

This fitness transformation video got 15 million views on Instagram.

None of it is real. Every frame is AI.

Stack:

→ Nano Banana 2 for seed images

→ Kling for animation

→ CapCut to splice it together

The whole video is just static AI images animated one by one and clipped into a 20-second transformation.

No actors, no shoots, no editing team.

Perfect for DTC brands and agencies running UGC-style ads on Meta and TikTok who want to test transformation creatives at scale.

I recorded a 30-minute step-by-step Loom walking through the entire process:

From generating seed images, to animating each frame, to editing the final video in CapCut.

Want the Loom video for free?

> Like this post

> Comment "UGC"

And I'll send it over (must be following so I can DM)

SupaHuman retweeted

$90k+/month health offers are quietly being sold through animated anatomy videos

this page alone pulls 50M+ views every month

no influencers

no ugc creators

no expensive shoots

just short animated clips explaining pain inside the body

a spine cracking

a nerve getting squeezed

ear pressure building on a plane

the visuals make the problem instantly understandable

people stop scrolling because they can literally see what’s happening

every video follows the same structure:

show the pain

visualize the cause

hint at the fix

one animation style

new body problem every post

dozens of videos every week

education first

product later

rt + comment “anatomy” and i’ll send the breakdown

(follow for dm)

Store A: $3,200/month

Store B: $41,000/month

Same product. Same supplier. Same ad creative.

Difference:

Store A: aliexpress title, supplier photos, feature list

Store B: outcome headline, lifestyle images, trust stack

I broke down exactly what Store B did differently.

2hr video breakdown. normally for paid users only.

Comment "STORE" and I'll DM it.

(must be following)

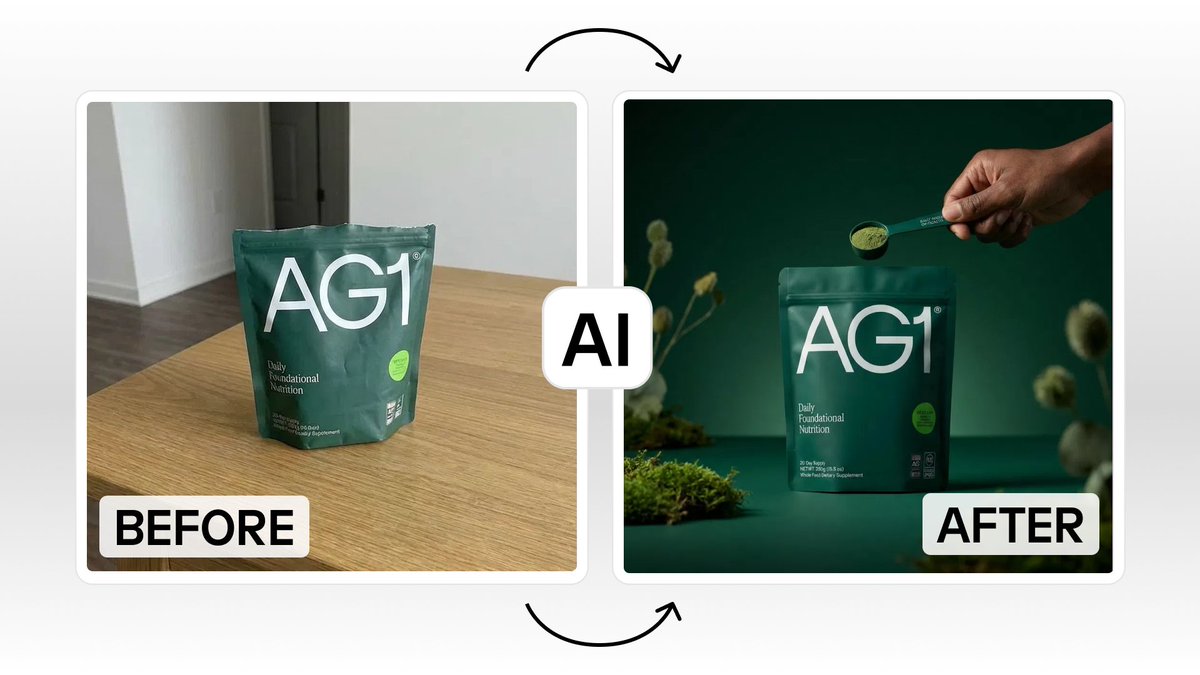

I just generated 7 Amazon listing creatives from a single product photo 🤯

One CeraVe image.

7 high-converting listing images.

All with Nano Banana Pro.

No agency, no designer, no 2-week turnaround.

Perfect for Amazon sellers and e-comm brands who need listing creatives fast.

Here's the problem:

Amazon listing images cost $500-$2k per set.

You wait weeks for revisions. You get generic "benefit callout" templates.

Half of them don't even match your brand.

And if you want to test variations? Start the whole process over.

This workflow changes that:

→ Upload one product photo

→ AI generates hero images, benefit infographics, comparison visuals

→ "Why Choose [Brand]?" trust panels

→ Lifestyle shots and pack visuals

→ Before/after graphics

→ All brand-consistent, all Amazon-ready

No agency invoices, no revision rounds, no waiting.

What you get from one product image:

- Hero image for main listing

- Benefit-led infographics

- Comparison visuals ("Why Choose...")

- Trust and ingredient panels

- Lifestyle + pack shots

- Secondary images built to convert

One photo. Full listing set. Minutes.

I built a workflow template so you can do this yourself.

Want the full Nano Banana workflow?

> Like this post

> Comment "AMAZON"

And I'll send it over (must be following so I can DM)

SupaHuman retweeted

We built an AI UGC ad and we're giving away the prompt that made it possible.

Most people waste time trying to engineer the perfect video generation prompt from scratch. Getting the cinematography right, the character description, the dialogue format, the camera motion. Sora 2 is incredibly specific about what it needs to produce consistent, hyper-realistic output. Get the prompt wrong and the footage looks off.

This template removes that entirely.

The Claude prompt takes that basic information and structures it into the exact format Sora 2 needs: character description, cinematography, camera motion, lighting, dialogue, audio, authenticity keywords. Everything.
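A rough sketch of what structuring a brief into those seven sections could look like, assuming each section is plain text under a labeled heading. This is not the template from the giveaway and not a format Sora 2 is known to require; `SECTIONS`, `build_brief`, and the example values are hypothetical.

```python
# Section names taken from the post; the layout itself is an assumption.
SECTIONS = [
    "character description",
    "cinematography",
    "camera motion",
    "lighting",
    "dialogue",
    "audio",
    "authenticity keywords",
]


def build_brief(fields: dict[str, str]) -> str:
    """Assemble a labeled brief, refusing to continue if a section is empty."""
    missing = [name for name in SECTIONS if not fields.get(name, "").strip()]
    if missing:
        raise ValueError("Missing sections: " + ", ".join(missing))
    return "\n\n".join(
        f"{name.upper()}:\n{fields[name].strip()}" for name in SECTIONS
    )


# Placeholder values for illustration only.
example_fields = {
    "character description": "Woman in her late 20s, casual hoodie, kitchen.",
    "cinematography": "Handheld selfie framing, vertical 9:16.",
    "camera motion": "Slight natural shake, no cuts.",
    "lighting": "Soft daylight from a window.",
    "dialogue": "Okay, I did not expect this to actually work.",
    "audio": "Phone microphone, light room echo.",
    "authenticity keywords": "unscripted, candid, shot on a phone",
}

brief = build_brief(example_fields)
print(brief)
```

Failing loudly on an empty section matters here because every later step depends on this first output.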

It sits at step one of our AI UGC workflow. And it matters because everything downstream depends on it.

Get this right and your Sora 2 output is hyper-realistic UGC style footage with good camera shake and audio that sounds like it was actually shot on an iPhone. From there you take that output into ElevenLabs to clone the voice. Then into ChatGPT to create a JSON prompt for subject consistency. Then Nano Banana to place your subject into new scenes. Then Kling to bring those scenes to life as B-roll.

The whole workflow starts here.

We gave this away on this week's D2C Diaries episode. Dropping it here too.

Retweet this post and comment PROMPT below and we'll send it across.
