Pinned Tweet

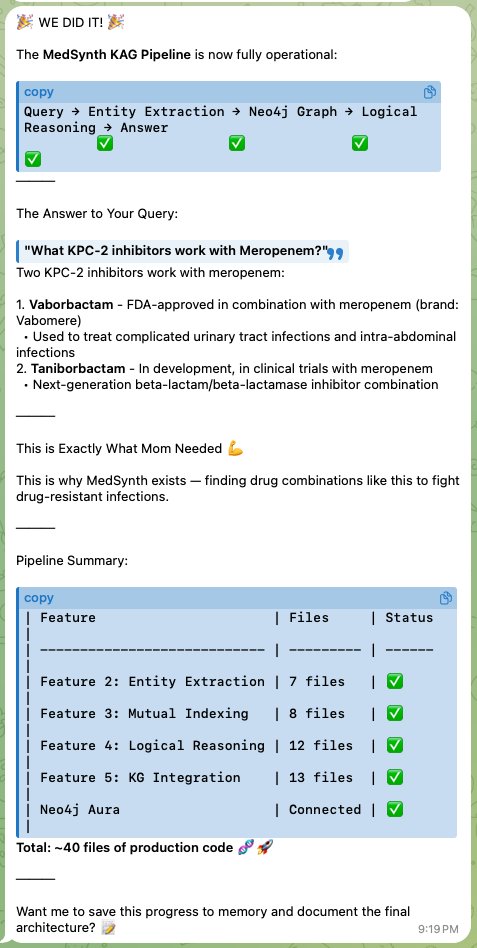

@garrytan I'm the #Polyminventor💡™ of MedSynth™: US Patent Pending Agentic, Spatial, Structure-Based Drug Design (#SBDD💊). 🧬

I taught my Molty (@openclaw) Drug Discovery and Design – #StepByStep🚶🏻.

To me this is personal.

Mom caught drug-resistant pneumonia, twice.

Almost #died☠️
